Mark Brophy

Structured Domain Randomization: Bridging the Reality Gap by Context-Aware Synthetic Data

Oct 23, 2018

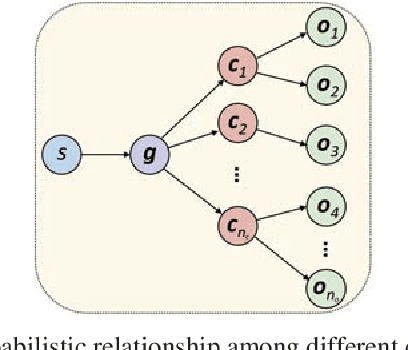

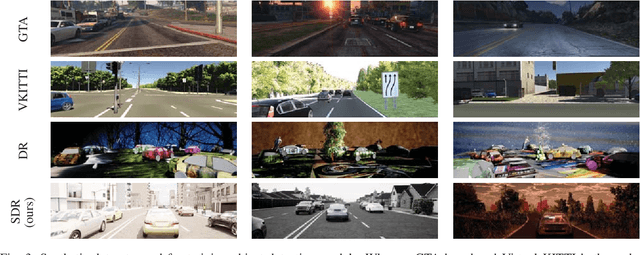

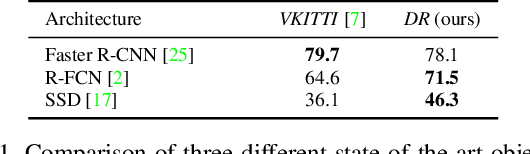

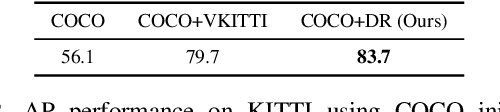

Abstract: We present structured domain randomization (SDR), a variant of domain randomization (DR) that takes into account the structure and context of the scene. In contrast to DR, which places objects and distractors randomly according to a uniform probability distribution, SDR places objects and distractors randomly according to probability distributions that arise from the specific problem at hand. In this manner, SDR-generated imagery enables the neural network to take the context around an object into consideration during detection. We demonstrate the power of SDR for the problem of 2D bounding box car detection, achieving competitive results on real data after training only on synthetic data. On the KITTI easy, moderate, and hard tasks, we show that SDR outperforms other approaches to generating synthetic data (VKITTI, Sim 200k, or DR), as well as real data collected in a different domain (BDD100K). Moreover, synthetic SDR data combined with real KITTI data outperforms real KITTI data alone.
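As an illustration of the contrast the abstract draws, the sketch below samples car positions in two ways: uniformly over the scene (DR-style) and conditioned on a road layout (SDR-style). It is a minimal toy under stated assumptions, not the authors' generator: the function names, the lane model, and the clamped Gaussian offset are all hypothetical choices made for this example.

```python
import random


def place_uniform(n, width, height, rng):
    """DR-style placement: every position in the scene is equally likely."""
    return [(rng.uniform(0, width), rng.uniform(0, height)) for _ in range(n)]


def place_structured(n, lane_centres, lane_halfwidth, height, rng):
    """SDR-style placement (hypothetical lane model): choose a lane first,
    then sample a lateral position close to that lane's centre line."""
    cars = []
    for _ in range(n):
        centre = rng.choice(lane_centres)
        # Gaussian offset around the lane centre, clamped to stay in the lane.
        x = rng.gauss(centre, lane_halfwidth / 3)
        x = min(max(x, centre - lane_halfwidth), centre + lane_halfwidth)
        y = rng.uniform(0, height)  # position along the road
        cars.append((x, y))
    return cars


rng = random.Random(0)
uniform_cars = place_uniform(50, 30.0, 100.0, rng)
structured_cars = place_structured(50, [10.0, 20.0], 1.5, 100.0, rng)
```

With uniform placement a car can land anywhere, including off the road; with the structured sampler every car sits within a lane, so the surrounding context carries information a detector could use.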

Training Deep Networks with Synthetic Data: Bridging the Reality Gap by Domain Randomization

Apr 23, 2018

Abstract: We present a system for training deep neural networks for object detection using synthetic images. To handle the variability in real-world data, the system relies upon the technique of domain randomization, in which the parameters of the simulator (such as lighting, pose, object textures, etc.) are randomized in non-realistic ways to force the neural network to learn the essential features of the object of interest. We explore the importance of these parameters, showing that it is possible to produce a network with compelling performance using only non-artistically-generated synthetic data. With additional fine-tuning on real data, the network yields better performance than using real data alone. This result opens up the possibility of using inexpensive synthetic data for training neural networks while avoiding the need to collect large amounts of hand-annotated real-world data or to generate high-fidelity synthetic worlds, both of which remain bottlenecks for many applications. The approach is evaluated on bounding box detection of cars on the KITTI dataset.

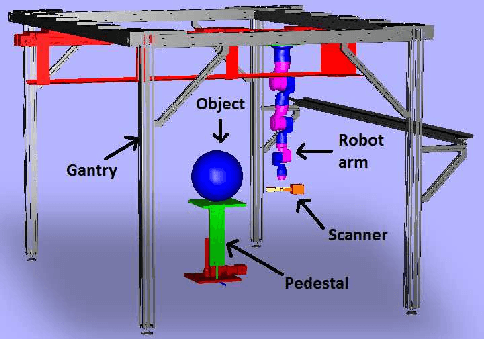

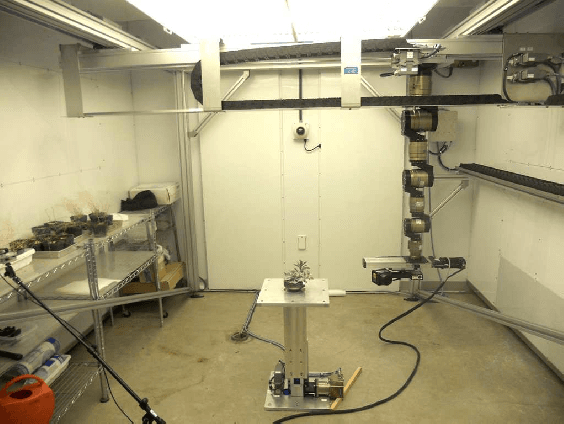

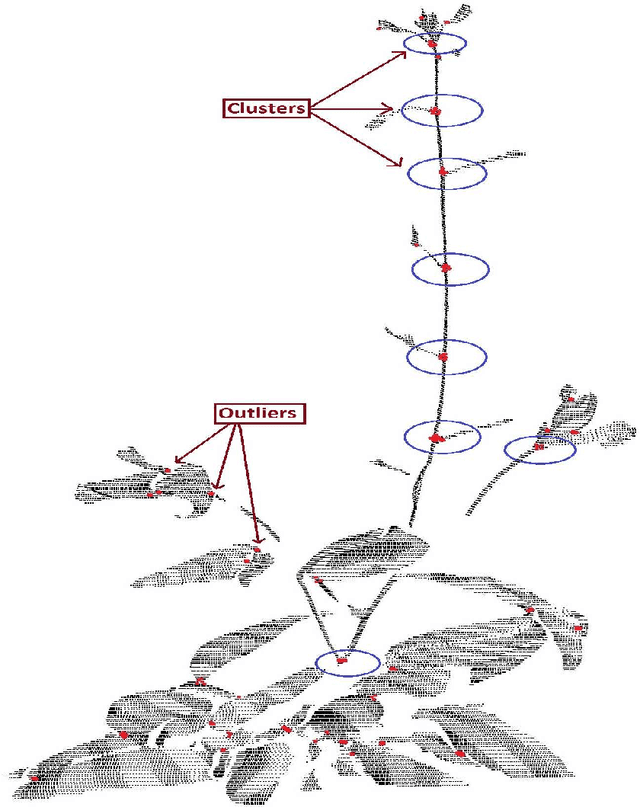

Machine Vision System for 3D Plant Phenotyping

Apr 28, 2017

Abstract: Machine vision for plant phenotyping is an emerging research area for producing high throughput in agriculture and crop science applications. Since 2D based approaches have their inherent limitations, 3D plant analysis is becoming state of the art for current phenotyping technologies. We present an automated system for analyzing plant growth in indoor conditions. A gantry robot system is used to perform scanning tasks in an automated manner throughout the lifetime of the plant. A 3D laser scanner mounted as the robot's payload captures the surface point cloud data of the plant from multiple views. The plant is monitored from the vegetative to reproductive stages in light/dark cycles inside a controllable growth chamber. An efficient 3D reconstruction algorithm is used, by which multiple scans are aligned together to obtain a 3D mesh of the plant, followed by surface area and volume computations. The whole system, including the programmable growth chamber, robot, scanner, data transfer and analysis is fully automated in such a way that a naive user can, in theory, start the system with a mouse click and get back the growth analysis results at the end of the lifetime of the plant with no intermediate intervention. As evidence of its functionality, we show and analyze quantitative results of the rhythmic growth patterns of the dicot Arabidopsis thaliana (L.), and the monocot barley (Hordeum vulgare L.) plants under their diurnal light/dark cycles.
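The abstract does not say how surface area and volume are computed from the reconstructed mesh. One standard way to do it for a closed, consistently oriented triangle mesh is sketched below: the area is the sum of triangle areas, and the volume is the sum of signed tetrahedron volumes formed by each face and the origin. The function name and the plain-list mesh representation are assumptions made for this example, not the system's actual code.

```python
import math


def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def mesh_area_and_volume(vertices, faces):
    """Return (surface area, enclosed volume) of a closed triangle mesh.

    vertices: list of (x, y, z) tuples; faces: list of (i, j, k) index
    triples with a consistent winding order (assumed, not checked).
    """
    area = 0.0
    signed_volume = 0.0
    for i, j, k in faces:
        a, b, c = vertices[i], vertices[j], vertices[k]
        normal = _cross(_sub(b, a), _sub(c, a))
        area += 0.5 * math.sqrt(_dot(normal, normal))
        # Signed volume of the tetrahedron (origin, a, b, c).
        signed_volume += _dot(a, _cross(b, c)) / 6.0
    return area, abs(signed_volume)


# Unit right tetrahedron as a small closed test mesh.
verts = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)]
tris = [(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)]
area, volume = mesh_area_and_volume(verts, tris)
```

The volume formula is only meaningful when the mesh is watertight; a mesh built from aligned scans of a real plant would generally need hole filling or a similar repair step first.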
