Maria Ivanova

PRS: Sharp Feature Priors for Resolution-Free Surface Remeshing

Nov 30, 2023Abstract:Surface reconstruction with preservation of geometric features is a challenging computer vision task. Despite significant progress in implicit shape reconstruction, state-of-the-art mesh extraction methods often produce aliased, perceptually distorted surfaces and lack scalability to high-resolution 3D shapes. We present a data-driven approach for automatic feature detection and remeshing that requires only a coarse, aliased mesh as input and scales to arbitrary resolution reconstructions. We define and learn a collection of surface-based fields to (1) capture sharp geometric features in the shape with an implicit vertexwise model and (2) approximate improvements in normals alignment obtained by applying edge-flips with an edgewise model. To support scaling to arbitrary complexity shapes, we learn our fields using local triangulated patches, fusing estimates on complete surface meshes. Our feature remeshing algorithm integrates the learned fields as sharp feature priors and optimizes vertex placement and mesh connectivity for maximum expected surface improvement. On a challenging collection of high-resolution shape reconstructions in the ABC dataset, our algorithm improves over state-of-the-art by 26% normals F-score and 42% perceptual $\text{RMSE}_{\text{v}}$.

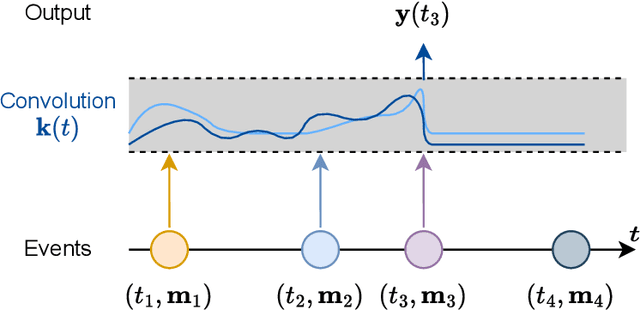

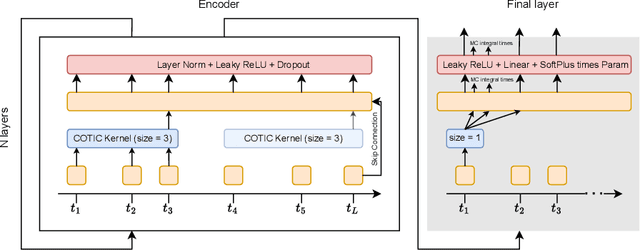

Continuous-time convolutions model of event sequences

Feb 13, 2023

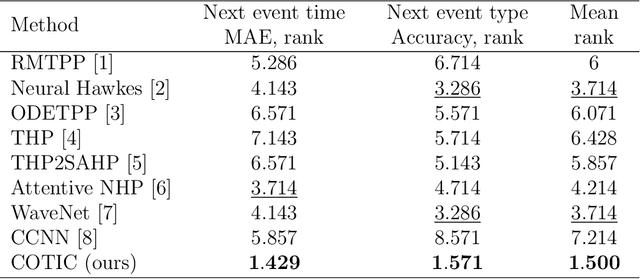

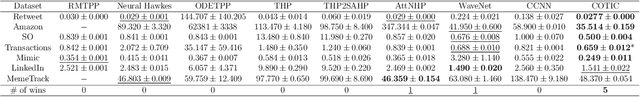

Abstract:Massive samples of event sequences data occur in various domains, including e-commerce, healthcare, and finance. There are two main challenges regarding inference of such data: computational and methodological. The amount of available data and the length of event sequences per client are typically large, thus it requires long-term modelling. Moreover, this data is often sparse and non-uniform, making classic approaches for time series processing inapplicable. Existing solutions include recurrent and transformer architectures in such cases. To allow continuous time, the authors introduce specific parametric intensity functions defined at each moment on top of existing models. Due to the parametric nature, these intensities represent only a limited class of event sequences. We propose the COTIC method based on a continuous convolution neural network suitable for non-uniform occurrence of events in time. In COTIC, dilations and multi-layer architecture efficiently handle dependencies between events. Furthermore, the model provides general intensity dynamics in continuous time - including self-excitement encountered in practice. The COTIC model outperforms existing approaches on majority of the considered datasets, producing embeddings for an event sequence that can be used to solve downstream tasks - e.g. predicting next event type and return time. The code of the proposed method can be found in the GitHub repository (https://github.com/VladislavZh/COTIC).

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge