Manu Kapur

MathTutorBench: A Benchmark for Measuring Open-ended Pedagogical Capabilities of LLM Tutors

Feb 26, 2025Abstract:Evaluating the pedagogical capabilities of AI-based tutoring models is critical for making guided progress in the field. Yet, we lack a reliable, easy-to-use, and simple-to-run evaluation that reflects the pedagogical abilities of models. To fill this gap, we present MathTutorBench, an open-source benchmark for holistic tutoring model evaluation. MathTutorBench contains a collection of datasets and metrics that broadly cover tutor abilities as defined by learning sciences research in dialog-based teaching. To score the pedagogical quality of open-ended teacher responses, we train a reward model and show it can discriminate expert from novice teacher responses with high accuracy. We evaluate a wide set of closed- and open-weight models on MathTutorBench and find that subject expertise, indicated by solving ability, does not immediately translate to good teaching. Rather, pedagogy and subject expertise appear to form a trade-off that is navigated by the degree of tutoring specialization of the model. Furthermore, tutoring appears to become more challenging in longer dialogs, where simpler questioning strategies begin to fail. We release the benchmark, code, and leaderboard openly to enable rapid benchmarking of future models.

Towards the Pedagogical Steering of Large Language Models for Tutoring: A Case Study with Modeling Productive Failure

Oct 03, 2024

Abstract:One-to-one tutoring is one of the most efficient methods of teaching. Following the rise in popularity of Large Language Models (LLMs), there have been efforts to use them to create conversational tutoring systems, which can make the benefits of one-to-one tutoring accessible to everyone. However, current LLMs are primarily trained to be helpful assistants and thus lack crucial pedagogical skills. For example, they often quickly reveal the solution to the student and fail to plan for a richer multi-turn pedagogical interaction. To use LLMs in pedagogical scenarios, they need to be steered towards using effective teaching strategies: a problem we introduce as Pedagogical Steering and believe to be crucial for the efficient use of LLMs as tutors. We address this problem by formalizing a concept of tutoring strategy, and introducing StratL, an algorithm to model a strategy and use prompting to steer the LLM to follow this strategy. As a case study, we create a prototype tutor for high school math following Productive Failure (PF), an advanced and effective learning design. To validate our approach in a real-world setting, we run a field study with 17 high school students in Singapore. We quantitatively show that StratL succeeds in steering the LLM to follow a Productive Failure tutoring strategy. We also thoroughly investigate the existence of spillover effects on desirable properties of the LLM, like its ability to generate human-like answers. Based on these results, we highlight the challenges in Pedagogical Steering and suggest opportunities for further improvements. We further encourage follow-up research by releasing a dataset of Productive Failure problems and the code of our prototype and algorithm.

Stepwise Verification and Remediation of Student Reasoning Errors with Large Language Model Tutors

Jul 12, 2024

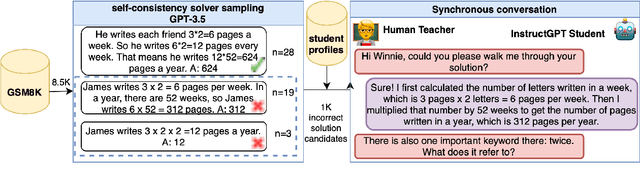

Abstract:Large language models (LLMs) present an opportunity to scale high-quality personalized education to all. A promising approach towards this means is to build dialog tutoring models that scaffold students' problem-solving. However, even though existing LLMs perform well in solving reasoning questions, they struggle to precisely detect student's errors and tailor their feedback to these errors. Inspired by real-world teaching practice where teachers identify student errors and customize their response based on them, we focus on verifying student solutions and show how grounding to such verification improves the overall quality of tutor response generation. We collect a dataset of 1K stepwise math reasoning chains with the first error step annotated by teachers. We show empirically that finding the mistake in a student solution is challenging for current models. We propose and evaluate several verifiers for detecting these errors. Using both automatic and human evaluation we show that the student solution verifiers steer the generation model towards highly targeted responses to student errors which are more often correct with less hallucinations compared to existing baselines.

MathDial: A Dialogue Tutoring Dataset with Rich Pedagogical Properties Grounded in Math Reasoning Problems

May 23, 2023

Abstract:Although automatic dialogue tutors hold great potential in making education personalized and more accessible, research on such systems has been hampered by a lack of sufficiently large and high-quality datasets. However, collecting such datasets remains challenging, as recording tutoring sessions raises privacy concerns and crowdsourcing leads to insufficient data quality. To address this problem, we propose a framework to semi-synthetically generate such dialogues by pairing real teachers with a large language model (LLM) scaffolded to represent common student errors. In this paper, we describe our ongoing efforts to use this framework to collect MathDial, a dataset of currently ca. 1.5k tutoring dialogues grounded in multi-step math word problems. We show that our dataset exhibits rich pedagogical properties, focusing on guiding students using sense-making questions to let them explore problems. Moreover, we outline that MathDial and its grounding annotations can be used to finetune language models to be more effective tutors (and not just solvers) and highlight remaining challenges that need to be addressed by the research community. We will release our dataset publicly to foster research in this socially important area of NLP.

Opportunities and Challenges in Neural Dialog Tutoring

Jan 24, 2023

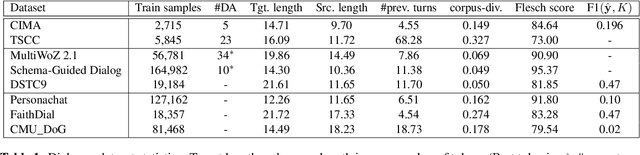

Abstract:Designing dialog tutors has been challenging as it involves modeling the diverse and complex pedagogical strategies employed by human tutors. Although there have been significant recent advances in neural conversational systems using large language models and growth in available dialog corpora, dialog tutoring has largely remained unaffected by these advances. In this paper, we rigorously analyze various generative language models on two dialog tutoring datasets for language learning using automatic and human evaluations to understand the new opportunities brought by these advances as well as the challenges we must overcome to build models that would be usable in real educational settings. We find that although current approaches can model tutoring in constrained learning scenarios when the number of concepts to be taught and possible teacher strategies are small, they perform poorly in less constrained scenarios. Our human quality evaluation shows that both models and ground-truth annotations exhibit low performance in terms of equitable tutoring, which measures learning opportunities for students and how engaging the dialog is. To understand the behavior of our models in a real tutoring setting, we conduct a user study using expert annotators and find a significantly large number of model reasoning errors in 45% of conversations. Finally, we connect our findings to outline future work.

Nonlinear and Machine Learning Analyses on High-Density EEG data of Math Experts and Novices

Dec 01, 2022Abstract:Current trend in neurosciences is to use naturalistic stimuli, such as cinema, class-room biology or video gaming, aiming to understand the brain functions during ecologically valid conditions. Naturalistic stimuli recruit complex and overlapping cognitive, emotional and sensory brain processes. Brain oscillations form underlying mechanisms for such processes, and further, these processes can be modified by expertise. Human cortical oscillations are often analyzed with linear methods despite brain as a biological system is highly nonlinear. This study applies a relatively robust nonlinear method, Higuchi fractal dimension (HFD), to classify cortical oscillations of math experts and novices when they solve long and complex math demonstrations in an EEG laboratory. Brain imaging data, which is collected over a long time span during naturalistic stimuli, enables the application of data-driven analyses. Therefore, we also explore the neural signature of math expertise with machine learning algorithms. There is a need for novel methodologies in analyzing naturalistic data because formulation of theories of the brain functions in the real world based on reductionist and simplified study designs is both challenging and questionable. Data-driven intelligent approaches may be helpful in developing and testing new theories on complex brain functions. Our results clarify the different neural signature, analyzed by HFD, of math experts and novices during complex math and suggest machine learning as a promising data-driven approach to understand the brain processes in expertise and mathematical cognition.

Automatic Generation of Socratic Subquestions for Teaching Math Word Problems

Nov 23, 2022

Abstract:Socratic questioning is an educational method that allows students to discover answers to complex problems by asking them a series of thoughtful questions. Generation of didactically sound questions is challenging, requiring understanding of the reasoning process involved in the problem. We hypothesize that such questioning strategy can not only enhance the human performance, but also assist the math word problem (MWP) solvers. In this work, we explore the ability of large language models (LMs) in generating sequential questions for guiding math word problem-solving. We propose various guided question generation schemes based on input conditioning and reinforcement learning. On both automatic and human quality evaluations, we find that LMs constrained with desirable question properties generate superior questions and improve the overall performance of a math word problem solver. We conduct a preliminary user study to examine the potential value of such question generation models in the education domain. Results suggest that the difficulty level of problems plays an important role in determining whether questioning improves or hinders human performance. We discuss the future of using such questioning strategies in education.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge