Lingjia Liu

Louis

When Learning Hurts: Fixed-Pole RNN for Real-Time Online Training

Feb 25, 2026

Abstract:Recurrent neural networks (RNNs) can be interpreted as discrete-time state-space models, where the state evolution corresponds to an infinite-impulse-response (IIR) filtering operation governed by both feedforward weights and recurrent poles. While, in principle, all parameters including pole locations can be optimized via backpropagation through time (BPTT), such joint learning incurs substantial computational overhead and is often impractical for applications with limited training data. Echo state networks (ESNs) mitigate this limitation by fixing the recurrent dynamics and training only a linear readout, enabling efficient and stable online adaptation. In this work, we analytically and empirically examine why learning recurrent poles does not provide tangible benefits in data-constrained, real-time learning scenarios. Our analysis shows that pole learning renders the weight optimization problem highly non-convex, requiring significantly more training samples and iterations for gradient-based methods to converge to meaningful solutions. Empirically, we observe that for complex-valued data, gradient descent frequently exhibits prolonged plateaus, and advanced optimizers offer limited improvement. In contrast, fixed-pole architectures induce stable and well-conditioned state representations even with limited training data. Numerical results demonstrate that fixed-pole networks achieve superior performance with lower training complexity, making them more suitable for online real-time tasks.
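To make the fixed-pole idea concrete, the following is a minimal sketch rather than the paper's implementation. It assumes a bank of first-order IIR filters with hand-picked poles inside the unit circle and a ridge-regularized least-squares readout; the pole values, the signal length, and the names `fixed_pole_states` and `train_readout` are all hypothetical. With the poles held fixed, the readout weights enter linearly, so training reduces to a convex least-squares problem.

```python
import numpy as np

def fixed_pole_states(x, poles):
    """Run one first-order IIR filter per fixed pole: s[n] = p * s[n-1] + x[n]."""
    states = np.zeros((len(x), len(poles)), dtype=complex)
    s = np.zeros(len(poles), dtype=complex)
    for n, sample in enumerate(x):
        s = poles * s + sample
        states[n] = s
    return states

def train_readout(states, target, ridge=1e-8):
    """Closed-form ridge-regularized least squares for the linear readout."""
    gram = states.conj().T @ states + ridge * np.eye(states.shape[1])
    return np.linalg.solve(gram, states.conj().T @ target)

rng = np.random.default_rng(0)

# Hypothetical fixed poles, all strictly inside the unit circle (stable filters).
poles = np.array([0.9, 0.5, -0.3, 0.2 + 0.6j])

# Complex-valued input and a target generated by a known readout (assumed for the demo).
x = rng.standard_normal(200) + 1j * rng.standard_normal(200)
true_w = np.array([1.0, -0.5j, 0.25, 0.0])

states = fixed_pole_states(x, poles)
target = states @ true_w

# Only the readout is trained; the poles are never updated.
w = train_readout(states, target)
fit_error = np.linalg.norm(states @ w - target) / np.linalg.norm(target)
print("relative fit error:", fit_error)
```

Because the readout is solved in closed form, no iterative optimizer is involved in this sketch; learning the poles as well would make the states depend nonlinearly on the trainable parameters.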

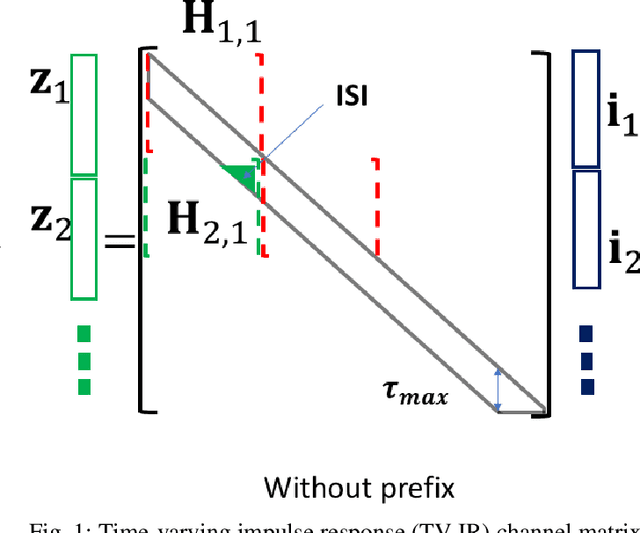

A Universal Neural Receiver that Learns at the Speed of Wireless

Feb 17, 2026

Abstract:Today we design wireless networks using mathematical models that govern communication in different propagation environments. We rely on measurement campaigns to deliver parametrized propagation models, and on the 3GPP standards process to optimize model-based performance, but as wireless networks become more complex this model-based approach is losing ground. Mobile Network Operators (MNOs) are counting on Artificial Intelligence (AI) to transform wireless by increasing spectral efficiency, reducing signaling overhead, and enabling continuous network innovation through software upgrades. They may also be interested in new use cases like integrated sensing and communications (ISAC). All we need is an AI-native physical layer, so why not simply tailor the offline AI algorithms that have revolutionized image and natural language processing to the wireless domain? We argue that these algorithms rely on off-line training that is precluded by the sub-millisecond speeds at which the wireless interference environment changes. We present an alternative architecture, a universal neural receiver based on convolution, which governs transmit and receive signal processing of any signal in any part of the wireless spectrum. Our neural receiver is designed to invert convolution, and we separate the question of which convolution to invert from the actual deconvolution. The neural network that performs deconvolution is very simple, and we configure this network by setting weights based on domain knowledge. By telling our neural network what we know, we avoid extensive offline training. By developing a universal receiver, we hope to simplify discussions about the proper choice of waveform for different use cases in the international standards. Since the receiver architecture is largely independent of technologies introduced at the base station, we hope to increase the rate of innovation in wireless.

LiQSS: Post-Transformer Linear Quantum-Inspired State-Space Tensor Networks for Real-Time 6G

Jan 18, 2026

Abstract:Proactive and agentic control in Sixth-Generation (6G) Open Radio Access Networks (O-RAN) requires control-grade prediction under stringent Near-Real-Time (Near-RT) latency and computational constraints. While Transformer-based models are effective for sequence modeling, their quadratic complexity limits scalability in Near-RT RAN Intelligent Controller (RIC) analytics. This paper investigates a post-Transformer design paradigm for efficient radio telemetry forecasting. We propose a quantum-inspired many-body state-space tensor network that replaces self-attention with stable structured state-space dynamics kernels, enabling linear-time sequence modeling. Tensor-network factorizations in the form of Tensor Train (TT) / Matrix Product State (MPS) representations are employed to reduce parameterization and data movement in both input projections and prediction heads, while lightweight channel gating and mixing layers capture non-stationary cross-Key Performance Indicator (KPI) dependencies. The proposed model is instantiated as an agentic perceive-predict xApp and evaluated on a bespoke O-RAN KPI time-series dataset comprising 59,441 sliding windows across 13 KPIs, using Reference Signal Received Power (RSRP) forecasting as a representative use case. Our proposed Linear Quantum-Inspired State-Space (LiQSS) model is 10.8x-15.8x smaller and approximately 1.4x faster than prior structured state-space baselines. Relative to Transformer-based models, LiQSS achieves up to a 155x reduction in parameter count and up to 2.74x faster inference, without sacrificing forecasting accuracy.

Spreading over OFDM for Integrated Sensing and Communications (ISAC) Ranging: Multi-user Interference Mitigation

May 04, 2025

Abstract:In the context of communication-centric integrated sensing and communication (ISAC), the orthogonal frequency division multiplexing (OFDM) waveform was proven to be optimal in minimizing ranging sidelobes when random signaling is used. A typical assumption in OFDM-based ranging is that the maximum target delay is less than the cyclic prefix (CP) length, which is equivalent to performing a periodic correlation between the signal reflected from the target and the transmitted signal. In the multi-user case, such as in Orthogonal Frequency Division Multiple Access (OFDMA), users are assigned disjoint subsets of subcarriers, which eliminates mutual interference between the communication channels of the different users. However, ranging involves an aperiodic correlation operation for target ranges with delays greater than the CP length. Aperiodic correlation between signals from disjoint frequency bands will not be zero, resulting in mutual interference between different user bands. We refer to this as inter-band (IB) cross-correlation interference. In this work, we analytically characterize IB interference and quantify its impact on the integrated sidelobe levels (ISL). We introduce an orthogonal spreading layer on top of OFDM that can reduce IB interference, resulting in ISL significantly lower than for OFDM without spreading in the multi-user setup. We validate our claims through simulations and through an upper bound on IB energy, which we show can be minimized using our proposed spreading. However, for orthogonal spreading to be effective, a price must be paid in terms of spectral utilization, which is yet another manifestation of the trade-off between sensing accuracy and data communication capacity.
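The contrast between periodic and aperiodic correlation described above can be checked numerically. The sketch below is an illustration under assumed parameters, not the paper's simulation setup: it uses a hypothetical 16-subcarrier symbol, two users occupying disjoint halves of the band, and random unit-modulus symbols. The periodic cross-correlation vanishes because the two spectra do not overlap, while the aperiodic cross-correlation retains energy.

```python
import numpy as np

N = 16                               # assumed number of subcarriers
rng = np.random.default_rng(1)

def ofdm_symbol(subcarriers):
    """Time-domain OFDM symbol with random unit-modulus data on the given subcarriers."""
    spectrum = np.zeros(N, dtype=complex)
    spectrum[subcarriers] = np.exp(2j * np.pi * rng.random(len(subcarriers)))
    return np.fft.ifft(spectrum)

# Two users on disjoint subcarrier sets (OFDMA-style allocation).
user_a = ofdm_symbol(np.arange(0, 8))
user_b = ofdm_symbol(np.arange(8, 16))

# Periodic (circular) cross-correlation, computed in the frequency domain.
periodic = np.fft.ifft(np.fft.fft(user_a) * np.conj(np.fft.fft(user_b)))

# Aperiodic (linear) cross-correlation over all 2N-1 lags.
# np.correlate conjugates its second argument for complex inputs.
aperiodic = np.correlate(user_a, user_b, mode="full")

periodic_energy = np.sum(np.abs(periodic) ** 2)
aperiodic_energy = np.sum(np.abs(aperiodic) ** 2)
print("periodic cross-correlation energy: ", periodic_energy)
print("aperiodic cross-correlation energy:", aperiodic_energy)
```

The nonzero aperiodic energy in this toy setup corresponds to what the abstract calls inter-band cross-correlation interference; the spreading layer proposed in the paper is not modeled here.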

Mobile Distributed MIMO (MD-MIMO) for NextG: Mobility Meets Cooperation in Distributed Arrays

Apr 16, 2025

Abstract:Distributed multiple-input multiple-output (D-MIMO) is a promising technology to realize the promise of massive MIMO gains by fiber-connecting the distributed antenna arrays, thereby overcoming the form factor limitations of co-located MIMO. In this paper, we introduce the concept of mobile D-MIMO (MD-MIMO) network, a further extension of the D-MIMO technology where distributed antenna arrays are connected to the base station with a wireless link allowing all radio network nodes to be mobile. This approach significantly improves deployment flexibility and reduces operating costs, enabling the network to adapt to the highly dynamic nature of next-generation (NextG) networks. We discuss use cases, system design, network architecture, and the key enabling technologies for MD-MIMO. Furthermore, we investigate a case study of MD-MIMO for vehicular networks, presenting detailed performance evaluations for both downlink and uplink. The results show that an MD-MIMO network can provide substantial improvements in network throughput and reliability.

Distributed Uplink Joint Transmission for 6G Communication

Apr 11, 2025

Abstract:This paper investigates the spectral efficiency achieved through uplink joint transmission, where a serving user and the network users (UEs) collaborate by jointly transmitting to the base station (BS). The analysis incorporates the resource requirements for information sharing among UEs as a critical factor in the capacity evaluation. Furthermore, coherent and non-coherent joint transmission schemes are compared under various transmission power scenarios, providing insights into spectral and energy efficiency. A selection algorithm identifying the optimal UEs for joint transmission, achieving maximum capacity, is discussed. The results indicate that uplink joint transmission is one of the promising techniques for enabling 6G, achieving greater spectral efficiency even when accounting for the resource requirements for information sharing.

Digital Twin Enabled Site Specific Channel Precoding: Over the Air CIR Inference

Jan 27, 2025

Abstract:This paper investigates the significance of designing a reliable, intelligent, and true physical environment-aware precoding scheme by leveraging an accurately designed channel twin model to obtain realistic channel state information (CSI) for cellular communication systems. Specifically, we propose a fine-tuned multi-step channel twin design process that can render CSI very close to the CSI of the actual environment. After generating precise CSI, we execute precoding using the obtained CSI at the transmitter end. We demonstrate that a two-step parameter tuning approach to designing the channel twin by ray tracing (RT) emulation, followed by further fine-tuning of the CSI with an artificial intelligence (AI) based algorithm, can significantly reduce the gap between the actual CSI and the fine-tuned digital twin (DT) rendered CSI. The simulation results show the effectiveness of the proposed novel approach in designing a true physical environment-aware channel twin model.

Reducing Inter-user Interference: Precoding over OFDM for Enhanced MTC

Jan 09, 2025

Abstract:In the physical layer (PHY) of modern cellular systems, information is transmitted as a sequence of resource blocks (RBs) across various domains with each resource block limited to a certain time and frequency duration. In the PHY of 4G/5G systems, data is transmitted in the unit of transport block (TB) across a fixed number of physical RBs based on resource allocation decisions. Using sharp band-limiting in the frequency domain can provide good separation between different resource allocations without wasting resources in guard bands. However, using sharp filters comes at the cost of elongating the overall system impulse response, which can accentuate inter-symbol interference (ISI). In a multi-user setup, such as in Machine Type Communication (MTC), different users are allocated resources across time and frequency, and operate at different power levels. If strict band-limiting separation is used, high power user signals can leak in time into low power user allocations. The ISI extent, i.e., the number of neighboring symbols that contribute to the interference, depends both on the channel delay spread and the spectral concentration properties of the signaling waveforms. We hypothesize that using a precoder that effectively transforms an OFDM waveform basis into a basis composed of discrete prolate spheroidal sequences (DPSS) can minimize the ISI extent when strictly confined frequency allocations are used. Analytical expressions for upper bounds on ISI are derived. In addition, simulation results support our hypothesis.

Towards xAI: Configuring RNN Weights using Domain Knowledge for MIMO Receive Processing

Oct 09, 2024

Abstract:Deep learning is making a profound impact in the physical layer of wireless communications. Despite exhibiting outstanding empirical performance in tasks such as MIMO receive processing, the reasons behind the demonstrated superior performance improvement remain largely unclear. In this work, we advance the field of Explainable AI (xAI) in the physical layer of wireless communications utilizing signal processing principles. Specifically, we focus on the task of MIMO-OFDM receive processing (e.g., symbol detection) using reservoir computing (RC), a framework within recurrent neural networks (RNNs), which outperforms both conventional and other learning-based MIMO detectors. Our analysis provides a signal processing-based, first-principles understanding of the corresponding operation of the RC. Building on this fundamental understanding, we are able to systematically incorporate the domain knowledge of wireless systems (e.g., channel statistics) into the design of the underlying RNN by directly configuring the untrained RNN weights for MIMO-OFDM symbol detection. The introduced RNN weight configuration has been validated through extensive simulations demonstrating significant performance improvements. This establishes a foundation for explainable RC-based architectures in MIMO-OFDM receive processing and provides a roadmap for incorporating domain knowledge into the design of neural networks for NextG systems.

A Novel Interference Minimizing Waveform for Wireless Channels with Fractional Delay: Inter-block Interference Analysis

Sep 04, 2024

Abstract:In the physical layer (PHY) of modern cellular systems, information is transmitted as a sequence of resource blocks (RBs) across various domains with each resource block limited to a certain time and frequency duration. In the PHY of 4G/5G systems, data is transmitted in the unit of transport block (TB) across a fixed number of physical RBs based on resource allocation decisions. This simultaneous time and frequency localized structure of resource allocation is at odds with the perennial time-frequency compactness limits. Specifically, the band-limiting operation will disrupt the time localization and lead to inter-block interference (IBI). The IBI extent, i.e., the number of neighboring blocks that contribute to the interference, depends mainly on the spectral concentration properties of the signaling waveforms. Deviating from the standard Gabor-frame based multi-carrier approaches, which use time-frequency shifted versions of a single prototype pulse, the use of a set of multiple mutually orthogonal pulse shapes that are not related by a time-frequency shift relationship is proposed. We hypothesize that using discrete prolate spheroidal sequences (DPSS) as the set of waveform pulse shapes reduces IBI. Analytical expressions for upper bounds on IBI are derived, and simulation results are provided that support our hypothesis.
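As a rough illustration of the spectral concentration property that motivates DPSS, the sketch below computes the sequences as eigenvectors of the band-limiting (sinc) kernel and compares the in-band energy fraction of the leading sequence with that of a rectangular pulse. The block length `N = 64`, the time-bandwidth product `NW = 4`, and the helper names are assumptions chosen for the demo; the paper's waveform design and interference bounds are not reproduced here.

```python
import numpy as np

def sinc_kernel(n_samples, half_bandwidth):
    """Kernel K[m, n] = sin(2*pi*W*(m-n)) / (pi*(m-n)), with 2W on the diagonal."""
    lag = np.arange(n_samples)[:, None] - np.arange(n_samples)[None, :]
    return 2 * half_bandwidth * np.sinc(2 * half_bandwidth * lag)

def dpss_basis(n_samples, half_bandwidth):
    """Return (concentrations, sequences), sorted from most to least concentrated."""
    eigvals, eigvecs = np.linalg.eigh(sinc_kernel(n_samples, half_bandwidth))
    order = np.argsort(eigvals)[::-1]
    return eigvals[order], eigvecs[:, order]

N = 64            # assumed block length in samples
W = 4 / N         # assumed normalized half-bandwidth, so N*W = 4

concentrations, sequences = dpss_basis(N, W)

# In-band energy fraction of a unit-energy rectangular pulse, for comparison.
rect = np.ones(N) / np.sqrt(N)
rect_concentration = rect @ sinc_kernel(N, W) @ rect

print("leading DPSS concentration:", concentrations[0])
print("rectangular pulse:         ", rect_concentration)
print("sequences with concentration > 0.9:", int(np.sum(concentrations > 0.9)))
```

Each eigenvalue is the fraction of that sequence's energy lying in the band [-W, W], so the columns of `sequences` form a set of mutually orthogonal pulse shapes ordered by spectral concentration. Roughly 2NW of them are well concentrated, which limits how many such pulses fit in one block.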
