Leonhard Donle

Incorporating Anatomical Awareness for Enhanced Generalizability and Progression Prediction in Deep Learning-Based Radiographic Sacroiliitis Detection

May 12, 2024

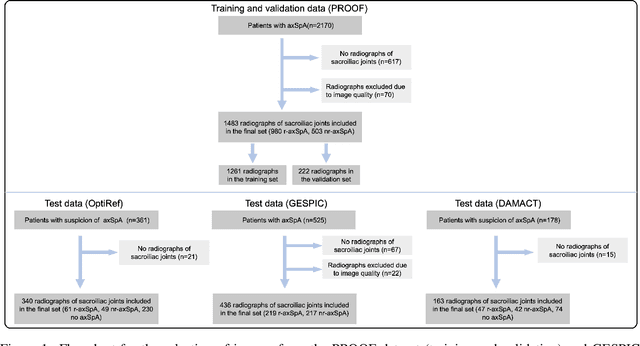

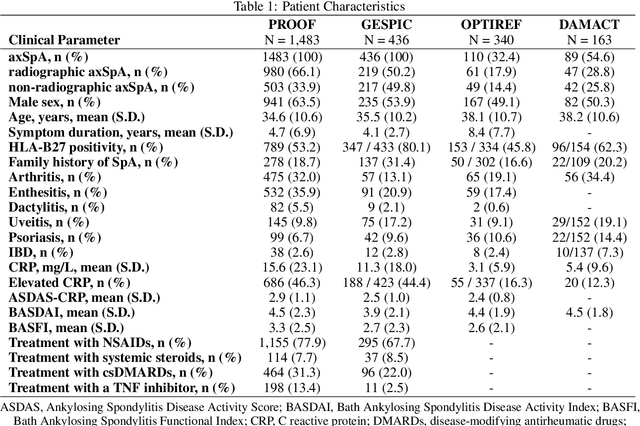

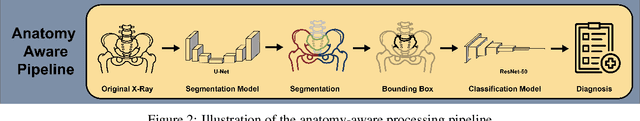

Abstract:Purpose: To examine whether incorporating anatomical awareness into a deep learning model can improve generalizability and enable prediction of disease progression. Methods: This retrospective multicenter study included conventional pelvic radiographs of 4 different patient cohorts focusing on axial spondyloarthritis (axSpA) collected at university and community hospitals. The first cohort, which consisted of 1483 radiographs, was split into training (n=1261) and validation (n=222) sets. The other cohorts comprising 436, 340, and 163 patients, respectively, were used as independent test datasets. For the second cohort, follow-up data of 311 patients was used to examine progression prediction capabilities. Two neural networks were trained, one on images cropped to the bounding box of the sacroiliac joints (anatomy-aware) and the other one on full radiographs. The performance of the models was compared using the area under the receiver operating characteristic curve (AUC), accuracy, sensitivity, and specificity. Results: On the three test datasets, the standard model achieved AUC scores of 0.853, 0.817, 0.947, with an accuracy of 0.770, 0.724, 0.850. Whereas the anatomy-aware model achieved AUC scores of 0.899, 0.846, 0.957, with an accuracy of 0.821, 0.744, 0.906, respectively. The patients who were identified as high risk by the anatomy aware model had an odds ratio of 2.16 (95% CI: 1.19, 3.86) for having progression of radiographic sacroiliitis within 2 years. Conclusion: Anatomical awareness can improve the generalizability of a deep learning model in detecting radiographic sacroiliitis. The model is published as fully open source alongside this study.

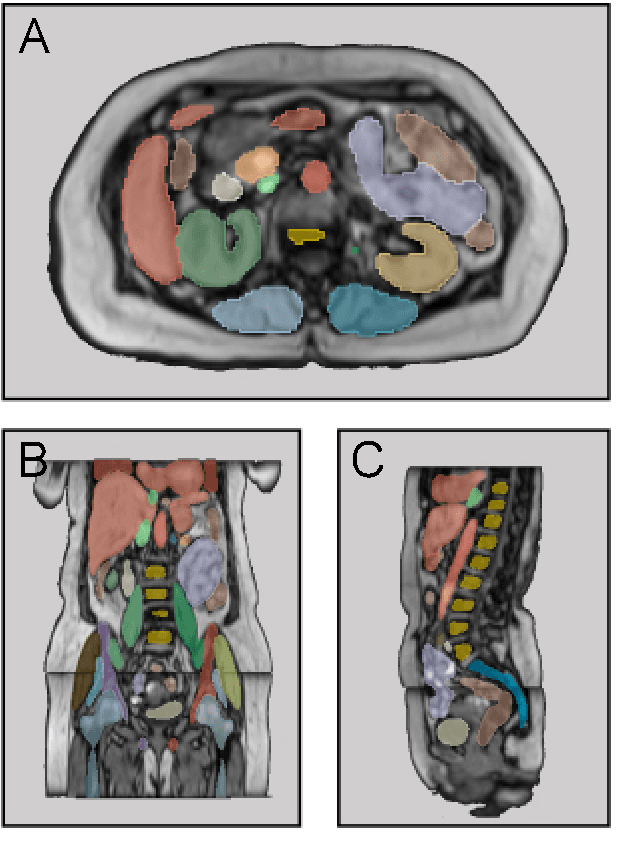

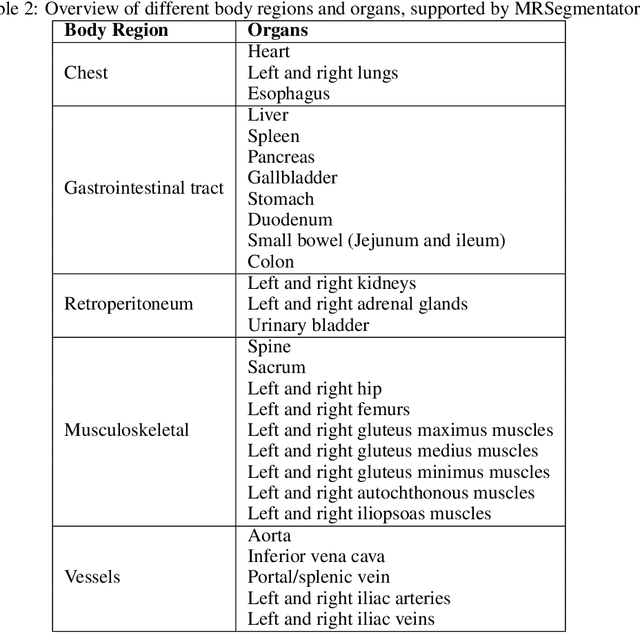

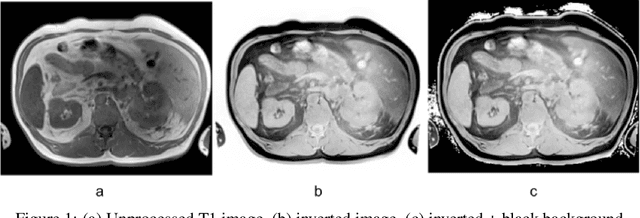

MRSegmentator: Robust Multi-Modality Segmentation of 40 Classes in MRI and CT Sequences

May 10, 2024

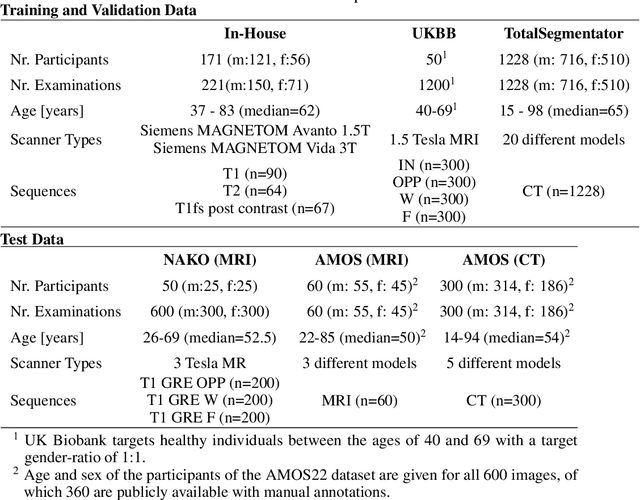

Abstract:Purpose: To introduce a deep learning model capable of multi-organ segmentation in MRI scans, offering a solution to the current limitations in MRI analysis due to challenges in resolution, standardized intensity values, and variability in sequences. Materials and Methods: he model was trained on 1,200 manually annotated MRI scans from the UK Biobank, 221 in-house MRI scans and 1228 CT scans, leveraging cross-modality transfer learning from CT segmentation models. A human-in-the-loop annotation workflow was employed to efficiently create high-quality segmentations. The model's performance was evaluated on NAKO and the AMOS22 dataset containing 600 and 60 MRI examinations. Dice Similarity Coefficient (DSC) and Hausdorff Distance (HD) was used to assess segmentation accuracy. The model will be open sourced. Results: The model showcased high accuracy in segmenting well-defined organs, achieving Dice Similarity Coefficient (DSC) scores of 0.97 for the right and left lungs, and 0.95 for the heart. It also demonstrated robustness in organs like the liver (DSC: 0.96) and kidneys (DSC: 0.95 left, 0.95 right), which present more variability. However, segmentation of smaller and complex structures such as the portal and splenic veins (DSC: 0.54) and adrenal glands (DSC: 0.65 left, 0.61 right) revealed the need for further model optimization. Conclusion: The proposed model is a robust, tool for accurate segmentation of 40 anatomical structures in MRI and CT images. By leveraging cross-modality learning and interactive annotation, the model achieves strong performance and generalizability across diverse datasets, making it a valuable resource for researchers and clinicians. It is open source and can be downloaded from https://github.com/hhaentze/MRSegmentator.

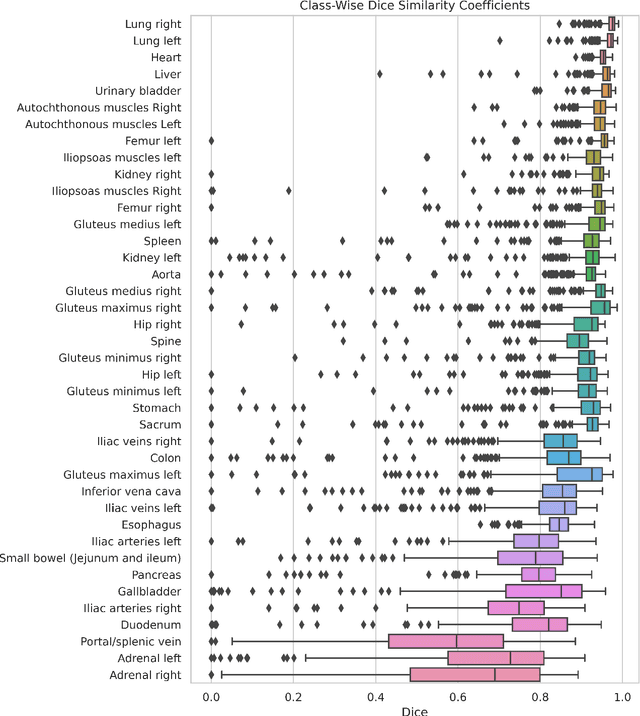

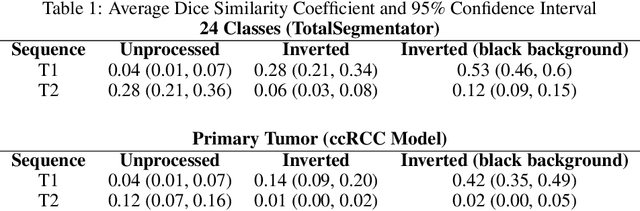

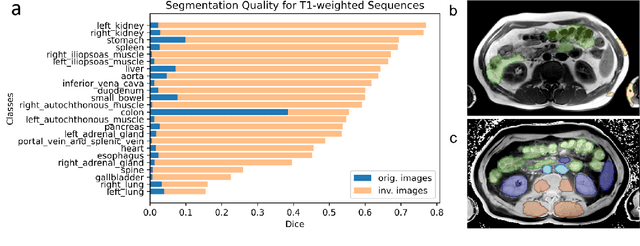

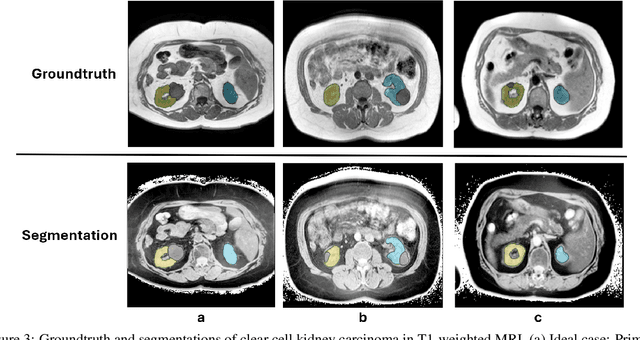

Improve Cross-Modality Segmentation by Treating MRI Images as Inverted CT Scans

May 04, 2024

Abstract:Computed tomography (CT) segmentation models frequently include classes that are not currently supported by magnetic resonance imaging (MRI) segmentation models. In this study, we show that a simple image inversion technique can significantly improve the segmentation quality of CT segmentation models on MRI data, by using the TotalSegmentator model, applied to T1-weighted MRI images, as example. Image inversion is straightforward to implement and does not require dedicated graphics processing units (GPUs), thus providing a quick alternative to complex deep modality-transfer models for generating segmentation masks for MRI data.

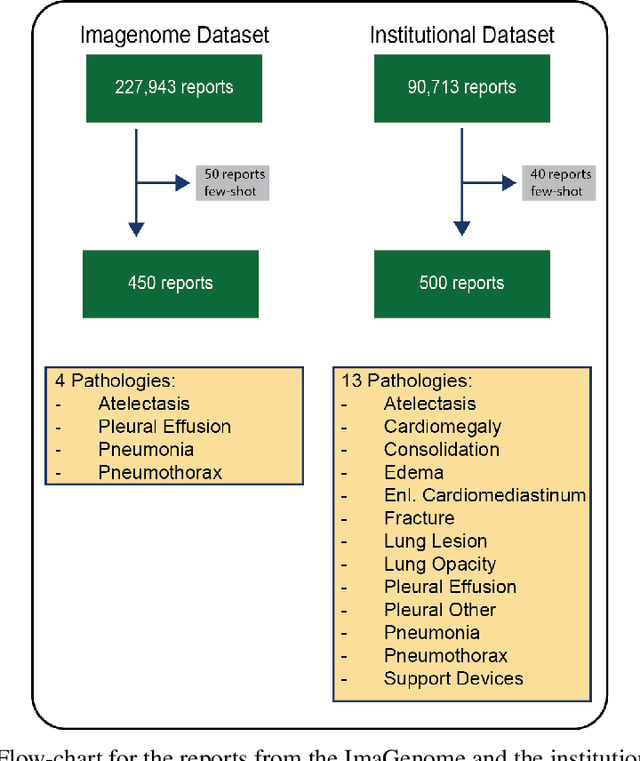

Is Open-Source There Yet? A Comparative Study on Commercial and Open-Source LLMs in Their Ability to Label Chest X-Ray Reports

Feb 19, 2024

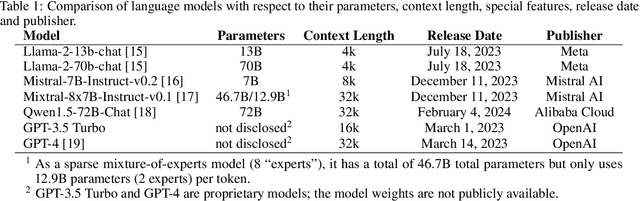

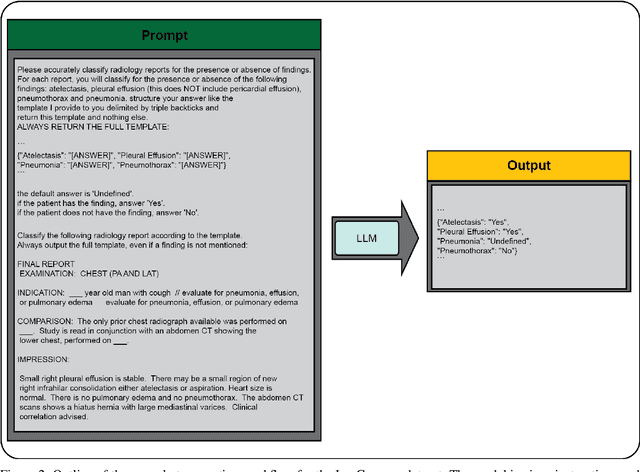

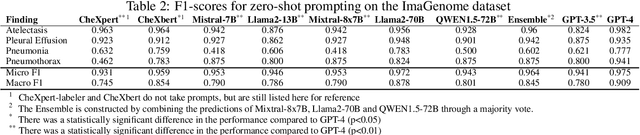

Abstract:Introduction: With the rapid advances in large language models (LLMs), there have been numerous new open source as well as commercial models. While recent publications have explored GPT-4 in its application to extracting information of interest from radiology reports, there has not been a real-world comparison of GPT-4 to different leading open-source models. Materials and Methods: Two different and independent datasets were used. The first dataset consists of 540 chest x-ray reports that were created at the Massachusetts General Hospital between July 2019 and July 2021. The second dataset consists of 500 chest x-ray reports from the ImaGenome dataset. We then compared the commercial models GPT-3.5 Turbo and GPT-4 from OpenAI to the open-source models Mistral-7B, Mixtral-8x7B, Llama2-13B, Llama2-70B, QWEN1.5-72B and CheXbert and CheXpert-labeler in their ability to accurately label the presence of multiple findings in x-ray text reports using different prompting techniques. Results: On the ImaGenome dataset, the best performing open-source model was Llama2-70B with micro F1-scores of 0.972 and 0.970 for zero- and few-shot prompts, respectively. GPT-4 achieved micro F1-scores of 0.975 and 0.984, respectively. On the institutional dataset, the best performing open-source model was QWEN1.5-72B with micro F1-scores of 0.952 and 0.965 for zero- and few-shot prompting, respectively. GPT-4 achieved micro F1-scores of 0.975 and 0.973, respectively. Conclusion: In this paper, we show that while GPT-4 is superior to open-source models in zero-shot report labeling, the implementation of few-shot prompting can bring open-source models on par with GPT-4. This shows that open-source models could be a performant and privacy preserving alternative to GPT-4 for the task of radiology report classification.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge