Lena Eichermüller

SVT-AV1 Encoding Bitrate Estimation Using Motion Search Information

Jul 08, 2024

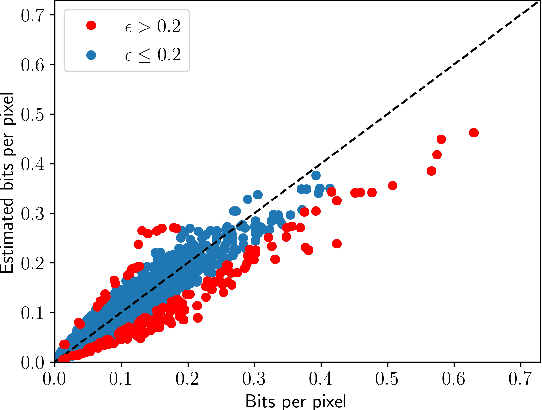

Abstract: Enabling high compression efficiency while keeping encoding energy consumption low requires prioritizing which videos need more sophisticated encoding techniques. However, the effects vary strongly with the content, so information on how well a video can be compressed is required. This can be measured by estimating the encoded bitstream size prior to encoding. We found that the errors between estimated motion vectors from Motion Search, an algorithm that predicts temporal changes in videos, correlate well with the encoded bitstream size. Combining Motion Search with Random Forests, the encoding bitrate can be estimated with a Pearson correlation above 0.96.
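As an illustration of the evaluation metric named in the abstract, the following minimal sketch computes the Pearson correlation between estimated and actual bitrates. It covers only the metric, not the Motion Search features or the Random Forest model; the function name `pearson` and the sample values are assumptions made up for this example, not data from the paper.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance and variances (unnormalized; the 1/n factors cancel out).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical bitrates in Mbit/s, invented purely for illustration.
estimated = [1.2, 2.9, 4.1, 8.0]
actual = [1.0, 3.0, 4.5, 7.6]
r = pearson(estimated, actual)
print(f"Pearson correlation: {r:.3f}")
```

A value close to 1 means the estimates track the actual bitrates almost linearly, which is the sense in which the abstract reports a correlation above 0.96.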

Encoding Time and Energy Model for SVT-AV1 based on Video Complexity

Jan 30, 2024

Abstract: The share of online video traffic in global carbon dioxide emissions is growing steadily. To comply with the demand for video media, dedicated compression techniques are continuously optimized, but at the expense of increasingly higher computational demands and thus rising energy consumption at the video encoder side. In order to find the best trade-off between compression and energy consumption, modeling encoding energy for a wide range of encoding parameters is crucial. We propose an encoding time and energy model for SVT-AV1 based on empirical relations between the encoding time and video parameters as well as encoder configurations. Furthermore, we model the influence of video content by established content descriptors such as spatial and temporal information. We then use the predicted encoding time to estimate the required energy demand and achieve a prediction error of 19.6 % for encoding time and 20.9 % for encoding energy.
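The abstract does not state how the prediction error is defined or how energy is derived from time. The sketch below therefore rests on two loudly labeled assumptions: the error is taken as a mean absolute relative error in percent, and energy is taken as predicted time multiplied by a constant mean power. Both helper names and all numbers are hypothetical.

```python
def mean_relative_error(predicted, measured):
    """Mean absolute relative error in percent (assumed error definition)."""
    if len(predicted) != len(measured) or not measured:
        raise ValueError("need two non-empty sequences of equal length")
    total = sum(abs(p - m) / m for p, m in zip(predicted, measured))
    return 100.0 * total / len(measured)


def energy_from_time(time_s, mean_power_w):
    """Energy in joules, assuming a constant mean power draw (an assumption)."""
    return time_s * mean_power_w


# Hypothetical encoding times in seconds, invented for illustration.
predicted_times = [110.0, 90.0]
measured_times = [100.0, 100.0]
print(f"time error: {mean_relative_error(predicted_times, measured_times):.1f} %")
print(f"energy: {energy_from_time(110.0, 50.0):.0f} J")
```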

Encoder Complexity Control in SVT-AV1 by Speed-Adaptive Preset Switching

Jul 11, 2023

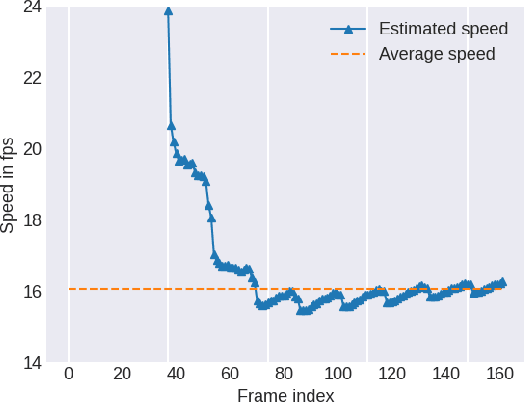

Abstract: Current developments in video encoding technology lead to continuously improving compression performance, but at the expense of increasingly higher computational demands. Given the increase in online video traffic in recent years and the concomitant need for video encoding, encoder complexity control mechanisms are required to restrict the processing time and find a reasonable trade-off between performance and complexity. We present a complexity control mechanism in SVT-AV1 that uses speed-adaptive preset switching to comply with the remaining time budget. This method enables encoding with a user-defined time constraint within the complete preset range with an average precision of 8.9 % without introducing any additional latency.
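The idea of switching presets against a remaining time budget can be sketched as follows. This is not the authors' mechanism, only a simplified illustration: it assumes a table of estimated per-frame encoding times for each preset (all values hypothetical) and picks the slowest preset that still fits the per-frame budget. It relies on the SVT-AV1 convention that higher preset numbers are faster.

```python
def choose_preset(remaining_time_s, remaining_frames, est_time_per_frame):
    """Pick the slowest preset whose estimated per-frame time fits the budget.

    est_time_per_frame maps preset number -> estimated seconds per frame.
    The table is a hypothetical input, not something SVT-AV1 provides.
    """
    if remaining_frames <= 0:
        raise ValueError("remaining_frames must be positive")
    budget_per_frame = remaining_time_s / remaining_frames
    fitting = [p for p, t in est_time_per_frame.items() if t <= budget_per_frame]
    if fitting:
        # Lower preset number = slower, higher compression efficiency.
        return min(fitting)
    # Nothing fits: fall back to the fastest preset available.
    return max(est_time_per_frame)


# Hypothetical per-frame timings in seconds, invented for illustration.
timings = {4: 0.8, 6: 0.4, 8: 0.2, 10: 0.1}
print(choose_preset(50.0, 100, timings))  # per-frame budget of 0.5 s
```

Re-evaluating the choice as frames complete lets the encoder speed up when it falls behind and slow down when it is ahead of the budget.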

The Bjøntegaard Bible -- Why your Way of Comparing Video Codecs May Be Wrong

Apr 25, 2023

Abstract: In this paper, we provide an in-depth assessment of the Bjøntegaard Delta. We construct a large data set of video compression performance comparisons using a diverse set of metrics including PSNR, VMAF, bitrate, and processing energies. These metrics are evaluated for visual data types such as classic perspective video, 360° video, point clouds, and screen content. As compression technology, we consider multiple hybrid video codecs as well as state-of-the-art neural network based compression methods. Using additional performance points in between standard points defined by parameters such as the quantization parameter, we assess the interpolation error of the Bjøntegaard-Delta (BD) calculus and its impact on the final BD value. Performing an in-depth analysis, we find that the BD calculus is most accurate in the standard application of rate-distortion comparisons with mean errors below 0.5 percentage points. For other applications, the errors are higher (up to 10 percentage points), but can be reduced by a higher number of performance points. We finally come up with recommendations on how to use the BD calculus such that the validity of the resulting BD-values is maximized. Main recommendations include the use of Akima interpolation, the interpretation of relative difference curves, and the use of the logarithmic domain for saturating metrics such as SSIM and VMAF.
