Laurent Vermue

PyKEEN 1.0: A Python Library for Training and Evaluating Knowledge Graph Embeddings

Jul 30, 2020

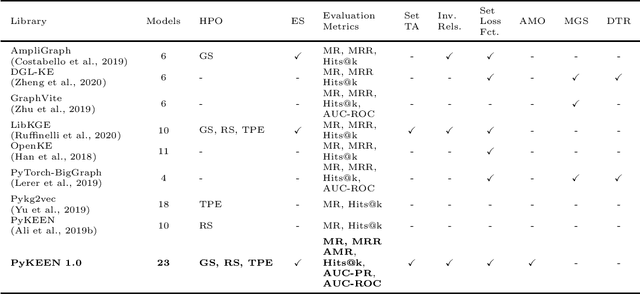

Abstract:Recently, knowledge graph embeddings (KGEs) received significant attention, and several software libraries have been developed for training and evaluating KGEs. While each of them addresses specific needs, we re-designed and re-implemented PyKEEN, one of the first KGE libraries, in a community effort. PyKEEN 1.0 enables users to compose knowledge graph embedding models (KGEMs) based on a wide range of interaction models, training approaches, loss functions, and permits the explicit modeling of inverse relations. Besides, an automatic memory optimization has been realized in order to exploit the provided hardware optimally, and through the integration of Optuna extensive hyper-parameter optimization (HPO) functionalities are provided.

Bringing Light Into the Dark: A Large-scale Evaluation of Knowledge Graph Embedding Models Under a Unified Framework

Jun 23, 2020

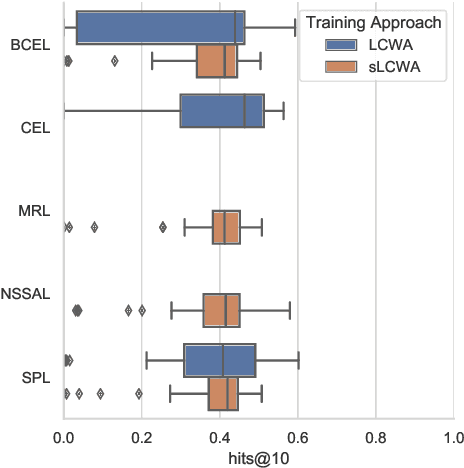

Abstract:The heterogeneity in recently published knowledge graph embedding models' implementations, training, and evaluation has made fair and thorough comparisons difficult. In order to assess the reproducibility of previously published results, we re-implemented and evaluated 19 interaction models in the PyKEEN software package. Here, we outline which results could be reproduced with their reported hyper-parameters, which could only be reproduced with alternate hyper-parameters, and which could not be reproduced at all as well as provide insight as to why this might be the case. We then performed a large-scale benchmarking on four datasets with several thousands of experiments and 21,246 GPU hours of computation time. We present insights gained as to best practices, best configurations for each model, and where improvements could be made over previously published best configurations. Our results highlight that the combination of model architecture, training approach, loss function, and the explicit modeling of inverse relations is crucial for a model's performances, and not only determined by the model architecture. We provide evidence that several architectures can obtain results competitive to the state-of-the-art when configured carefully. We have made all code, experimental configurations, results, and analyses that lead to our interpretations available at https://github.com/pykeen/pykeen and https://github.com/pykeen/benchmarking

Interpretable and Fair Comparison of Link Prediction or Entity Alignment Methods with Adjusted Mean Rank

Feb 17, 2020

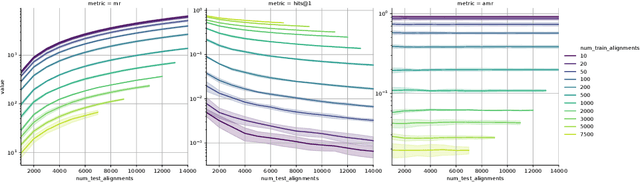

Abstract:In this work, we take a closer look at the evaluation of two families of methods for enriching information from knowledge graphs: Link Prediction and Entity Alignment. In the current experimental setting, multiple different scores are employed to assess different aspects of model performance. We analyze the informative value of these evaluation measures and identify several shortcomings. In particular, we demonstrate that all existing scores can hardly be used to compare results across different datasets. Moreover, this problem may also arise when comparing different train/test splits for the same dataset. We show that this leads to various problems in the interpretation of results, which may support misleading conclusions. Therefore, we propose a different evaluation and demonstrate empirically how this helps for fair, comparable and interpretable assessment of model performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge