Kristijonas Čyras

Argumentation and Machine Learning

Oct 31, 2024

Abstract: This chapter provides an overview of research works that present approaches with some degree of cross-fertilisation between Computational Argumentation and Machine Learning. Our review of the literature identified two broad themes representing the purpose of the interaction between these two areas: argumentation for machine learning and machine learning for argumentation. Across these two themes, we systematically evaluate the spectrum of works across various dimensions, including the type of learning and the form of argumentation framework used. Further, we identify three types of interaction between these two areas: synergistic approaches, where the Argumentation and Machine Learning components are tightly integrated; segmented approaches, where the two are interleaved such that the outputs of one are the inputs of the other; and approximated approaches, where one component shadows the other at a chosen level of detail. We draw conclusions about the suitability of certain forms of Argumentation for supporting certain types of Machine Learning, and vice versa, with clear patterns emerging from the review. Whilst the reviewed works provide inspiration for successfully combining the two fields of research, we also identify and discuss limitations and challenges that ought to be addressed in order to ensure that they remain a fruitful pairing as AI advances.

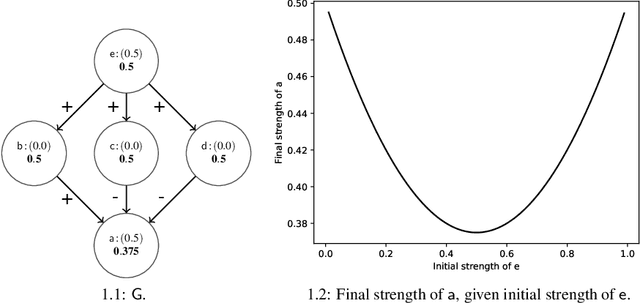

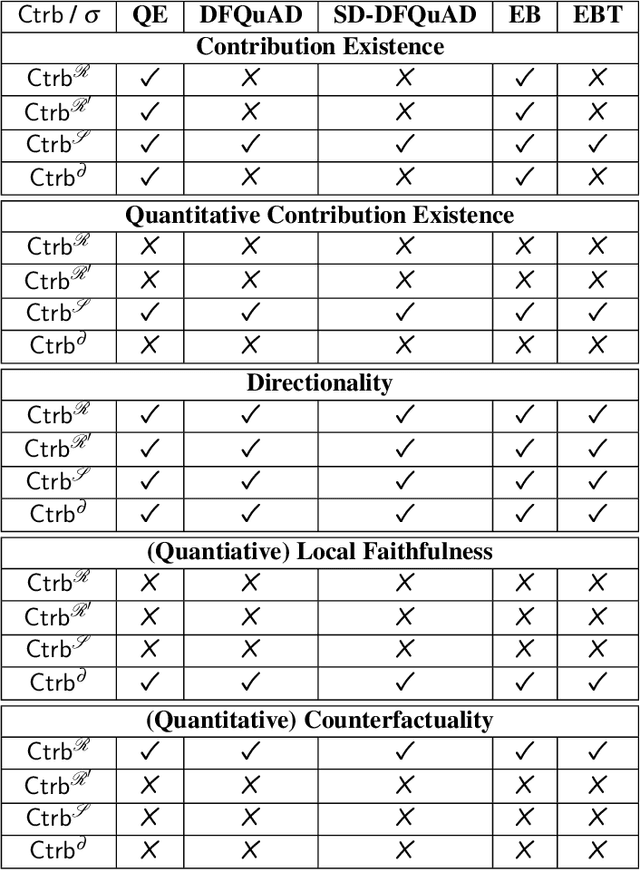

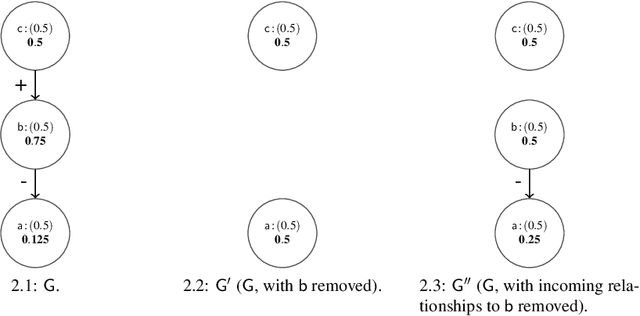

Contribution Functions for Quantitative Bipolar Argumentation Graphs: A Principle-based Analysis

Jan 16, 2024

Abstract: We present a principle-based analysis of contribution functions for quantitative bipolar argumentation graphs that quantify the contribution of one argument to another. The introduced principles formalise the intuitions underlying different contribution functions as well as expectations one would have regarding the behaviour of contribution functions in general. As none of the covered contribution functions satisfies all principles, our analysis can serve as a tool that enables the selection of the most suitable function based on the requirements of a given use case.

Argumentative XAI: A Survey

May 24, 2021

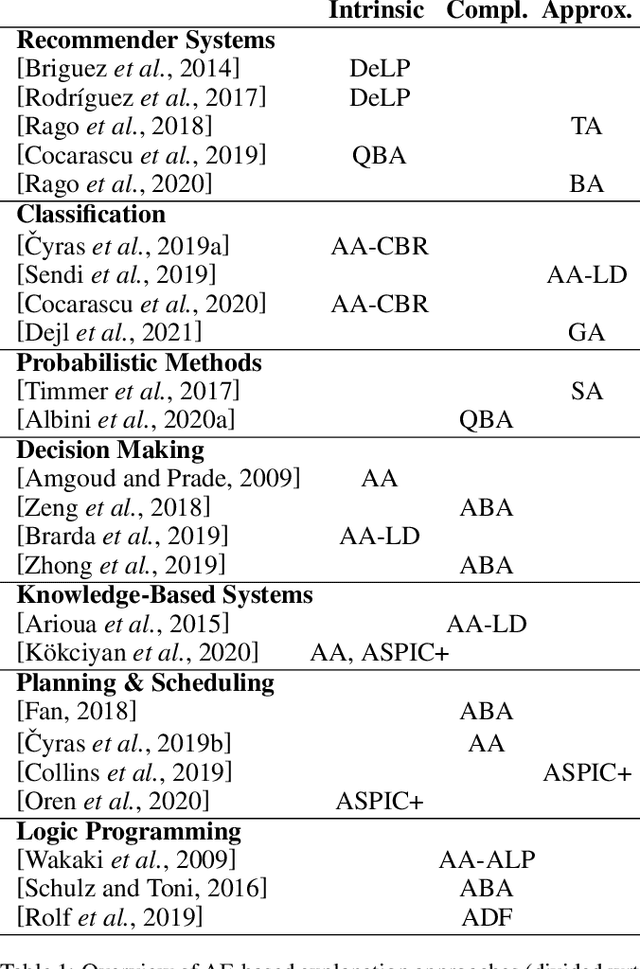

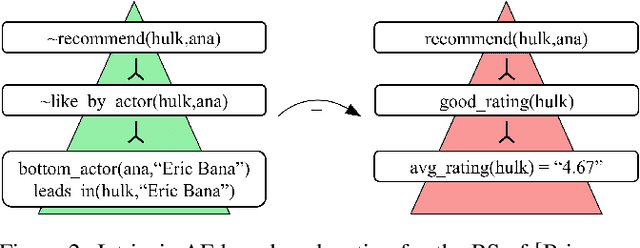

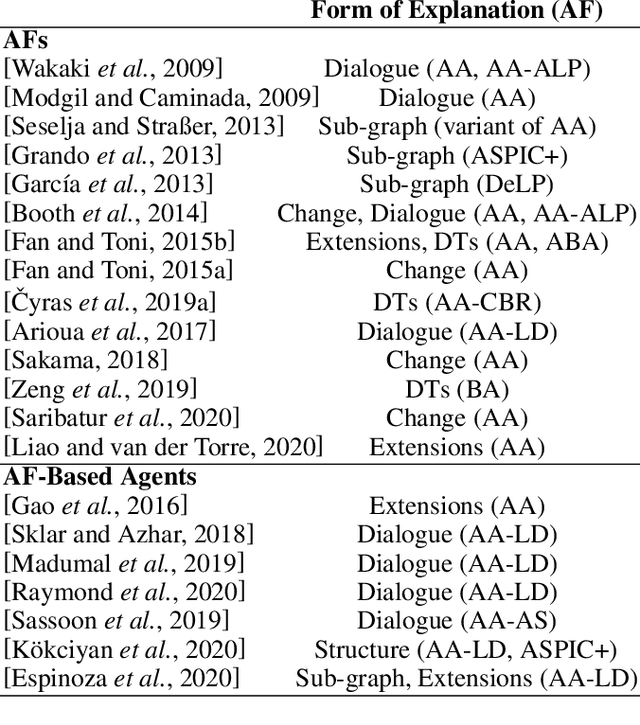

Abstract: Explainable AI (XAI) has been investigated for decades and, together with AI itself, has witnessed unprecedented growth in recent years. Among various approaches to XAI, argumentative models have been advocated in both the AI and social science literature, as their dialectical nature appears to match some basic desirable features of the explanation activity. In this survey we overview XAI approaches built using methods from the field of computational argumentation, leveraging its wide array of reasoning abstractions and explanation delivery methods. We overview the literature focusing on different types of explanation (intrinsic and post-hoc), different models with which argumentation-based explanations are deployed, different forms of delivery, and different argumentation frameworks they use. We also lay out a roadmap for future work.

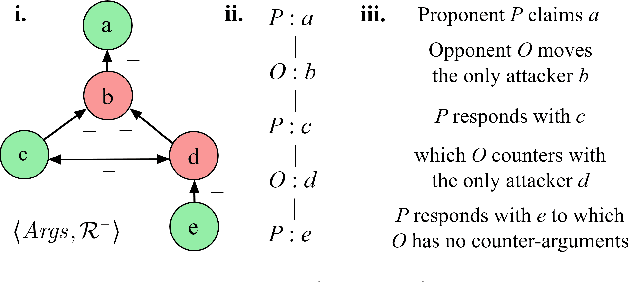

Complexity Results and Algorithms for Bipolar Argumentation

Mar 05, 2019

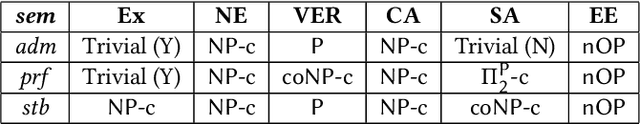

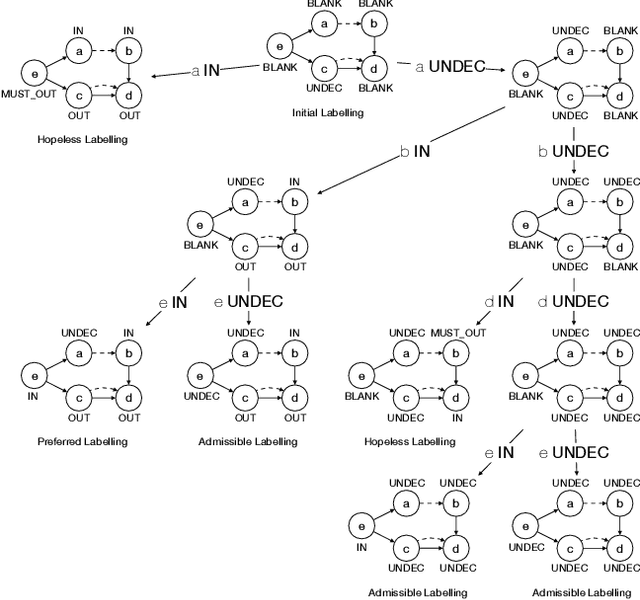

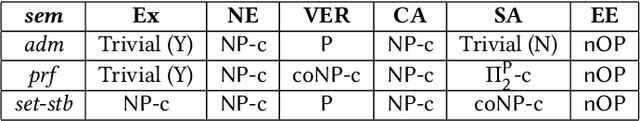

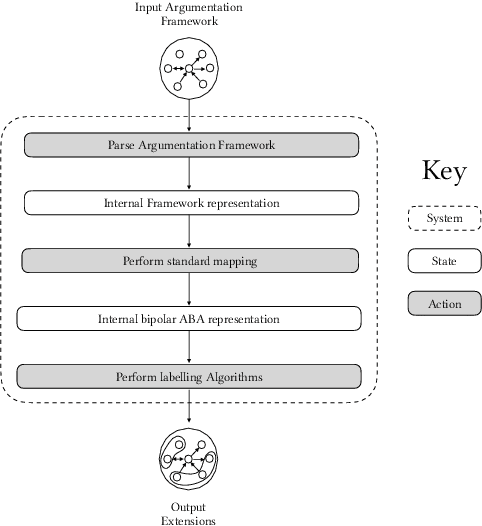

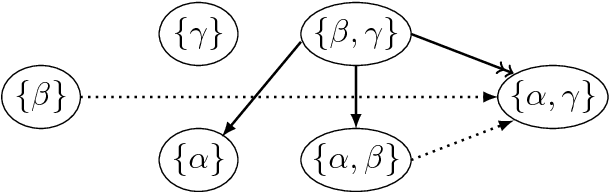

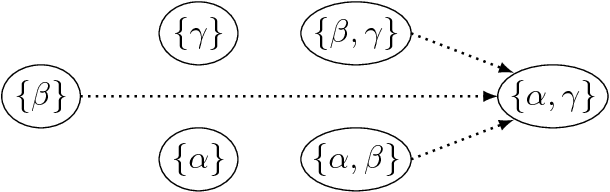

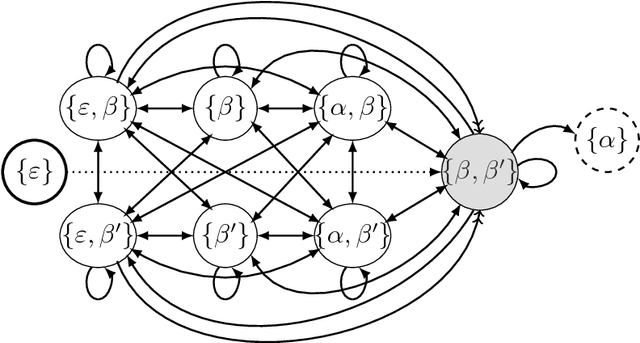

Abstract: Bipolar Argumentation Frameworks (BAFs) admit several interpretations of the support relation and diverging definitions of semantics. Recently, several classes of BAFs have been captured as instances of bipolar Assumption-Based Argumentation, a class of Assumption-Based Argumentation (ABA). In this paper, we establish the complexity of bipolar ABA, and consequently of several classes of BAFs. In addition to the standard five complexity problems, we also analyse the rarely addressed extension enumeration problem. We also advance backtracking-driven algorithms for enumerating extensions of bipolar ABA frameworks, and consequently of BAFs under several interpretations. We prove soundness and completeness of our algorithms, describe their implementation and provide a scalability evaluation. We thus contribute to the study of the as yet uninvestigated complexity problems of (variously interpreted) BAFs as well as of bipolar ABA, and provide the lacking implementations thereof.

Resolving Conflicts in Clinical Guidelines using Argumentation

Feb 20, 2019

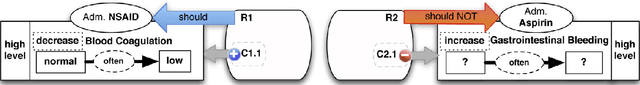

Abstract: Automatically reasoning with conflicting generic clinical guidelines is a burning issue in patient-centric medical reasoning where patient-specific conditions and goals need to be taken into account. It is even more challenging in the presence of preferences such as the patient's wishes and the clinician's priorities over goals. We advance a structured argumentation formalism for reasoning with conflicting clinical guidelines, patient-specific information and preferences. Our formalism integrates assumption-based reasoning and goal-driven selection among reasoning outcomes. Specifically, we assume applicability of guideline recommendations concerning the generic goal of patient well-being, resolve conflicts among recommendations using the patient's conditions and preferences, and then consider prioritised patient-centered goals to yield non-conflicting, goal-maximising and preference-respecting recommendations. We rely on the state-of-the-art Transition-based Medical Recommendation model for representing guideline recommendations and augment it with context given by the patient's conditions and goals, as well as preferences over recommendations and goals. We establish desirable properties of our approach in terms of sensitivity to recommendation conflicts and patient context.

Argumentation for Explainable Scheduling (Full Paper with Proofs)

Nov 13, 2018

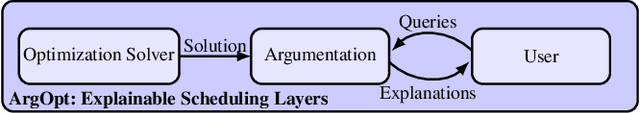

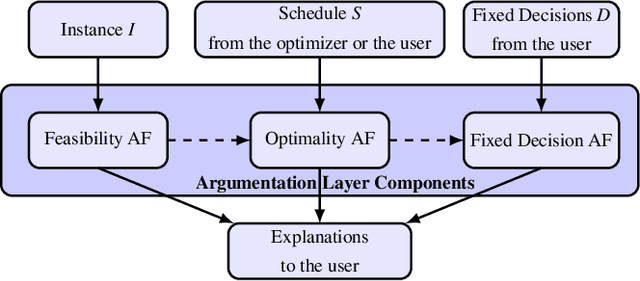

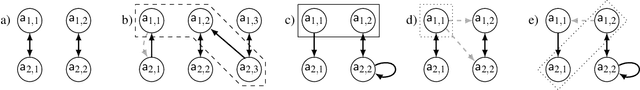

Abstract: Mathematical optimization offers highly effective tools for finding solutions to problems with well-defined goals, notably scheduling. However, optimization solvers are often unexplainable black boxes whose solutions are inaccessible to users and which users cannot interact with. We define a novel paradigm using argumentation to empower the interaction between optimization solvers and users, supported by tractable explanations which certify or refute solutions. A solution can be from a solver or of interest to a user (in the context of 'what-if' scenarios). Specifically, we define argumentative and natural language explanations for why a schedule is (not) feasible, (not) efficient or (not) satisfying fixed user decisions, based on models of the fundamental makespan scheduling problem in terms of abstract argumentation frameworks (AFs). We define three types of AFs, whose stable extensions are in one-to-one correspondence with schedules that are feasible, efficient and satisfying fixed decisions, respectively. We extract the argumentative explanations from these AFs and the natural language explanations from the argumentative ones.
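
For readers unfamiliar with the notion of a stable extension mentioned in this abstract, the following is a minimal brute-force sketch, not the paper's method or its scheduling encoding. It assumes the standard definition: a set of arguments is stable if it is conflict-free and attacks every argument outside it. The function name `stable_extensions` and the toy framework are hypothetical and chosen only for illustration.

```python
from itertools import combinations


def stable_extensions(arguments, attacks):
    """Enumerate stable extensions of an abstract AF by brute force.

    arguments: iterable of hashable argument names (illustrative).
    attacks:   set of (attacker, target) pairs.
    Exponential in the number of arguments; suitable for toy examples only.
    """
    args = sorted(arguments)
    extensions = []
    for size in range(len(args) + 1):
        for subset in combinations(args, size):
            candidate = set(subset)
            # Conflict-free: no member of the candidate attacks another member.
            conflict_free = not any(
                (a, b) in attacks for a in candidate for b in candidate
            )
            # Stable: every argument outside the candidate is attacked by it.
            attacks_rest = all(
                any((a, b) in attacks for a in candidate)
                for b in set(args) - candidate
            )
            if conflict_free and attacks_rest:
                extensions.append(candidate)
    return extensions


# Toy AF (an assumption for illustration): a and b attack each other, b attacks c.
toy_arguments = {"a", "b", "c"}
toy_attacks = {("a", "b"), ("b", "a"), ("b", "c")}

if __name__ == "__main__":
    print(stable_extensions(toy_arguments, toy_attacks))
```

In this toy framework the two stable extensions are `{b}` and `{a, c}`. In the setting the abstract describes, each stable extension of a suitably constructed AF would instead correspond to a schedule with the relevant property; that construction is given in the paper and is not reproduced here.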

ABA+: Assumption-Based Argumentation with Preferences

Oct 12, 2016

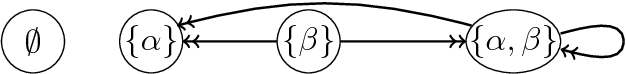

Abstract: We present ABA+, a new approach to handling preferences in a well-known structured argumentation formalism, Assumption-Based Argumentation (ABA). In ABA+, preference information given over assumptions is incorporated directly into the attack relation, thus resulting in attack reversal. ABA+ conservatively extends ABA and exhibits various desirable features regarding the relationship among argumentation semantics as well as preference handling. We also introduce Weak Contraposition, a principle concerning reasoning with rules and preferences that relaxes the standard principle of contraposition, while guaranteeing additional desirable features for ABA+.