Kirill Muravyev

Federal Research Center for Computer Science and Control of Russian Academy of Sciences

NavTopo: Leveraging Topological Maps For Autonomous Navigation Of a Mobile Robot

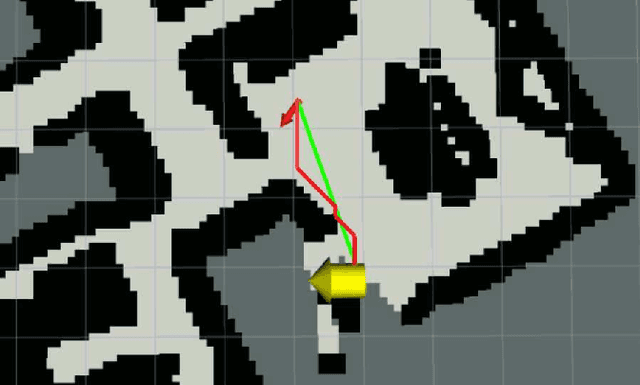

Oct 15, 2024

Abstract: Autonomous navigation of a mobile robot is a challenging task that requires mapping, localization, path planning, and path following. Conventional mapping methods build a dense metric map, such as an occupancy grid, which suffers from odometry error accumulation and consumes a lot of memory and computation in large environments. An alternative approach to mapping exploits topological properties, e.g. the adjacency of locations in the environment. Topological maps are less prone to odometry error accumulation and high resource consumption, and they also enable fast path planning thanks to the sparsity of the graph. Based on this idea, we propose NavTopo, a full navigation pipeline based on a topological map and two-level path planning. The pipeline localizes in the graph by matching neural network descriptors and 2D projections of the input point clouds, which significantly reduces memory consumption compared to metric and topological point cloud-based approaches. We test our approach in a large, photo-realistic simulated indoor environment and compare it to a metric map-based approach built on the popular metric mapping method RTAB-Map. The experimental results show that our topological approach significantly outperforms the metric one in terms of performance while maintaining proper navigational efficiency.

* This paper is published in the proceedings of the 9th International Conference "Interactive Collaborative Robotics" (ICR 2024)
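To illustrate why graph sparsity makes path planning fast, here is a minimal Python sketch of an upper planning level that searches a topological graph with Dijkstra's algorithm. It is not the NavTopo implementation: the graph layout, the node names (such as `hall` and `corridor`) and the edge costs are hypothetical, and the lower level that would follow each edge through a local map is not shown.

```python
import heapq
from typing import Dict, List, Tuple

# Hypothetical topological map: each node is a location and each edge stores
# the traversal cost (e.g. metres) between two adjacent locations.
Graph = Dict[str, Dict[str, float]]


def plan_route(graph: Graph, start: str, goal: str) -> Tuple[float, List[str]]:
    """Dijkstra's algorithm over a sparse graph of locations.

    Returns (total_cost, node_sequence); raises ValueError if goal is unreachable.
    """
    queue = [(0.0, start)]
    best = {start: 0.0}
    parent: Dict[str, str] = {}
    while queue:
        cost, node = heapq.heappop(queue)
        if node == goal:
            # Walk the parent links back to the start to recover the route.
            path = [goal]
            while path[-1] != start:
                path.append(parent[path[-1]])
            return cost, path[::-1]
        if cost > best.get(node, float("inf")):
            continue  # stale queue entry, a cheaper route was already found
        for neighbor, weight in graph.get(node, {}).items():
            new_cost = cost + weight
            if new_cost < best.get(neighbor, float("inf")):
                best[neighbor] = new_cost
                parent[neighbor] = node
                heapq.heappush(queue, (new_cost, neighbor))
    raise ValueError(f"{goal!r} is not reachable from {start!r}")


rooms: Graph = {  # hypothetical example environment
    "hall": {"corridor": 5.0, "kitchen": 12.0},
    "corridor": {"hall": 5.0, "kitchen": 4.0, "office": 6.0},
    "kitchen": {"hall": 12.0, "corridor": 4.0},
    "office": {"corridor": 6.0},
}
print(plan_route(rooms, "hall", "kitchen"))  # → (9.0, ['hall', 'corridor', 'kitchen'])
```

Because the search visits locations rather than individual grid cells, its cost grows with the number of nodes and edges in the graph, which is typically far smaller than the number of cells in a dense occupancy grid of the same environment.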

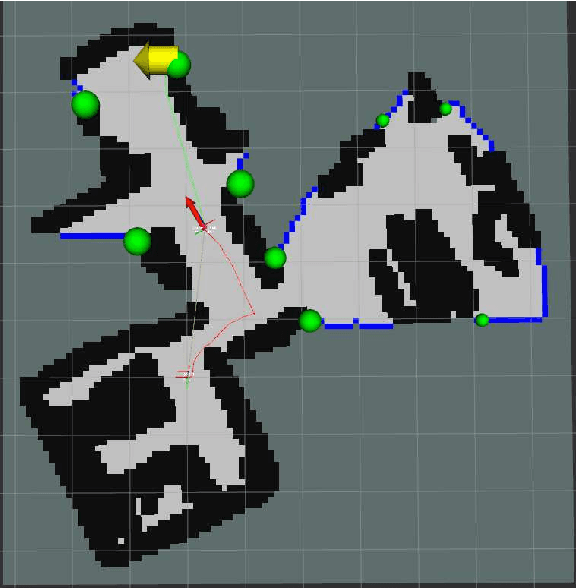

PRISM-TopoMap: Online Topological Mapping with Place Recognition and Scan Matching

Apr 02, 2024

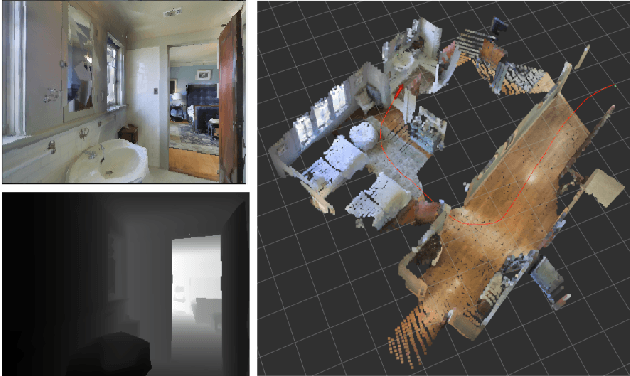

Abstract: Mapping is one of the crucial tasks enabling autonomous navigation of a mobile robot. Conventional mapping methods output a dense geometric map representation, e.g. an occupancy grid, which is not trivial to keep consistent during prolonged runs covering large environments. Meanwhile, capturing the topological structure of the workspace enables fast path planning, is less prone to odometry error accumulation, and does not consume much memory. Following this idea, this paper introduces PRISM-TopoMap, a topological mapping method that maintains a graph of locally aligned locations without relying on global metric coordinates. The proposed method combines learnable multimodal place recognition with a scan matching pipeline for localization and loop closure in the graph of locations. The graph is updated online, and the robot is localized in the proper node at each time step. We conduct a broad experimental evaluation of the suggested approach in a range of photo-realistic environments and on a real robot (a wheeled, differential-drive Husky robot), and compare it to state-of-the-art methods. The results of the empirical evaluation confirm that PRISM-TopoMap consistently outperforms competitors across several measures of mapping and navigation efficiency and performs well on a real robot. The code of PRISM-TopoMap is open-sourced and available at https://github.com/kirillMouraviev/prism-topomap.
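The descriptor-based localization step described above can be illustrated with a simplified sketch: the descriptor of the current observation is compared with the descriptors stored in the graph nodes by cosine similarity, and a new node is created when no stored location is similar enough. This is not PRISM-TopoMap's actual pipeline; the descriptor vectors, the function names and the 0.9 threshold are hypothetical, and the scan matching verification the abstract mentions is only marked with a comment.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two descriptor vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def localize_or_add(nodes: List[List[float]], query: Sequence[float],
                    threshold: float = 0.9) -> int:
    """Return the index of the most similar stored node, or append the query
    as a new node when no similarity reaches the (hypothetical) threshold."""
    best_idx, best_sim = -1, -1.0
    for idx, desc in enumerate(nodes):
        sim = cosine_similarity(desc, query)
        if sim > best_sim:
            best_idx, best_sim = idx, sim
    # A full pipeline would confirm the candidate with scan matching here.
    if best_idx >= 0 and best_sim >= threshold:
        return best_idx
    nodes.append(list(query))
    return len(nodes) - 1


nodes = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]        # hypothetical descriptors
print(localize_or_add(nodes, [0.95, 0.05, 0.0]))  # → 0 (revisited location)
print(localize_or_add(nodes, [0.0, 0.0, 1.0]))    # → 2 (new node appended)
```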

Interactive Semantic Map Representation for Skill-based Visual Object Navigation

Nov 07, 2023

Abstract: Visual object navigation using learning methods is one of the key tasks in mobile robotics. This paper introduces a new representation of a scene semantic map formed during the embodied agent's interaction with the indoor environment. It is based on a neural network method that adjusts the weights of the segmentation model with backpropagation of the predicted fusion loss values during inference on a regular (backward) or delayed (forward) image sequence. We have implemented this representation in a full-fledged navigation approach called SkillTron, which can select robot skills from both end-to-end policies based on reinforcement learning and classic map-based planning methods. The proposed approach makes it possible to form both intermediate goals for robot exploration and the final goal for object navigation. We conducted extensive experiments with the proposed approach in the Habitat environment, which showed significantly better navigation quality metrics than state-of-the-art approaches. The developed code and the custom datasets used are publicly available at github.com/AIRI-Institute/skill-fusion.
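The abstract does not specify how SkillTron chooses between an intermediate exploration goal and the final object goal. As a purely hypothetical illustration of that distinction, the sketch below picks the nearest cell of the target class if it already appears in a semantic grid, and otherwise falls back to the nearest frontier (a free cell next to unexplored space). The cell labels, the grid layout and the selection rule are assumptions, not the paper's method.

```python
from typing import List, Optional, Tuple

# Hypothetical semantic-grid labels; values >= 2 stand for object classes.
UNKNOWN, FREE, OBSTACLE = -1, 0, 1
Cell = Tuple[int, int]


def frontier_cells(grid: List[List[int]]) -> List[Cell]:
    """Return free cells that have at least one unknown 4-neighbour."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break
    return frontiers


def select_goal(grid: List[List[int]], robot: Cell, target: int) -> Tuple[str, Optional[Cell]]:
    """Hypothetical rule: go to the nearest target cell if one is mapped,
    otherwise explore towards the nearest frontier."""
    def dist2(cell: Cell) -> int:
        return (cell[0] - robot[0]) ** 2 + (cell[1] - robot[1]) ** 2

    targets = [(r, c) for r, row in enumerate(grid) for c, v in enumerate(row) if v == target]
    if targets:
        return "final", min(targets, key=dist2)
    frontiers = frontier_cells(grid)
    if frontiers:
        return "intermediate", min(frontiers, key=dist2)
    return "none", None


grid = [
    [FREE, FREE, UNKNOWN],
    [FREE, OBSTACLE, UNKNOWN],
    [FREE, FREE, FREE],
]
print(select_goal(grid, robot=(0, 0), target=5))  # → ('intermediate', (0, 1))
```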

Evaluation of RGB-D SLAM in Large Indoor Environments

Dec 12, 2022

Abstract: Simultaneous localization and mapping (SLAM) is one of the key components of a control system that aims to ensure autonomous navigation of a mobile robot in unknown environments. In many practical cases, a robot might need to travel long distances to accomplish its mission. This requires SLAM methods to work over long periods and to build large maps. Consequently, the computational burden (including high memory consumption for map storage) becomes a bottleneck. Although state-of-the-art SLAM algorithms include specific techniques and optimizations to tackle this challenge, their performance in long-term scenarios still needs proper assessment. To this end, we perform an empirical evaluation of two widespread state-of-the-art RGB-D SLAM methods suitable for long-term navigation, namely RTAB-Map and Voxgraph. We evaluate them in a large simulated indoor environment consisting of corridors and halls, while varying the odometry noise for a more realistic setup. We provide both a qualitative and a quantitative analysis of the two methods, uncovering their strengths and weaknesses. We find that both methods build a high-quality map under low odometry noise but tend to fail under high odometry noise. Voxgraph has lower relative trajectory estimation error and memory consumption than RTAB-Map, while its absolute error is higher.
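The distinction between absolute and relative trajectory error in the last sentence can be made concrete with a simplified sketch on 2D positions. It assumes the estimated and ground-truth trajectories are already time-synchronized and expressed in the same frame (no alignment step), and it compares world-frame displacements instead of full relative poses, so it is a translation-only approximation of the usual metrics rather than the evaluation code used in the paper.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def absolute_trajectory_error(gt: List[Point], est: List[Point]) -> float:
    """RMSE of per-timestamp position differences (no alignment step)."""
    if len(gt) != len(est) or not gt:
        raise ValueError("trajectories must be non-empty and of equal length")
    sq = [(gx - ex) ** 2 + (gy - ey) ** 2 for (gx, gy), (ex, ey) in zip(gt, est)]
    return math.sqrt(sum(sq) / len(sq))


def relative_translation_error(gt: List[Point], est: List[Point], delta: int = 1) -> float:
    """RMSE of the mismatch between ground-truth and estimated displacements
    over `delta` steps, measured in the world frame (translation only)."""
    if len(gt) != len(est) or len(gt) <= delta:
        raise ValueError("need equal-length trajectories longer than delta")
    errs = []
    for i in range(len(gt) - delta):
        gdx, gdy = gt[i + delta][0] - gt[i][0], gt[i + delta][1] - gt[i][1]
        edx, edy = est[i + delta][0] - est[i][0], est[i + delta][1] - est[i][1]
        errs.append((gdx - edx) ** 2 + (gdy - edy) ** 2)
    return math.sqrt(sum(errs) / len(errs))


gt = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
est = [(x + 0.5, y) for x, y in gt]          # constant 0.5 m offset, no drift
print(absolute_trajectory_error(gt, est))   # → 0.5
print(relative_translation_error(gt, est))  # → 0.0
```

In this toy example the estimate is shifted by a constant 0.5 m, so the absolute error is 0.5 while the relative error is zero, which shows how a method can score well on one metric and poorly on the other.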

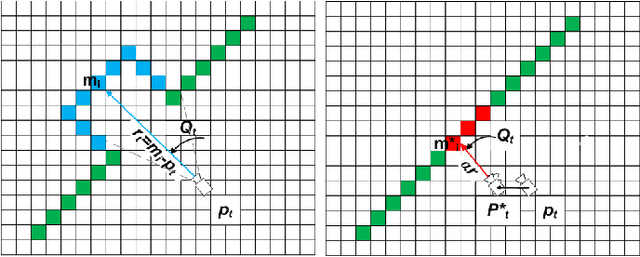

2.5D Mapping, Pathfinding and Path Following For Navigation Of A Differential Drive Robot In Uneven Terrain

Sep 15, 2022

Abstract: Safe navigation in uneven terrain is an important problem in robotics research. In this paper we propose a 2.5D navigation system that consists of elevation map building, path planning, and local path following with obstacle avoidance. For local path following we use the Model Predictive Path Integral (MPPI) control method. We propose novel cost functions for MPPI in order to adapt it to elevation maps and to motion over uneven surfaces. We evaluate our system on multiple synthetic tests and in a simulated environment with different types of obstacles and rough surfaces.
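For readers unfamiliar with MPPI, the following stdlib-only Python sketch shows one common form of the update for a differential-drive (unicycle) model: sample perturbed control sequences, roll them out, weight each by its exponentiated negative cost, and shift the nominal controls by the weighted average perturbation. The elevation term (a penalty on the squared height change per step, using a user-supplied `height(x, y)` function) and all parameter values are hypothetical placeholders, not the cost functions proposed in the paper.

```python
import math
import random
from typing import Callable, List, Tuple

State = Tuple[float, float, float]  # x, y, heading (rad)
Control = Tuple[float, float]       # linear velocity v, angular velocity w


def step(s: State, u: Control, dt: float) -> State:
    """Unicycle (differential-drive) kinematics, explicit Euler step."""
    x, y, th = s
    v, w = u
    return (x + v * math.cos(th) * dt, y + v * math.sin(th) * dt, th + w * dt)


def mppi_update(state: State, nominal: List[Control], goal: Tuple[float, float],
                height: Callable[[float, float], float], rng: random.Random,
                samples: int = 200, dt: float = 0.1,
                sigma: Tuple[float, float] = (0.3, 0.5),
                lam: float = 1.0, slope_weight: float = 10.0) -> List[Control]:
    """One MPPI iteration: return the nominal control sequence shifted by the
    cost-weighted average of the sampled perturbations."""
    perturbations, costs = [], []
    for _ in range(samples):
        s, cost, noise = state, 0.0, []
        for v, w in nominal:
            dv, dw = rng.gauss(0.0, sigma[0]), rng.gauss(0.0, sigma[1])
            noise.append((dv, dw))
            nxt = step(s, (v + dv, w + dw), dt)
            # Hypothetical elevation-aware term: penalize height change per step.
            dz = height(nxt[0], nxt[1]) - height(s[0], s[1])
            cost += (nxt[0] - goal[0]) ** 2 + (nxt[1] - goal[1]) ** 2 + slope_weight * dz * dz
            s = nxt
        perturbations.append(noise)
        costs.append(cost)
    best = min(costs)  # subtract the minimum cost for numerical stability
    weights = [math.exp(-(c - best) / lam) for c in costs]
    total = sum(weights)
    updated = []
    for t, (v, w) in enumerate(nominal):
        dv = sum(wk * p[t][0] for wk, p in zip(weights, perturbations)) / total
        dw = sum(wk * p[t][1] for wk, p in zip(weights, perturbations)) / total
        updated.append((v + dv, w + dw))
    return updated


def flat(x: float, y: float) -> float:
    """Hypothetical terrain: perfectly flat ground."""
    return 0.0


# Receding-horizon usage: optimize, execute the first control, shift the plan.
rng = random.Random(0)
state, goal = (0.0, 0.0, 0.0), (2.0, 0.0)
plan = [(0.0, 0.0)] * 20
for _ in range(30):
    plan = mppi_update(state, plan, goal, flat, rng)
    state = step(state, plan[0], 0.1)  # execute only the first control
    plan = plan[1:] + [plan[-1]]       # shift the horizon forward
print(state)
```

The temperature `lam` controls how strongly the update favors the lowest-cost rollouts; a larger `slope_weight` would make this sketch avoid steep height changes more aggressively.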

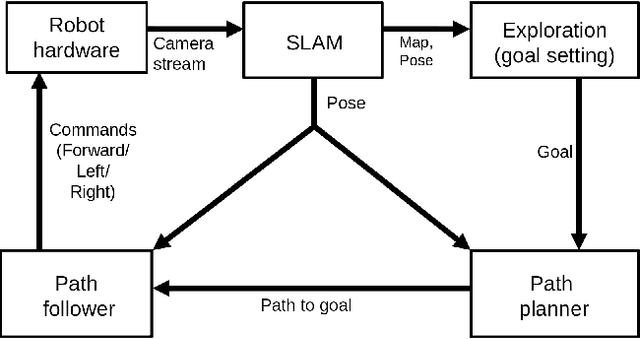

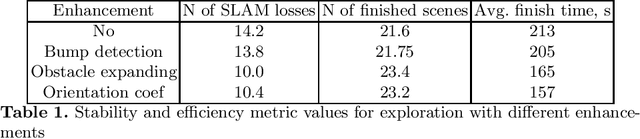

Enhancing exploration algorithms for navigation with visual SLAM

Oct 18, 2021

Abstract: Exploration is an important step in the autonomous navigation of robotic systems. In this paper we introduce a series of enhancements to exploration algorithms that allow them to be used with vision-based simultaneous localization and mapping (vSLAM) methods. We evaluate the developed approaches in a photo-realistic simulator in two modes: with ground-truth depths and with neural-network-reconstructed depth maps as vSLAM input. We use standard metrics to estimate exploration coverage.
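The abstract does not name the specific metrics, but one common way to estimate exploration coverage is the fraction of ground-truth free cells that the robot has observed. A minimal sketch, assuming the ground-truth free-space mask and the robot's occupancy grid are aligned cell by cell and that unobserved cells carry a hypothetical `UNKNOWN = -1` label:

```python
from typing import List

UNKNOWN = -1  # hypothetical label for cells the robot has never observed


def exploration_coverage(gt_free: List[List[bool]], explored: List[List[int]]) -> float:
    """Fraction of ground-truth free cells that carry any label other than
    UNKNOWN in the robot's map (0.0 if the ground truth has no free cells)."""
    total = observed = 0
    for gt_row, map_row in zip(gt_free, explored):
        for is_free, label in zip(gt_row, map_row):
            if is_free:
                total += 1
                if label != UNKNOWN:
                    observed += 1
    return observed / total if total else 0.0


gt_free = [[True, True], [True, False]]
explored = [[0, UNKNOWN], [0, 1]]  # 0 = free, 1 = occupied in the robot's map
print(exploration_coverage(gt_free, explored))  # two of three free cells seen
```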

MAOMaps: A Photo-Realistic Benchmark For vSLAM and Map Merging Quality Assessment

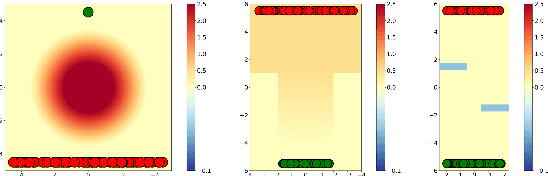

May 31, 2021

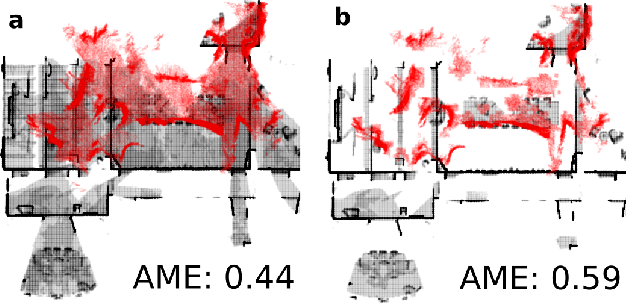

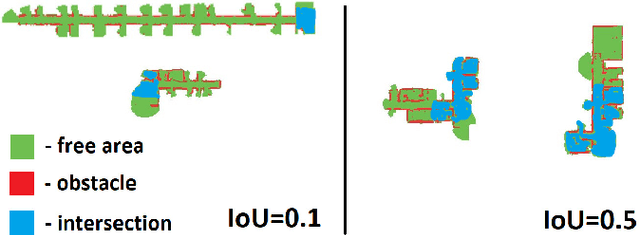

Abstract: Running numerous experiments in simulation is a necessary step before deploying a control system on a real robot. In this paper we introduce a novel benchmark aimed at quantitatively evaluating the quality of vision-based simultaneous localization and mapping (vSLAM) and map merging algorithms. The benchmark consists of both a dataset and a set of tools for automatic evaluation. The dataset is photo-realistic and provides ground truth data for both localization and the map. This makes it possible to evaluate not only the localization part of the SLAM pipeline but the mapping part as well. To compare the vSLAM-built maps with the ground-truth ones, we introduce a novel way to find correspondences between them that takes the SLAM context into account (as opposed to other approaches such as nearest neighbors). The benchmark is ROS-compatible and open-sourced to the community. The data and the code are available at: github.com/CnnDepth/MAOMaps.
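For contrast with the correspondence method proposed in the benchmark, here is a sketch of the simple nearest-neighbor baseline the abstract mentions: each point of the vSLAM-built map is paired with the closest ground-truth point within a distance threshold. The benchmark's SLAM-context-aware method is not reproduced here, and the point format and the 0.1 threshold are assumptions.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]


def nearest_neighbor_matches(built: List[Point], gt: List[Point],
                             max_dist: float = 0.1) -> List[Tuple[int, int, float]]:
    """For each built-map point, find the closest ground-truth point within
    max_dist (brute force); returns (built_index, gt_index, distance) triples."""
    matches = []
    for i, p in enumerate(built):
        best_j, best_d = -1, max_dist
        for j, q in enumerate(gt):
            d = math.dist(p, q)
            if d <= best_d:
                best_j, best_d = j, d
        if best_j >= 0:
            matches.append((i, best_j, best_d))
    return matches


def matched_fraction(built: List[Point], gt: List[Point], max_dist: float = 0.1) -> float:
    """Share of built-map points that found a ground-truth partner."""
    return len(nearest_neighbor_matches(built, gt, max_dist)) / len(built) if built else 0.0


built = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (5.0, 5.0, 5.0)]
gt = [(0.05, 0.0, 0.0), (1.0, 0.02, 0.0)]
print(matched_fraction(built, gt))  # the outlier at (5, 5, 5) stays unmatched
```

A purely geometric rule like this ignores how each point was produced, which is the limitation the benchmark's correspondence method is designed to address.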

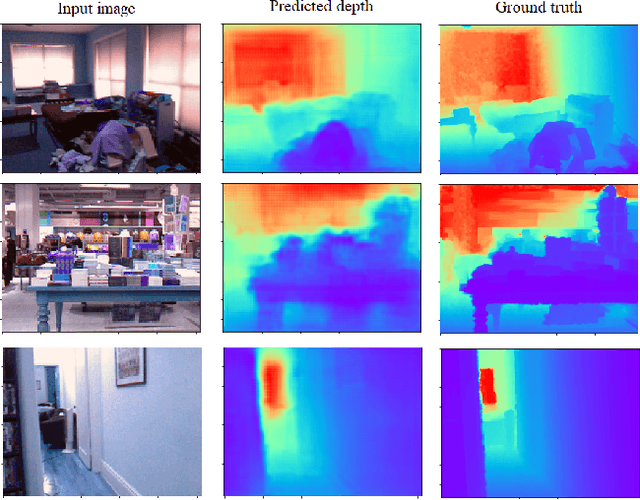

Real-time Vision-based Depth Reconstruction with NVidia Jetson

Jul 16, 2019

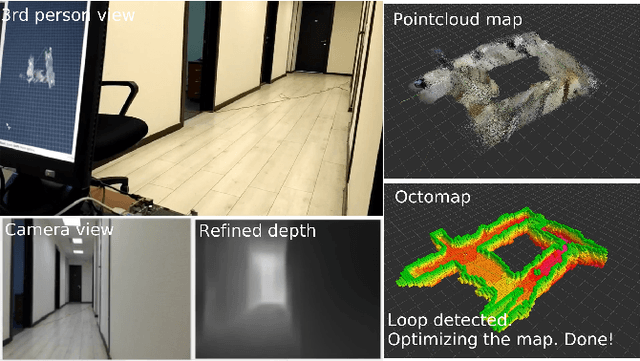

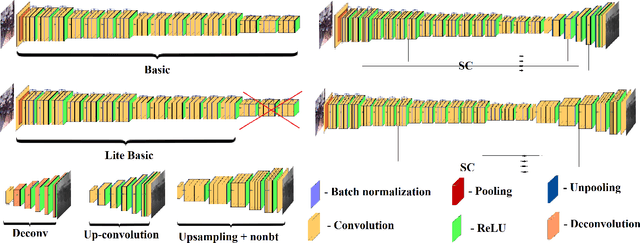

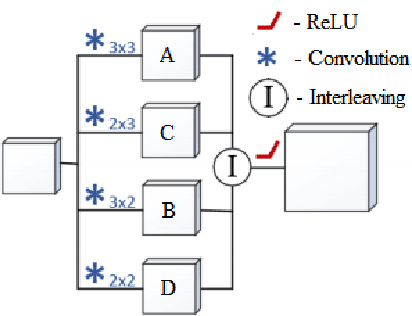

Abstract: Vision-based depth reconstruction is a challenging problem that has been extensively studied in computer vision but still lacks a universal solution. Reconstructing depth from a single image is particularly valuable for mobile robotics, as it can be embedded into modern vision-based simultaneous localization and mapping (vSLAM) methods, providing them with the metric information needed to construct accurate maps at real scale. Nowadays, depth reconstruction is typically done with fully convolutional neural networks (FCNNs). In this work we experiment with several FCNN architectures and introduce a few enhancements aimed at increasing both the effectiveness and the efficiency of inference. We experimentally determine the solution that provides the best performance/accuracy tradeoff and is able to run on NVidia Jetson at frame rates exceeding 16 FPS for 320 x 240 input. We also evaluate the suggested models by running monocular vSLAM of an unknown indoor environment on NVidia Jetson TX2 in real time. An open-source implementation of the models and the inference node for the Robot Operating System (ROS) is available at https://github.com/CnnDepth/tx2_fcnn_node.
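As a sanity check on the frame rate figure, 16 FPS corresponds to a per-frame budget of 1000 / 16 = 62.5 ms. On the accuracy side, the abstract does not list the metrics used; the sketch below computes three measures commonly reported for monocular depth estimation (RMSE, mean absolute relative error, and the share of pixels with max(pred/gt, gt/pred) < 1.25), assuming that predictions and ground truth are flattened per-pixel depths in metres and that gt = 0 marks invalid pixels.

```python
import math
from typing import Dict, Sequence


def depth_metrics(pred: Sequence[float], gt: Sequence[float],
                  eps: float = 1e-6) -> Dict[str, float]:
    """Compute RMSE, mean absolute relative error and delta<1.25 accuracy.

    Pixels with gt <= 0 are treated as invalid and skipped; predictions are
    clamped to eps so the ratio test never divides by zero.
    """
    pairs = [(max(p, eps), g) for p, g in zip(pred, gt) if g > 0]
    if not pairs:
        raise ValueError("no valid ground-truth pixels")
    n = len(pairs)
    rmse = math.sqrt(sum((p - g) ** 2 for p, g in pairs) / n)
    abs_rel = sum(abs(p - g) / g for p, g in pairs) / n
    delta1 = sum(1 for p, g in pairs if max(p / g, g / p) < 1.25) / n
    return {"rmse": rmse, "abs_rel": abs_rel, "delta1": delta1}


print(depth_metrics([1.0, 2.0, 4.0], [1.0, 2.0, 2.0]))
```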
