Keren Zhu

LLM-Enhanced Bayesian Optimization for Efficient Analog Layout Constraint Generation

Jun 07, 2024

Abstract:Analog layout synthesis faces significant challenges due to its dependence on manual processes, considerable time requirements, and performance instability. Current Bayesian Optimization (BO)-based techniques for analog layout synthesis, despite their potential for automation, suffer from slow convergence and extensive data needs, limiting their practical application. This paper presents the \texttt{LLANA} framework, a novel approach that leverages Large Language Models (LLMs) to enhance BO by exploiting the few-shot learning abilities of LLMs for more efficient generation of analog design-dependent parameter constraints. Experimental results demonstrate that \texttt{LLANA} not only achieves performance comparable to state-of-the-art (SOTA) BO methods but also enables a more effective exploration of the analog circuit design space, thanks to LLM's superior contextual understanding and learning efficiency. The code is available at \url{https://github.com/dekura/LLANA}.

Practical Layout-Aware Analog/Mixed-Signal Design Automation with Bayesian Neural Networks

Nov 27, 2023Abstract:The high simulation cost has been a bottleneck of practical analog/mixed-signal design automation. Many learning-based algorithms require thousands of simulated data points, which is impractical for expensive to simulate circuits. We propose a learning-based algorithm that can be trained using a small amount of data and, therefore, scalable to tasks with expensive simulations. Our efficient algorithm solves the post-layout performance optimization problem where simulations are known to be expensive. Our comprehensive study also solves the schematic-level sizing problem. For efficient optimization, we utilize Bayesian Neural Networks as a regression model to approximate circuit performance. For layout-aware optimization, we handle the problem as a multi-fidelity optimization problem and improve efficiency by exploiting the correlations from cheaper evaluations. We present three test cases to demonstrate the efficiency of our algorithms. Our tests prove that the proposed approach is more efficient than conventional baselines and state-of-the-art algorithms.

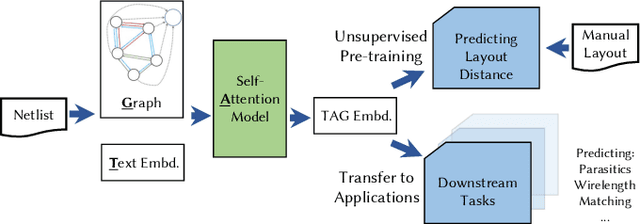

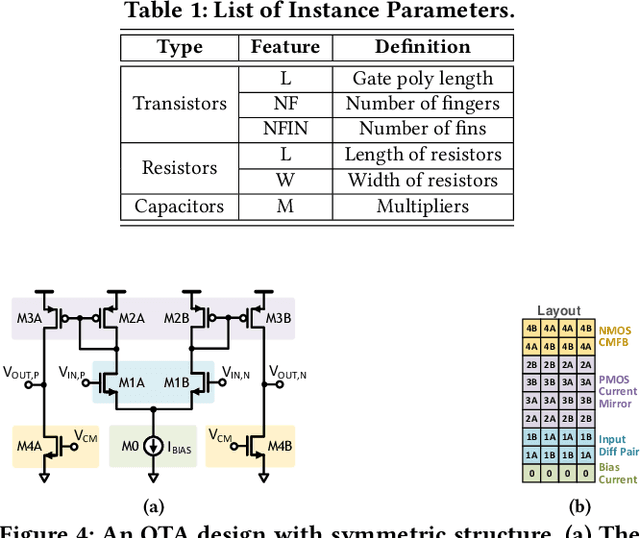

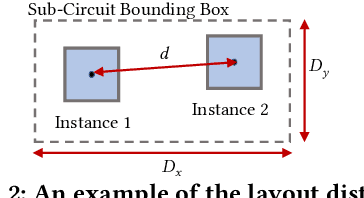

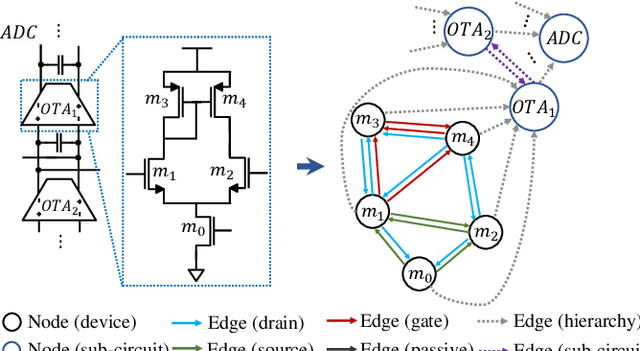

TAG: Learning Circuit Spatial Embedding From Layouts

Sep 07, 2022

Abstract:Analog and mixed-signal (AMS) circuit designs still rely on human design expertise. Machine learning has been assisting circuit design automation by replacing human experience with artificial intelligence. This paper presents TAG, a new paradigm of learning the circuit representation from layouts leveraging text, self-attention and graph. The embedding network model learns spatial information without manual labeling. We introduce text embedding and a self-attention mechanism to AMS circuit learning. Experimental results demonstrate the ability to predict layout distances between instances with industrial FinFET technology benchmarks. The effectiveness of the circuit representation is verified by showing the transferability to three other learning tasks with limited data in the case studies: layout matching prediction, wirelength estimation, and net parasitic capacitance prediction.

Optimizer Fusion: Efficient Training with Better Locality and Parallelism

Apr 01, 2021

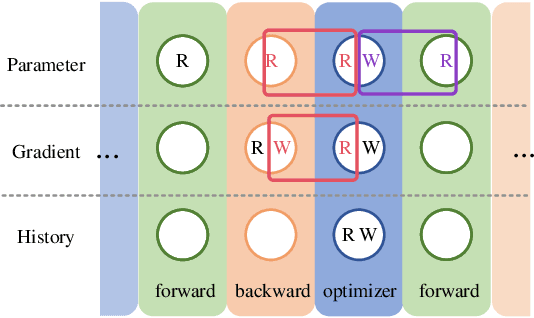

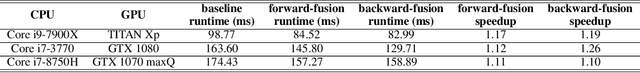

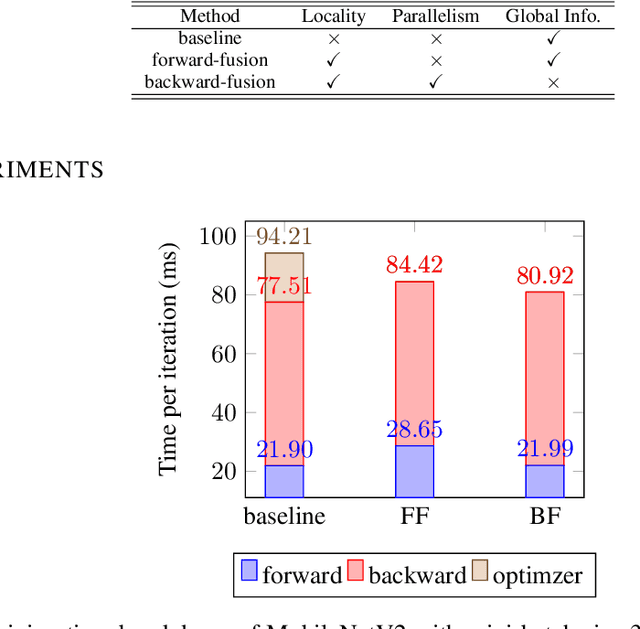

Abstract:Machine learning frameworks adopt iterative optimizers to train neural networks. Conventional eager execution separates the updating of trainable parameters from forward and backward computations. However, this approach introduces nontrivial training time overhead due to the lack of data locality and computation parallelism. In this work, we propose to fuse the optimizer with forward or backward computation to better leverage locality and parallelism during training. By reordering the forward computation, gradient calculation, and parameter updating, our proposed method improves the efficiency of iterative optimizers. Experimental results demonstrate that we can achieve an up to 20% training time reduction on various configurations. Since our methods do not alter the optimizer algorithm, they can be used as a general "plug-in" technique to the training process.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge