Junsuo Zhao

Learn to Think: Bootstrapping LLM Reasoning Capability Through Graph Representation Learning

May 17, 2025Abstract:Large Language Models (LLMs) have achieved remarkable success across various domains. However, they still face significant challenges, including high computational costs for training and limitations in solving complex reasoning problems. Although existing methods have extended the reasoning capabilities of LLMs through structured paradigms, these approaches often rely on task-specific prompts and predefined reasoning processes, which constrain their flexibility and generalizability. To address these limitations, we propose a novel framework that leverages graph learning to enable more flexible and adaptive reasoning capabilities for LLMs. Specifically, this approach models the reasoning process of a problem as a graph and employs LLM-based graph learning to guide the adaptive generation of each reasoning step. To further enhance the adaptability of the model, we introduce a Graph Neural Network (GNN) module to perform representation learning on the generated reasoning process, enabling real-time adjustments to both the model and the prompt. Experimental results demonstrate that this method significantly improves reasoning performance across multiple tasks without requiring additional training or task-specific prompt design. Code can be found in https://github.com/zch65458525/L2T.

Large Language Model Enhancers for Graph Neural Networks: An Analysis from the Perspective of Causal Mechanism Identification

May 15, 2025Abstract:The use of large language models (LLMs) as feature enhancers to optimize node representations, which are then used as inputs for graph neural networks (GNNs), has shown significant potential in graph representation learning. However, the fundamental properties of this approach remain underexplored. To address this issue, we propose conducting a more in-depth analysis of this issue based on the interchange intervention method. First, we construct a synthetic graph dataset with controllable causal relationships, enabling precise manipulation of semantic relationships and causal modeling to provide data for analysis. Using this dataset, we conduct interchange interventions to examine the deeper properties of LLM enhancers and GNNs, uncovering their underlying logic and internal mechanisms. Building on the analytical results, we design a plug-and-play optimization module to improve the information transfer between LLM enhancers and GNNs. Experiments across multiple datasets and models validate the proposed module.

LLM Enhancers for GNNs: An Analysis from the Perspective of Causal Mechanism Identification

May 13, 2025Abstract:The use of large language models (LLMs) as feature enhancers to optimize node representations, which are then used as inputs for graph neural networks (GNNs), has shown significant potential in graph representation learning. However, the fundamental properties of this approach remain underexplored. To address this issue, we propose conducting a more in-depth analysis of this issue based on the interchange intervention method. First, we construct a synthetic graph dataset with controllable causal relationships, enabling precise manipulation of semantic relationships and causal modeling to provide data for analysis. Using this dataset, we conduct interchange interventions to examine the deeper properties of LLM enhancers and GNNs, uncovering their underlying logic and internal mechanisms. Building on the analytical results, we design a plug-and-play optimization module to improve the information transfer between LLM enhancers and GNNs. Experiments across multiple datasets and models validate the proposed module.

Bootstrapping Heterogeneous Graph Representation Learning via Large Language Models: A Generalized Approach

Dec 11, 2024

Abstract:Graph representation learning methods are highly effective in handling complex non-Euclidean data by capturing intricate relationships and features within graph structures. However, traditional methods face challenges when dealing with heterogeneous graphs that contain various types of nodes and edges due to the diverse sources and complex nature of the data. Existing Heterogeneous Graph Neural Networks (HGNNs) have shown promising results but require prior knowledge of node and edge types and unified node feature formats, which limits their applicability. Recent advancements in graph representation learning using Large Language Models (LLMs) offer new solutions by integrating LLMs' data processing capabilities, enabling the alignment of various graph representations. Nevertheless, these methods often overlook heterogeneous graph data and require extensive preprocessing. To address these limitations, we propose a novel method that leverages the strengths of both LLM and GNN, allowing for the processing of graph data with any format and type of nodes and edges without the need for type information or special preprocessing. Our method employs LLM to automatically summarize and classify different data formats and types, aligns node features, and uses a specialized GNN for targeted learning, thus obtaining effective graph representations for downstream tasks. Theoretical analysis and experimental validation have demonstrated the effectiveness of our method.

MBDS: A Multi-Body Dynamics Simulation Dataset for Graph Networks Simulators

Oct 04, 2024

Abstract:Modeling the structure and events of the physical world constitutes a fundamental objective of neural networks. Among the diverse approaches, Graph Network Simulators (GNS) have emerged as the leading method for modeling physical phenomena, owing to their low computational cost and high accuracy. The datasets employed for training and evaluating physical simulation techniques are typically generated by researchers themselves, often resulting in limited data volume and quality. Consequently, this poses challenges in accurately assessing the performance of these methods. In response to this, we have constructed a high-quality physical simulation dataset encompassing 1D, 2D, and 3D scenes, along with more trajectories and time-steps compared to existing datasets. Furthermore, our work distinguishes itself by developing eight complete scenes, significantly enhancing the dataset's comprehensiveness. A key feature of our dataset is the inclusion of precise multi-body dynamics, facilitating a more realistic simulation of the physical world. Utilizing our high-quality dataset, we conducted a systematic evaluation of various existing GNS methods. Our dataset is accessible for download at https://github.com/Sherlocktein/MBDS, offering a valuable resource for researchers to enhance the training and evaluation of their methodologies.

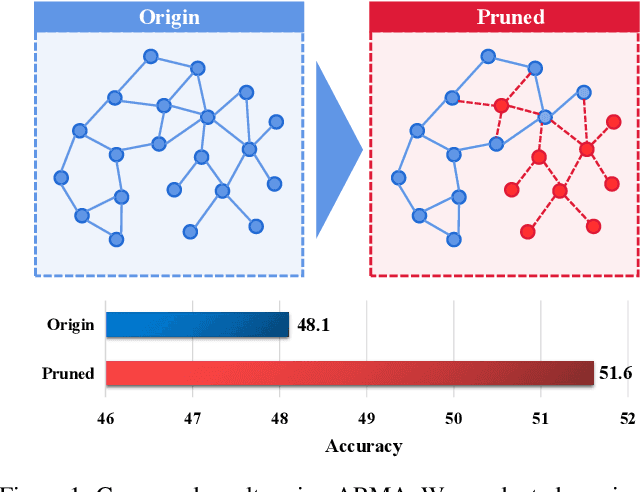

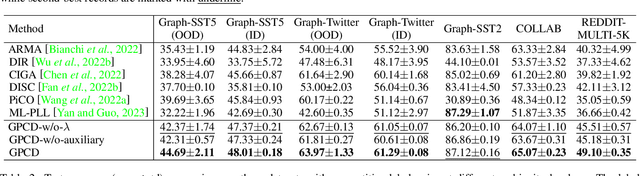

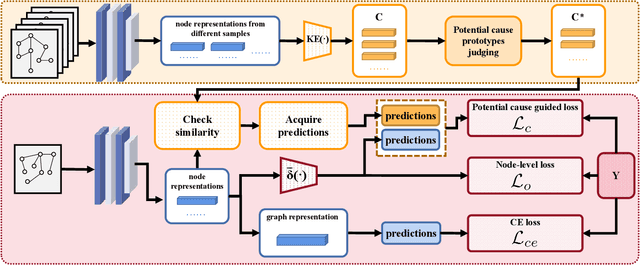

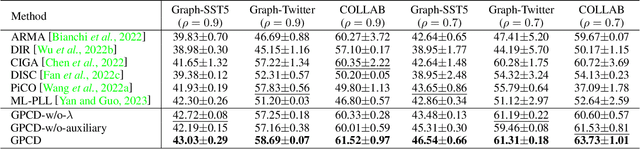

Graph Partial Label Learning with Potential Cause Discovering

Mar 18, 2024

Abstract:Graph Neural Networks (GNNs) have gained considerable attention for their potential in addressing challenges posed by complex graph-structured data in diverse domains. However, accurately annotating graph data for training is difficult due to the inherent complexity and interconnectedness of graphs. To tackle this issue, we propose a novel graph representation learning method that enables GNN models to effectively learn discriminative information even in the presence of noisy labels within the context of Partially Labeled Learning (PLL). PLL is a critical weakly supervised learning problem, where each training instance is associated with a set of candidate labels, including both the true label and additional noisy labels. Our approach leverages potential cause extraction to obtain graph data that exhibit a higher likelihood of possessing a causal relationship with the labels. By incorporating auxiliary training based on the extracted graph data, our model can effectively filter out the noise contained in the labels. We support the rationale behind our approach with a series of theoretical analyses. Moreover, we conduct extensive evaluations and ablation studies on multiple datasets, demonstrating the superiority of our proposed method.

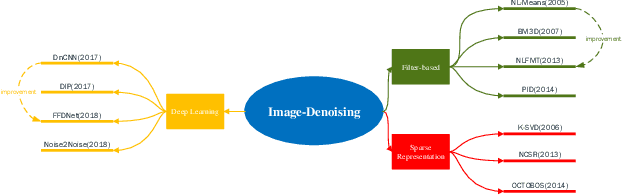

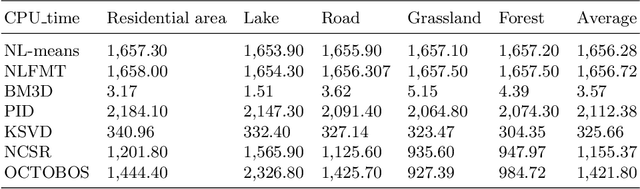

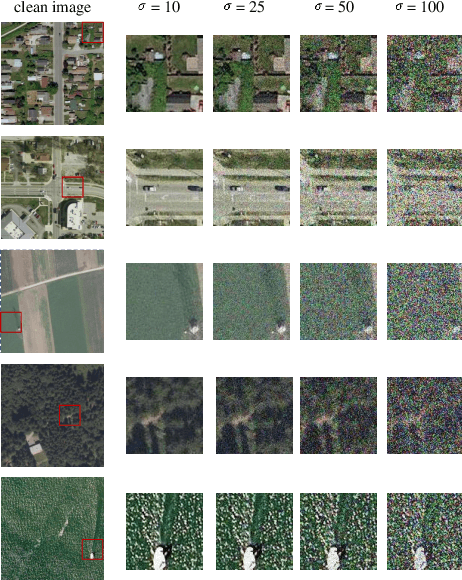

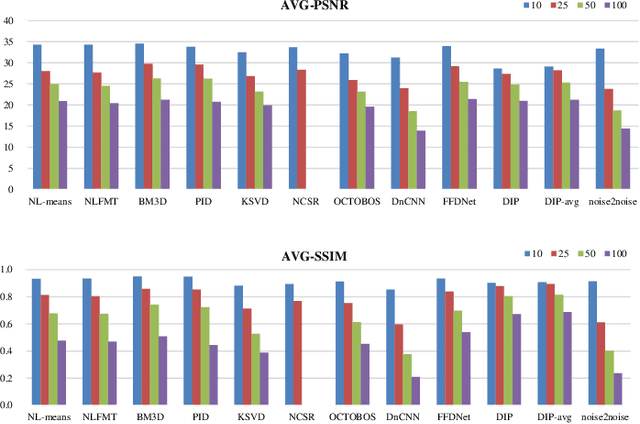

A Research and Strategy of Remote Sensing Image Denoising Algorithms

May 24, 2019

Abstract:Most raw data download from satellites are useless, resulting in transmission waste, one solution is to process data directly on satellites, then only transmit the processed results to the ground. Image processing is the main data processing on satellites, in this paper, we focus on image denoising which is the basic image processing. There are many high-performance denoising approaches at present, however, most of them rely on advanced computing resources or rich images on the ground. Considering the limited computing resources of satellites and the characteristics of remote sensing images, we do some research on these high-performance ground image denoising approaches and compare them in simulation experiments to analyze whether they are suitable for satellites. According to the analysis results, we propose two feasible image denoising strategies for satellites based on satellite TianZhi-1.

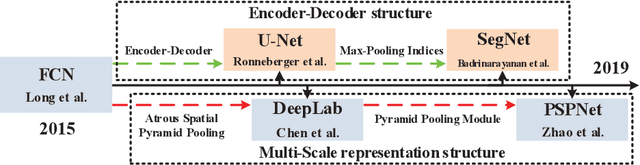

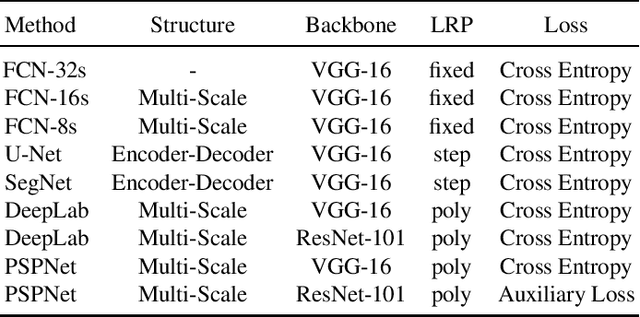

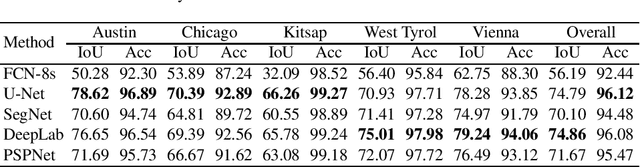

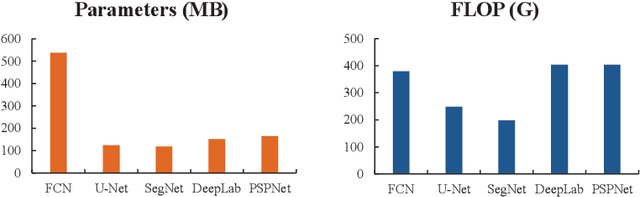

A Comparison and Strategy of Semantic Segmentation on Remote Sensing Images

May 24, 2019

Abstract:In recent years, with the development of aerospace technology, we use more and more images captured by satellites to obtain information. But a large number of useless raw images, limited data storage resource and poor transmission capability on satellites hinder our use of valuable images. Therefore, it is necessary to deploy an on-orbit semantic segmentation model to filter out useless images before data transmission. In this paper, we present a detailed comparison on the recent deep learning models. Considering the computing environment of satellites, we compare methods from accuracy, parameters and resource consumption on the same public dataset. And we also analyze the relation between them. Based on experimental results, we further propose a viable on-orbit semantic segmentation strategy. It will be deployed on the TianZhi-2 satellite which supports deep learning methods and will be lunched soon.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge