Johannes Fürnkranz

Probabilistic Scoring Lists for Interpretable Machine Learning

Jul 31, 2024

Abstract: A scoring system is a simple decision model that checks a set of features, adds a certain number of points to a total score for each feature that is satisfied, and finally makes a decision by comparing the total score to a threshold. Scoring systems have a long history of active use in safety-critical domains such as healthcare and justice, where they provide guidance for making objective and accurate decisions. Given their genuine interpretability, the idea of learning scoring systems from data is obviously appealing from the perspective of explainable AI. In this paper, we propose a practically motivated extension of scoring systems called probabilistic scoring lists (PSL), as well as a method for learning PSLs from data. Instead of making a deterministic decision, a PSL represents uncertainty in the form of probability distributions, or, more generally, probability intervals. Moreover, in the spirit of decision lists, a PSL evaluates features one by one and stops as soon as a decision can be made with enough confidence. To evaluate our approach, we conduct a case study in the medical domain.
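The evaluation procedure described in this abstract (add points feature by feature, look up a probability, stop once it is confident enough) can be sketched as follows. This is a minimal illustration, not the authors' method: the `Stage` class, the feature names, the point values, the probability tables, and the `confidence` stopping rule are all hypothetical, and the sketch uses point probabilities rather than probability intervals.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Stage:
    feature: str                      # binary feature checked at this stage
    points: int                       # points added if the feature is satisfied
    prob_by_score: Dict[int, float]   # hypothetical P(positive | total score so far)


def evaluate_psl(stages: List[Stage], x: Dict[str, bool],
                 confidence: float = 0.9) -> Tuple[float, int]:
    """Evaluate features one by one and stop early when confident.

    Returns (estimated probability of the positive class,
             number of features that were evaluated).
    """
    score = 0
    prob = 0.5  # uninformed estimate before any feature is checked
    for used, stage in enumerate(stages, start=1):
        if x.get(stage.feature, False):
            score += stage.points
        prob = stage.prob_by_score[score]
        # Assumed stopping rule: stop when the estimate is near 0 or near 1.
        if prob >= confidence or prob <= 1 - confidence:
            return prob, used
    return prob, len(stages)


# Made-up list with two stages; every reachable score has a table entry.
STAGES = [
    Stage("feature_a", 2, {0: 0.05, 2: 0.60}),
    Stage("feature_b", 1, {0: 0.02, 1: 0.10, 2: 0.55, 3: 0.95}),
]

print(evaluate_psl(STAGES, {"feature_a": False}))
print(evaluate_psl(STAGES, {"feature_a": True, "feature_b": True}))
```

In the first call the list stops after a single feature because the estimate is already low enough; in the second it needs both features before the estimate becomes confident.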

Efficiently Training Neural Networks for Imperfect Information Games by Sampling Information Sets

Jul 08, 2024

Abstract: In imperfect information games, the evaluation of a game state not only depends on the observable world but also relies on hidden parts of the environment. As accessing the obstructed information trivialises state evaluations, one approach to tackle such problems is to estimate the value of the imperfect state as a combination of all states in the information set, i.e., all possible states that are consistent with the current imperfect information. In this work, the goal is to learn a function that maps from the imperfect game information state to its expected value. However, constructing a perfect training set, i.e., an enumeration of the whole information set for numerous imperfect states, is often infeasible. To compute the expected values for an imperfect information game like Reconnaissance Blind Chess, one would need to evaluate thousands of chess positions just to obtain the training target for a single state. Still, the expected value of a state can already be approximated with appropriate accuracy from a much smaller set of evaluations. Thus, in this paper, we empirically investigate how a budget of perfect information game evaluations should be distributed among training samples to maximise the return. Our results show that sampling a small number of states, in our experiments roughly 3, for a larger number of separate positions is preferable to repeatedly sampling a smaller quantity of states. Thus, we find that in our case, the quantity of different samples seems to be more important than higher target quality.

Contrastive Learning of Preferences with a Contextual InfoNCE Loss

Jul 08, 2024

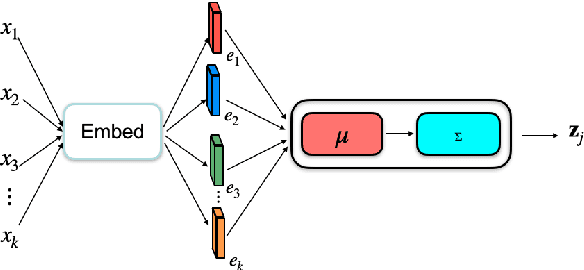

Abstract: A common problem in contextual preference ranking is that a single preferred action is compared against several choices, thereby blowing up the complexity and skewing the preference distribution. In this work, we show how one can solve this problem via a suitable adaptation of the CLIP framework. This adaptation is not entirely straightforward, because although the InfoNCE loss used by CLIP has achieved great success in computer vision and multi-modal domains, its batch-construction technique requires the ability to compare arbitrary items, and is not well-defined if one item has multiple positive associations in the same batch. We empirically demonstrate the utility of our adapted version of the InfoNCE loss in the domain of collectable card games, where we aim to learn an embedding space that captures the associations between single cards and whole card pools based on human selections. Such selection data only exists for restricted choices, thus generating concrete preferences of one item over a set of other items rather than a perfect fit between the card and the pool. Our results show that vanilla CLIP does not perform well due to the aforementioned intuitive issues. However, by adapting CLIP to the problem, we obtain a model outperforming previous work trained with the triplet loss, while also alleviating problems associated with mining triplets.
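For orientation, the standard InfoNCE loss for one context with a single positive and a set of negatives can be sketched as below. The abstract does not spell out the authors' adaptation, so this is only the generic form under stated assumptions: the context (for example a card pool) is the anchor, the chosen item is the positive, and only the items actually offered alongside it serve as negatives. The function names, the cosine similarity, and the temperature value are illustrative choices, and all vectors are assumed to be non-zero.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity of two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def info_nce(anchor: Sequence[float], positive: Sequence[float],
             negatives: List[Sequence[float]], temperature: float = 0.1) -> float:
    """-log softmax probability of the positive among positive + negatives."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, n) / temperature for n in negatives]
    # Log-sum-exp with max subtraction for numerical stability.
    m = max(logits)
    log_norm = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_norm - logits[0]


context = [1.0, 0.0]
good = info_nce(context, [1.0, 0.0], [[0.0, 1.0], [-1.0, 0.0]])
bad = info_nce(context, [0.0, 1.0], [[1.0, 0.0]])
print(good, bad)
```

The loss is close to zero when the chosen item is the one most similar to the context and grows when an unchosen item is more similar.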

Neural Network-based Information Set Weighting for Playing Reconnaissance Blind Chess

Jul 08, 2024

Abstract: In imperfect information games, the game state is generally not fully observable to players. Therefore, good gameplay requires policies that deal with the different information that is hidden from each player. To combat this, effective algorithms often reason about information sets, i.e., the sets of all possible game states that are consistent with a player's observations. While there is no way to distinguish between the states within an information set, this property does not imply that all states are equally likely to occur in play. We extend previous research on assigning weights to the states in an information set in order to facilitate better gameplay in the imperfect information game of Reconnaissance Blind Chess. For this, we train two different neural networks which estimate the likelihood of each state in an information set from historical game data. Experimentally, we find that a Siamese neural network is able to achieve higher accuracy and is more efficient than a classical convolutional neural network for the given domain. Finally, we evaluate an RBC-playing agent that is based on the generated weightings and compare different parameter settings that influence how strongly it should rely on them. The resulting best player is ranked 5th on the public leaderboard.

Learning With Generalised Card Representations for "Magic: The Gathering"

Jul 08, 2024

Abstract: A defining feature of collectable card games is the deck building process prior to actual gameplay, in which players form their decks according to some restrictions. Learning to build decks is difficult for players and models alike due to the large card variety and highly complex semantics, as well as requiring meaningful card and deck representations when aiming to utilise AI. In addition, regular releases of new card sets lead to unforeseeable fluctuations in the available card pool, thus affecting possible deck configurations and requiring continuous updates. Previous Game AI approaches to building decks have often been limited to fixed sets of possible cards, which greatly limits their utility in practice. In this work, we explore possible card representations that generalise to unseen cards, thus greatly extending the real-world utility of AI-based deck building for the game "Magic: The Gathering". We study such representations based on numerical, nominal, and text-based features of cards, card images, and meta information about card usage from third-party services. Our results show that while the particular choice of generalised input representation has little effect on learning to predict human card selections among known cards, the performance on new, unseen cards can be greatly improved. Our generalised model is able to predict 55% of human choices on completely unseen cards, thus showing a deep understanding of card quality and strategy.

Predictive change point detection for heterogeneous data

May 11, 2023

Abstract: A change point detection (CPD) framework assisted by a predictive machine learning model called "Predict and Compare" is introduced and characterised in relation to other state-of-the-art online CPD routines, which it outperforms in terms of false positive rate and out-of-control average run length. The method's focus is on improving standard methods from sequential analysis such as the CUSUM rule in terms of these quality measures. This is achieved by replacing typically used trend estimation functionals such as the running mean with more sophisticated predictive models (Predict step), and comparing their prognosis with actual data (Compare step). The two models used in the Predict step are the ARIMA model and the LSTM recurrent neural network. However, the framework is formulated in general terms, so as to allow the use of other prediction or comparison methods than those tested here. The power of the method is demonstrated in a tribological case study in which change points separating the run-in, steady-state, and divergent wear phases are detected in the regime of very few false positives.
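The Predict and Compare steps described in this abstract can be illustrated with a small sketch: a pluggable forecaster predicts the next value, and a one-sided CUSUM statistic accumulates the absolute forecast error. Everything concrete here is an assumption made for illustration: the function names, the `warmup`, `drift`, and `threshold` parameters, and in particular the moving-average forecaster, which merely stands in for the ARIMA and LSTM models named in the abstract.

```python
from typing import Callable, Optional, Sequence


def moving_average_forecast(history: Sequence[float], window: int = 5) -> float:
    """Stand-in predictor: mean of the most recent `window` observations."""
    recent = history[-window:]
    return sum(recent) / len(recent)


def predict_and_compare(series: Sequence[float],
                        predict: Callable[[Sequence[float]], float],
                        warmup: int, drift: float,
                        threshold: float) -> Optional[int]:
    """Return the index of the first detected change point, or None."""
    cusum = 0.0
    for t in range(warmup, len(series)):
        forecast = predict(series[:t])            # Predict step
        residual = abs(series[t] - forecast)      # Compare step
        # One-sided CUSUM on the residuals: small errors are absorbed by
        # `drift`, persistent large errors accumulate until the alarm fires.
        cusum = max(0.0, cusum + residual - drift)
        if cusum > threshold:
            return t
    return None


shifted = [0.0] * 20 + [5.0] * 10   # level shift at index 20
flat = [0.0] * 30
print(predict_and_compare(shifted, moving_average_forecast, 5, 0.5, 4.0))
print(predict_and_compare(flat, moving_average_forecast, 5, 0.5, 4.0))
```

Because `predict` is just a callable, a stronger forecaster can be swapped in without touching the comparison logic, which mirrors the abstract's point that the framework is formulated in general terms.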

Efficient learning of large sets of locally optimal classification rules

Jan 26, 2023

Abstract: Conventional rule learning algorithms aim at finding a set of simple rules, where each rule covers as many examples as possible. In this paper, we argue that the rules found in this way may not be the optimal explanations for each of the examples they cover. Instead, we propose an efficient algorithm that aims at finding the best rule covering each training example in a greedy optimization consisting of one specialization and one generalization loop. These locally optimal rules are collected and then filtered for a final rule set, which is much larger than the sets learned by conventional rule learning algorithms. A new example is classified by selecting the best among the rules that cover this example. In our experiments on small to very large datasets, the approach's average classification accuracy is higher than that of state-of-the-art rule learning algorithms. Moreover, the algorithm is highly efficient and can inherently be processed in parallel without affecting the learned rule set and, hence, the classification accuracy. We thus believe that it closes an important gap for large-scale classification rule induction.

* article, 40 pages, Machine Learning journal (2023)

Supervised and Reinforcement Learning from Observations in Reconnaissance Blind Chess

Aug 03, 2022

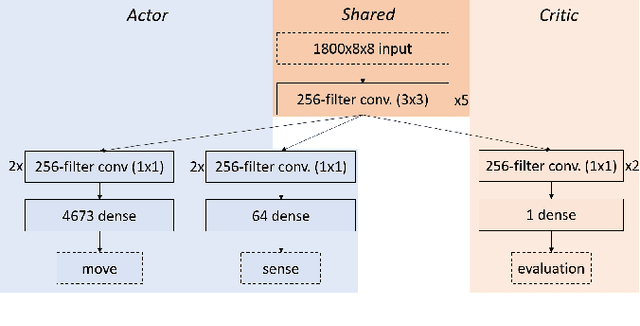

Abstract: In this work, we adapt a training approach inspired by the original AlphaGo system to play the imperfect information game of Reconnaissance Blind Chess. Using only the observations instead of a full description of the game state, we first train a supervised agent on publicly available game records. Next, we increase the performance of the agent through self-play with the on-policy reinforcement learning algorithm Proximal Policy Optimization. To avoid problems caused by the partial observability of game states, we do not use any search and only use the policy network to generate moves when playing. With this approach, we achieve an Elo rating of 1330 on the RBC leaderboard, which places our agent at position 27 at the time of this writing. We see that self-play significantly improves performance and that the agent plays acceptably well without search and without making assumptions about the true game state.

Quantity vs Quality: Investigating the Trade-Off between Sample Size and Label Reliability

Apr 20, 2022

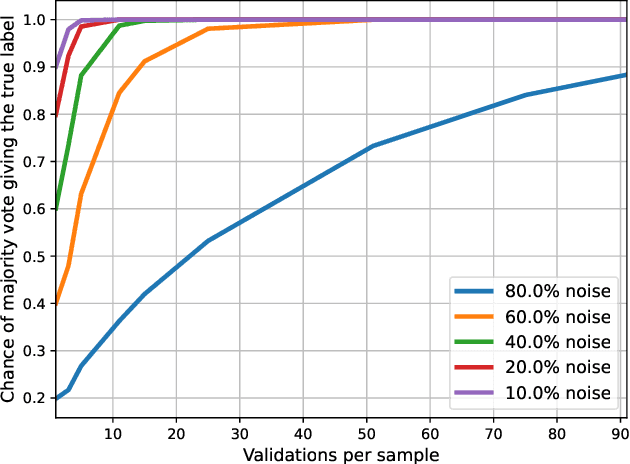

Abstract: In this paper, we study learning in probabilistic domains where the learner may receive incorrect labels but can improve the reliability of labels by repeatedly sampling them. In such a setting, one faces the problem of whether the fixed budget for obtaining training examples should be spent on obtaining only distinct examples or on improving the label quality of a smaller number of examples by re-sampling their labels. We motivate this problem in an application to compare the strength of poker hands where the training signal depends on the hidden community cards, and then study it in depth in an artificial setting where we insert controlled noise levels into the MNIST database. Our results show that with increasing levels of noise, resampling previous examples becomes increasingly important compared to obtaining new examples, as classifier performance deteriorates when the number of incorrect labels is too high. In addition, we propose two different validation strategies: switching from lower to higher validations over the course of training, and using chi-square statistics to approximate the confidence in obtained labels.
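The budget trade-off in this abstract can be made concrete with a small calculation. The sketch below is a simplification under stated assumptions, not the paper's experimental setup: labels are binary, each sampled label is independently correct with probability `p_correct`, and repeated samples are combined by majority vote over an odd number `k` of draws. The function names are hypothetical.

```python
from math import comb
from typing import List, Sequence, Tuple


def majority_vote_accuracy(p_correct: float, k: int) -> float:
    """Probability that the majority of k independent binary labels is correct."""
    if k < 1 or k % 2 == 0:
        raise ValueError("k must be a positive odd number")
    # Sum the binomial probabilities of more than half the samples being correct.
    return sum(comb(k, i) * p_correct ** i * (1 - p_correct) ** (k - i)
               for i in range(k // 2 + 1, k + 1))


def budget_options(budget: int, p_correct: float,
                   ks: Sequence[int] = (1, 3, 5)) -> List[Tuple[int, int, float]]:
    """For each k: (samples per example, number of examples, label accuracy)."""
    return [(k, budget // k, majority_vote_accuracy(p_correct, k)) for k in ks]


for k, n_examples, accuracy in budget_options(300, 0.7):
    print(f"k={k}: {n_examples} examples, label accuracy {accuracy:.3f}")
```

With a budget of 300 label samples and 70% label accuracy, moving from one to three samples per example cuts the number of distinct examples from 300 to 100 while raising label accuracy from 0.700 to 0.784. Which side of that trade wins depends on the learner and the noise level, which is the question the paper studies empirically.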

GausSetExpander: A Simple Approach for Entity Set Expansion

Feb 28, 2022

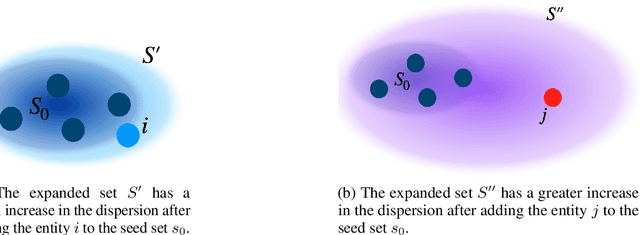

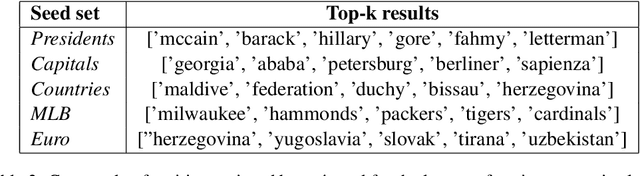

Abstract: Entity Set Expansion is an important NLP task that aims at expanding a small set of entities into a larger one with items from a large pool of candidates. In this paper, we propose GausSetExpander, an unsupervised approach based on optimal transport techniques. We propose to re-frame the problem as choosing the entity that best completes the seed set. For this, we interpret a set as an elliptical distribution with a centroid which represents the mean and a spread that is represented by the scale parameter. The best entity is the one that increases the spread of the set the least. We demonstrate the validity of our approach by comparing it to state-of-the-art approaches.
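The selection rule stated in this abstract (pick the entity that increases the spread of the set the least) can be sketched in a few lines. This is a loose illustration only: it measures spread as the sum of per-dimension variances around the centroid, which is a simple stand-in and not the optimal-transport formulation of the paper. The function names and the two-dimensional embeddings are made up for the example.

```python
from typing import Dict, List, Tuple

Vector = Tuple[float, ...]


def spread(points: List[Vector]) -> float:
    """Sum of per-dimension variances around the centroid (stand-in measure)."""
    n = len(points)
    total = 0.0
    for d in range(len(points[0])):
        mean = sum(p[d] for p in points) / n
        total += sum((p[d] - mean) ** 2 for p in points) / n
    return total


def best_completion(seed: Dict[str, Vector], candidates: Dict[str, Vector]) -> str:
    """Return the candidate whose addition increases the set's spread the least."""
    seed_points = list(seed.values())
    base = spread(seed_points)
    return min(candidates,
               key=lambda name: spread(seed_points + [candidates[name]]) - base)


# Made-up embeddings: two seed entities and two candidates.
seed = {"paris": (1.0, 1.0), "berlin": (1.2, 0.9)}
candidates = {"madrid": (1.1, 1.0), "banana": (5.0, -3.0)}
print(best_completion(seed, candidates))
```

Expanding a set would repeat this step, adding the selected entity to the seed set before scoring the remaining candidates again.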
