Irina Fedulova

Medical Image Captioning via Generative Pretrained Transformers

Sep 28, 2022

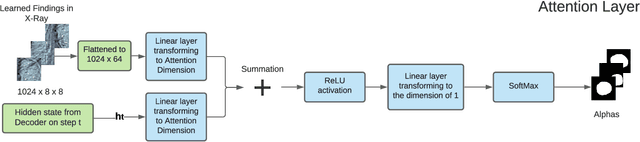

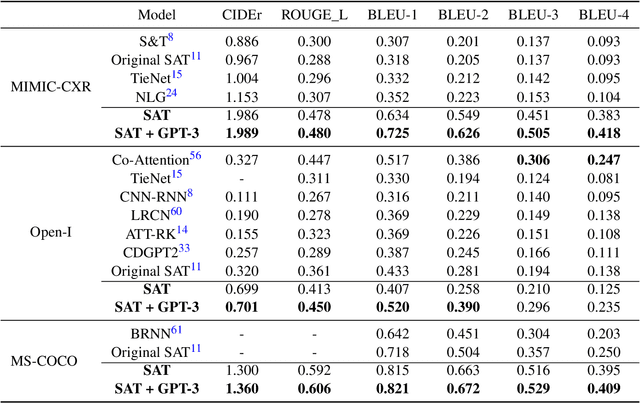

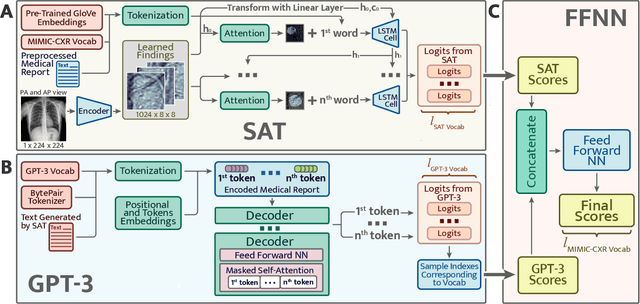

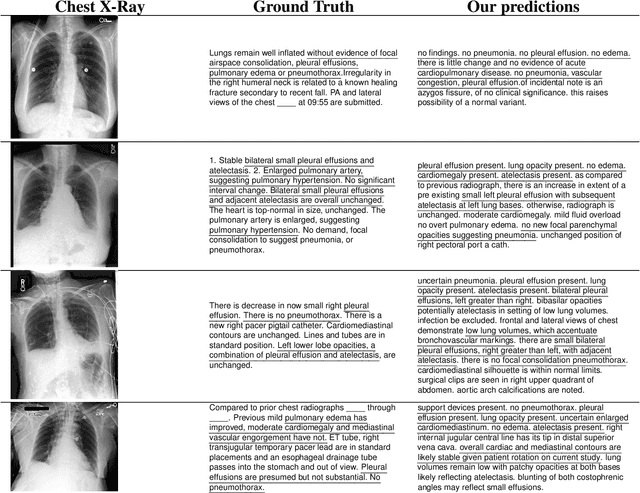

Abstract: The automatic clinical caption generation problem is addressed by a proposed model that combines the analysis of frontal chest X-ray scans with structured patient information from the radiology records. We combine two models, Show-Attend-Tell and GPT-3, to generate comprehensive and descriptive radiology records. The proposed combination generates a textual summary with the essential information about the pathologies found and their location, together with 2D heatmaps localizing each pathology on the original X-ray scans. The proposed model is tested on two medical datasets, Open-I and MIMIC-CXR, and on the general-purpose MS-COCO dataset. The results, measured with natural language assessment metrics, demonstrate that the approach is effectively applicable to chest X-ray image captioning.
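
The abstract reports results with natural language assessment metrics without naming them. BLEU, which scores clipped n-gram overlap between a generated caption and reference texts, is one metric widely used for image captioning. The following is a minimal sentence-level sketch for illustration only (uniform n-gram weights, no smoothing, whitespace tokens assumed); the function names are hypothetical, and this is not the evaluation code behind the reported results.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of the n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def sentence_bleu(candidate, references, max_n=2):
    """Sentence-level BLEU with uniform weights and no smoothing (illustrative sketch).

    candidate: list of tokens; references: non-empty list of token lists.
    """
    if not candidate:
        return 0.0
    log_precision_sum = 0.0
    for n in range(1, max_n + 1):
        cand_counts = ngrams(candidate, n)
        # Clip each candidate n-gram count by its maximum count in any single reference.
        max_ref_counts = Counter()
        for ref in references:
            for gram, count in ngrams(ref, n).items():
                max_ref_counts[gram] = max(max_ref_counts[gram], count)
        clipped = sum(min(count, max_ref_counts[gram]) for gram, count in cand_counts.items())
        total = sum(cand_counts.values())
        if total == 0 or clipped == 0:
            return 0.0  # without smoothing, any zero n-gram precision gives BLEU = 0
        log_precision_sum += math.log(clipped / total)
    # Brevity penalty against the reference length closest to the candidate length
    # (ties broken toward the shorter reference).
    c = len(candidate)
    r = min((abs(len(ref) - c), len(ref)) for ref in references)[1]
    brevity_penalty = 1.0 if c > r else math.exp(1 - r / c)
    # Geometric mean of the n-gram precisions, scaled by the brevity penalty.
    return brevity_penalty * math.exp(log_precision_sum / max_n)
```

For instance, `sentence_bleu("the heart is normal".split(), ["the heart size is normal".split()])` gives about 0.64: full unigram precision, bigram precision 2/3, and a brevity penalty because the candidate is one token shorter than the reference. Without smoothing, a caption sharing no n-gram of some order with the references scores 0, which is why smoothed or corpus-level variants are usually preferred for short texts.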

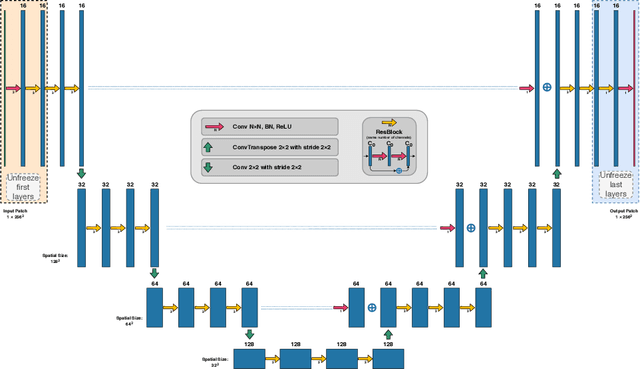

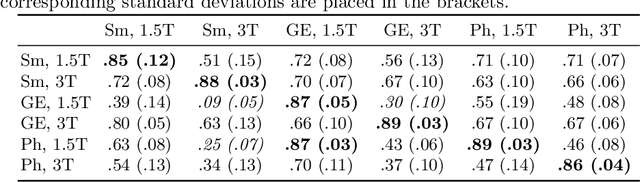

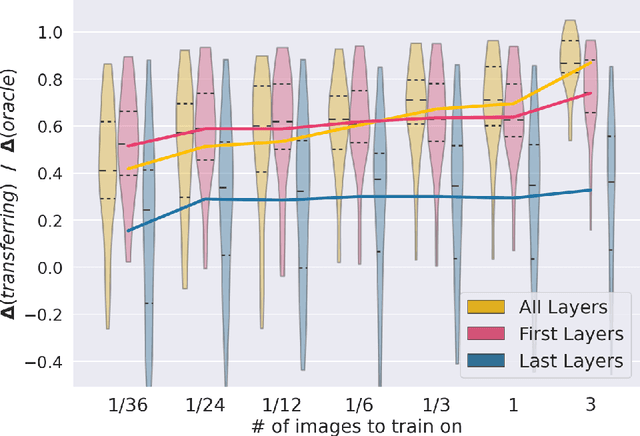

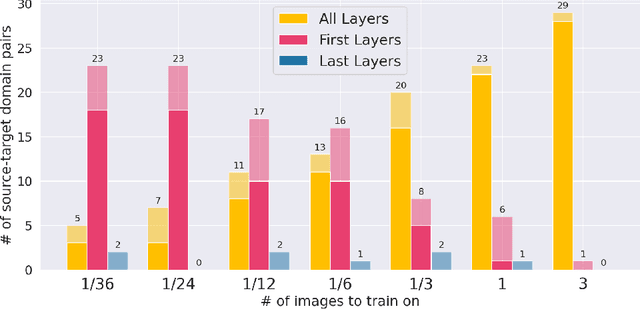

First U-Net Layers Contain More Domain Specific Information Than The Last Ones

Aug 17, 2020

Abstract: The appearance of MRI scans depends significantly on the scanning protocol and, consequently, on the data-collection institution. These variations between clinical sites result in dramatic drops in CNN segmentation quality on unseen domains. Many recently proposed MRI domain adaptation methods operate on the last CNN layers to suppress domain shift. At the same time, the core manifestation of MRI variability is a considerable diversity of image intensities. We hypothesize that these differences can be eliminated by modifying the first layers rather than the last ones. To validate this simple idea, we conducted a set of experiments with brain MRI scans from six domains. Our results demonstrate that 1) domain shift may deteriorate the quality even for a simple brain extraction segmentation task (the surface Dice score drops from 0.85-0.89 to as low as 0.09); 2) fine-tuning the first layers significantly outperforms fine-tuning the last layers in almost all supervised domain adaptation setups. Moreover, fine-tuning the first layers is a better strategy than fine-tuning the whole network when the amount of annotated data from the new domain is strictly limited.
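
The domain-shift drop above is measured with the surface Dice score, which compares segmentation boundaries rather than volumes. As a rough illustration, the sketch below computes a simplified 2D variant on binary masks: the fraction of boundary pixels of each mask lying within a distance tolerance of the other mask's boundary. The 4-connected boundary extraction, the brute-force distance search, the default tolerance of one pixel, and the function names are assumptions; the abstract does not state the tolerance used, and practical implementations work on 3D volumes in physical units and weight boundary elements by their area.

```python
import math


def boundary(mask):
    """Return the set of (row, col) foreground pixels that have a 4-connected background neighbour.

    Pixels outside the image are treated as background.
    """
    rows, cols = len(mask), len(mask[0])
    result = set()
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c]:
                continue
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                if not (0 <= nr < rows and 0 <= nc < cols) or not mask[nr][nc]:
                    result.add((r, c))
                    break
    return result


def surface_dice(mask_a, mask_b, tolerance=1.0):
    """Simplified 2D surface Dice: share of boundary pixels within `tolerance` of the other boundary."""
    surf_a, surf_b = boundary(mask_a), boundary(mask_b)
    if not surf_a and not surf_b:
        return 1.0  # both masks empty: treated as perfect agreement (a convention)
    if not surf_a or not surf_b:
        return 0.0

    def count_within(points, other):
        # Brute-force nearest-boundary search; adequate only for small illustrative masks.
        return sum(
            1 for (r, c) in points
            if min(math.hypot(r - orow, c - ocol) for (orow, ocol) in other) <= tolerance
        )

    matched = count_within(surf_a, surf_b) + count_within(surf_b, surf_a)
    return matched / (len(surf_a) + len(surf_b))
```

Shifting a 3x3 square one pixel sideways halves the score at zero tolerance but leaves it at 1.0 with a one-pixel tolerance, which is the intent of the metric: boundary disagreements smaller than the tolerance are not penalized.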

Anomaly Detection with Deep Perceptual Autoencoders

Jun 23, 2020

Abstract: Anomaly detection is the problem of recognizing abnormal inputs based on seen examples of normal data. Despite recent advances in deep learning for recognizing image anomalies, these methods still prove incapable of handling complex medical images, such as barely visible abnormalities in chest X-rays and metastases in lymph nodes. To address this problem, we introduce a new, powerful method of image anomaly detection. It relies on the classical autoencoder approach, with a redesigned training pipeline to handle high-resolution, complex images and a robust way of computing an image abnormality score. We revisit the problem statement of fully unsupervised anomaly detection, in which no abnormal examples at all are provided during model setup. We propose to relax this unrealistic assumption by using a very small number of anomalies of confined variability merely to initiate the search for the model's hyperparameters. We evaluate our solution on natural image datasets with a known benchmark, as well as on two medical datasets containing radiology and digital pathology images. The proposed approach sets a new strong baseline for image anomaly detection and outperforms state-of-the-art approaches in complex medical image analysis tasks.
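
The relaxed setting above uses a handful of anomalies only to guide the hyperparameter search. One plausible way to make that concrete, assumed here rather than taken from the paper, is to rank candidate settings by ROC AUC on a validation set of normal images plus the few available anomalies. The sketch computes AUC through the Mann-Whitney statistic; `score_fn` and the other names are a hypothetical interface standing in for the trained autoencoder's abnormality score under a given setting.

```python
def roc_auc(normal_scores, anomaly_scores):
    """ROC AUC via the Mann-Whitney statistic: P(anomaly score > normal score), ties count 1/2."""
    wins = 0.0
    for a in anomaly_scores:
        for n in normal_scores:
            if a > n:
                wins += 1.0
            elif a == n:
                wins += 0.5
    return wins / (len(anomaly_scores) * len(normal_scores))


def select_hyperparameter(score_fn, candidates, normal_val, anomaly_val):
    """Return (best_candidate, best_auc) over the candidate settings.

    score_fn(candidate, x) -> abnormality score, higher meaning more abnormal
    (hypothetical interface; in practice it would wrap a model trained with that setting).
    """
    best, best_auc = None, -1.0
    for cand in candidates:
        normal_scores = [score_fn(cand, x) for x in normal_val]
        anomaly_scores = [score_fn(cand, x) for x in anomaly_val]
        auc = roc_auc(normal_scores, anomaly_scores)
        if auc > best_auc:
            best, best_auc = cand, auc
    return best, best_auc
```

As a toy check, with `score_fn = lambda k, x: abs(x[k])` (the "hyperparameter" simply picks which input feature to score) and anomalies that differ from the normal points only in the second feature, the search returns feature 1 with an AUC of 1.0. AUC needs no decision threshold, which may suit a setting with too few anomalies to calibrate one.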

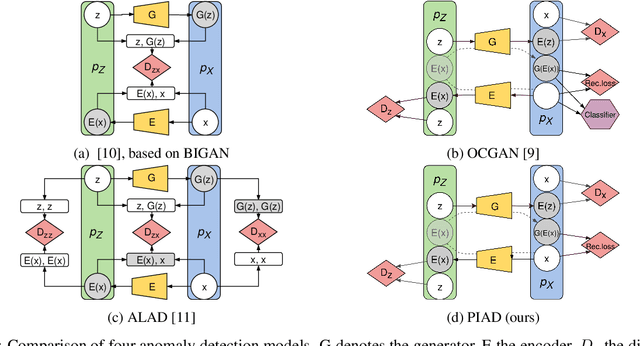

Perceptual Image Anomaly Detection

Sep 12, 2019

Abstract: We present a novel method for image anomaly detection, the task in which algorithms that use samples drawn from some distribution of "normal" data aim to detect out-of-distribution (abnormal) samples. Our approach combines an encoder and a generator to map an image distribution to a predefined latent distribution and vice versa. It leverages Generative Adversarial Networks to learn these data distributions and uses a perceptual loss to detect image abnormality. To accomplish this goal, we first introduce a new similarity metric that expresses the perceived similarity between images and is robust to changes in image contrast. Second, we introduce a novel approach for selecting the weights of a multi-objective loss function (image reconstruction and distribution mapping) in the absence of a validation dataset for hyperparameter tuning. After training, our model measures the abnormality of an input image as the perceptual dissimilarity between it and the closest generated image of the modeled data distribution. The proposed approach is extensively evaluated on several publicly available image benchmarks and achieves state-of-the-art performance.
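
The abstract does not give the form of the contrast-robust similarity metric, and the paper's metric operates on deep perceptual features. The sketch below is therefore only an assumed, simplified stand-in on plain feature vectors: standardizing each vector to zero mean and unit variance before comparing makes the distance insensitive to global brightness and contrast changes (any positive affine intensity change). The abnormality score then takes the distance to the closest of a finite set of generated samples; using a fixed sample set in place of the model's generated distribution is our simplification, and all names are hypothetical.

```python
import math


def standardize(features, eps=1e-8):
    """Zero-mean, unit-variance normalisation of one feature vector.

    Cancels any positive affine intensity change a * x + b (a > 0), up to the small eps.
    """
    n = len(features)
    mean = sum(features) / n
    std = math.sqrt(sum((f - mean) ** 2 for f in features) / n)
    return [(f - mean) / (std + eps) for f in features]


def contrast_invariant_distance(x, y):
    """Mean squared difference between the standardised versions of two equal-length vectors."""
    xs, ys = standardize(x), standardize(y)
    return sum((a - b) ** 2 for a, b in zip(xs, ys)) / len(xs)


def abnormality_score(image_features, generated_features):
    """Distance from the input to its closest generated sample; lower means more normal."""
    return min(contrast_invariant_distance(image_features, g) for g in generated_features)
```

With `x = [0, 1, 2, 3]`, the contrast-stretched copy `[2 * v + 3 for v in x]` is at a distance close to 0, while the reversed vector `[3, 2, 1, 0]` is at a distance close to 4, so contrast changes alone do not make an image look abnormal.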
