Hye-Young Paik

Ambiguity Resolution in Text-to-Structured Data Mapping

May 16, 2025Abstract:Ambiguity in natural language is a significant obstacle for achieving accurate text to structured data mapping through large language models (LLMs), which affects the performance of tasks such as mapping text to agentic tool calling and text-to-SQL queries. Existing methods of ambiguity handling either exploit ReACT framework to produce the correct mapping through trial and error, or supervised fine tuning to guide models to produce a biased mapping to improve certain tasks. In this paper, we adopt a different approach that characterizes the representation difference of ambiguous text in the latent space and leverage the difference to identify ambiguity before mapping them to structured data. To detect ambiguity of a sentence, we focused on the relationship between ambiguous questions and their interpretations and what cause the LLM ignore multiple interpretations. Different to the distance calculated by dense embedding vectors, we utilize the observation that ambiguity is caused by concept missing in latent space of LLM to design a new distance measurement, computed through the path kernel by the integral of gradient values for each concepts from sparse-autoencoder (SAE) under each state. We identify patterns to distinguish ambiguous questions with this measurement. Based on our observation, We propose a new framework to improve the performance of LLMs on ambiguous agentic tool calling through missing concepts prediction.

Decentralised Governance for Foundation Model based AI Systems: Exploring the Role of Blockchain in Responsible AI

Aug 31, 2023Abstract:Foundation models including large language models (LLMs) are increasingly attracting interest worldwide for their distinguished capabilities and potential to perform a wide variety of tasks. Nevertheless, people are concerned about whether foundation model based AI systems are properly governed to ensure trustworthiness of foundation model based AI systems and to prevent misuse that could harm humans, society and the environment. In this paper, we identify eight governance challenges of foundation model based AI systems regarding the three fundamental dimensions of governance: decision rights, incentives, and accountability. Furthermore, we explore the potential of blockchain as a solution to address the challenges by providing a distributed ledger to facilitate decentralised governance. We present an architecture that demonstrates how blockchain can be leveraged to realise governance in foundation model based AI systems.

Profiler: Profile-Based Model to Detect Phishing Emails

Aug 18, 2022

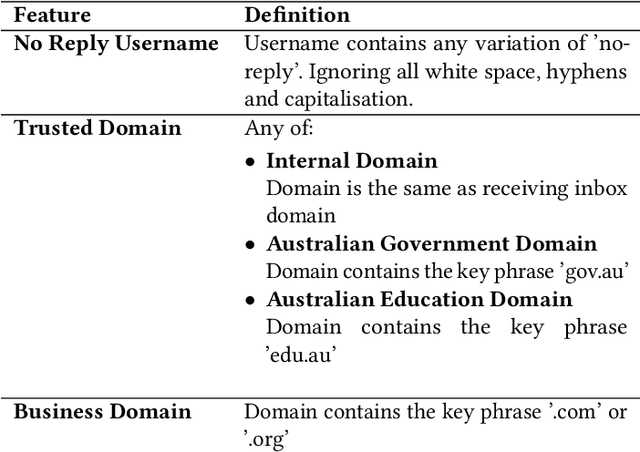

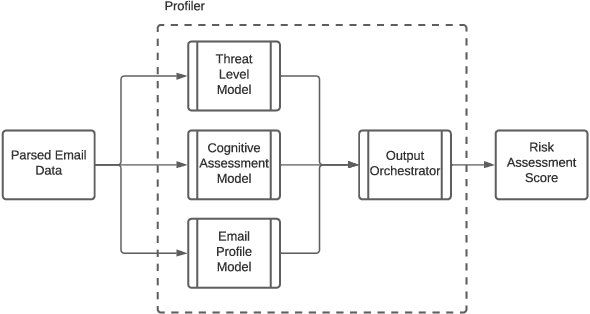

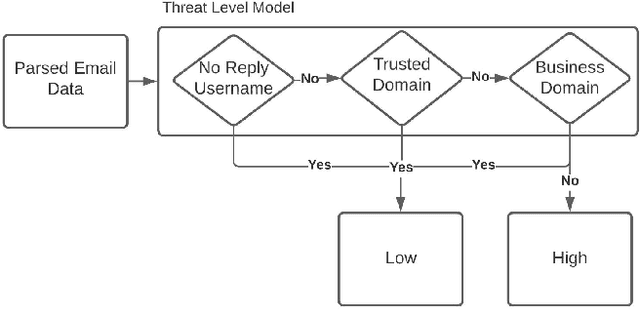

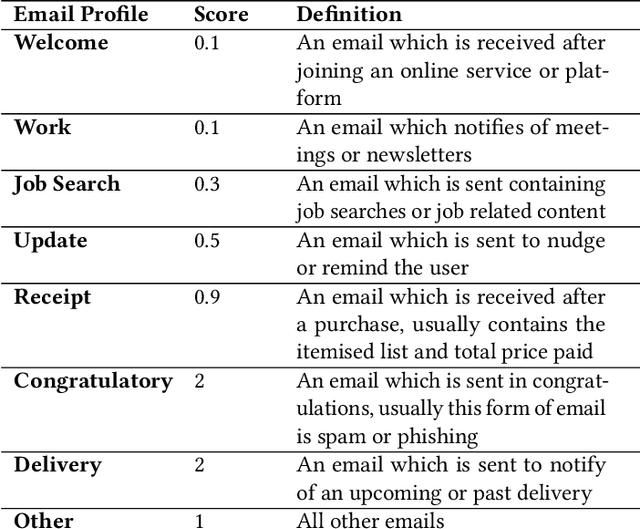

Abstract:Email phishing has become more prevalent and grows more sophisticated over time. To combat this rise, many machine learning (ML) algorithms for detecting phishing emails have been developed. However, due to the limited email data sets on which these algorithms train, they are not adept at recognising varied attacks and, thus, suffer from concept drift; attackers can introduce small changes in the statistical characteristics of their emails or websites to successfully bypass detection. Over time, a gap develops between the reported accuracy from literature and the algorithm's actual effectiveness in the real world. This realises itself in frequent false positive and false negative classifications. To this end, we propose a multidimensional risk assessment of emails to reduce the feasibility of an attacker adapting their email and avoiding detection. This horizontal approach to email phishing detection profiles an incoming email on its main features. We develop a risk assessment framework that includes three models which analyse an email's (1) threat level, (2) cognitive manipulation, and (3) email type, which we combine to return the final risk assessment score. The Profiler does not require large data sets to train on to be effective and its analysis of varied email features reduces the impact of concept drift. Our Profiler can be used in conjunction with ML approaches, to reduce their misclassifications or as a labeller for large email data sets in the training stage. We evaluate the efficacy of the Profiler against a machine learning ensemble using state-of-the-art ML algorithms on a data set of 9000 legitimate and 900 phishing emails from a large Australian research organisation. Our results indicate that the Profiler's mitigates the impact of concept drift, and delivers 30% less false positive and 25% less false negative email classifications over the ML ensemble's approach.

* 12 pages

A Decision Model for Federated Learning Architecture Pattern Selection

Apr 28, 2022

Abstract:Federated learning is growing fast in both academia and industry to resolve data hungriness and privacy issues in machine learning. A federated learning system being widely distributed with different components and stakeholders requires software system design thinking. For instance, multiple patterns and tactics have been summarised by researchers that cover various aspects, from client management, training configuration, model deployment, etc. However, the multitude of patterns leaves the designers confused about when and which pattern to adopt or adapt. Therefore, in this paper, we present a set of decision models to assist designers and architects who have limited knowledge in federated learning, in selecting architectural patterns for federated learning architecture design. Each decision model maps functional and non-functional requirements of federated learning systems to a set of patterns. we also clarify the trade-offs that may be implicit in the patterns. We evaluated the decision model through a set of interviews with practitioners to assess the correctness and usefulness in guiding the architecture design process through various design decision options.

Blockchain-based Trustworthy Federated Learning Architecture

Aug 16, 2021

Abstract:Federated learning is an emerging privacy-preserving AI technique where clients (i.e., organisations or devices) train models locally and formulate a global model based on the local model updates without transferring local data externally. However, federated learning systems struggle to achieve trustworthiness and embody responsible AI principles. In particular, federated learning systems face accountability and fairness challenges due to multi-stakeholder involvement and heterogeneity in client data distribution. To enhance the accountability and fairness of federated learning systems, we present a blockchain-based trustworthy federated learning architecture. We first design a smart contract-based data-model provenance registry to enable accountability. Additionally, we propose a weighted fair data sampler algorithm to enhance fairness in training data. We evaluate the proposed approach using a COVID-19 X-ray detection use case. The evaluation results show that the approach is feasible to enable accountability and improve fairness. The proposed algorithm can achieve better performance than the default federated learning setting in terms of the model's generalisation and accuracy.

FLRA: A Reference Architecture for Federated Learning Systems

Jun 22, 2021

Abstract:Federated learning is an emerging machine learning paradigm that enables multiple devices to train models locally and formulate a global model, without sharing the clients' local data. A federated learning system can be viewed as a large-scale distributed system, involving different components and stakeholders with diverse requirements and constraints. Hence, developing a federated learning system requires both software system design thinking and machine learning knowledge. Although much effort has been put into federated learning from the machine learning perspectives, our previous systematic literature review on the area shows that there is a distinct lack of considerations for software architecture design for federated learning. In this paper, we propose FLRA, a reference architecture for federated learning systems, which provides a template design for federated learning-based solutions. The proposed FLRA reference architecture is based on an extensive review of existing patterns of federated learning systems found in the literature and existing industrial implementation. The FLRA reference architecture consists of a pool of architectural patterns that could address the frequently recurring design problems in federated learning architectures. The FLRA reference architecture can serve as a design guideline to assist architects and developers with practical solutions for their problems, which can be further customised.

A Systematic Literature Review on Federated Machine Learning: From A Software Engineering Perspective

Jul 27, 2020

Abstract:Federated learning is an emerging machine learning paradigm where multiple clients train models locally to formulate a global model under the coordination of a central server. To identify the state-of-the-art in federated learning from a software engineering perspective, we performed a systematic literature review with the extracted 231 primary studies. The results show that most of the known motivations of federated learning appear to be the most studied federated learning challenges, including data privacy, communication efficiency, and statistical heterogeneity. Also, there are only a few real-world applications of federated learning. More studies are needed before production-level adoption can take place

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge