Hatice Savas

Predicting Risk of Pulmonary Fibrosis Formation in PASC Patients

May 15, 2025Abstract:While the acute phase of the COVID-19 pandemic has subsided, its long-term effects persist through Post-Acute Sequelae of COVID-19 (PASC), commonly known as Long COVID. There remains substantial uncertainty regarding both its duration and optimal management strategies. PASC manifests as a diverse array of persistent or newly emerging symptoms--ranging from fatigue, dyspnea, and neurologic impairments (e.g., brain fog), to cardiovascular, pulmonary, and musculoskeletal abnormalities--that extend beyond the acute infection phase. This heterogeneous presentation poses substantial challenges for clinical assessment, diagnosis, and treatment planning. In this paper, we focus on imaging findings that may suggest fibrotic damage in the lungs, a critical manifestation characterized by scarring of lung tissue, which can potentially affect long-term respiratory function in patients with PASC. This study introduces a novel multi-center chest CT analysis framework that combines deep learning and radiomics for fibrosis prediction. Our approach leverages convolutional neural networks (CNNs) and interpretable feature extraction, achieving 82.2% accuracy and 85.5% AUC in classification tasks. We demonstrate the effectiveness of Grad-CAM visualization and radiomics-based feature analysis in providing clinically relevant insights for PASC-related lung fibrosis prediction. Our findings highlight the potential of deep learning-driven computational methods for early detection and risk assessment of PASC-related lung fibrosis--presented for the first time in the literature.

Shifts in Doctors' Eye Movements Between Real and AI-Generated Medical Images

Apr 21, 2025Abstract:Eye-tracking analysis plays a vital role in medical imaging, providing key insights into how radiologists visually interpret and diagnose clinical cases. In this work, we first analyze radiologists' attention and agreement by measuring the distribution of various eye-movement patterns, including saccades direction, amplitude, and their joint distribution. These metrics help uncover patterns in attention allocation and diagnostic strategies. Furthermore, we investigate whether and how doctors' gaze behavior shifts when viewing authentic (Real) versus deep-learning-generated (Fake) images. To achieve this, we examine fixation bias maps, focusing on first, last, short, and longest fixations independently, along with detailed saccades patterns, to quantify differences in gaze distribution and visual saliency between authentic and synthetic images.

Eyes Tell the Truth: GazeVal Highlights Shortcomings of Generative AI in Medical Imaging

Mar 26, 2025

Abstract:The demand for high-quality synthetic data for model training and augmentation has never been greater in medical imaging. However, current evaluations predominantly rely on computational metrics that fail to align with human expert recognition. This leads to synthetic images that may appear realistic numerically but lack clinical authenticity, posing significant challenges in ensuring the reliability and effectiveness of AI-driven medical tools. To address this gap, we introduce GazeVal, a practical framework that synergizes expert eye-tracking data with direct radiological evaluations to assess the quality of synthetic medical images. GazeVal leverages gaze patterns of radiologists as they provide a deeper understanding of how experts perceive and interact with synthetic data in different tasks (i.e., diagnostic or Turing tests). Experiments with sixteen radiologists revealed that 96.6% of the generated images (by the most recent state-of-the-art AI algorithm) were identified as fake, demonstrating the limitations of generative AI in producing clinically accurate images.

Mortality Prediction of Pulmonary Embolism Patients with Deep Learning and XGBoost

Nov 27, 2024

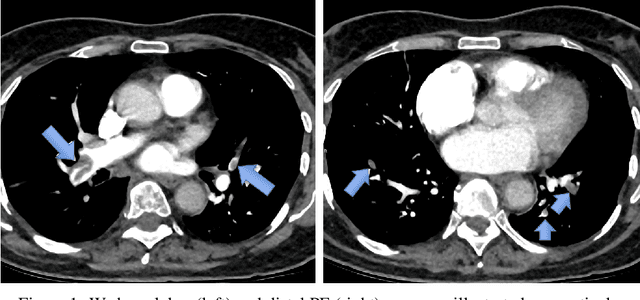

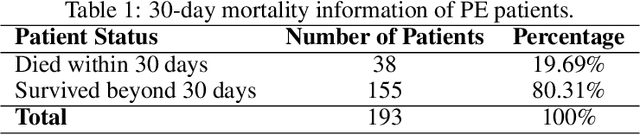

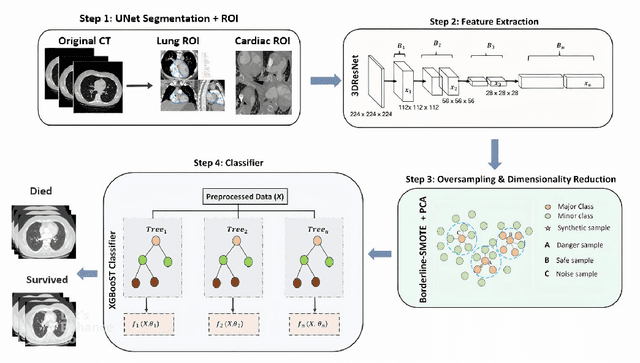

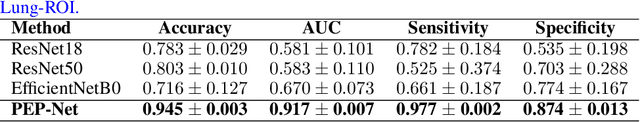

Abstract:Pulmonary Embolism (PE) is a serious cardiovascular condition that remains a leading cause of mortality and critical illness, underscoring the need for enhanced diagnostic strategies. Conventional clinical methods have limited success in predicting 30-day in-hospital mortality of PE patients. In this study, we present a new algorithm, called PEP-Net, for 30-day mortality prediction of PE patients based on the initial imaging data (CT) that opportunistically integrates a 3D Residual Network (3DResNet) with Extreme Gradient Boosting (XGBoost) algorithm with patient level binary labels without annotations of the emboli and its extent. Our proposed system offers a comprehensive prediction strategy by handling class imbalance problems, reducing overfitting via regularization, and reducing the prediction variance for more stable predictions. PEP-Net was tested in a cohort of 193 volumetric CT scans diagnosed with Acute PE, and it demonstrated a superior performance by significantly outperforming baseline models (76-78\%) with an accuracy of 94.5\% (+/-0.3) and 94.0\% (+/-0.7) when the input image is either lung region (Lung-ROI) or heart region (Cardiac-ROI). Our results advance PE prognostics by using only initial imaging data, setting a new benchmark in the field. While purely deep learning models have become the go-to for many medical classification (diagnostic) tasks, combined ResNet and XGBoost models herein outperform sole deep learning models due to a potential reason for having lack of enough data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge