Haobin Tan

UpDLRM: Accelerating Personalized Recommendation using Real-World PIM Architecture

Jun 20, 2024

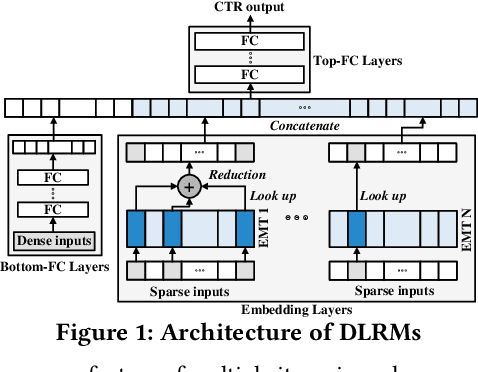

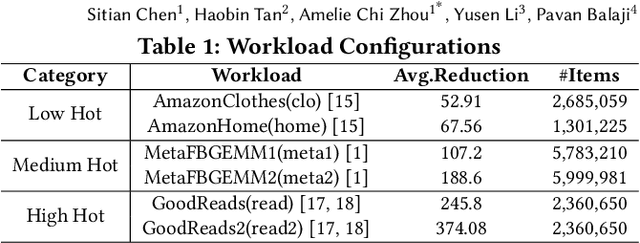

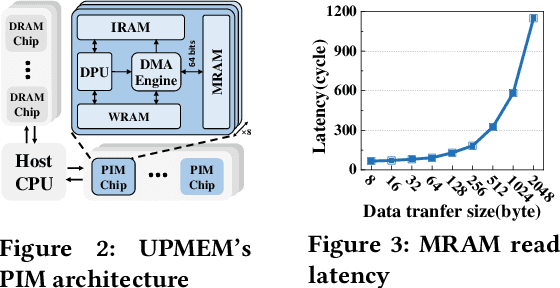

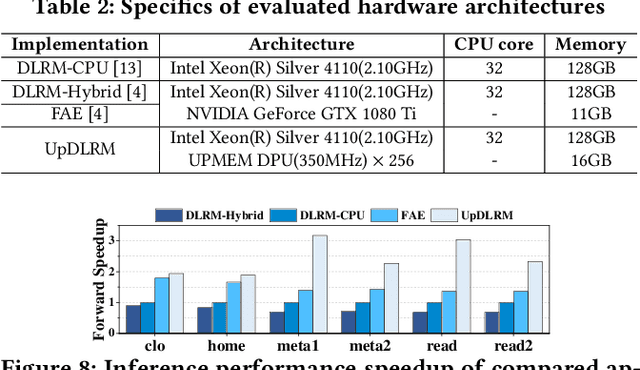

Abstract:Deep Learning Recommendation Models (DLRMs) have gained popularity in recommendation systems due to their effectiveness in handling large-scale recommendation tasks. The embedding layers of DLRMs have become the performance bottleneck due to their intensive needs on memory capacity and memory bandwidth. In this paper, we propose UpDLRM, which utilizes real-world processingin-memory (PIM) hardware, UPMEM DPU, to boost the memory bandwidth and reduce recommendation latency. The parallel nature of the DPU memory can provide high aggregated bandwidth for the large number of irregular memory accesses in embedding lookups, thus offering great potential to reduce the inference latency. To fully utilize the DPU memory bandwidth, we further studied the embedding table partitioning problem to achieve good workload-balance and efficient data caching. Evaluations using real-world datasets show that, UpDLRM achieves much lower inference time for DLRM compared to both CPU-only and CPU-GPU hybrid counterparts.

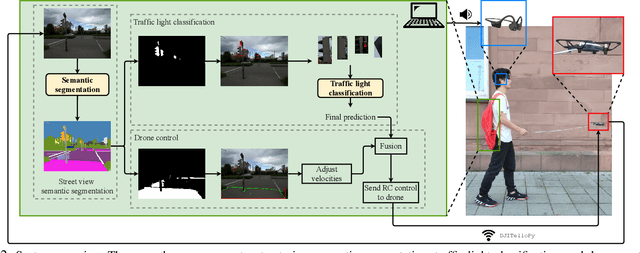

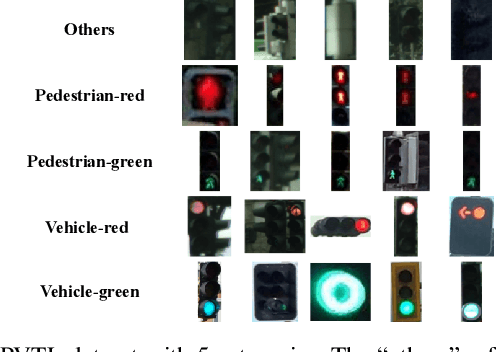

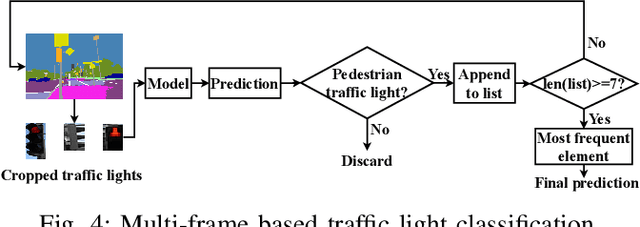

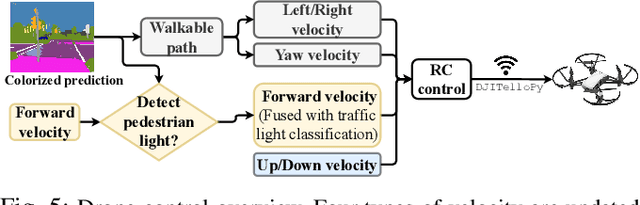

Flying Guide Dog: Walkable Path Discovery for the Visually Impaired Utilizing Drones and Transformer-based Semantic Segmentation

Aug 16, 2021

Abstract:Lacking the ability to sense ambient environments effectively, blind and visually impaired people (BVIP) face difficulty in walking outdoors, especially in urban areas. Therefore, tools for assisting BVIP are of great importance. In this paper, we propose a novel "flying guide dog" prototype for BVIP assistance using drone and street view semantic segmentation. Based on the walkable areas extracted from the segmentation prediction, the drone can adjust its movement automatically and thus lead the user to walk along the walkable path. By recognizing the color of pedestrian traffic lights, our prototype can help the user to cross a street safely. Furthermore, we introduce a new dataset named Pedestrian and Vehicle Traffic Lights (PVTL), which is dedicated to traffic light recognition. The result of our user study in real-world scenarios shows that our prototype is effective and easy to use, providing new insight into BVIP assistance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge