Hannu Tenhunen

Asynchronous Corner Tracking Algorithm based on Lifetime of Events for DAVIS Cameras

Oct 29, 2020

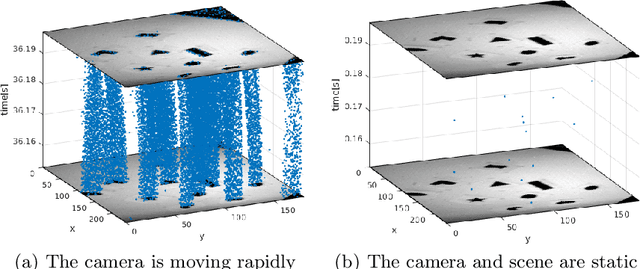

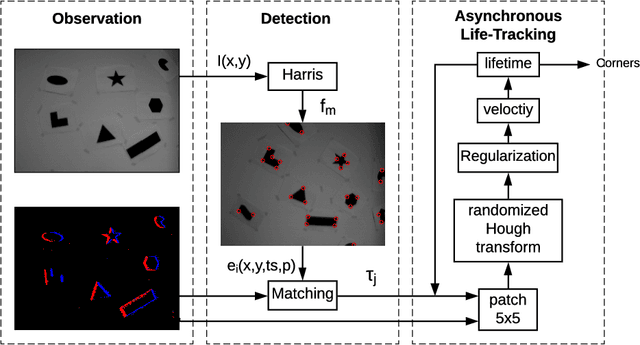

Abstract:Event cameras, i.e., the Dynamic and Active-pixel Vision Sensor (DAVIS) ones, capture the intensity changes in the scene and generates a stream of events in an asynchronous fashion. The output rate of such cameras can reach up to 10 million events per second in high dynamic environments. DAVIS cameras use novel vision sensors that mimic human eyes. Their attractive attributes, such as high output rate, High Dynamic Range (HDR), and high pixel bandwidth, make them an ideal solution for applications that require high-frequency tracking. Moreover, applications that operate in challenging lighting scenarios can exploit the high HDR of event cameras, i.e., 140 dB compared to 60 dB of traditional cameras. In this paper, a novel asynchronous corner tracking method is proposed that uses both events and intensity images captured by a DAVIS camera. The Harris algorithm is used to extract features, i.e., frame-corners from keyframes, i.e., intensity images. Afterward, a matching algorithm is used to extract event-corners from the stream of events. Events are solely used to perform asynchronous tracking until the next keyframe is captured. Neighboring events, within a window size of 5x5 pixels around the event-corner, are used to calculate the velocity and direction of extracted event-corners by fitting the 2D planar using a randomized Hough transform algorithm. Experimental evaluation showed that our approach is able to update the location of the extracted corners up to 100 times during the blind time of traditional cameras, i.e., between two consecutive intensity images.

Night vision obstacle detection and avoidance based on Bio-Inspired Vision Sensors

Oct 29, 2020

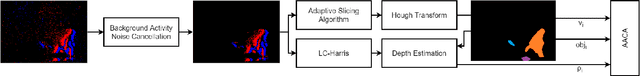

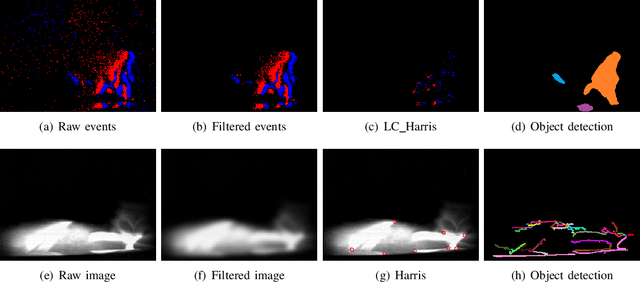

Abstract:Moving towards autonomy, unmanned vehicles rely heavily on state-of-the-art collision avoidance systems (CAS). However, the detection of obstacles especially during night-time is still a challenging task since the lighting conditions are not sufficient for traditional cameras to function properly. Therefore, we exploit the powerful attributes of event-based cameras to perform obstacle detection in low lighting conditions. Event cameras trigger events asynchronously at high output temporal rate with high dynamic range of up to 120 $dB$. The algorithm filters background activity noise and extracts objects using robust Hough transform technique. The depth of each detected object is computed by triangulating 2D features extracted utilising LC-Harris. Finally, asynchronous adaptive collision avoidance (AACA) algorithm is applied for effective avoidance. Qualitative evaluation is compared using event-camera and traditional camera.

Dynamic Resource-aware Corner Detection for Bio-inspired Vision Sensors

Oct 29, 2020

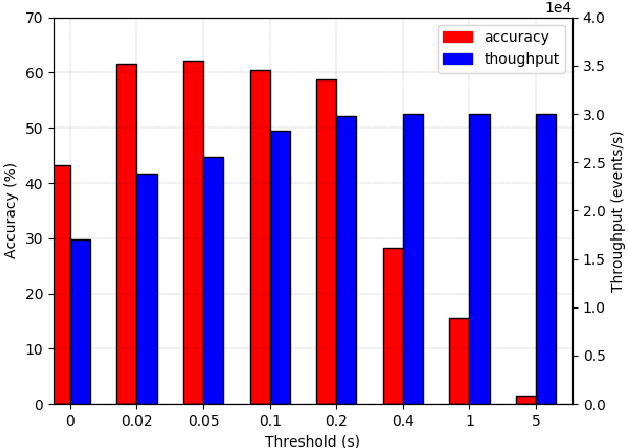

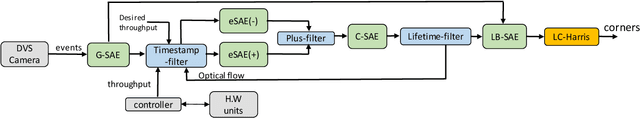

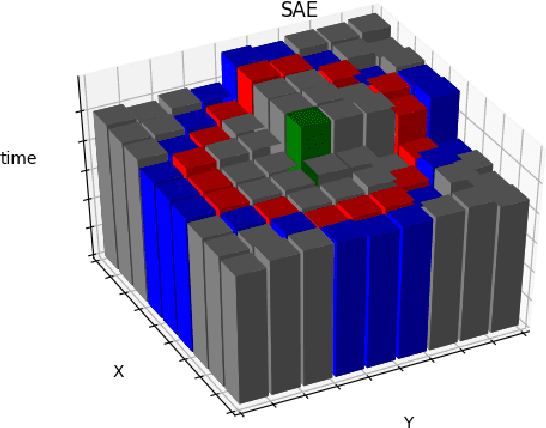

Abstract:Event-based cameras are vision devices that transmit only brightness changes with low latency and ultra-low power consumption. Such characteristics make event-based cameras attractive in the field of localization and object tracking in resource-constrained systems. Since the number of generated events in such cameras is huge, the selection and filtering of the incoming events are beneficial from both increasing the accuracy of the features and reducing the computational load. In this paper, we present an algorithm to detect asynchronous corners from a stream of events in real-time on embedded systems. The algorithm is called the Three Layer Filtering-Harris or TLF-Harris algorithm. The algorithm is based on an events' filtering strategy whose purpose is 1) to increase the accuracy by deliberately eliminating some incoming events, i.e., noise, and 2) to improve the real-time performance of the system, i.e., preserving a constant throughput in terms of input events per second, by discarding unnecessary events with a limited accuracy loss. An approximation of the Harris algorithm, in turn, is used to exploit its high-quality detection capability with a low-complexity implementation to enable seamless real-time performance on embedded computing platforms. The proposed algorithm is capable of selecting the best corner candidate among neighbors and achieves an average execution time savings of 59 % compared with the conventional Harris score. Moreover, our approach outperforms the competing methods, such as eFAST, eHarris, and FA-Harris, in terms of real-time performance, and surpasses Arc* in terms of accuracy.

Dynamic Formation Reshaping Based on Point Set Registration in a Swarm of Drones

Oct 29, 2020

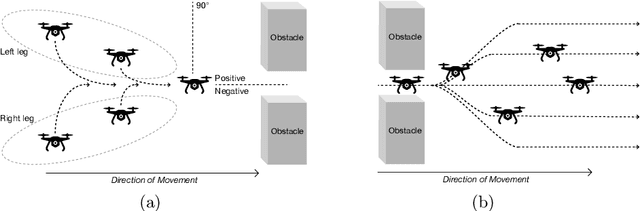

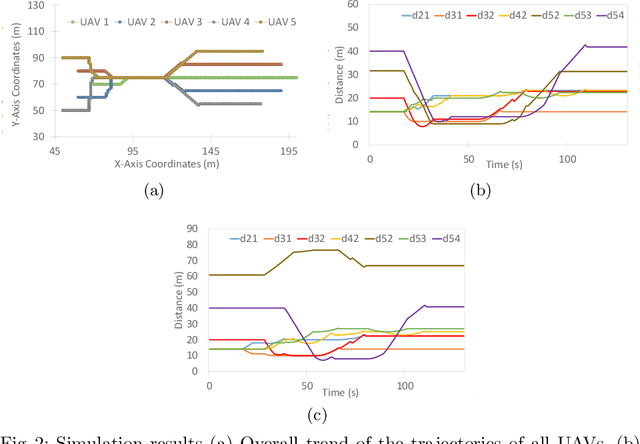

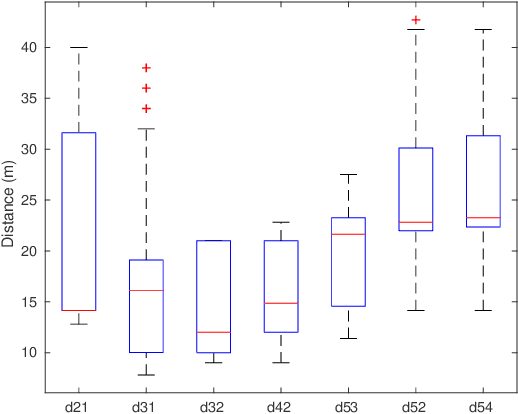

Abstract:This work focuses on the formation reshaping in an optimized manner in autonomous swarm of drones. Here, the two main problems are: 1) how to break and reshape the initial formation in an optimal manner, and 2) how to do such reformation while minimizing the overall deviation of the drones and the overall time, i.e., without slowing down. To address the first problem, we introduce a set of routines for the drones/agents to follow while reshaping to a secondary formation shape. And the second problem is resolved by utilizing the temperature function reduction technique, originally used in the point set registration process. The goal is to be able to dynamically reform the shape of multi-agent based swarm in near-optimal manner while going through narrow openings between, for instance obstacles, and then bringing the agents back to their original shape after passing through the narrow passage using point set registration technique.

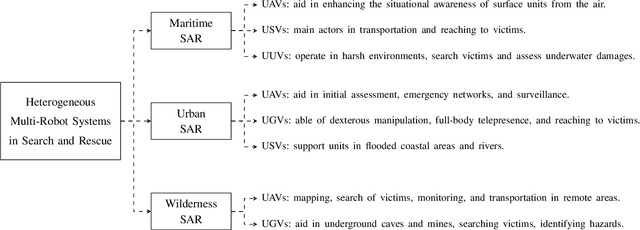

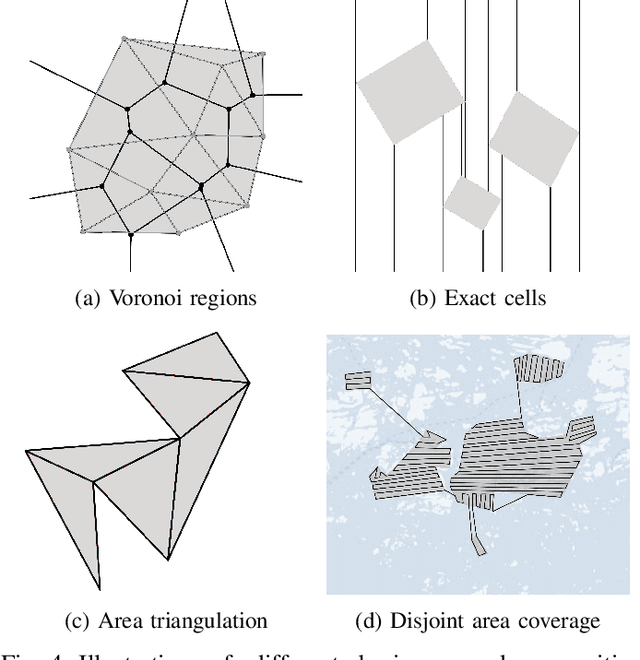

Collaborative Multi-Robot Systems for Search and Rescue: Coordination and Perception

Aug 28, 2020

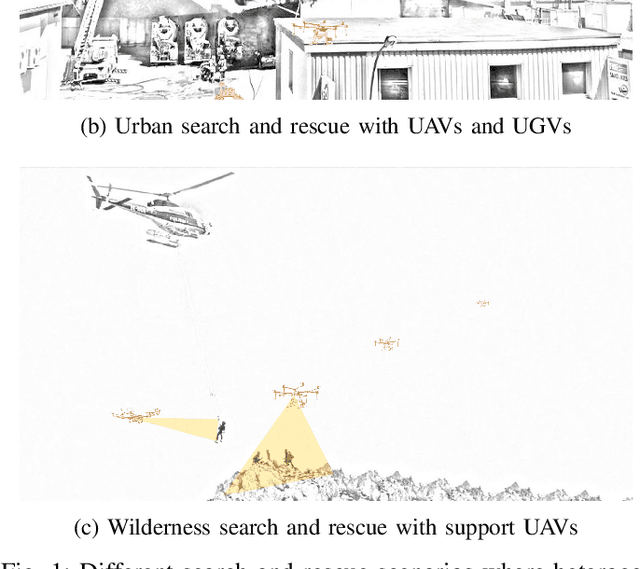

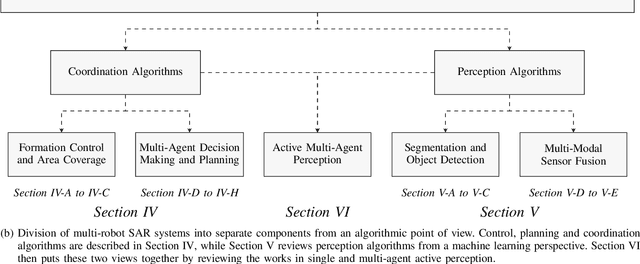

Abstract:Autonomous or teleoperated robots have been playing increasingly important roles in civil applications in recent years. Across the different civil domains where robots can support human operators, one of the areas where they can have more impact is in search and rescue (SAR) operations. In particular, multi-robot systems have the potential to significantly improve the efficiency of SAR personnel with faster search of victims, initial assessment and mapping of the environment, real-time monitoring and surveillance of SAR operations, or establishing emergency communication networks, among other possibilities. SAR operations encompass a wide variety of environments and situations, and therefore heterogeneous and collaborative multi-robot systems can provide the most advantages. In this paper, we review and analyze the existing approaches to multi-robot SAR support, from an algorithmic perspective and putting an emphasis on the methods enabling collaboration among the robots as well as advanced perception through machine vision and multi-agent active perception. Furthermore, we put these algorithms in the context of the different challenges and constraints that various types of robots (ground, aerial, surface or underwater) encounter in different SAR environments (maritime, urban, wilderness or other post-disaster scenarios). This is, to the best of our knowledge, the first review considering heterogeneous SAR robots across different environments, while giving two complimentary points of view: control mechanisms and machine perception. Based on our review of the state-of-the-art, we discuss the main open research questions, and outline our insights on the current approaches that have potential to improve the real-world performance of multi-robot SAR systems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge