Giuseppe Ughi

Invariant Risk Minimisation for Cross-Organism Inference: Substituting Mouse Data for Human Data in Human Risk Factor Discovery

Nov 14, 2021

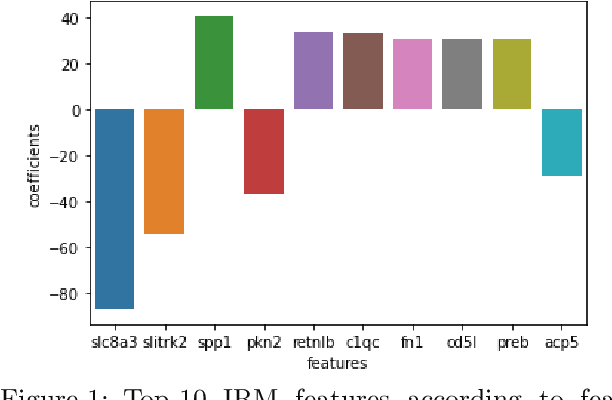

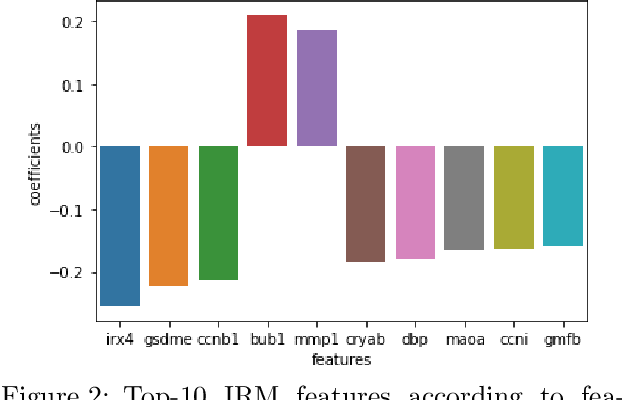

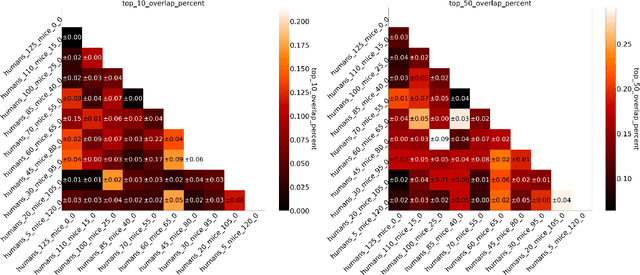

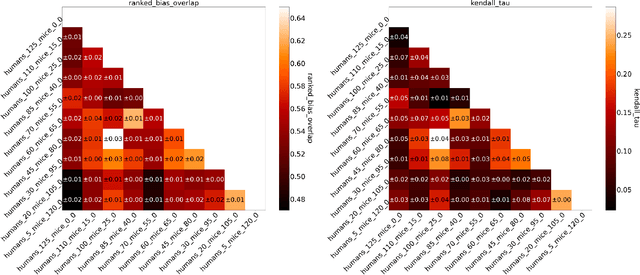

Abstract:Human medical data can be challenging to obtain due to data privacy concerns, difficulties conducting certain types of experiments, or prohibitive associated costs. In many settings, data from animal models or in-vitro cell lines are available to help augment our understanding of human data. However, this data is known for having low etiological validity in comparison to human data. In this work, we augment small human medical datasets with in-vitro data and animal models. We use Invariant Risk Minimisation (IRM) to elucidate invariant features by considering cross-organism data as belonging to different data-generating environments. Our models identify genes of relevance to human cancer development. We observe a degree of consistency between varying the amounts of human and mouse data used, however, further work is required to obtain conclusive insights. As a secondary contribution, we enhance existing open source datasets and provide two uniformly processed, cross-organism, homologue gene-matched datasets to the community.

An Empirical Study of Derivative-Free-Optimization Algorithms for Targeted Black-Box Attacks in Deep Neural Networks

Dec 03, 2020

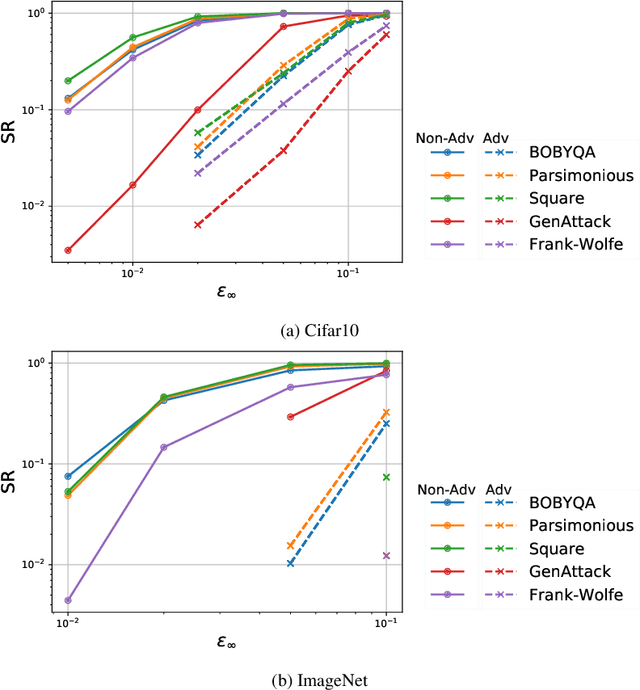

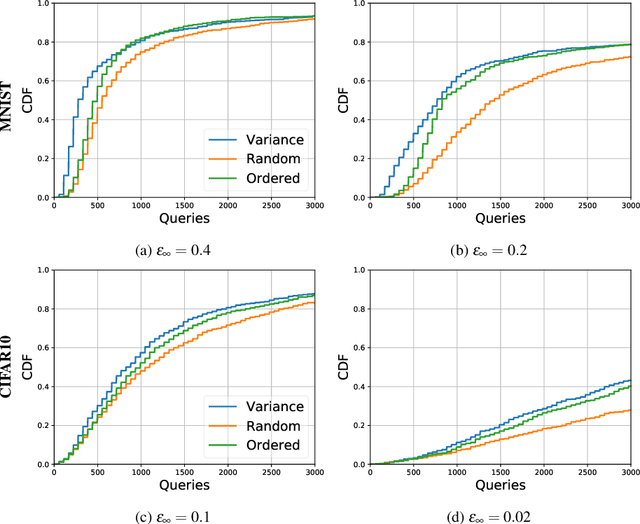

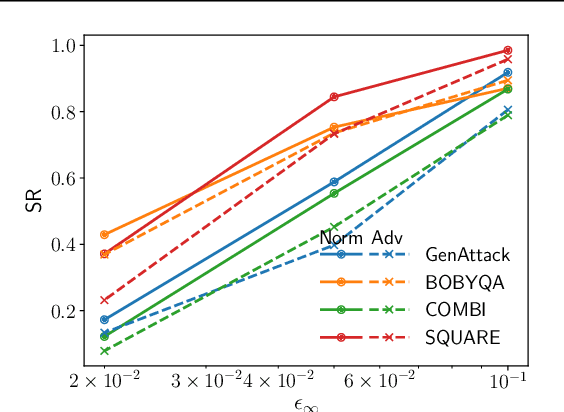

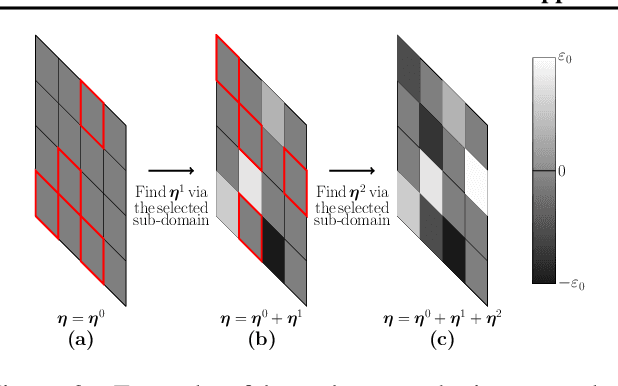

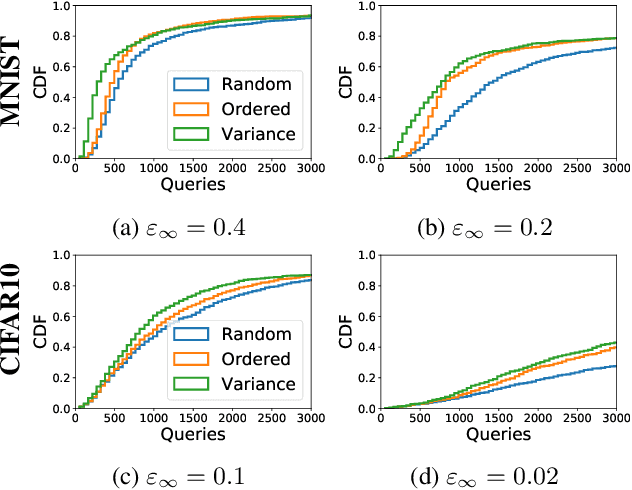

Abstract:We perform a comprehensive study on the performance of derivative free optimization (DFO) algorithms for the generation of targeted black-box adversarial attacks on Deep Neural Network (DNN) classifiers assuming the perturbation energy is bounded by an $\ell_\infty$ constraint and the number of queries to the network is limited. This paper considers four pre-existing state-of-the-art DFO-based algorithms along with the introduction of a new algorithm built on BOBYQA, a model-based DFO method. We compare these algorithms in a variety of settings according to the fraction of images that they successfully misclassify given a maximum number of queries to the DNN. The experiments disclose how the likelihood of finding an adversarial example depends on both the algorithm used and the setting of the attack; algorithms limiting the search of adversarial example to the vertices of the $\ell^\infty$ constraint work particularly well without structural defenses, while the presented BOBYQA based algorithm works better for especially small perturbation energies. This variance in performance highlights the importance of new algorithms being compared to the state-of-the-art in a variety of settings, and the effectiveness of adversarial defenses being tested using as wide a range of algorithms as possible.

A Model-Based Derivative-Free Approach to Black-Box Adversarial Examples: BOBYQA

Feb 24, 2020

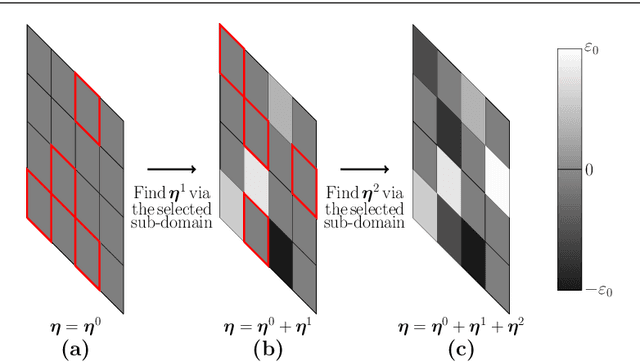

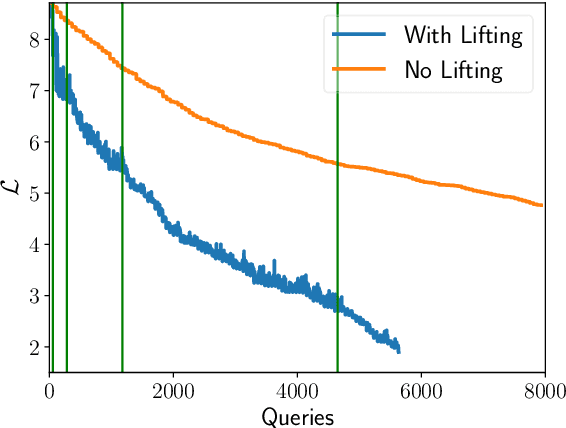

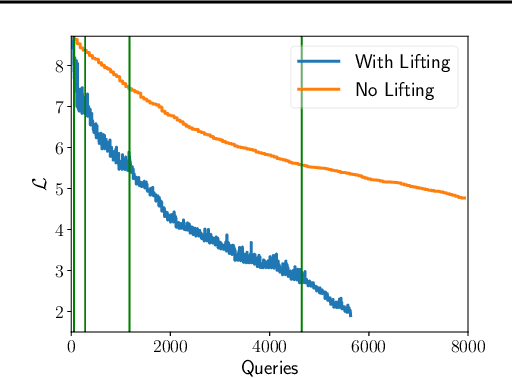

Abstract:We demonstrate that model-based derivative free optimisation algorithms can generate adversarial targeted misclassification of deep networks using fewer network queries than non-model-based methods. Specifically, we consider the black-box setting, and show that the number of networks queries is less impacted by making the task more challenging either through reducing the allowed $\ell^{\infty}$ perturbation energy or training the network with defences against adversarial misclassification. We illustrate this by contrasting the BOBYQA algorithm with the state-of-the-art model-free adversarial targeted misclassification approaches based on genetic, combinatorial, and direct-search algorithms. We observe that for high $\ell^{\infty}$ energy perturbations on networks, the aforementioned simpler model-free methods require the fewest queries. In contrast, the proposed BOBYQA based method achieves state-of-the-art results when the perturbation energy decreases, or if the network is trained against adversarial perturbations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge