Fuxin Jiang

Reasoning and Tool-use Compete in Agentic RL:From Quantifying Interference to Disentangled Tuning

Feb 01, 2026Abstract:Agentic Reinforcement Learning (ARL) focuses on training large language models (LLMs) to interleave reasoning with external tool execution to solve complex tasks. Most existing ARL methods train a single shared model parameters to support both reasoning and tool use behaviors, implicitly assuming that joint training leads to improved overall agent performance. Despite its widespread adoption, this assumption has rarely been examined empirically. In this paper, we systematically investigate this assumption by introducing a Linear Effect Attribution System(LEAS), which provides quantitative evidence of interference between reasoning and tool-use behaviors. Through an in-depth analysis, we show that these two capabilities often induce misaligned gradient directions, leading to training interference that undermines the effectiveness of joint optimization and challenges the prevailing ARL paradigm. To address this issue, we propose Disentangled Action Reasoning Tuning(DART), a simple and efficient framework that explicitly decouples parameter updates for reasoning and tool-use via separate low-rank adaptation modules. Experimental results show that DART consistently outperforms baseline methods with averaged 6.35 percent improvements and achieves performance comparable to multi-agent systems that explicitly separate tool-use and reasoning using a single model.

OmniSQL: Synthesizing High-quality Text-to-SQL Data at Scale

Mar 04, 2025Abstract:Text-to-SQL, the task of translating natural language questions into SQL queries, plays a crucial role in enabling non-experts to interact with databases. While recent advancements in large language models (LLMs) have significantly enhanced text-to-SQL performance, existing approaches face notable limitations in real-world text-to-SQL applications. Prompting-based methods often depend on closed-source LLMs, which are expensive, raise privacy concerns, and lack customization. Fine-tuning-based methods, on the other hand, suffer from poor generalizability due to the limited coverage of publicly available training data. To overcome these challenges, we propose a novel and scalable text-to-SQL data synthesis framework for automatically synthesizing large-scale, high-quality, and diverse datasets without extensive human intervention. Using this framework, we introduce SynSQL-2.5M, the first million-scale text-to-SQL dataset, containing 2.5 million samples spanning over 16,000 synthetic databases. Each sample includes a database, SQL query, natural language question, and chain-of-thought (CoT) solution. Leveraging SynSQL-2.5M, we develop OmniSQL, a powerful open-source text-to-SQL model available in three sizes: 7B, 14B, and 32B. Extensive evaluations across nine datasets demonstrate that OmniSQL achieves state-of-the-art performance, matching or surpassing leading closed-source and open-source LLMs, including GPT-4o and DeepSeek-V3, despite its smaller size. We release all code, datasets, and models to support further research.

Disentangled Interpretable Representation for Efficient Long-term Time Series Forecasting

Nov 26, 2024

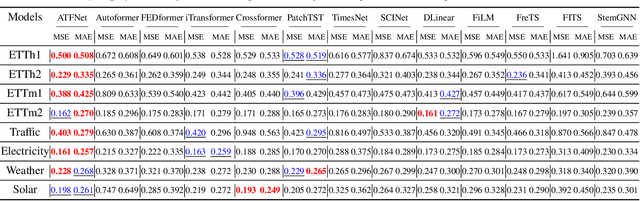

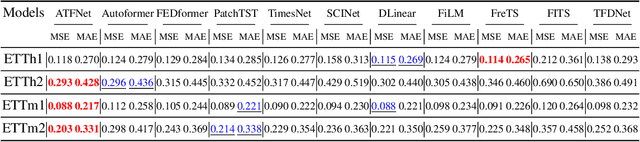

Abstract:Industry 5.0 introduces new challenges for Long-term Time Series Forecasting (LTSF), characterized by high-dimensional, high-resolution data and high-stakes application scenarios. Against this backdrop, developing efficient and interpretable models for LTSF becomes a key challenge. Existing deep learning and linear models often suffer from excessive parameter complexity and lack intuitive interpretability. To address these issues, we propose DiPE-Linear, a Disentangled interpretable Parameter-Efficient Linear network. DiPE-Linear incorporates three temporal components: Static Frequential Attention (SFA), Static Temporal Attention (STA), and Independent Frequential Mapping (IFM). These components alternate between learning in the frequency and time domains to achieve disentangled interpretability. The decomposed model structure reduces parameter complexity from quadratic in fully connected networks (FCs) to linear and computational complexity from quadratic to log-linear. Additionally, a Low-Rank Weight Sharing policy enhances the model's ability to handle multivariate series. Despite operating within a subspace of FCs with limited expressive capacity, DiPE-Linear demonstrates comparable or superior performance to both FCs and nonlinear models across multiple open-source and real-world LTSF datasets, validating the effectiveness of its sophisticatedly designed structure. The combination of efficiency, accuracy, and interpretability makes DiPE-Linear a strong candidate for advancing LTSF in both research and real-world applications. The source code is available at https://github.com/wintertee/DiPE-Linear.

ATFNet: Adaptive Time-Frequency Ensembled Network for Long-term Time Series Forecasting

Apr 08, 2024

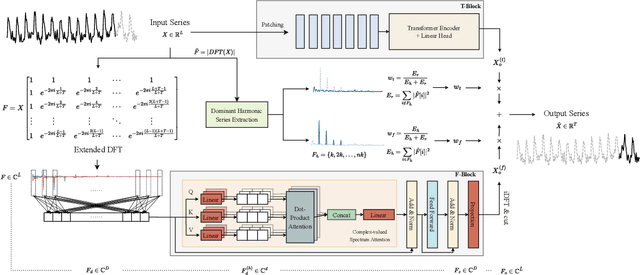

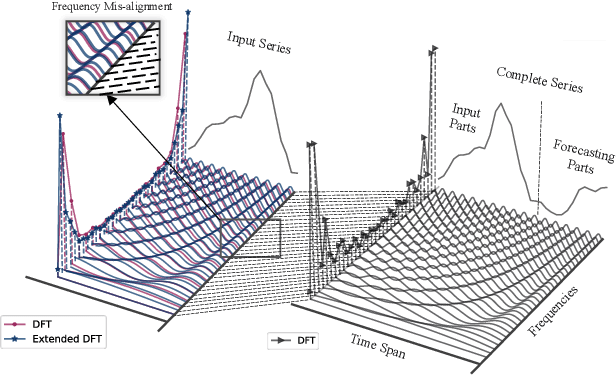

Abstract:The intricate nature of time series data analysis benefits greatly from the distinct advantages offered by time and frequency domain representations. While the time domain is superior in representing local dependencies, particularly in non-periodic series, the frequency domain excels in capturing global dependencies, making it ideal for series with evident periodic patterns. To capitalize on both of these strengths, we propose ATFNet, an innovative framework that combines a time domain module and a frequency domain module to concurrently capture local and global dependencies in time series data. Specifically, we introduce Dominant Harmonic Series Energy Weighting, a novel mechanism for dynamically adjusting the weights between the two modules based on the periodicity of the input time series. In the frequency domain module, we enhance the traditional Discrete Fourier Transform (DFT) with our Extended DFT, designed to address the challenge of discrete frequency misalignment. Additionally, our Complex-valued Spectrum Attention mechanism offers a novel approach to discern the intricate relationships between different frequency combinations. Extensive experiments across multiple real-world datasets demonstrate that our ATFNet framework outperforms current state-of-the-art methods in long-term time series forecasting.

A Novel Hybrid Framework for Hourly PM2.5 Concentration Forecasting Using CEEMDAN and Deep Temporal Convolutional Neural Network

Dec 07, 2020

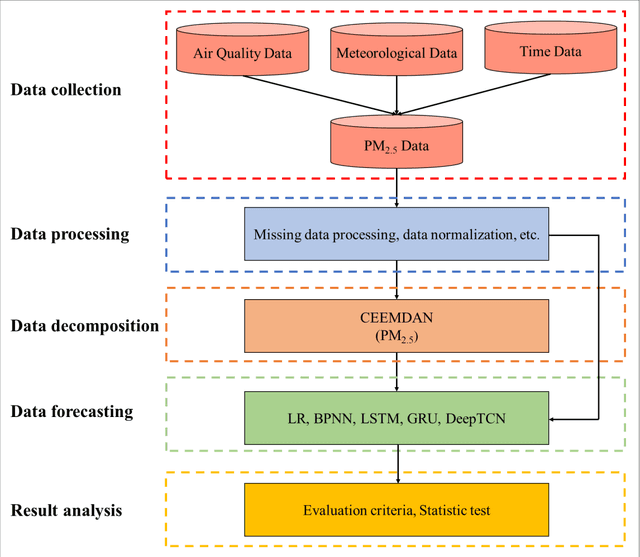

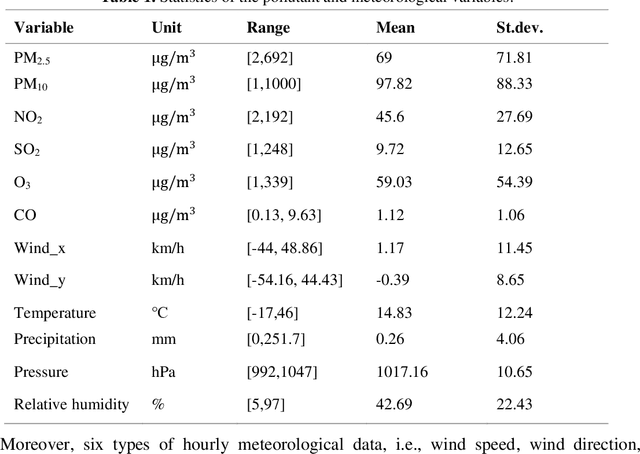

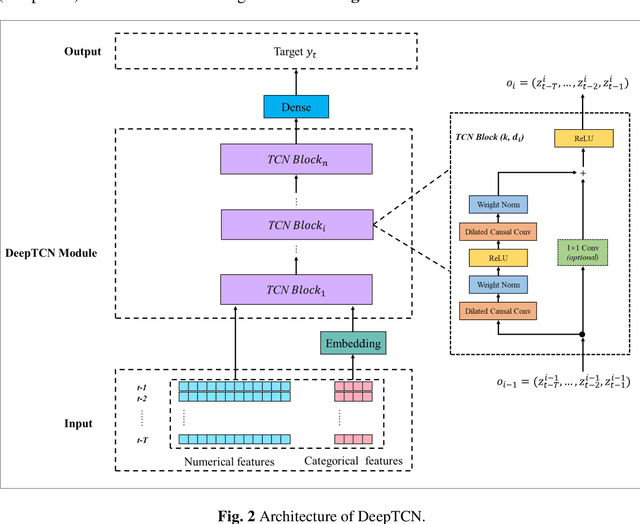

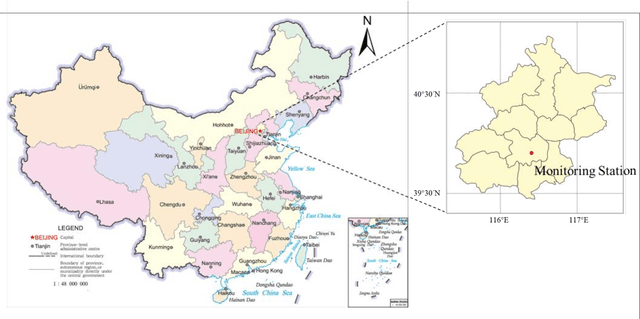

Abstract:For hourly PM2.5 concentration prediction, accurately capturing the data patterns of external factors that affect PM2.5 concentration changes, and constructing a forecasting model is one of efficient means to improve forecasting accuracy. In this study, a novel hybrid forecasting model based on complete ensemble empirical mode decomposition with adaptive noise (CEEMDAN) and deep temporal convolutional neural network (DeepTCN) is developed to predict PM2.5 concentration, by modelling the data patterns of historical pollutant concentrations data, meteorological data, and discrete time variables' data. Taking PM2.5 concentration of Beijing as the sample, experimental results showed that the forecasting accuracy of the proposed CEEMDAN-DeepTCN model is verified to be the highest when compared with the time series model, artificial neural network, and the popular deep learning models. The new model has improved the capability to model the PM2.5-related factor data patterns, and can be used as a promising tool for forecasting PM2.5 concentrations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge