Fuqing Duan

School of Computer Science, Beijing Institute of Technology

MaskBlur: Spatial and Angular Data Augmentation for Light Field Image Super-Resolution

Oct 09, 2024

Abstract:Data augmentation (DA) is an effective approach for enhancing model performance in tasks with limited data, such as light field (LF) image super-resolution (SR). LF images inherently possess rich spatial and angular information. Nonetheless, there is a scarcity of DA methodologies explicitly tailored for LF images, and existing works tend to concentrate solely on either the spatial or angular domain. This paper proposes a novel spatial and angular DA strategy named MaskBlur for LF image SR by concurrently addressing spatial and angular aspects. MaskBlur consists of two components: spatial blur and angular dropout. Spatial blur is governed by a spatial mask, which controls where pixels are blurred, i.e., pasting pixels between the low-resolution and high-resolution domains. The angular mask is responsible for angular dropout, i.e., selecting which views to perform the spatial blur operation. By doing so, MaskBlur enables the model to treat pixels differently in the spatial and angular domains when super-resolving LF images rather than blindly treating all pixels equally. Extensive experiments demonstrate the efficacy of MaskBlur in significantly enhancing the performance of existing SR methods. We further extend MaskBlur to other LF image tasks such as denoising, deblurring, low-light enhancement, and real-world SR. Code is publicly available at https://github.com/chaowentao/MaskBlur.
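The two masks described in the abstract can be illustrated with a toy sketch. This is not the authors' implementation (the linked repository is the reference for that). The function name `mask_blur`, the `(U, V, H, W)` array layout, the independent random masks, the probability parameters, and the assumption that the low-resolution light field has already been resized to high-resolution size are all hypothetical choices made for illustration.

```python
import numpy as np

def mask_blur(hr, lr_up, spatial_prob=0.5, angular_prob=0.5, rng=None):
    """Paste upsampled-LR pixels into the HR light field where both masks are on.

    hr, lr_up: arrays of shape (U, V, H, W). lr_up is assumed to be the LR
    light field already resized to HR resolution (an assumption of this sketch).
    """
    rng = np.random.default_rng() if rng is None else rng
    U, V, H, W = hr.shape
    # Spatial mask: which pixel locations receive the blurred (LR) content.
    spatial_mask = rng.random((1, 1, H, W)) < spatial_prob
    # Angular mask: which views take part in the spatial blur at all.
    angular_mask = rng.random((U, V, 1, 1)) < angular_prob
    mask = spatial_mask & angular_mask  # broadcasts to (U, V, H, W)
    return np.where(mask, lr_up, hr), mask

# Toy usage: HR is all ones, upsampled LR is all zeros, so pasted pixels are visible.
hr = np.ones((3, 3, 8, 8))
lr_up = np.zeros((3, 3, 8, 8))
mixed, mask = mask_blur(hr, lr_up, rng=np.random.default_rng(0))
```

Views dropped by the angular mask keep their original pixels everywhere, while the remaining views are blurred only at the locations selected by the spatial mask.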

LFSRDiff: Light Field Image Super-Resolution via Diffusion Models

Nov 27, 2023

Abstract:Light field (LF) image super-resolution (SR) is a challenging problem due to its inherent ill-posed nature, where a single low-resolution (LR) input LF image can correspond to multiple potential super-resolved outcomes. Despite this complexity, mainstream LF image SR methods typically adopt a deterministic approach, generating only a single output supervised by pixel-wise loss functions. This tendency often results in blurry and unrealistic results. Although diffusion models can capture the distribution of potential SR results by iteratively predicting Gaussian noise during the denoising process, they are primarily designed for general images and struggle to effectively handle the unique characteristics and information present in LF images. To address these limitations, we introduce LFSRDiff, the first diffusion-based LF image SR model, by incorporating the LF disentanglement mechanism. Our novel contribution includes the introduction of a disentangled U-Net for diffusion models, enabling more effective extraction and fusion of both spatial and angular information within LF images. Through comprehensive experimental evaluations and comparisons with the state-of-the-art LF image SR methods, the proposed approach consistently produces diverse and realistic SR results. It achieves the best perceptual quality in terms of LPIPS. It also demonstrates the ability to effectively control the trade-off between perception and distortion. The code is available at https://github.com/chaowentao/LFSRDiff.

OccCasNet: Occlusion-aware Cascade Cost Volume for Light Field Depth Estimation

May 28, 2023

Abstract:Light field (LF) depth estimation is a crucial task with numerous practical applications. However, mainstream methods based on the multi-view stereo (MVS) are resource-intensive and time-consuming as they need to construct a finer cost volume. To address this issue and achieve a better trade-off between accuracy and efficiency, we propose an occlusion-aware cascade cost volume for LF depth (disparity) estimation. Our cascaded strategy reduces the sampling number while keeping the sampling interval constant during the construction of a finer cost volume. We also introduce occlusion maps to enhance accuracy in constructing the occlusion-aware cost volume. Specifically, we first obtain the coarse disparity map through the coarse disparity estimation network. Then, the sub-aperture images (SAIs) of side views are warped to the center view based on the initial disparity map. Next, we propose photo-consistency constraints between the warped SAIs and the center SAI to generate occlusion maps for each SAI. Finally, we introduce the coarse disparity map and occlusion maps to construct an occlusion-aware refined cost volume, enabling the refined disparity estimation network to yield a more precise disparity map. Extensive experiments demonstrate the effectiveness of our method. Compared with state-of-the-art methods, our method achieves a superior balance between accuracy and efficiency and ranks first in terms of MSE and Q25 metrics among published methods on the HCI 4D benchmark. The code and model of the proposed method are available at https://github.com/chaowentao/OccCasNet.
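Two of the steps named in the abstract, sampling a small set of disparity candidates around a coarse estimate and deriving an occlusion map from photo-consistency, can be sketched as follows. This is only an illustration under stated assumptions, not the method from the paper: the function names, the symmetric offsets, the absolute-difference test, and the `threshold`, `num_samples`, and `interval` values are hypothetical.

```python
import numpy as np

def refined_candidates(coarse_disp, num_samples=5, interval=0.1):
    """Per-pixel disparity candidates centred on a coarse disparity map.

    Fewer candidates are sampled than in a full-range cost volume, while the
    spacing between candidates (interval) stays fixed.
    """
    offsets = (np.arange(num_samples) - (num_samples - 1) / 2) * interval
    return coarse_disp[..., None] + offsets  # shape (..., num_samples)

def occlusion_map(warped_view, center_view, threshold=0.1):
    """1.0 where the warped side view agrees with the center view, else 0.0.

    A simple absolute-difference check stands in for the photo-consistency
    constraint here (an assumption of this sketch).
    """
    error = np.abs(warped_view - center_view)
    return (error < threshold).astype(np.float32)

# Toy usage with a 1x2 disparity map and two pixel intensities.
cands = refined_candidates(np.array([[0.0, 1.0]]), num_samples=5, interval=0.5)
occ = occlusion_map(np.array([0.0, 0.5]), np.array([0.05, 0.0]))
```

Pixels flagged with 0.0 would be down-weighted or ignored when aggregating matching costs for the refined cost volume.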

Learning Sub-Pixel Disparity Distribution for Light Field Depth Estimation

Aug 20, 2022

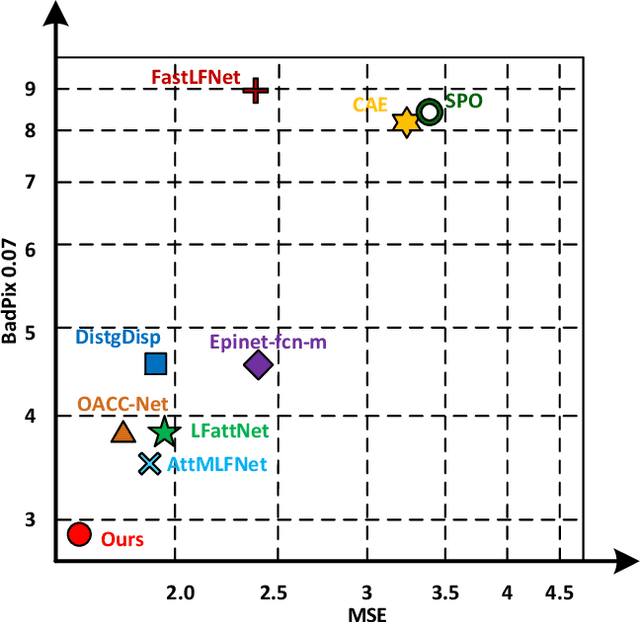

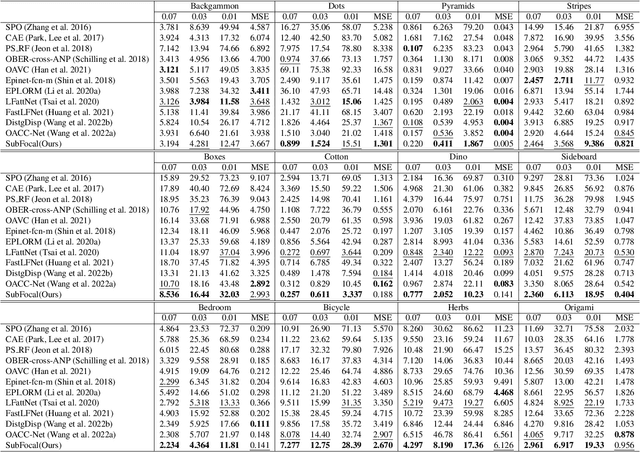

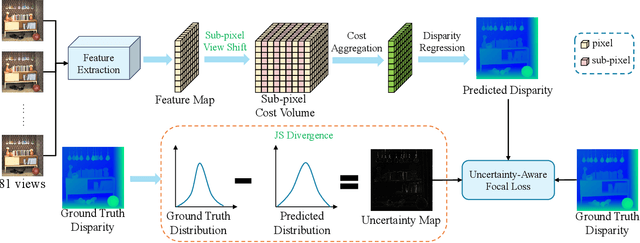

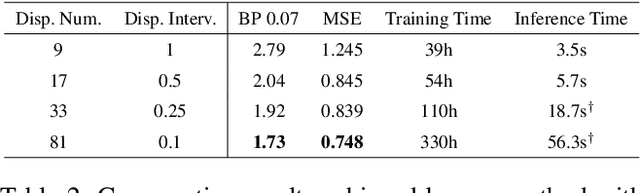

Abstract:Existing light field (LF) depth estimation methods generally consider depth estimation as a regression problem, supervised by a pixel-wise L1 loss between the regressed disparity map and the ground-truth one. However, the disparity map is only a sub-space projection (i.e., an expectation) of the disparity distribution, while the latter is more essential for models to learn. In this paper, we propose a simple yet effective method to learn the sub-pixel disparity distribution by fully utilizing the power of deep networks. In our method, we construct the cost volume at the sub-pixel level to produce a finer depth distribution and design an uncertainty-aware focal loss to supervise the disparity distribution to be close to the ground-truth one. Extensive experimental results demonstrate the effectiveness of our method. Our method, called SubFocal, ranks first among 99 submitted algorithms on the HCI 4D LF Benchmark on all five accuracy metrics (i.e., BadPix0.01, BadPix0.03, BadPix0.07, MSE and Q25), and significantly outperforms recent state-of-the-art LF depth methods such as OACC-Net and AttMLFNet. Code and model are available at https://github.com/chaowentao/SubFocal.
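The remark that a disparity map is only an expectation of the disparity distribution can be made concrete with a small sketch. It assumes, hypothetically, that a network produces per-pixel scores over a set of sub-pixel disparity candidates and that a softmax turns them into a distribution; the function name and the toy numbers are illustrative, and the paper's uncertainty-aware focal loss is not reproduced here.

```python
import numpy as np

def disparity_expectation(logits, candidates):
    """Softmax over the candidate axis, then the expected disparity."""
    shifted = logits - logits.max(axis=-1, keepdims=True)  # numerical stability
    probs = np.exp(shifted)
    probs /= probs.sum(axis=-1, keepdims=True)
    return probs, (probs * candidates).sum(axis=-1)

# Sub-pixel candidates at 0.5-pixel spacing (illustrative values).
candidates = np.linspace(-1.0, 1.0, 5)
# A bimodal score vector: two equally likely disparities at -1 and +1.
logits = np.array([5.0, 0.0, 0.0, 0.0, 5.0])
probs, expected = disparity_expectation(logits, candidates)
```

In this toy case the expectation lands at 0, a disparity that neither mode supports, which is one way to see why supervising the distribution itself carries more information than supervising only the regressed value.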

DecisionHoldem: Safe Depth-Limited Solving With Diverse Opponents for Imperfect-Information Games

Jan 27, 2022

Abstract:An imperfect-information game is a type of game with asymmetric information. Such games are more common in life than perfect-information games. Artificial intelligence (AI) in imperfect-information games, such as poker, has made considerable progress in recent years. The great success of superhuman poker AI, such as Libratus and Deepstack, has drawn researchers' attention to poker research. However, the lack of open-source code limits the development of Texas hold'em AI to some extent. This article introduces DecisionHoldem, a high-level AI for heads-up no-limit Texas hold'em with safe depth-limited subgame solving that considers possible ranges of the opponent's private hands to reduce the exploitability of the strategy. Experimental results show that DecisionHoldem defeats the strongest openly available agent in heads-up no-limit Texas hold'em poker, namely Slumbot, and a high-level reproduction of Deepstack, namely Openstack, by more than 730 mbb/h (thousandths of a big blind per hand) and 700 mbb/h, respectively. Moreover, we release the source code and tools of DecisionHoldem to promote AI development in imperfect-information games.
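For readers unfamiliar with the mbb/h unit, the conversion from raw winnings is simple arithmetic, shown below as a small helper. The function name and the numbers in the usage example are hypothetical and are not taken from the experiments.

```python
def mbb_per_hand(total_chips_won, big_blind, hands_played):
    """Win rate in milli-big-blinds per hand (mbb/h)."""
    big_blinds_won = total_chips_won / big_blind
    return 1000.0 * big_blinds_won / hands_played

# Hypothetical match: 5,000 chips won at a big blind of 100 over 1,000 hands.
rate = mbb_per_hand(5000, 100, 1000)  # 50 big blinds over 1,000 hands
```

A negative result simply means the agent lost chips on average over the sampled hands.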

Pulsar Candidate Identification with Artificial Intelligence Techniques

Nov 27, 2017

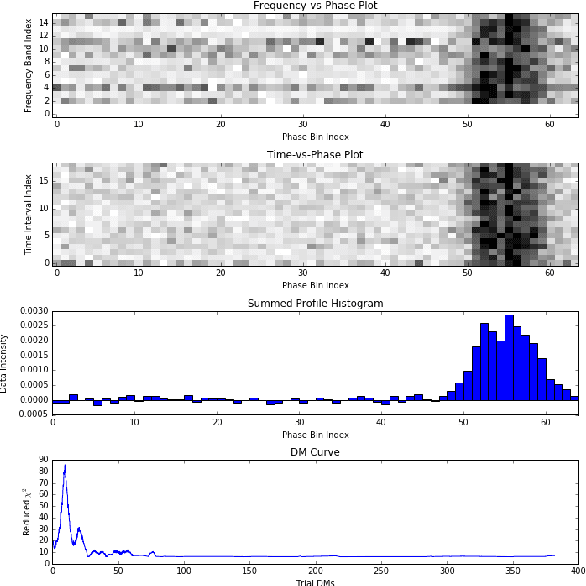

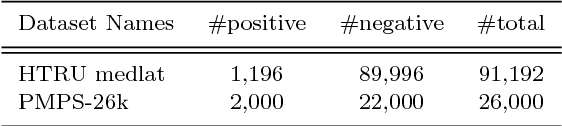

Abstract:Discovering pulsars is a significant and meaningful research topic in the field of radio astronomy. With the advent of astronomical instruments such as the Five-hundred-meter Aperture Spherical Telescope (FAST) in China, data volumes and data rates are growing exponentially. This necessitates a focus on artificial intelligence (AI) technologies that can perform automatic pulsar candidate identification to mine large astronomical data sets. Automatic pulsar candidate identification can be considered as the task of determining potential candidates for further investigation while eliminating noise from radio frequency interference and other non-pulsar signals. It is very hard to improve the performance of DCNN-based pulsar identification for two reasons: the limited number of training samples prevents the network from being designed deep enough to learn good features, and the very limited number of real pulsar samples causes a crucial class imbalance problem. To address these problems, we propose a framework that combines a deep convolutional generative adversarial network (DCGAN) with a support vector machine (SVM) to deal with the class imbalance problem and to improve pulsar identification accuracy. DCGAN is used as the sample generation and feature learning model, and SVM is adopted as the classifier for predicting candidates' labels in the inference stage. The proposed framework is a novel technique that not only addresses the class imbalance problem but also learns discriminative feature representations of pulsar candidates instead of computing hand-crafted features in preprocessing steps, which makes it more accurate for automatic pulsar candidate selection. Experiments on two pulsar datasets verify the effectiveness and efficiency of our proposed method.
