Friedhelm Hamann

Unsupervised Joint Learning of Optical Flow and Intensity with Event Cameras

Mar 21, 2025

Abstract:Event cameras rely on motion to obtain information about scene appearance. In other words, for event cameras, motion and appearance are either both observed or both unobserved, and both are encoded in the output event stream. Previous works treat the recovery of these two visual quantities as separate tasks, which does not fit the nature of event cameras and neglects the inherent relation between the two tasks. In this paper, we propose an unsupervised learning framework that jointly estimates optical flow (motion) and image intensity (appearance) with a single network. Starting from the event generation model, we derive the event-based photometric error as a function of optical flow and image intensity, and combine it with the contrast maximization framework, yielding a comprehensive loss function that provides proper constraints for both flow and intensity estimation. Extensive experiments show that our model achieves state-of-the-art performance for both optical flow (20% and 25% improvement in EPE and AE, respectively, in the unsupervised learning category) and intensity estimation (competitive results with other baselines, particularly in high dynamic range scenarios). Last but not least, our model achieves shorter inference time than all the other optical flow models and many of the image reconstruction models, even though they output only one quantity. Project page: https://github.com/tub-rip/e2fai
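For readers unfamiliar with the event generation model that the abstract above starts from, its commonly used textbook form can be written as follows. This is a sketch of the standard model, not the paper's derivation; the symbols ($L$ for log intensity, $C$ for the contrast threshold, $p_k$ for polarity, $\mathbf{v}$ for optical flow) are notation assumed here and do not appear in the abstract.

```latex
% An event e_k = (x_k, t_k, p_k) fires when the log-intensity change at a pixel
% since the previous event at that pixel reaches the contrast threshold C:
\Delta L(\mathbf{x}_k, t_k) \doteq L(\mathbf{x}_k, t_k) - L(\mathbf{x}_k, t_k - \Delta t_k) = p_k \, C,
\qquad p_k \in \{+1, -1\}.

% For a small \Delta t_k and under brightness constancy, the change is
% approximately linear in the optical flow v:
\Delta L(\mathbf{x}_k, t_k) \approx -\nabla L(\mathbf{x}_k, t_k) \cdot \mathbf{v}(\mathbf{x}_k) \, \Delta t_k .
```

The second relation shows why the two quantities are coupled: the brightness change that triggers events depends on both the image gradient (appearance) and the flow (motion).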

Event-based Tracking of Any Point with Motion-Robust Correlation Features

Nov 28, 2024

Abstract:Tracking any point (TAP) recently shifted the motion estimation paradigm from focusing on individual salient points with local templates to tracking arbitrary points with global image contexts. However, while research has mostly focused on driving the accuracy of models in nominal settings, addressing scenarios with difficult lighting conditions and high-speed motions remains out of reach due to the limitations of the sensor. This work addresses this challenge with the first event camera-based TAP method. It leverages the high temporal resolution and high dynamic range of event cameras for robust high-speed tracking, and the global contexts in TAP methods to handle asynchronous and sparse event measurements. We further extend the TAP framework to handle event feature variations induced by motion - thereby addressing an open challenge in purely event-based tracking - with a novel feature alignment loss which ensures the learning of motion-robust features. Our method is trained with data from a new data generation pipeline and systematically ablated across all design decisions. Our method shows strong cross-dataset generalization and performs 135% better on the average Jaccard metric than the baselines. Moreover, on an established feature tracking benchmark, it achieves a 19% improvement over the previous best event-only method and even surpasses the previous best events-and-frames method by 3.7%.

Fourier-based Action Recognition for Wildlife Behavior Quantification with Event Cameras

Oct 09, 2024

Abstract:Event cameras are novel bio-inspired vision sensors that measure pixel-wise brightness changes asynchronously instead of images at a given frame rate. They offer promising advantages, namely a high dynamic range, low latency, and minimal motion blur. Modern computer vision algorithms often rely on artificial neural network approaches, which require image-like representations of the data and cannot fully exploit the characteristics of event data. We propose approaches to action recognition based on the Fourier Transform. The approaches are intended to recognize oscillating motion patterns commonly present in nature. In particular, we apply our approaches to a recent dataset of breeding penguins annotated for "ecstatic display", a behavior where the observed penguins flap their wings at a certain frequency. We find that our approaches are both simple and effective, producing slightly lower results than a deep neural network (DNN) while relying just on a tiny fraction of the parameters compared to the DNN (five orders of magnitude fewer parameters). They work well despite the uncontrolled, diverse data present in the dataset. We hope this work opens a new perspective on event-based processing and action recognition.
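The idea of recognizing oscillating motion from its frequency content can be illustrated with a minimal sketch. It is not the authors' method: it assumes events are reduced to a 1D event-rate signal, and the function name `dominant_frequency`, the bin width, and the synthetic 3 Hz data are all hypothetical choices made for illustration.

```python
import numpy as np

def dominant_frequency(timestamps, duration, bin_width=0.01):
    """Return (frequency_hz, power_fraction) of the strongest non-DC
    component of the event-rate signal built from timestamps in seconds."""
    n_bins = int(round(duration / bin_width))
    counts, _ = np.histogram(timestamps, bins=n_bins, range=(0.0, duration))
    signal = counts - counts.mean()           # remove the constant offset
    power = np.abs(np.fft.rfft(signal)) ** 2  # one-sided power spectrum
    freqs = np.fft.rfftfreq(n_bins, d=bin_width)
    peak = int(np.argmax(power[1:])) + 1      # skip the zero-frequency bin
    total = power[1:].sum()
    fraction = power[peak] / total if total > 0 else 0.0
    return freqs[peak], fraction

# Synthetic events whose rate oscillates at 3 Hz (assumed toy data).
rng = np.random.default_rng(0)
duration = 10.0
t = rng.uniform(0.0, duration, 200_000)
keep = rng.uniform(size=t.size) < 0.5 * (1.0 + np.sin(2.0 * np.pi * 3.0 * t))
events = t[keep]

freq, frac = dominant_frequency(events, duration)
print(f"dominant frequency: {freq:.1f} Hz, share of spectral power: {frac:.2f}")
```

A detector built on this would threshold the power share within an expected frequency band, which needs only a handful of parameters compared with a neural network.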

MouseSIS: A Frames-and-Events Dataset for Space-Time Instance Segmentation of Mice

Sep 05, 2024

Abstract:Enabled by large annotated datasets, tracking and segmentation of objects in videos has made remarkable progress in recent years. Despite these advancements, algorithms still struggle under degraded conditions and during fast movements. Event cameras are novel sensors with high temporal resolution and high dynamic range that offer promising advantages to address these challenges. However, annotated data for developing learning-based mask-level tracking algorithms with events is not available. To this end, we introduce: (i) a new task termed space-time instance segmentation, similar to video instance segmentation, whose goal is to segment instances throughout the entire duration of the sensor input (here, the input is quasi-continuous events and optionally aligned frames); and (ii) MouseSIS, a dataset for the new task, containing aligned grayscale frames and events. It includes annotated ground-truth labels (pixel-level instance segmentation masks) of a group of up to seven freely moving and interacting mice. We also provide two reference methods, which show that leveraging event data can consistently improve tracking performance, especially when used in combination with conventional cameras. The results highlight the potential of event-aided tracking in difficult scenarios. We hope our dataset opens the field of event-based video instance segmentation and enables the development of robust tracking algorithms for challenging conditions. https://github.com/tub-rip/MouseSIS

Motion-prior Contrast Maximization for Dense Continuous-Time Motion Estimation

Jul 15, 2024

Abstract:Current optical flow and point-tracking methods rely heavily on synthetic datasets. Event cameras are novel vision sensors with advantages in challenging visual conditions, but state-of-the-art frame-based methods cannot be easily adapted to event data due to the limitations of current event simulators. We introduce a novel self-supervised loss combining the Contrast Maximization framework with a non-linear motion prior in the form of pixel-level trajectories and propose an efficient solution to solve the high-dimensional assignment problem between non-linear trajectories and events. Their effectiveness is demonstrated in two scenarios: In dense continuous-time motion estimation, our method improves the zero-shot performance of a synthetically trained model on the real-world dataset EVIMO2 by 29%. In optical flow estimation, our method elevates a simple UNet to achieve state-of-the-art performance among self-supervised methods on the DSEC optical flow benchmark. Our code is available at https://github.com/tub-rip/MotionPriorCMax.

* 24 pages, 8 figures, 8 tables, Project Page: https://github.com/tub-rip/MotionPriorCMax
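The Contrast Maximization framework named in the abstract above can be sketched in its simplest form: warp events to a reference time along a candidate motion, accumulate them into an image, and score the candidate by the image variance. The sketch below assumes a single constant flow and a grid search, which is far simpler than the paper's non-linear pixel-level trajectories; the function `contrast` and the toy moving-edge data are hypothetical.

```python
import numpy as np

def contrast(xs, ys, ts, flow, shape, t_ref=0.0):
    """Variance of the image of warped events for a constant flow (vx, vy) in px/s."""
    vx, vy = flow
    # Move every event back to the reference time along a straight line.
    wx = np.rint(xs - vx * (ts - t_ref)).astype(int)
    wy = np.rint(ys - vy * (ts - t_ref)).astype(int)
    h, w = shape
    inside = (wx >= 0) & (wx < w) & (wy >= 0) & (wy < h)
    iwe = np.zeros(shape)
    np.add.at(iwe, (wy[inside], wx[inside]), 1.0)  # accumulate event counts
    return iwe.var()

# Toy data: a vertical edge starting at x = 20 and moving right at 30 px/s.
rng = np.random.default_rng(0)
n = 5000
ts = rng.uniform(0.0, 1.0, n)
ys = rng.integers(0, 64, n).astype(float)
xs = 20.0 + 30.0 * ts

# Grid search over horizontal flow candidates; the sharpest image wins.
candidates = [(float(vx), 0.0) for vx in np.arange(0.0, 61.0, 10.0)]
scores = [contrast(xs, ys, ts, c, (64, 64)) for c in candidates]
best_flow = candidates[int(np.argmax(scores))]
print("best flow candidate:", best_flow)
```

With the correct flow, all events of the edge collapse onto one column, so the accumulated image is sharp and its variance is highest. Learning-based variants replace the grid search with a network whose output is trained using the contrast as a loss.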

Low-power, Continuous Remote Behavioral Localization with Event Cameras

Dec 06, 2023

Abstract:Researchers in natural science need reliable methods for quantifying animal behavior. Recently, numerous computer vision methods emerged to automate the process. However, observing wild species at remote locations remains a challenging task due to difficult lighting conditions and constraints on power supply and data storage. Event cameras offer unique advantages for battery-dependent remote monitoring due to their low power consumption and high dynamic range capabilities. We use this novel sensor to quantify a behavior in Chinstrap penguins called ecstatic display. We formulate the problem as a temporal action detection task, determining the start and end times of the behavior. For this purpose, we recorded a colony of breeding penguins in Antarctica over several weeks and labeled event data on 16 nests. The developed method consists of a generator of candidate time intervals (proposals) and a classifier of the actions within them. The experiments show that the event cameras' natural response to motion is effective for continuous behavior monitoring and detection, reaching a mean average precision (mAP) of 58% (which increases to 63% in good weather conditions). The results also demonstrate the robustness against various lighting conditions contained in the challenging dataset. The low-power capabilities of the event camera allow recording three times longer than with a conventional camera. This work pioneers the use of event cameras for remote wildlife observation, opening new interdisciplinary opportunities. https://tub-rip.github.io/eventpenguins/

Event-based Continuous Color Video Decompression from Single Frames

Nov 30, 2023

Abstract:We present ContinuityCam, a novel approach to generate a continuous video from a single static RGB image, using an event camera. Conventional cameras struggle with high-speed motion capture due to bandwidth and dynamic range limitations. Event cameras are ideal sensors to solve this problem because they encode compressed change information at high temporal resolution. In this work, we propose a novel task called event-based continuous color video decompression, pairing single static color frames and events to reconstruct temporally continuous videos. Our approach combines continuous long-range motion modeling with a feature-plane-based synthesis neural integration model, enabling frame prediction at arbitrary times within the events. Our method does not rely on additional frames except for the initial image, thus increasing the robustness to sudden light changes, minimizing the prediction latency, and decreasing the bandwidth requirement. We introduce a novel single-objective beamsplitter setup that acquires aligned images and events, and a novel and challenging Event Extreme Decompression Dataset (E2D2) that tests the method in various lighting and motion profiles. We thoroughly evaluate our method through benchmarking reconstruction as well as various downstream tasks. Our approach significantly outperforms the event- and image-based baselines in the proposed task.

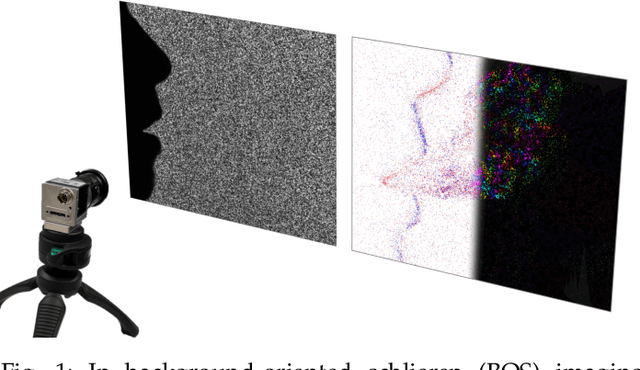

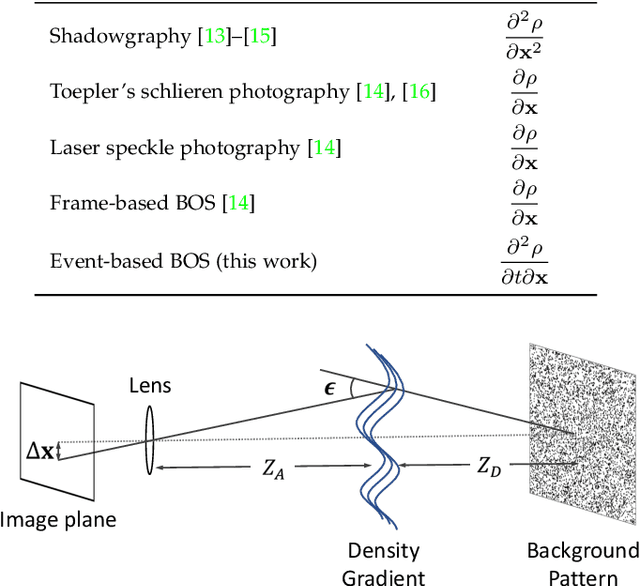

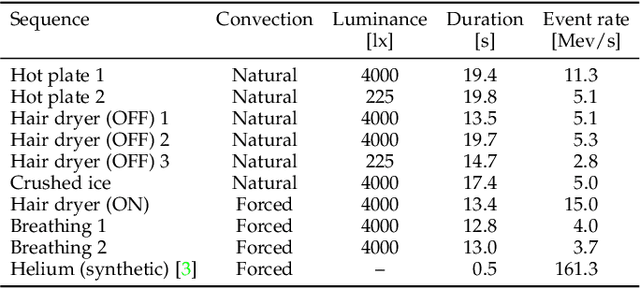

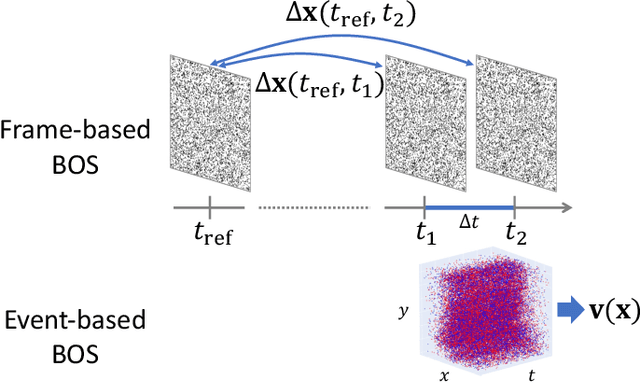

Event-based Background-Oriented Schlieren

Nov 01, 2023

Abstract:Schlieren imaging is an optical technique to observe the flow of transparent media, such as air or water, without any particle seeding. However, conventional frame-based techniques require cameras with both high spatial and high temporal resolution, which in turn demand bright illumination and expensive computation. Event cameras offer potential advantages (high dynamic range, high temporal resolution, and data efficiency) to overcome such limitations due to their bio-inspired sensing principle. This paper presents a novel technique for perceiving air convection using events and frames by providing the first theoretical analysis that connects event data and schlieren. We formulate the problem as a variational optimization that combines the linearized event generation model with a physically-motivated parameterization that estimates the temporal derivative of the air density. The experiments with accurately aligned frame- and event camera data reveal that the proposed method enables event cameras to obtain results on par with existing frame-based optical flow techniques. Moreover, the proposed method works under dark conditions where frame-based schlieren fails, and also enables slow-motion analysis by leveraging the event camera's advantages. Our work pioneers and opens a new stack of event camera applications, as we publish the source code as well as the first schlieren dataset with high-quality frame and event data. https://github.com/tub-rip/event_based_bos

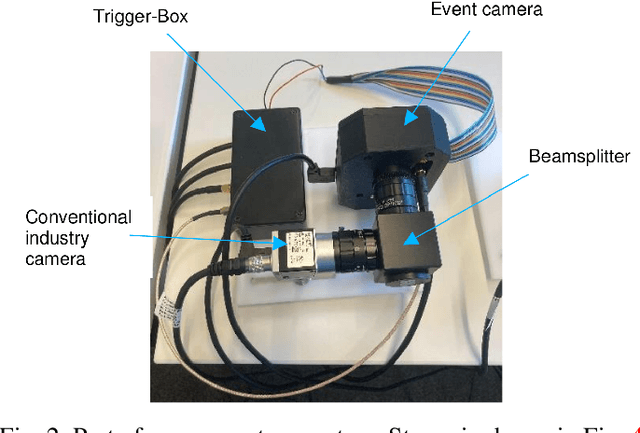

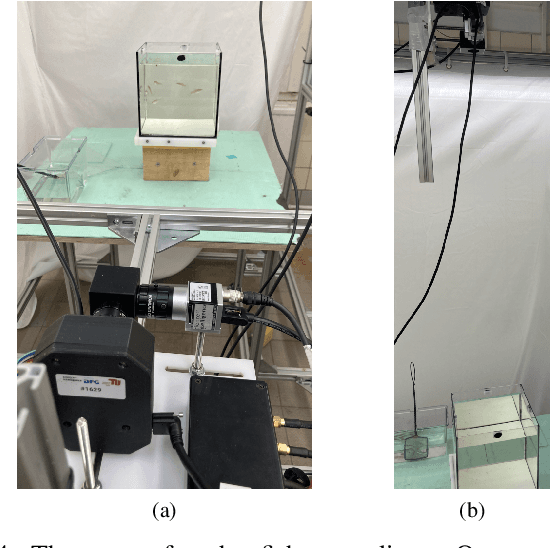

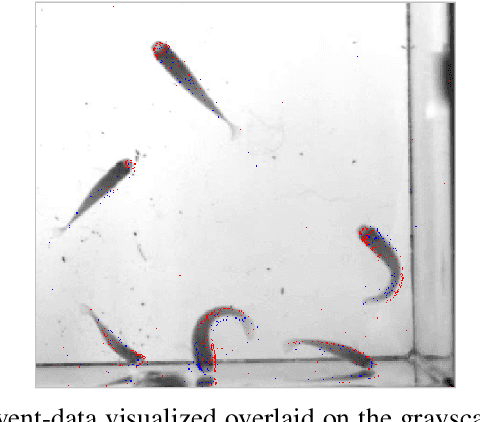

Stereo Co-capture System for Recording and Tracking Fish with Frame- and Event Cameras

Jul 15, 2022

Abstract:This work introduces a co-capture system for multi-animal visual data acquisition using conventional cameras and event cameras. Event cameras offer multiple advantages over frame-based cameras, such as a high temporal resolution and temporal redundancy suppression, which enable us to efficiently capture the fast and erratic movements of fish. We furthermore present an event-based multi-animal tracking algorithm, which proves the feasibility of the approach and sets the baseline for further exploration of combining the advantages of event cameras and conventional cameras for multi-animal tracking.

* 4 pages, 5 figures, 1 table
