Shuang Guo

Unsupervised Joint Learning of Optical Flow and Intensity with Event Cameras

Mar 21, 2025Abstract:Event cameras rely on motion to obtain information about scene appearance. In other words, for event cameras, motion and appearance are seen both or neither, which are encoded in the output event stream. Previous works consider recovering these two visual quantities as separate tasks, which does not fit with the nature of event cameras and neglects the inherent relations between both tasks. In this paper, we propose an unsupervised learning framework that jointly estimates optical flow (motion) and image intensity (appearance), with a single network. Starting from the event generation model, we newly derive the event-based photometric error as a function of optical flow and image intensity, which is further combined with the contrast maximization framework, yielding a comprehensive loss function that provides proper constraints for both flow and intensity estimation. Exhaustive experiments show that our model achieves state-of-the-art performance for both optical flow (achieves 20% and 25% improvement in EPE and AE respectively in the unsupervised learning category) and intensity estimation (produces competitive results with other baselines, particularly in high dynamic range scenarios). Last but not least, our model achieves shorter inference time than all the other optical flow models and many of the image reconstruction models, while they output only one quantity. Project page: https://github.com/tub-rip/e2fai

Event-based Photometric Bundle Adjustment

Dec 18, 2024

Abstract:We tackle the problem of bundle adjustment (i.e., simultaneous refinement of camera poses and scene map) for a purely rotating event camera. Starting from first principles, we formulate the problem as a classical non-linear least squares optimization. The photometric error is defined using the event generation model directly in the camera rotations and the semi-dense scene brightness that triggers the events. We leverage the sparsity of event data to design a tractable Levenberg-Marquardt solver that handles the very large number of variables involved. To the best of our knowledge, our method, which we call Event-based Photometric Bundle Adjustment (EPBA), is the first event-only photometric bundle adjustment method that works on the brightness map directly and exploits the space-time characteristics of event data, without having to convert events into image-like representations. Comprehensive experiments on both synthetic and real-world datasets demonstrate EPBA's effectiveness in decreasing the photometric error (by up to 90%), yielding results of unparalleled quality. The refined maps reveal details that were hidden using prior state-of-the-art rotation-only estimation methods. The experiments on modern high-resolution event cameras show the applicability of EPBA to panoramic imaging in various scenarios (without map initialization, at multiple resolutions, and in combination with other methods, such as IMU dead reckoning or previous event-based rotation estimation methods). We make the source code publicly available. https://github.com/tub-rip/epba

Event-based Mosaicing Bundle Adjustment

Sep 11, 2024Abstract:We tackle the problem of mosaicing bundle adjustment (i.e., simultaneous refinement of camera orientations and scene map) for a purely rotating event camera. We formulate the problem as a regularized non-linear least squares optimization. The objective function is defined using the linearized event generation model in the camera orientations and the panoramic gradient map of the scene. We show that this BA optimization has an exploitable block-diagonal sparsity structure, so that the problem can be solved efficiently. To the best of our knowledge, this is the first work to leverage such sparsity to speed up the optimization in the context of event-based cameras, without the need to convert events into image-like representations. We evaluate our method, called EMBA, on both synthetic and real-world datasets to show its effectiveness (50% photometric error decrease), yielding results of unprecedented quality. In addition, we demonstrate EMBA using high spatial resolution event cameras, yielding delicate panoramas in the wild, even without an initial map. Project page: https://github.com/tub-rip/emba

* 14+11 pages, 11 figures, 10 tables, https://github.com/tub-rip/emba

CMax-SLAM: Event-based Rotational-Motion Bundle Adjustment and SLAM System using Contrast Maximization

Mar 12, 2024Abstract:Event cameras are bio-inspired visual sensors that capture pixel-wise intensity changes and output asynchronous event streams. They show great potential over conventional cameras to handle challenging scenarios in robotics and computer vision, such as high-speed and high dynamic range. This paper considers the problem of rotational motion estimation using event cameras. Several event-based rotation estimation methods have been developed in the past decade, but their performance has not been evaluated and compared under unified criteria yet. In addition, these prior works do not consider a global refinement step. To this end, we conduct a systematic study of this problem with two objectives in mind: summarizing previous works and presenting our own solution. First, we compare prior works both theoretically and experimentally. Second, we propose the first event-based rotation-only bundle adjustment (BA) approach. We formulate it leveraging the state-of-the-art Contrast Maximization (CMax) framework, which is principled and avoids the need to convert events into frames. Third, we use the proposed BA to build CMax-SLAM, the first event-based rotation-only SLAM system comprising a front-end and a back-end. Our BA is able to run both offline (trajectory smoothing) and online (CMax-SLAM back-end). To demonstrate the performance and versatility of our method, we present comprehensive experiments on synthetic and real-world datasets, including indoor, outdoor and space scenarios. We discuss the pitfalls of real-world evaluation and propose a proxy for the reprojection error as the figure of merit to evaluate event-based rotation BA methods. We release the source code and novel data sequences to benefit the community. We hope this work leads to a better understanding and fosters further research on event-based ego-motion estimation. Project page: https://github.com/tub-rip/cmax_slam

* 22 pages, 20 figures, 8 tables. https://github.com/tub-rip/cmax_slam

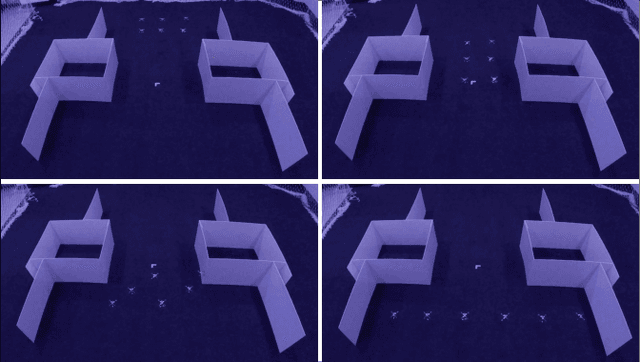

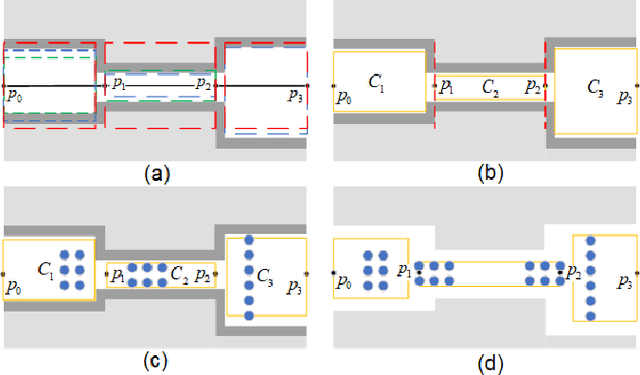

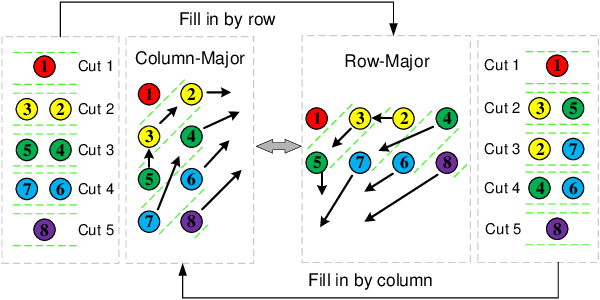

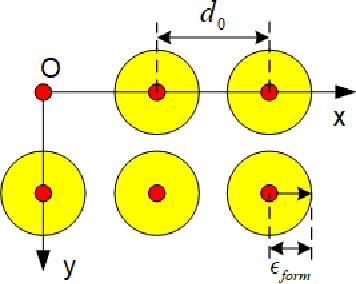

Continuous-time Gaussian Process Trajectory Generation for Multi-robot Formation via Probabilistic Inference

Oct 31, 2020

Abstract:In this paper, we extend a famous motion planning approach GPMP2 to multi-robot cases, yielding a novel centralized trajectory generation method for the multi-robot formation. A sparse Gaussian Process model is employed to represent the continuous-time trajectories of all robots as a limited number of states, which improves computational efficiency due to the sparsity. We add constraints to guarantee collision avoidance between individuals as well as formation maintenance, then all constraints and kinematics are formulated on a factor graph. By introducing a global planner, our proposed method can generate trajectories efficiently for a team of robots which have to get through a width-varying area by adaptive formation change. Finally, we provide the implementation of an incremental replanning algorithm to demonstrate the online operation potential of our proposed framework. The experiments in simulation and real world illustrate the feasibility, efficiency and scalability of our approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge