Etienne Bennequin

Open-Set Likelihood Maximization for Few-Shot Learning

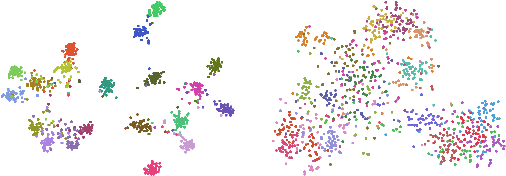

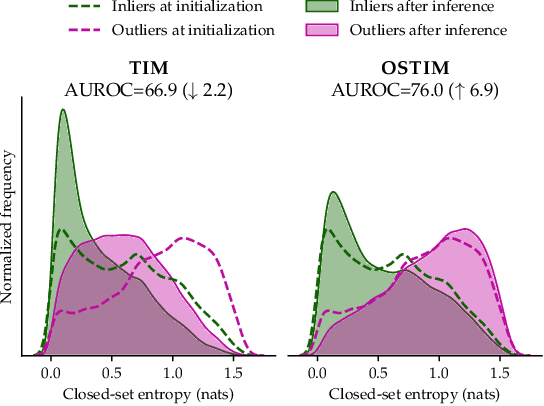

Jan 20, 2023Abstract:We tackle the Few-Shot Open-Set Recognition (FSOSR) problem, i.e. classifying instances among a set of classes for which we only have a few labeled samples, while simultaneously detecting instances that do not belong to any known class. We explore the popular transductive setting, which leverages the unlabelled query instances at inference. Motivated by the observation that existing transductive methods perform poorly in open-set scenarios, we propose a generalization of the maximum likelihood principle, in which latent scores down-weighing the influence of potential outliers are introduced alongside the usual parametric model. Our formulation embeds supervision constraints from the support set and additional penalties discouraging overconfident predictions on the query set. We proceed with a block-coordinate descent, with the latent scores and parametric model co-optimized alternately, thereby benefiting from each other. We call our resulting formulation \textit{Open-Set Likelihood Optimization} (OSLO). OSLO is interpretable and fully modular; it can be applied on top of any pre-trained model seamlessly. Through extensive experiments, we show that our method surpasses existing inductive and transductive methods on both aspects of open-set recognition, namely inlier classification and outlier detection.

Model-Agnostic Few-Shot Open-Set Recognition

Jun 18, 2022

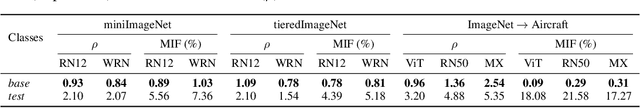

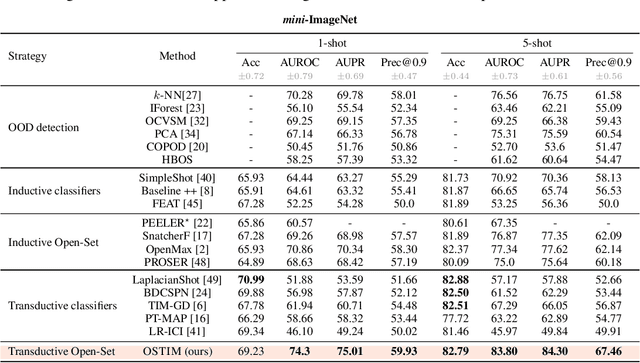

Abstract:We tackle the Few-Shot Open-Set Recognition (FSOSR) problem, i.e. classifying instances among a set of classes for which we only have few labeled samples, while simultaneously detecting instances that do not belong to any known class. Departing from existing literature, we focus on developing model-agnostic inference methods that can be plugged into any existing model, regardless of its architecture or its training procedure. Through evaluating the embedding's quality of a variety of models, we quantify the intrinsic difficulty of model-agnostic FSOSR. Furthermore, a fair empirical evaluation suggests that the naive combination of a kNN detector and a prototypical classifier ranks before specialized or complex methods in the inductive setting of FSOSR. These observations motivated us to resort to transduction, as a popular and practical relaxation of standard few-shot learning problems. We introduce an Open Set Transductive Information Maximization method OSTIM, which hallucinates an outlier prototype while maximizing the mutual information between extracted features and assignments. Through extensive experiments spanning 5 datasets, we show that OSTIM surpasses both inductive and existing transductive methods in detecting open-set instances while competing with the strongest transductive methods in classifying closed-set instances. We further show that OSTIM's model agnosticity allows it to successfully leverage the strong expressive abilities of the latest architectures and training strategies without any hyperparameter modification, a promising sign that architectural advances to come will continue to positively impact OSTIM's performances.

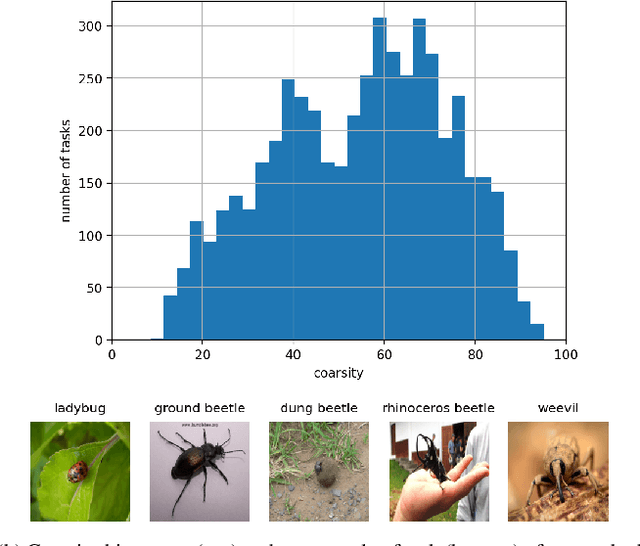

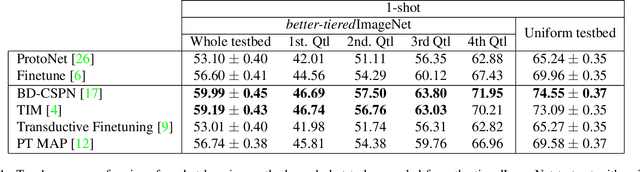

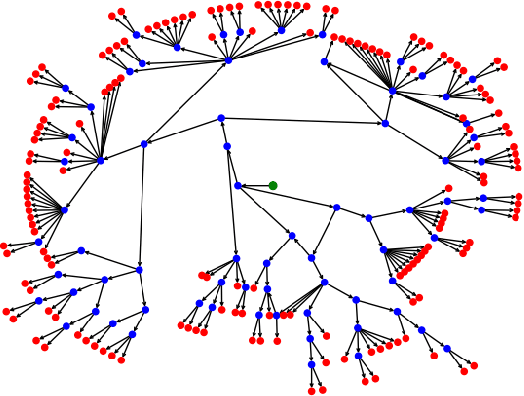

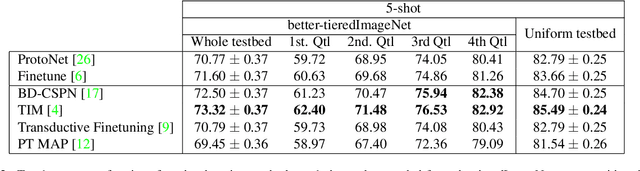

Few-Shot Image Classification Benchmarks are Too Far From Reality: Build Back Better with Semantic Task Sampling

May 10, 2022

Abstract:Every day, a new method is published to tackle Few-Shot Image Classification, showing better and better performances on academic benchmarks. Nevertheless, we observe that these current benchmarks do not accurately represent the real industrial use cases that we encountered. In this work, through both qualitative and quantitative studies, we expose that the widely used benchmark tieredImageNet is strongly biased towards tasks composed of very semantically dissimilar classes e.g. bathtub, cabbage, pizza, schipperke, and cardoon. This makes tieredImageNet (and similar benchmarks) irrelevant to evaluate the ability of a model to solve real-life use cases usually involving more fine-grained classification. We mitigate this bias using semantic information about the classes of tieredImageNet and generate an improved, balanced benchmark. Going further, we also introduce a new benchmark for Few-Shot Image Classification using the Danish Fungi 2020 dataset. This benchmark proposes a wide variety of evaluation tasks with various fine-graininess. Moreover, this benchmark includes many-way tasks (e.g. composed of 100 classes), which is a challenging setting yet very common in industrial applications. Our experiments bring out the correlation between the difficulty of a task and the semantic similarity between its classes, as well as a heavy performance drop of state-of-the-art methods on many-way few-shot classification, raising questions about the scaling abilities of these methods. We hope that our work will encourage the community to further question the quality of standard evaluation processes and their relevance to real-life applications.

Bridging Few-Shot Learning and Adaptation: New Challenges of Support-Query Shift

May 25, 2021

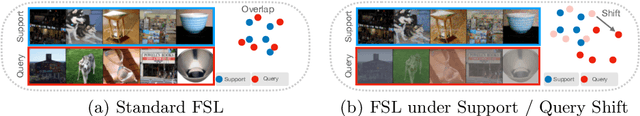

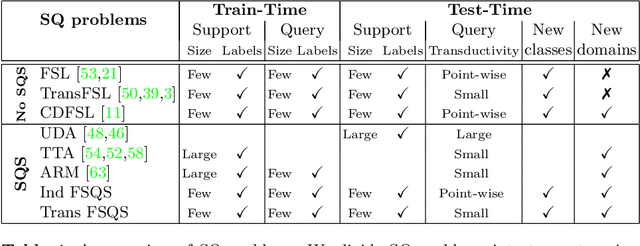

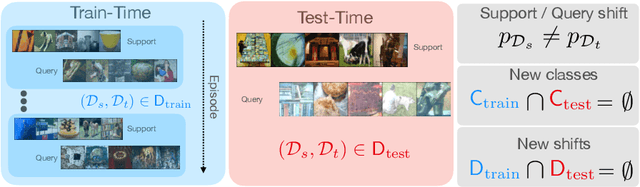

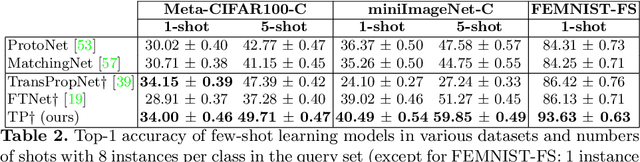

Abstract:Few-Shot Learning (FSL) algorithms have made substantial progress in learning novel concepts with just a handful of labelled data. To classify query instances from novel classes encountered at test-time, they only require a support set composed of a few labelled samples. FSL benchmarks commonly assume that those queries come from the same distribution as instances in the support set. However, in a realistic set-ting, data distribution is plausibly subject to change, a situation referred to as Distribution Shift (DS). The present work addresses the new and challenging problem of Few-Shot Learning under Support/Query Shift (FSQS) i.e., when support and query instances are sampled from related but different distributions. Our contributions are the following. First, we release a testbed for FSQS, including datasets, relevant baselines and a protocol for a rigorous and reproducible evaluation. Second, we observe that well-established FSL algorithms unsurprisingly suffer from a considerable drop in accuracy when facing FSQS, stressing the significance of our study. Finally, we show that transductive algorithms can limit the inopportune effect of DS. In particular, we study both the role of Batch-Normalization and Optimal Transport (OT) in aligning distributions, bridging Unsupervised Domain Adaptation with FSL. This results in a new method that efficiently combines OT with the celebrated Prototypical Networks. We bring compelling experiments demonstrating the advantage of our method. Our work opens an exciting line of research by providing a testbed and strong baselines. Our code is available at https://github.com/ebennequin/meta-domain-shift.

Learning to Communicate in Multi-Agent Reinforcement Learning : A Review

Nov 13, 2019

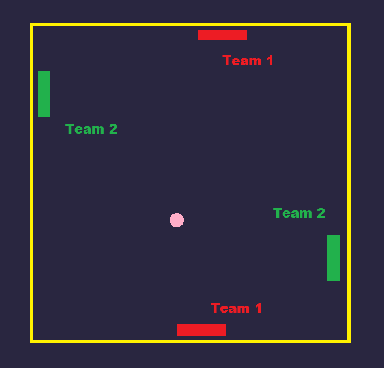

Abstract:We consider the issue of multiple agents learning to communicate through reinforcement learning within partially observable environments, with a focus on information asymmetry in the second part of our work. We provide a review of the recent algorithms developed to improve the agents' policy by allowing the sharing of information between agents and the learning of communication strategies, with a focus on Deep Recurrent Q-Network-based models. We also describe recent efforts to interpret the languages generated by these agents and study their properties in an attempt to generate human-language-like sentences. We discuss the metrics used to evaluate the generated communication strategies and propose a novel entropy-based evaluation metric. Finally, we address the issue of the cost of communication and introduce the idea of an experimental setup to expose this cost in cooperative-competitive game.

Meta-learning algorithms for Few-Shot Computer Vision

Sep 30, 2019

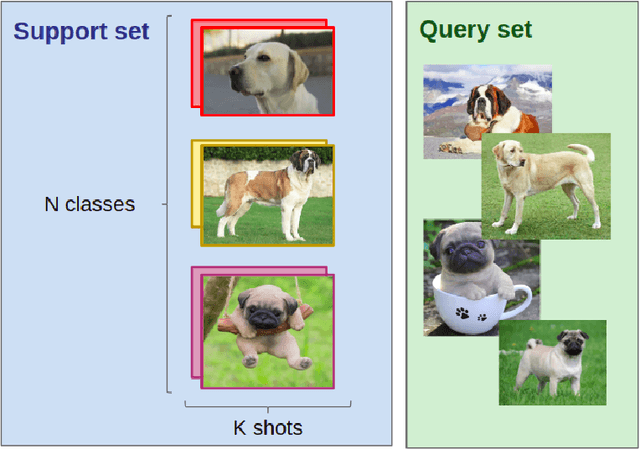

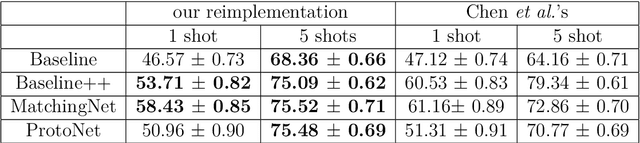

Abstract:Few-Shot Learning is the challenge of training a model with only a small amount of data. Many solutions to this problem use meta-learning algorithms, i.e. algorithms that learn to learn. By sampling few-shot tasks from a larger dataset, we can teach these algorithms to solve new, unseen tasks. This document reports my work on meta-learning algorithms for Few-Shot Computer Vision. This work was done during my internship at Sicara, a French company building image recognition solutions for businesses. It contains: 1. an extensive review of the state-of-the-art in few-shot computer vision; 2. a benchmark of meta-learning algorithms for few-shot image classification; 3. the introduction to a novel meta-learning algorithm for few-shot object detection, which is still in development.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge