Eleonora Grassucci

Closing the gap in multimodal medical representation alignment

Feb 23, 2026

Abstract:In multimodal learning, CLIP has emerged as the de facto approach for mapping different modalities into a shared latent space by bringing semantically similar representations closer while pushing apart dissimilar ones. However, CLIP-based contrastive losses exhibit unintended behaviors that negatively impact true semantic alignment, leading to sparse and fragmented latent spaces. This phenomenon, known as the modality gap, has been partially mitigated for standard text and image pairs but remains unexplored and unresolved in more complex multimodal settings, such as the medical domain. In this work, we study this phenomenon in the latter case, revealing that the modality gap is also present in medical alignment, and we propose a modality-agnostic framework that closes this gap, ensuring that semantically related representations are more aligned, regardless of their source modality. Our method enhances alignment between radiology images and clinical text, improving cross-modal retrieval and image captioning.
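The abstract relies on CLIP's contrastive objective without stating it. For reference, the following is a minimal NumPy sketch of the standard symmetric InfoNCE loss used by CLIP-style models: matching image and text rows in a batch form the positive pairs, and every other row acts as a negative. The function names and the fixed temperature of 0.07 (the value CLIP uses to initialize its learnable temperature) are illustrative choices; this is not the paper's training code.

```python
import numpy as np


def l2_normalize(x: np.ndarray) -> np.ndarray:
    """Project each row onto the unit hypersphere, as CLIP does before scoring."""
    return x / np.linalg.norm(x, axis=1, keepdims=True)


def log_softmax(logits: np.ndarray, axis: int) -> np.ndarray:
    """Numerically stable log-softmax along the given axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))


def clip_loss(img_emb: np.ndarray, txt_emb: np.ndarray, temperature: float = 0.07) -> float:
    """Symmetric InfoNCE loss: row i of one batch is the positive for row i of the other."""
    img = l2_normalize(img_emb)
    txt = l2_normalize(txt_emb)
    logits = img @ txt.T / temperature                         # (batch, batch) scaled cosines
    idx = np.arange(logits.shape[0])
    loss_i2t = -log_softmax(logits, axis=1)[idx, idx].mean()   # image -> text retrieval
    loss_t2i = -log_softmax(logits, axis=0)[idx, idx].mean()   # text -> image retrieval
    return float((loss_i2t + loss_t2i) / 2)


# Usage on synthetic embeddings: matched pairs versus deliberately mismatched pairs.
rng = np.random.default_rng(0)
img = rng.normal(size=(8, 32))
txt = img + 0.1 * rng.normal(size=(8, 32))  # each text row stays close to its image row
print(clip_loss(img, txt), clip_loss(img, txt[::-1]))
```

Swapping the two arguments gives the same value, since the loss averages both retrieval directions.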

Closing the Modality Gap Aligns Group-Wise Semantics

Jan 26, 2026

Abstract:In multimodal learning, CLIP has been recognized as the de facto method for learning a shared latent space across multiple modalities, placing similar representations close to each other and moving them away from dissimilar ones. Although CLIP-based losses effectively align modalities at the semantic level, the resulting latent spaces often remain only partially shared, revealing a structural mismatch known as the modality gap. While the necessity of addressing this phenomenon remains debated, particularly given its limited impact on instance-wise tasks (e.g., retrieval), we prove that its influence is instead strongly pronounced in group-level tasks (e.g., clustering). To support this claim, we introduce a novel method designed to consistently reduce this discrepancy in two-modal settings, with a straightforward extension to the general $n$-modal case. Through our extensive evaluation, we demonstrate our novel insight: while reducing the gap provides only marginal or inconsistent improvements in traditional instance-wise tasks, it significantly enhances group-wise tasks. These findings may reshape our understanding of the modality gap, highlighting its key role in improving performance on tasks requiring semantic grouping.
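One common way to quantify the modality gap in the literature is the Euclidean distance between the centroids of the two modalities' L2-normalized embeddings. The sketch below computes that quantity with NumPy; it is an illustrative metric, not necessarily the one used in the paper, and the function name and synthetic data are hypothetical.

```python
import numpy as np


def modality_gap(emb_a: np.ndarray, emb_b: np.ndarray) -> float:
    """Distance between the centroids of two sets of L2-normalized embeddings.

    emb_a, emb_b: (num_samples, dim) arrays, one row per sample.
    The result lies in [0, 2] because a centroid of unit vectors has norm <= 1.
    """
    a = emb_a / np.linalg.norm(emb_a, axis=1, keepdims=True)  # project onto the unit sphere
    b = emb_b / np.linalg.norm(emb_b, axis=1, keepdims=True)
    return float(np.linalg.norm(a.mean(axis=0) - b.mean(axis=0)))


# Usage on synthetic data: a shared signal shifted in opposite directions per
# modality (large gap) versus two nearly identical views (small gap).
rng = np.random.default_rng(0)
shared = rng.normal(size=(200, 16))
offset = np.zeros(16)
offset[0] = 3.0
gapped = modality_gap(shared + offset, shared - offset)
closed = modality_gap(shared, shared + 0.01 * rng.normal(size=(200, 16)))
print(f"gapped: {gapped:.3f}  closed: {closed:.3f}")
```

A centroid distance says nothing about whether individual pairs are matched correctly, which is consistent with the abstract's point that the gap can matter little for instance-wise retrieval yet still shape how groups of embeddings are arranged.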

Beyond Answers: How LLMs Can Pursue Strategic Thinking in Education

Apr 07, 2025

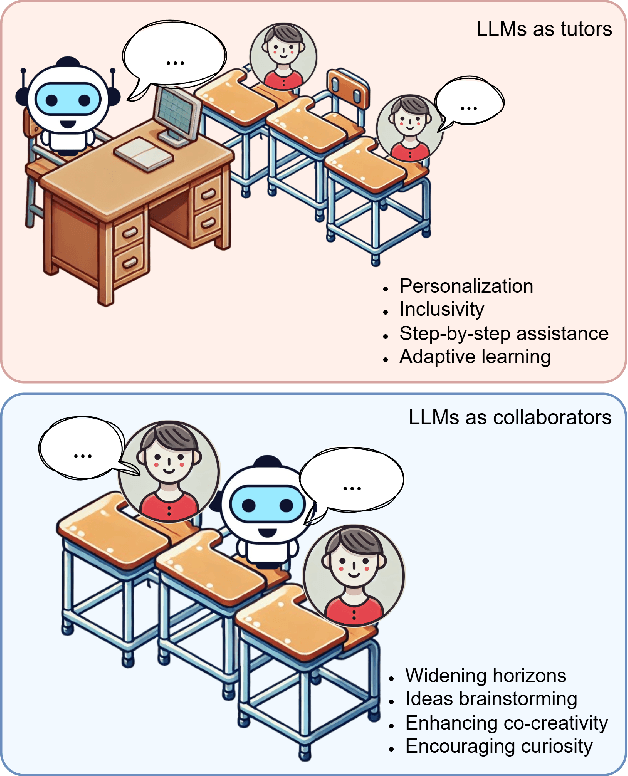

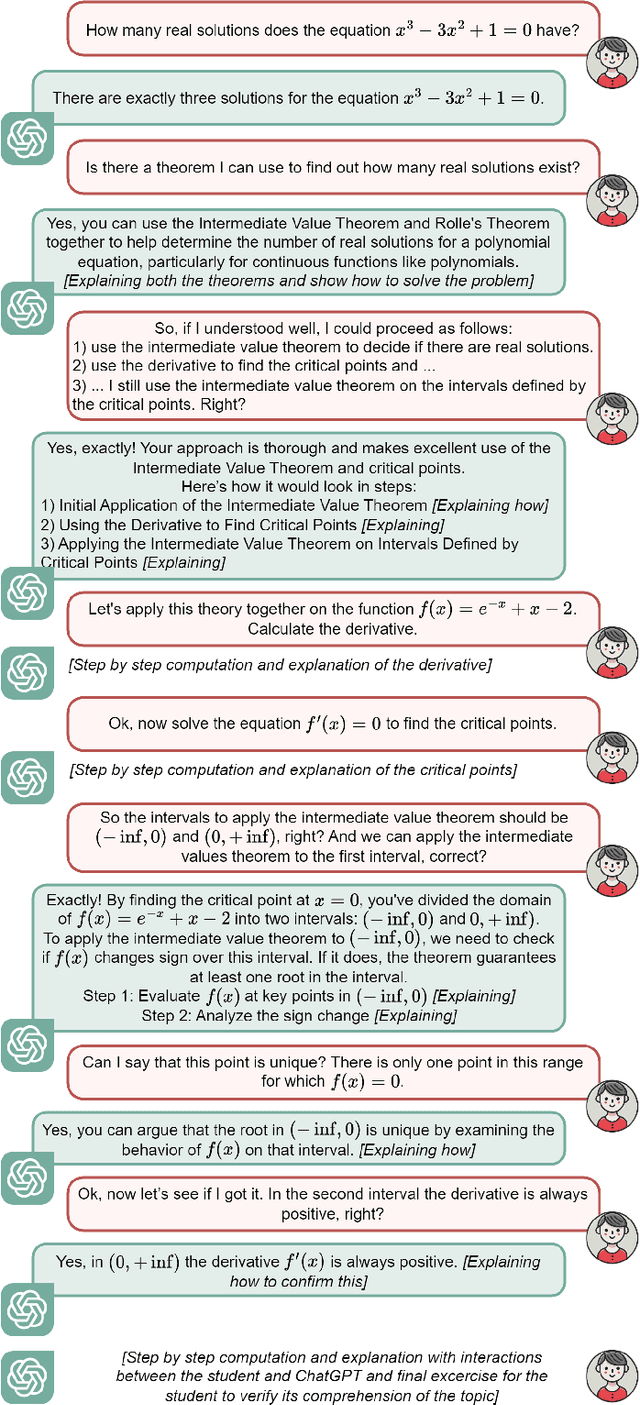

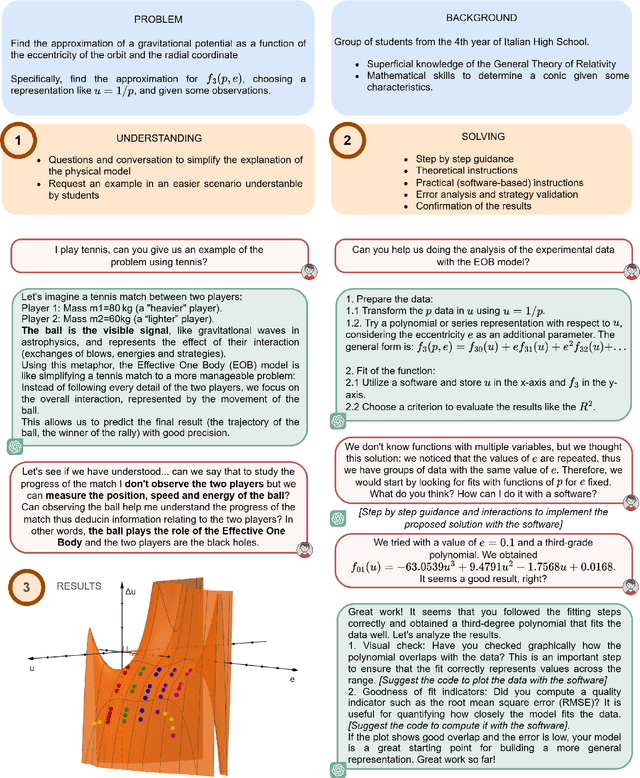

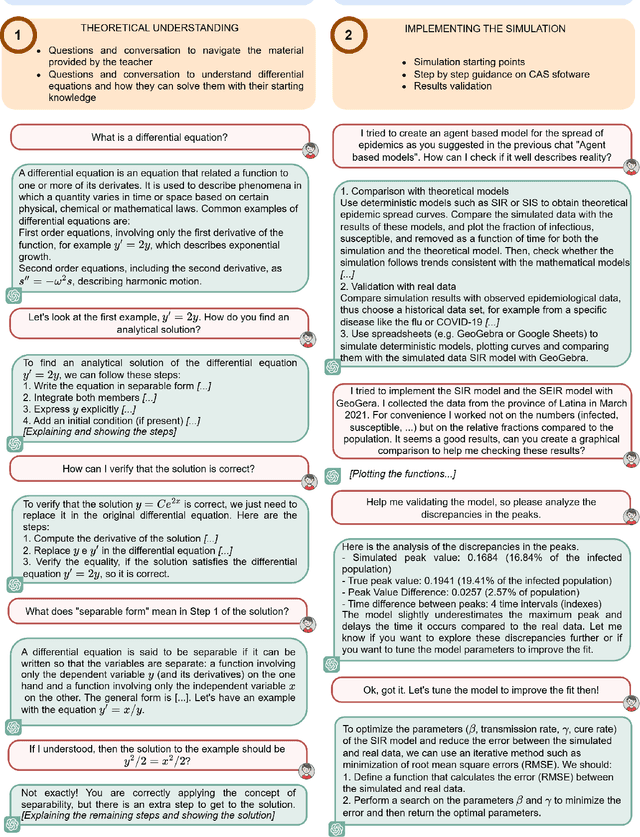

Abstract:Artificial Intelligence (AI) holds transformative potential in education, enabling personalized learning, enhancing inclusivity, and encouraging creativity and curiosity. In this paper, we explore how Large Language Models (LLMs) can act as both patient tutors and collaborative partners to enhance education delivery. As tutors, LLMs personalize learning by offering step-by-step explanations and addressing individual needs, making education more inclusive for students with diverse backgrounds or abilities. As collaborators, they expand students' horizons, supporting them in tackling complex, real-world problems and co-creating innovative projects. However, to fully realize these benefits, LLMs must be leveraged not as tools for providing direct solutions but rather as guides that help students develop problem-solving strategies and find learning paths together. Therefore, a strong emphasis should be placed on educating students and teachers in the effective use of LLMs to ensure their successful integration into classrooms. Through practical examples and real-world case studies, this paper illustrates how LLMs can make education more inclusive and engaging while empowering students to reach their full potential.

Gramian Multimodal Representation Learning and Alignment

Dec 16, 2024

Abstract:Human perception integrates multiple modalities, such as vision, hearing, and language, into a unified understanding of the surrounding reality. While recent multimodal models have achieved significant progress by aligning pairs of modalities via contrastive learning, their solutions are unsuitable when scaling to multiple modalities. These models typically align each modality to a designated anchor without ensuring the alignment of all modalities with each other, leading to suboptimal performance in tasks requiring a joint understanding of multiple modalities. In this paper, we structurally rethink the conventional pairwise approach to multimodal learning and present the novel Gramian Representation Alignment Measure (GRAM), which overcomes the above-mentioned limitations. GRAM learns and then aligns $n$ modalities directly in the higher-dimensional space in which modality embeddings lie by minimizing the Gramian volume of the $k$-dimensional parallelotope spanned by the modality vectors, ensuring the geometric alignment of all modalities simultaneously. GRAM can replace cosine similarity in any downstream method, holds for 2 to $n$ modalities, and provides more meaningful alignment than previous similarity measures. The novel GRAM-based contrastive loss function enhances the alignment of multimodal models in the higher-dimensional embedding space, leading to new state-of-the-art performance in downstream tasks such as video-audio-text retrieval and audio-video classification. The project page, the code, and the pretrained models are available at https://ispamm.github.io/GRAM/.
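The Gramian volume in the abstract can be computed from the Gram matrix of the modality vectors: for $k$ vectors stacked as the rows of a $k \times d$ matrix $A$ (with $k \le d$), the volume of the parallelotope they span is $\sqrt{\det(A A^\top)}$. Below is a minimal NumPy sketch that normalizes each vector first, as is usual for embeddings; the function name is hypothetical and this is not the released GRAM code. For two unit vectors at angle $\theta$ the volume reduces to $\sin\theta = \sqrt{1 - \cos^2\theta}$, which is why it can stand in for cosine similarity while extending naturally to more than two modalities.

```python
import numpy as np


def gram_volume(vectors: np.ndarray) -> float:
    """Volume of the parallelotope spanned by the rows of `vectors` (shape k x d, k <= d).

    Each row (one embedding per modality) is L2-normalized first, so the result
    lies in [0, 1]: 0 for collinear vectors, 1 for mutually orthogonal ones.
    """
    unit = vectors / np.linalg.norm(vectors, axis=1, keepdims=True)
    gram = unit @ unit.T                      # k x k matrix of pairwise cosines
    det = max(np.linalg.det(gram), 0.0)       # clip tiny negative values from round-off
    return float(np.sqrt(det))


# Usage: three hypothetical modality embeddings (e.g., video, audio, text) in R^4.
rng = np.random.default_rng(0)
anchor = rng.normal(size=4)
aligned = np.stack([anchor, anchor + 0.05 * rng.normal(size=4), anchor + 0.05 * rng.normal(size=4)])
unrelated = rng.normal(size=(3, 4))
print(gram_volume(aligned), gram_volume(unrelated))
```

A small volume means all modality vectors point in nearly the same direction at once, so minimizing it for matching tuples aligns every modality jointly rather than each one only to an anchor.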

Lightweight Diffusion Models for Resource-Constrained Semantic Communication

Oct 03, 2024

Abstract:Recently, generative semantic communication models have proliferated, revolutionizing semantic communication frameworks, improving their performance, and opening the way to novel applications. Despite their impressive ability to regenerate content from the compressed semantic information received, generative models pose crucial challenges for communication systems in terms of high memory footprints and heavy computational load. In this paper, we present a novel Quantized GEnerative Semantic COmmunication framework, Q-GESCO. The core of Q-GESCO is a quantized semantic diffusion model capable of regenerating transmitted images from the received semantic maps while simultaneously reducing computational load and memory footprint thanks to the proposed post-training quantization technique. Q-GESCO is robust to different channel noises and achieves performance comparable to its full-precision counterpart in different scenarios, saving up to 75% memory and 79% floating point operations. This allows resource-constrained devices to exploit the generative capabilities of Q-GESCO, widening the range of applications and systems for generative semantic communication frameworks. The code is available at https://github.com/ispamm/Q-GESCO.
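The abstract does not detail Q-GESCO's quantization scheme or bit width. As a generic illustration of post-training quantization, the sketch below maps trained float32 weights to int8 with a single symmetric per-tensor scale, which by itself shrinks weight storage by 75% (8 bits instead of 32). The function names are hypothetical, and the paper's technique may quantize differently, for example with per-channel scales.

```python
import numpy as np


def quantize_int8(weights: np.ndarray) -> tuple[np.ndarray, float]:
    """Symmetric per-tensor post-training quantization of float32 weights to int8."""
    max_abs = float(np.abs(weights).max())
    scale = max_abs / 127.0 if max_abs > 0 else 1.0   # maps [-max_abs, max_abs] onto [-127, 127]
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q: np.ndarray, scale: float) -> np.ndarray:
    """Recover an approximate float32 tensor from the int8 codes and the scale."""
    return q.astype(np.float32) * scale


# Usage on a random stand-in for a trained weight matrix (no retraining involved).
rng = np.random.default_rng(0)
w = rng.normal(size=(256, 256)).astype(np.float32)
q, s = quantize_int8(w)
memory_saving = 1 - q.nbytes / w.nbytes                 # fraction of bytes saved
max_error = float(np.abs(dequantize(q, s) - w).max())   # bounded by roughly s / 2
print(f"memory saving: {memory_saving:.0%}, max abs error: {max_error:.4f}")
```

Because the rounding error of each weight is at most half a quantization step, the scale (and hence the error) grows with the largest weight magnitude; this is why practical schemes often calibrate or clip the range instead of using the raw maximum.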

Rethinking Multi-User Semantic Communications with Deep Generative Models

May 16, 2024

Abstract:In recent years, novel communication strategies have emerged to address the challenges posed by the growing number of connected devices and the higher quality of transmitted information. Among them, semantic communication has obtained promising results, especially when combined with state-of-the-art deep generative models, such as large language or diffusion models, which can regenerate content from extremely compressed semantic information. However, most of these approaches focus on single-user scenarios, processing the received content at the receiver on top of conventional communication systems. In this paper, we propose to go beyond these methods by developing a novel generative semantic communication framework tailored for multi-user scenarios. This system assigns the channel to users knowing that the lost information can be filled in by a diffusion model at the receivers. Under this innovative perspective, OFDMA systems should not aim to transmit the largest part of the information, but only the bits the generative model needs to semantically regenerate the missing ones. The thorough experimental evaluation shows the capabilities of the novel diffusion model and the effectiveness of the proposed framework, leading towards a GenAI-based next generation of communications.

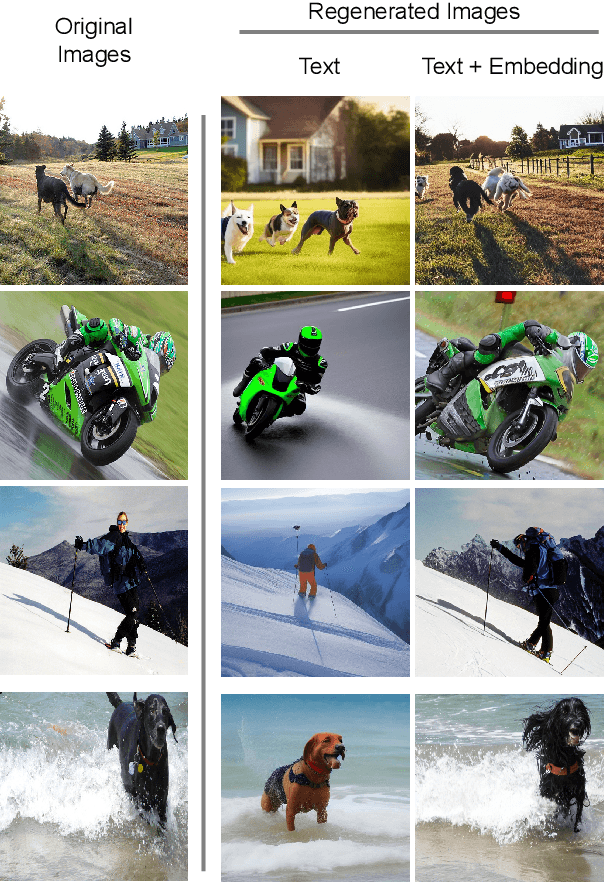

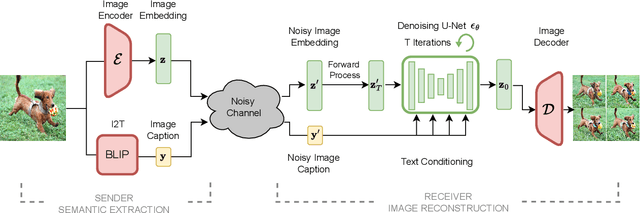

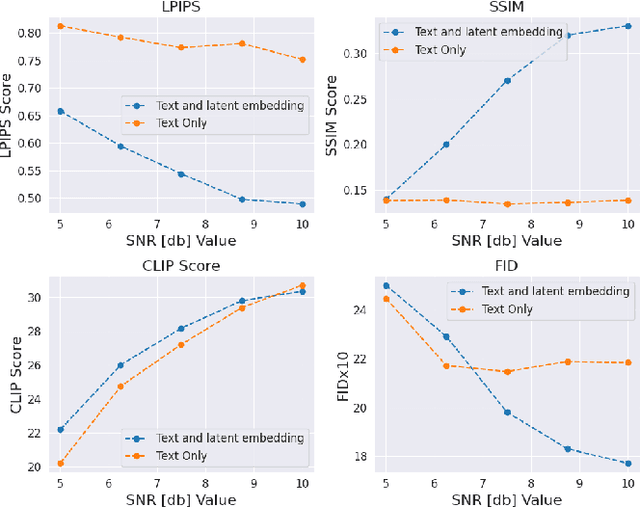

Language-Oriented Semantic Latent Representation for Image Transmission

May 16, 2024

Abstract:In the new paradigm of semantic communication (SC), the focus is on delivering the meaning behind bits by extracting semantic information from raw data. Recent advances in data-to-text models facilitate language-oriented SC, particularly for text-transformed image communication via image-to-text (I2T) encoding and text-to-image (T2I) decoding. However, although semantically aligned, the text is too coarse to precisely capture sophisticated visual features such as spatial locations, color, and texture, incurring a significant perceptual difference between intended and reconstructed images. To address this limitation, we propose a novel language-oriented SC framework that communicates both text and a compressed image embedding and combines them using a latent diffusion model to reconstruct the intended image. Experimental results validate the potential of our approach, which transmits only 2.09% of the original image size while achieving higher perceptual similarity in noisy communication channels than a baseline SC method that communicates only through text. The code is available at https://github.com/ispamm/Img2Img-SC/.

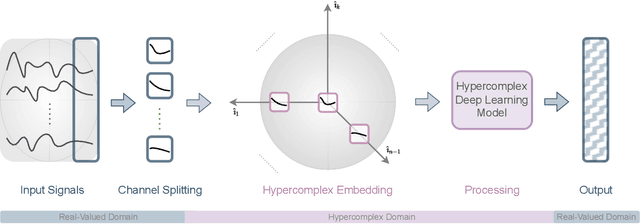

Demystifying the Hypercomplex: Inductive Biases in Hypercomplex Deep Learning

May 11, 2024

Abstract:Hypercomplex algebras have recently been gaining prominence in the field of deep learning owing to the advantages of their division algebras over real vector spaces and their superior results when dealing with multidimensional signals in real-world 3D and 4D paradigms. This paper provides a foundational framework that serves as a roadmap for understanding why hypercomplex deep learning methods are so successful and how their potential can be exploited. Such a theoretical framework is described in terms of inductive bias, i.e., a collection of assumptions, properties, and constraints that are built into training algorithms to guide their learning process toward more efficient and accurate solutions. We show that it is possible to derive specific inductive biases in the hypercomplex domains, which extend complex numbers to encompass diverse numbers and data structures. These biases prove effective in managing the distinctive properties of these domains, as well as the complex structures of multidimensional and multimodal signals. This novel perspective on hypercomplex deep learning promises both to demystify this class of methods and to clarify its potential under a unifying framework, thereby promoting hypercomplex models as viable alternatives to traditional real-valued deep learning for multidimensional signal processing.
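As a concrete instance of an inductive bias imposed by a hypercomplex algebra, the sketch below implements the quaternion Hamilton product and the real block matrix that a quaternion linear layer uses in place of a dense weight. The abstract does not single out this construction; it is chosen here as a standard textbook example, and the function names are hypothetical. Reusing the four component blocks couples the input channels exactly as quaternion multiplication prescribes and cuts the parameter count to a quarter of a dense real layer with the same input and output sizes.

```python
import numpy as np


def hamilton_product(p: np.ndarray, q: np.ndarray) -> np.ndarray:
    """Product p * q of two quaternions given as [real, i, j, k] arrays (non-commutative)."""
    a1, b1, c1, d1 = p
    a2, b2, c2, d2 = q
    return np.array([
        a1 * a2 - b1 * b2 - c1 * c2 - d1 * d2,  # real part
        a1 * b2 + b1 * a2 + c1 * d2 - d1 * c2,  # i part
        a1 * c2 - b1 * d2 + c1 * a2 + d1 * b2,  # j part
        a1 * d2 + b1 * c2 - c1 * b2 + d1 * a2,  # k part
    ])


def quaternion_linear_weight(w_r: np.ndarray, w_i: np.ndarray,
                             w_j: np.ndarray, w_k: np.ndarray) -> np.ndarray:
    """Real block matrix implementing x -> W * x for a quaternion weight W.

    Each (out x in) component block is reused four times, so the layer maps
    4*in real features to 4*out real features with 4*in*out parameters
    instead of the 16*in*out that a dense real layer would need.
    """
    return np.block([
        [w_r, -w_i, -w_j, -w_k],
        [w_i,  w_r, -w_k,  w_j],
        [w_j,  w_k,  w_r, -w_i],
        [w_k, -w_j,  w_i,  w_r],
    ])


# Usage: with 1 x 1 blocks the layer reduces to left multiplication by a single quaternion.
p = np.array([1.0, 2.0, 3.0, 4.0])
q = np.array([5.0, 6.0, 7.0, 8.0])
W = quaternion_linear_weight(*(np.array([[v]]) for v in p))
print(hamilton_product(p, q), W @ q)
```

With $p = 1 + 2i + 3j + 4k$ and $q = 5 + 6i + 7j + 8k$, both expressions give $-60 + 12i + 30j + 24k$, while $q \cdot p$ differs, so the layer encodes an ordering and channel coupling that a generic real matrix would have to learn from data.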

Towards Explaining Hypercomplex Neural Networks

Mar 26, 2024

Abstract:Hypercomplex neural networks are gaining increasing interest in the deep learning community. The attention directed towards hypercomplex models stems from several aspects, ranging from purely theoretical and mathematical characteristics to the practical advantage of lightweight models over conventional networks and their unique ability to capture both global and local relations. In particular, a branch of these architectures, parameterized hypercomplex neural networks (PHNNs), has also gained popularity due to its versatility across a multitude of application domains. Nonetheless, only a few attempts have been made to explain or interpret their intricacies. In this paper, we propose inherently interpretable PHNNs and quaternion-like networks that require no post-hoc method. To achieve this, we define a type of cosine-similarity transform within the parameterized hypercomplex domain. This PHB-cos transform induces weight alignment with relevant input features and allows the model to be reduced to a single linear transform, rendering it directly interpretable. In this work, we begin to draw insights into how this unique branch of neural models operates. We observe that hypercomplex networks tend to concentrate on the shape around the main object of interest, in addition to the shape of the object itself. We provide a thorough analysis, studying single neurons of different layers and comparing them against how real-valued networks learn. The code of the paper is available at https://github.com/ispamm/HxAI.
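The abstract names a PHB-cos transform without defining it. The name suggests a B-cos transform (from B-cos networks) applied to parameterized hypercomplex weights, so the sketch below combines the two under that assumption: a parameterized hypercomplex multiplication (PHM) weight built as a sum of Kronecker products $\sum_i A_i \otimes S_i$, followed by a B-cos-style transform that scales each output by a power of the cosine between the input and the corresponding weight row. Both function names are hypothetical, and the exact PHB-cos formulation in the paper may differ.

```python
import numpy as np


def phm_weight(a: np.ndarray, s: np.ndarray) -> np.ndarray:
    """Parameterized hypercomplex (PHM) weight W = sum_i kron(A_i, S_i).

    a: (n, n, n) stack of learnable "algebra" matrices A_i.
    s: (n, out // n, in // n) stack of learnable weight blocks S_i.
    Returns an (out, in) real matrix built from n**3 + out * in / n parameters.
    """
    return sum(np.kron(a_i, s_i) for a_i, s_i in zip(a, s))


def bcos_linear(x: np.ndarray, w: np.ndarray, b: float = 2.0) -> np.ndarray:
    """B-cos-style transform: output_j = |cos(x, w_j)| ** (b - 1) * (w_hat_j . x).

    With b = 1 this is a plain linear map with unit-norm rows; larger b
    suppresses outputs whose weight row is poorly aligned with the input.
    """
    w_hat = w / np.linalg.norm(w, axis=1, keepdims=True)  # unit-norm weight rows
    lin = w_hat @ x                                        # w_hat_j . x
    cos = lin / (np.linalg.norm(x) + 1e-12)                # cosine between x and each row
    return np.abs(cos) ** (b - 1) * lin


# Usage with n = 4 (a quaternion-sized algebra) and made-up layer sizes.
rng = np.random.default_rng(0)
n, out_dim, in_dim = 4, 8, 12
w = phm_weight(rng.normal(size=(n, n, n)), rng.normal(size=(n, out_dim // n, in_dim // n)))
x = rng.normal(size=in_dim)
y = bcos_linear(x, w)
print(w.shape, y.shape)
```

Because each output equals $|\cos(x, w_j)|^{b-1}\,\hat{w}_j^\top x$, the transform can be rewritten as $W(x)\,x$ with $W(x) = \mathrm{diag}\big(|\cos|^{b-1}\big)\,\hat{W}$, an input-dependent linear map; stacking such layers (without biases) keeps that form, which is the kind of property that lets a model be summarized by a single linear transform for interpretation.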

Generative AI Meets Semantic Communication: Evolution and Revolution of Communication Tasks

Jan 10, 2024

Abstract:While deep generative models are showing exciting abilities in computer vision and natural language processing, their adoption in communication frameworks is still largely underexplored. These methods have been shown to advance solutions to classic communication problems such as denoising, restoration, or compression. Nevertheless, generative models can reveal their real potential in semantic communication frameworks, in which the receiver is not asked to recover the sequence of bits used to encode the transmitted (semantic) message, but only to regenerate content that is semantically consistent with it. Unlocking the capabilities of generative models in semantic communication paves the way for a paradigm shift with respect to conventional communication systems, one with great potential to reduce data traffic and to offer revolutionary versatility for novel tasks and applications that were not even conceivable a few years ago. In this paper, we present a unified perspective on deep generative models in semantic communication and reveal their revolutionary role in future communication frameworks, enabling emerging applications and tasks. Finally, we analyze the challenges and opportunities in developing generative models specifically tailored for communication systems.
