Dhruv Gupta

Hybrid Deep Learning Framework for Classification of Kidney CT Images: Diagnosis of Stones, Cysts, and Tumors

Feb 05, 2025

Abstract: Medical image classification is a vital research area that utilizes advanced computational techniques to improve disease diagnosis and treatment planning. Deep learning models, especially Convolutional Neural Networks (CNNs), have transformed this field by providing automated and precise analysis of complex medical images. This study introduces a hybrid deep learning model that integrates a pre-trained ResNet101 with a custom CNN to classify kidney CT images into four categories: normal, stone, cyst, and tumor. The proposed model leverages feature fusion to enhance classification accuracy, achieving 99.73% training accuracy and 100% testing accuracy. Using a dataset of 12,446 CT images and advanced feature mapping techniques, the hybrid CNN model outperforms standalone ResNet101. This architecture delivers a robust and efficient solution for automated kidney disease diagnosis, providing improved precision, recall, and reduced testing time, making it highly suitable for clinical applications.

TNNGen: Automated Design of Neuromorphic Sensory Processing Units for Time-Series Clustering

Dec 23, 2024

Abstract: Temporal Neural Networks (TNNs), a special class of spiking neural networks, draw inspiration from the neocortex in utilizing spike timings for information processing. Recent works proposed a microarchitecture framework and custom macro suite for designing highly energy-efficient application-specific TNNs. These recent works rely on manual hardware design, a labor-intensive and time-consuming process. Further, there is no open-source functional simulation framework for TNNs. This paper introduces TNNGen, a pioneering effort towards the automated design of TNNs from PyTorch software models to post-layout netlists. TNNGen comprises a novel PyTorch functional simulator (for TNN modeling and application exploration) coupled with a Python-based hardware generator (for PyTorch-to-RTL and RTL-to-Layout conversions). Seven representative TNN designs for time-series signal clustering across diverse sensory modalities are simulated, and their post-layout hardware complexity and design runtimes are assessed to demonstrate the effectiveness of TNNGen. We also highlight TNNGen's ability to accurately forecast silicon metrics without running the hardware process flow.

Evolving Domain Adaptation of Pretrained Language Models for Text Classification

Nov 16, 2023

Abstract: Adapting pre-trained language models (PLMs) for time-series text classification amidst evolving domain shifts (EDS) is critical for maintaining accuracy in applications like stance detection. This study benchmarks the effectiveness of evolving domain adaptation (EDA) strategies, notably self-training, domain-adversarial training, and domain-adaptive pretraining, with a focus on an incremental self-training method. Our analysis across various datasets reveals that this incremental method excels at adapting PLMs to EDS, outperforming traditional domain adaptation techniques. These findings highlight the importance of continually updating PLMs to ensure their effectiveness in real-world applications, paving the way for future research into PLM robustness against the natural temporal evolution of language.

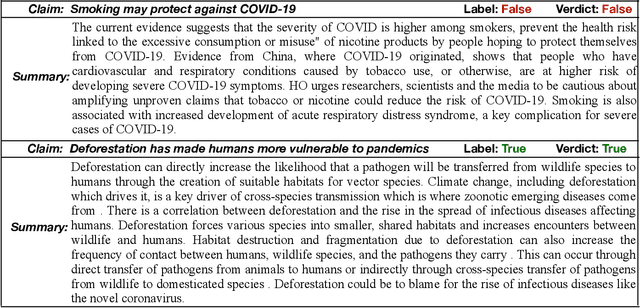

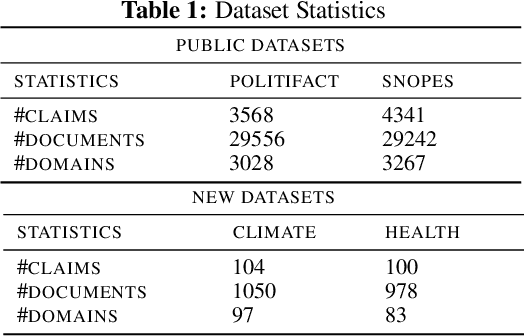

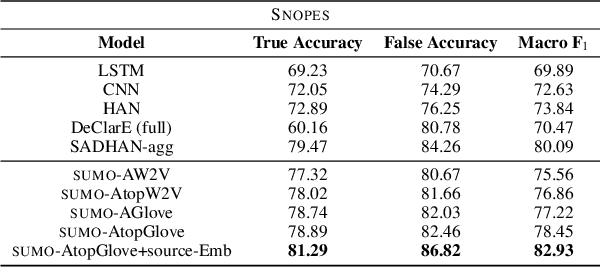

Generating Fact Checking Summaries for Web Claims

Oct 16, 2020

Abstract: We present SUMO, a neural attention-based approach that learns to establish the correctness of textual claims based on evidence in the form of text documents (e.g., news articles or Web documents). SUMO further generates an extractive summary by presenting a diversified set of sentences from the documents that explain its decision on the correctness of the textual claim. Prior approaches to fact checking and evidence extraction have relied on simple concatenation of claim and document word embeddings as input to the claim-driven attention weight computation, in order to extract salient words and sentences from the documents that help establish the correctness of the claim. However, this design of claim-driven attention does not properly capture the contextual information in documents. We improve on the prior art by using claim- and title-guided hierarchical attention to model effective contextual cues. We show the efficacy of our approach on datasets concerning political, healthcare, and environmental issues.

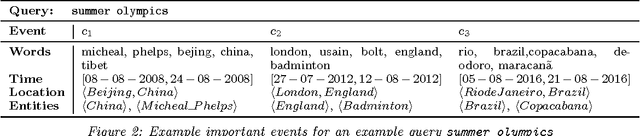

Event Search and Analytics: Detecting Events in Semantically Annotated Corpora for Search and Analytics

Mar 01, 2016

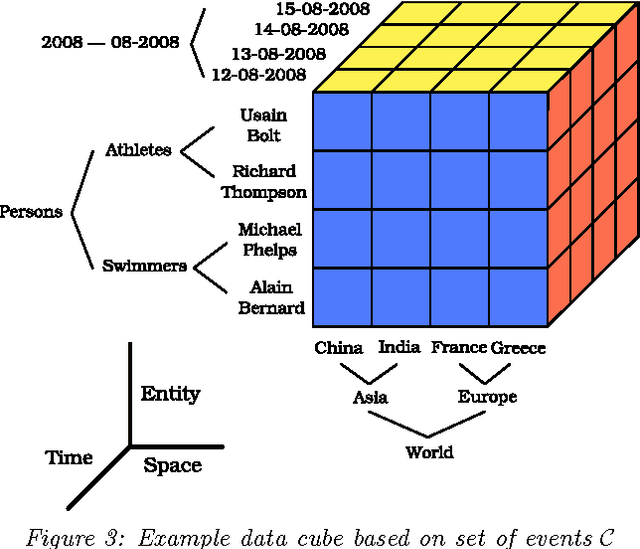

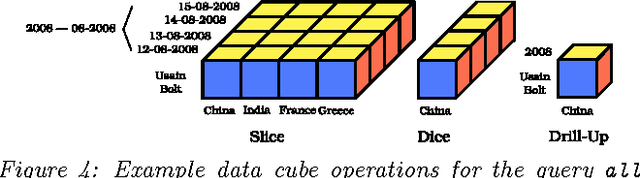

Abstract: In this article, I present the questions that I seek to answer in my PhD research. I propose to analyze natural language text with the help of semantic annotations and to mine important events for navigating large text corpora. Semantic annotations such as named entities, geographic locations, and temporal expressions can help us mine events from the given corpora. These events in turn provide a useful means of discovering the knowledge locked in the corpora. I pose three problems that can help unlock this knowledge in semantically annotated text corpora: i. identifying important events; ii. semantic search; and iii. event analytics.
