Desheng Sun

BSM loss: A superior way in modeling aleatory uncertainty of fine_grained classification

Jun 09, 2022

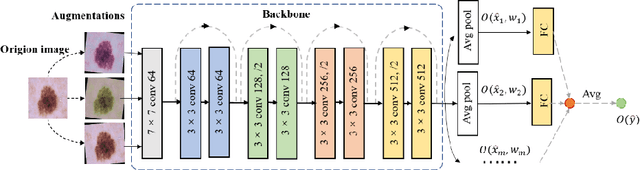

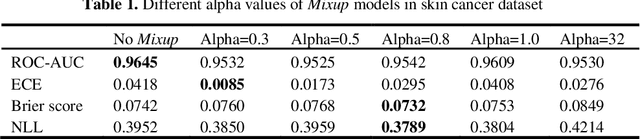

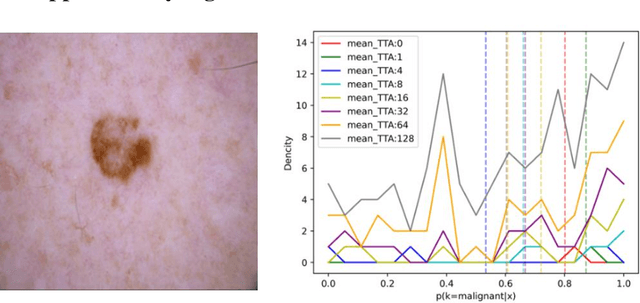

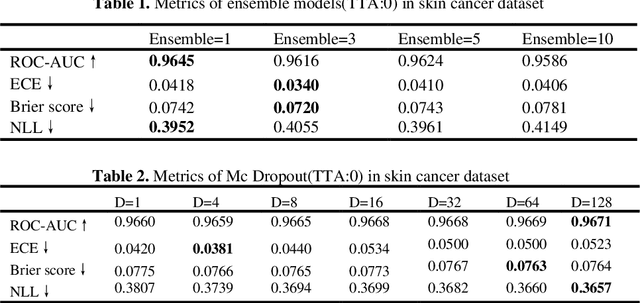

Abstract: Artificial intelligence (AI)-assisted methods have received much attention in high-risk fields such as disease diagnosis. Unlike the classification of disease types, classifying medical images as benign or malignant is a fine-grained task. However, most research focuses only on improving diagnostic accuracy and ignores the evaluation of model reliability, which limits clinical application. In clinical practice, calibration is a major challenge in the low-data regime, especially for over-parametrized models and inherently noisy data. In particular, we found that modeling data-dependent uncertainty is more conducive to confidence calibration. Compared with test-time augmentation (TTA), our proposed modified Bootstrapping loss (BS loss) function with a Mixup data augmentation strategy better calibrates predictive uncertainty and captures data distribution transformation without additional inference time. Our experiments indicate that the BS loss with Mixup (BSM) model can halve the Expected Calibration Error (ECE) compared to standard data augmentation, deep ensemble, and MC dropout. The correlation between uncertainty and similarity of in-domain data reaches -0.4428 under the BSM model. Additionally, the BSM model is able to perceive the semantic distance of out-of-domain data, demonstrating high potential for real-world clinical practice.
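The abstract reports results in terms of Expected Calibration Error (ECE). As a rough illustration of what that metric measures, the sketch below computes the commonly used binned ECE: predictions are grouped by confidence, and the gap between mean confidence and accuracy in each bin is averaged, weighted by bin size. The function name, the choice of 10 equal-width bins, and the left-closed binning convention are assumptions made for this example; the abstract does not state which variant the authors used.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Binned ECE: size-weighted mean of |accuracy - mean confidence| per bin.

    confidences: predicted probability of the chosen class, each in [0, 1].
    correct:     booleans, True where the prediction matched the label.
    n_bins:      number of equal-width bins (assumed value, not from the paper).
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    n = len(confidences)
    if n == 0:
        raise ValueError("at least one prediction is required")

    # Assign each prediction to a bin [k/n_bins, (k+1)/n_bins);
    # a confidence of exactly 1.0 goes into the last bin.
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    ece = 0.0
    for members in bins:
        if not members:
            continue  # empty bins contribute nothing
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / n) * abs(accuracy - mean_conf)
    return ece


if __name__ == "__main__":
    # Four predictions made at 90% confidence, three of them correct.
    print(expected_calibration_error([0.9, 0.9, 0.9, 0.9],
                                     [True, True, True, False]))
```

A lower value means the reported confidence tracks the observed accuracy more closely, which is the sense in which the abstract describes the BSM model as better calibrated.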

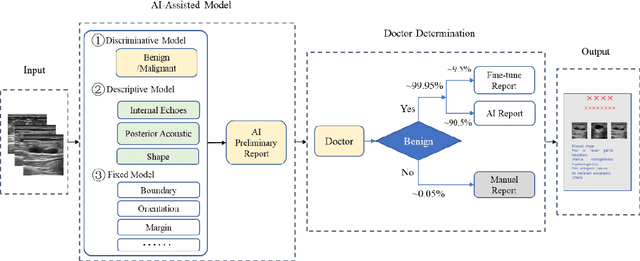

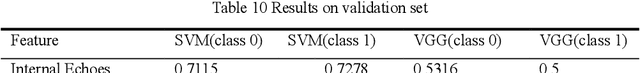

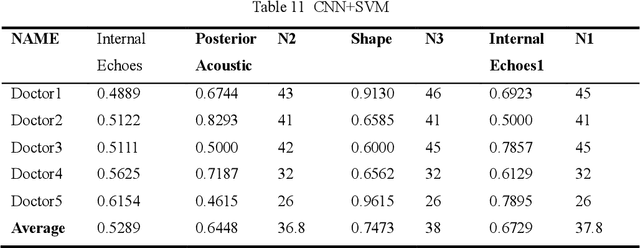

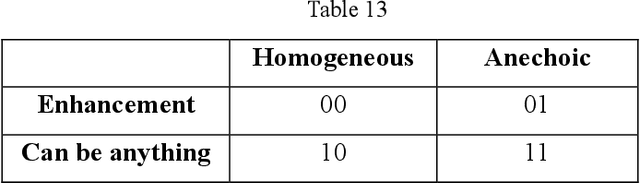

AI assisted method for efficiently generating breast ultrasound screening reports

Jul 28, 2021

Abstract: Ultrasound is the preferred choice for early screening of dense breast cancer. Clinically, doctors have to write the screening report manually, which is time-consuming, laborious, and prone to omissions and errors. Therefore, this paper proposes a method for efficiently generating personalized preliminary breast ultrasound screening reports with AI, especially for benign and normal cases, which account for the majority. Doctors then make simple adjustments or corrections to quickly produce the final reports. The proposed approach has been tested on a database of 1133 breast tumor instances. Experimental results indicate that this pipeline improves doctors' work efficiency by up to 90%, which greatly reduces repetitive work.

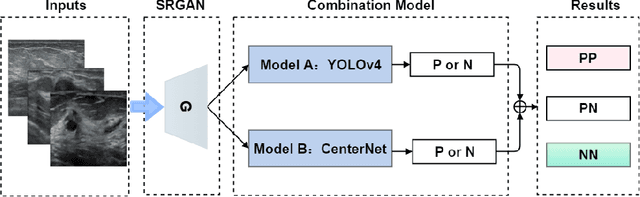

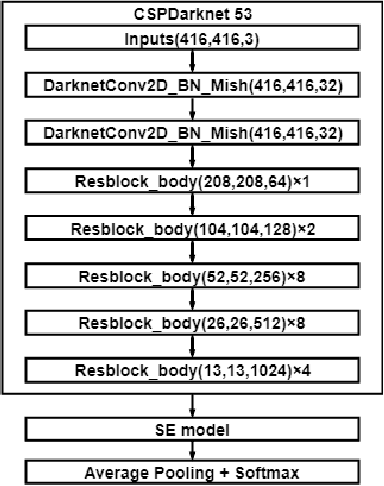

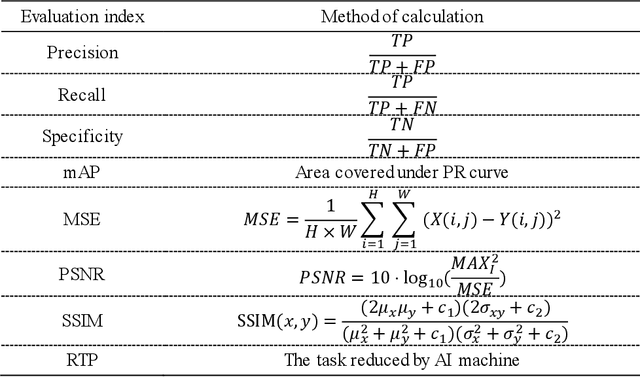

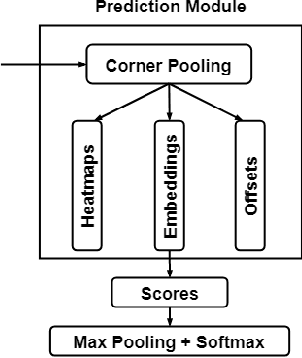

More Reliable AI Solution: Breast Ultrasound Diagnosis Using Multi-AI Combination

Jan 07, 2021

Abstract: Objective: Breast cancer screening is of great significance in contemporary women's health prevention. Existing machines with embedded AI systems do not reach the accuracy that clinicians hope for. How to make intelligent systems more reliable is a common problem. Methods: 1) Ultrasound image super-resolution: the SRGAN super-resolution network reduces the blurriness of ultrasound images caused by the device itself and improves the accuracy and generalization of the detection model. 2) In response to the needs of medical images, we improved the YOLOv4 and CenterNet models. 3) Multi-AI model: based on the respective advantages of different AI models, we employ two AI models to cross-validate clinical results. We accept results on which the two models agree and refuse the others. Results: 1) With the help of the super-resolution model, the YOLOv4 and CenterNet models increased their mAP scores by 9.6% and 13.8%, respectively. 2) Two methods for transforming the target detection model into a classification model are proposed, and the output is unified in a specified format to make it easy for the multi-AI model to call. 3) In the classification evaluation experiment, the multi-AI model, which combines the YOLOv4 model (sensitivity 57.73%, specificity 90.08%) and the CenterNet model (sensitivity 62.64%, specificity 92.54%), refuses to make judgments on 23.55% of the input data. Correspondingly, the performance improves greatly, to 95.91% sensitivity and 96.02% specificity. Conclusion: Our work makes the AI model more reliable in medical image diagnosis. Significance: 1) The proposed method makes the target detection model more suitable for diagnosing breast ultrasound images. 2) It provides a new idea for artificial intelligence in medical diagnosis, making it more convenient to introduce target detection models from other fields to serve medical lesion screening.
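The accept-on-agreement rule described in the Methods can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function names, the string labels `"malignant"` and `"benign"`, and the toy inputs are all hypothetical. It shows how two per-case classifications are combined, and how sensitivity and specificity are then computed only over the cases the combined model agrees to judge.

```python
def combine_predictions(label_a, label_b):
    """Return the shared label when both models agree, else None (refuse)."""
    return label_a if label_a == label_b else None


def evaluate_with_rejection(preds_a, preds_b, truths, positive="malignant"):
    """Rejection rate over all cases; sensitivity/specificity over accepted cases."""
    tp = fn = tn = fp = rejected = 0
    for a, b, truth in zip(preds_a, preds_b, truths):
        decision = combine_predictions(a, b)
        if decision is None:
            rejected += 1          # the two models disagree: no judgment is made
        elif truth == positive:
            if decision == positive:
                tp += 1
            else:
                fn += 1
        else:
            if decision == positive:
                fp += 1
            else:
                tn += 1
    total = len(truths)
    return {
        "rejection_rate": rejected / total if total else 0.0,
        "sensitivity": tp / (tp + fn) if (tp + fn) else None,
        "specificity": tn / (tn + fp) if (tn + fp) else None,
    }


if __name__ == "__main__":
    # Toy labels for illustration only; these are not data from the paper.
    model_a = ["malignant", "benign", "malignant", "benign"]
    model_b = ["malignant", "benign", "benign", "benign"]
    truth = ["malignant", "benign", "malignant", "malignant"]
    print(evaluate_with_rejection(model_a, model_b, truth))
```

Because disagreements are excluded from the denominator, the accepted subset can score well above either single model, at the cost of leaving the refused cases to a clinician.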

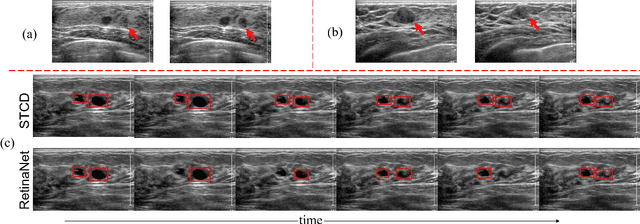

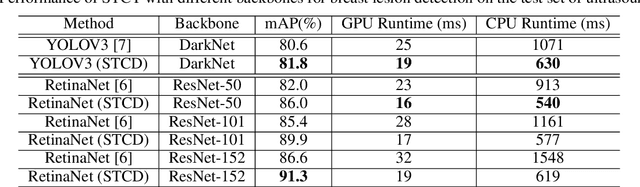

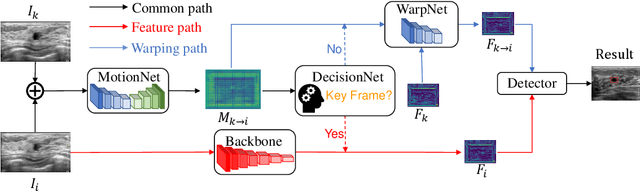

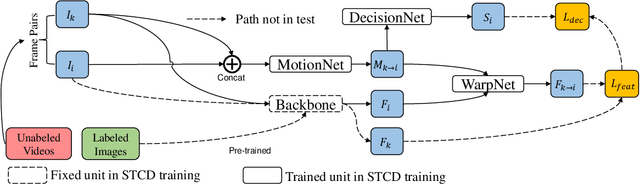

Semi-supervised Breast Lesion Detection in Ultrasound Video Based on Temporal Coherence

Jul 16, 2019

Abstract: Breast lesion detection in ultrasound video is critical for computer-aided diagnosis. However, detecting lesions in video is quite challenging due to blurred lesion boundaries, high similarity to soft tissue, and the lack of video annotations. In this paper, we propose a semi-supervised breast lesion detection method based on temporal coherence that detects lesions more accurately. We aggregate features extracted from historical key frames using an adaptive key-frame scheduling strategy. Our proposed method accomplishes the detection task on unlabeled videos by leveraging supervision from a different set of labeled images. In addition, a new WarpNet is designed to replace both the traditional spatial warping and feature aggregation operations, leading to a tremendous increase in speed. Experiments on 1,060 2D ultrasound sequences demonstrate that our proposed method achieves state-of-the-art video detection results of 91.3% mean average precision at 19 ms per frame on GPU, compared with 86.6% and 32 ms for a RetinaNet-based detection method.
