Dawn M. Tilbury

University of Michigan

Local Minima Prediction using Dynamic Bayesian Filtering for UGV Navigation in Unstructured Environments

May 20, 2025Abstract:Path planning is crucial for the navigation of autonomous vehicles, yet these vehicles face challenges in complex and real-world environments. Although a global view may be provided, it is often outdated, necessitating the reliance of Unmanned Ground Vehicles (UGVs) on real-time local information. This reliance on partial information, without considering the global context, can lead to UGVs getting stuck in local minima. This paper develops a method to proactively predict local minima using Dynamic Bayesian filtering, based on the detected obstacles in the local view and the global goal. This approach aims to enhance the autonomous navigation of self-driving vehicles by allowing them to predict potential pitfalls before they get stuck, and either ask for help from a human, or re-plan an alternate trajectory.

Training Human-Robot Teams by Improving Transparency Through a Virtual Spectator Interface

Mar 12, 2025Abstract:After-action reviews (AARs) are professional discussions that help operators and teams enhance their task performance by analyzing completed missions with peers and professionals. Previous studies that compared different formats of AARs have mainly focused on human teams. However, the inclusion of robotic teammates brings along new challenges in understanding teammate intent and communication. Traditional AAR between human teammates may not be satisfactory for human-robot teams. To address this limitation, we propose a new training review (TR) tool, called the Virtual Spectator Interface (VSI), to enhance human-robot team performance and situational awareness (SA) in a simulated search mission. The proposed VSI primarily utilizes visual feedback to review subjects' behavior. To examine the effectiveness of VSI, we took elements from AAR to conduct our own TR, designed a 1 x 3 between-subjects experiment with experimental conditions: TR with (1) VSI, (2) screen recording, and (3) non-technology (only verbal descriptions). The results of our experiments demonstrated that the VSI did not result in significantly better team performance than other conditions. However, the TR with VSI led to more improvement in the subjects SA over the other conditions.

Sequential Manipulation of Deformable Linear Object Networks with Endpoint Pose Measurements using Adaptive Model Predictive Control

Feb 15, 2024

Abstract:Robotic manipulation of deformable linear objects (DLOs) is an active area of research, though emerging applications, like automotive wire harness installation, introduce constraints that have not been considered in prior work. Confined workspaces and limited visibility complicate prior assumptions of multi-robot manipulation and direct measurement of DLO configuration (state). This work focuses on single-arm manipulation of stiff DLOs (StDLOs) connected to form a DLO network (DLON), for which the measurements (output) are the endpoint poses of the DLON, which are subject to unknown dynamics during manipulation. To demonstrate feasibility of output-based control without state estimation, direct input-output dynamics are shown to exist by training neural network models on simulated trajectories. Output dynamics are then approximated with polynomials and found to contain well-known rigid body dynamics terms. A composite model consisting of a rigid body model and an online data-driven residual is developed, which predicts output dynamics more accurately than either model alone, and without prior experience with the system. An adaptive model predictive controller is developed with the composite model for DLON manipulation, which completes DLON installation tasks, both in simulation and with a physical automotive wire harness.

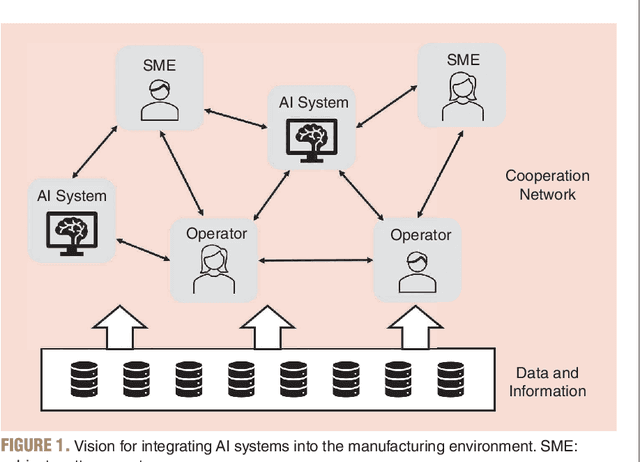

Opportunities and Challenges to Integrate Artificial Intelligence into Manufacturing Systems: Thoughts from a Panel Discussion

Mar 20, 2023

Abstract:Rapid advances in artificial intelligence (AI) have the potential to significantly increase the productivity, quality, and profitability in future manufacturing systems. Traditional mass-production will give way to personalized production, with each item made to order, at the low cost and high-quality consumers have come to expect. Manufacturing systems will have the intelligence to be resilient to multiple disruptions, from small-scale machine breakdowns, to large-scale natural disasters. Products will be made with higher precision and lower variability. While gains have been made towards the development of these factories of the future, many challenges remain to fully realize this vision. To consider the challenges and opportunities associated with this topic, a panel of experts from Industry, Academia, and Government was invited to participate in an active discussion at the 2022 Modeling, Estimation and Control Conference (MECC) held in Jersey City, New Jersey from October 3- 5, 2022. The panel discussion focused on the challenges and opportunities to more fully integrate AI into manufacturing systems. Three overarching themes emerged from the panel discussion. First, to be successful, AI will need to work seamlessly, and in an integrated manner with humans (and vice versa). Second, significant gaps in the infrastructure needed to enable the full potential of AI into the manufacturing ecosystem, including sufficient data availability, storage, and analysis, must be addressed. And finally, improved coordination between universities, industry, and government agencies can facilitate greater opportunities to push the field forward. This article briefly summarizes these three themes, and concludes with a discussion of promising directions.

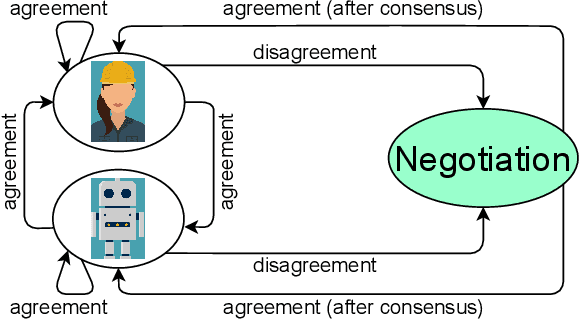

Considerations for Task Allocation in Human-Robot Teams

Oct 06, 2022Abstract:In human-robot teams where agents collaborate together, there needs to be a clear allocation of tasks to agents. Task allocation can aid in achieving the presumed benefits of human-robot teams, such as improved team performance. Many task allocation methods have been proposed that include factors such as agent capability, availability, workload, fatigue, and task and domain-specific parameters. In this paper, selected work on task allocation is reviewed. In addition, some areas for continued and further consideration in task allocation are discussed. These areas include level of collaboration, novel tasks, unknown and dynamic agent capabilities, negotiation and fairness, and ethics. Where applicable, we also mention some of our work on task allocation. Through continued efforts and considerations in task allocation, human-robot teaming can be improved.

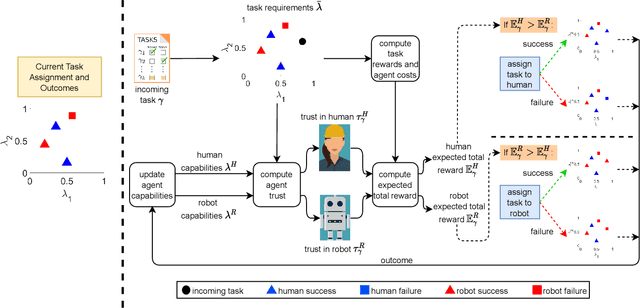

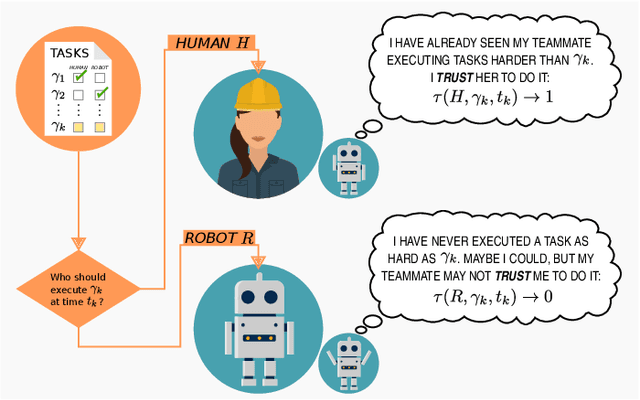

Using Trust for Heterogeneous Human-Robot Team Task Allocation

Oct 08, 2021

Abstract:Human-robot teams have the ability to perform better across various tasks than human-only and robot-only teams. However, such improvements cannot be realized without proper task allocation. Trust is an important factor in teaming relationships, and can be used in the task allocation strategy. Despite the importance, most existing task allocation strategies do not incorporate trust. This paper reviews select studies on trust and task allocation. We also summarize and discuss how a bi-directional trust model can be used for a task allocation strategy. The bi-directional trust model represents task requirements and agents by their capabilities, and can be used to predict trust for both existing and new tasks. Our task allocation approach uses predicted trust in the agent and expected total reward for task assignment. Finally, we present some directions for future work, including the incorporation of trust from the human and human capacity for task allocation, and a negotiation phase for resolving task disagreements.

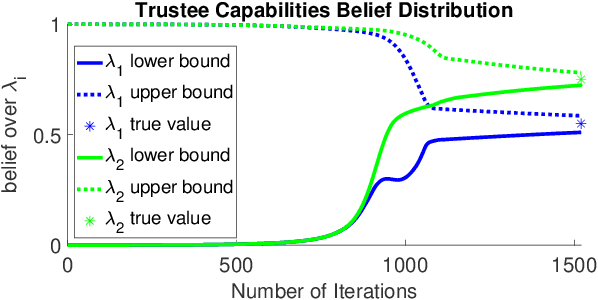

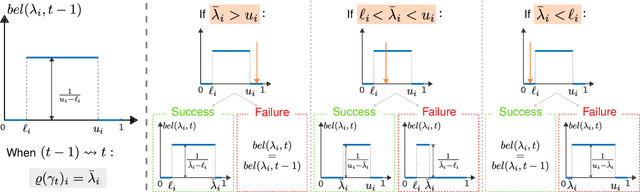

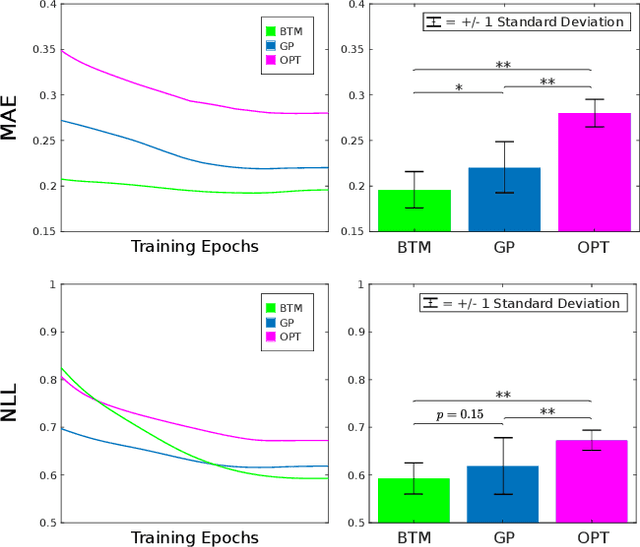

A Unified Bi-directional Model for Natural and Artificial Trust in Human-Robot Collaboration

Jun 04, 2021

Abstract:We introduce a novel capabilities-based bi-directional multi-task trust model that can be used for trust prediction from either a human or a robotic trustor agent. Tasks are represented in terms of their capability requirements, while trustee agents are characterized by their individual capabilities. Trustee agents' capabilities are not deterministic; they are represented by belief distributions. For each task to be executed, a higher level of trust is assigned to trustee agents who have demonstrated that their capabilities exceed the task's requirements. We report results of an online experiment with 284 participants, revealing that our model outperforms existing models for multi-task trust prediction from a human trustor. We also present simulations of the model for determining trust from a robotic trustor. Our model is useful for control authority allocation applications that involve human-robot teams.

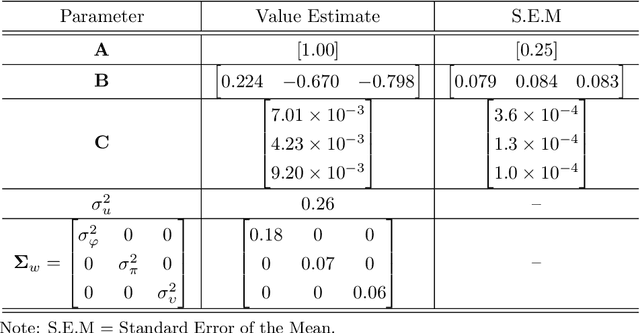

Using Trust in Automation to Enhance Driver-Autonomous Vehicle Interaction and Improve Team Performance

Jun 03, 2021

Abstract:Trust in robots has been gathering attention from multiple directions, as it has special relevance in the theoretical descriptions of human-robot interactions. It is essential for reaching high acceptance and usage rates of robotic technologies in society, as well as for enabling effective human-robot teaming. Researchers have been trying to model the development of trust in robots to improve the overall rapport between humans and robots. Unfortunately, the miscalibration of trust in automation is a common issue that jeopardizes the effectiveness of automation use. It happens when a user's trust levels are not appropriate to the capabilities of the automation being used. Users can be: under-trusting the automation -- when they do not use the functionalities that the machine can perform correctly because of a lack of trust; or over-trusting the automation -- when, due to an excess of trust, they use the machine in situations where its capabilities are not adequate. The main objective of this work is to examine driver's trust development in the ADS. We aim to model how risk factors (e.g.: false alarms and misses from the ADS) and the short-term interactions associated with these risk factors influence the dynamics of drivers' trust in the ADS. The driving context facilitates the instrumentation to measure trusting behaviors, such as drivers' eye movements and usage time of the automated features. Our findings indicate that a reliable characterization of drivers' trusting behaviors and a consequent estimation of trust levels is possible. We expect that these techniques will permit the design of ADSs able to adapt their behaviors to attempt to adjust driver's trust levels. This capability could avoid under- and over-trusting, which could harm their safety or their performance.

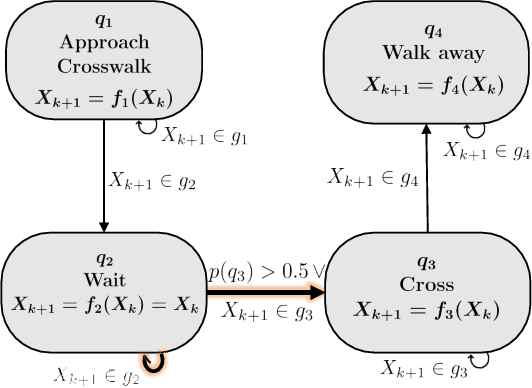

Efficient Behavior-aware Control of Automated Vehicles at Crosswalks using Minimal Information Pedestrian Prediction Model

Mar 22, 2020

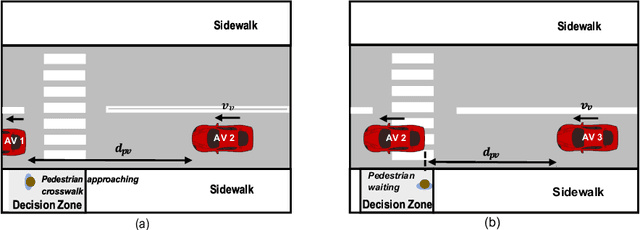

Abstract:For automated vehicles (AVs) to reliably navigate through crosswalks, they need to understand pedestrians crossing behaviors. Simple and reliable pedestrian behavior models aid in real-time AV control by allowing the AVs to predict future pedestrian behaviors. In this paper, we present a Behavior aware Model Predictive Controller (B-MPC) for AVs that incorporates long-term predictions of pedestrian crossing behavior using a previously developed pedestrian crossing model. The model incorporates pedestrians gap acceptance behavior and utilizes minimal pedestrian information, namely their position and speed, to predict pedestrians crossing behaviors. The BMPC controller is validated through simulations and compared to a rule-based controller. By incorporating predictions of pedestrian behavior, the B-MPC controller is able to efficiently plan for longer horizons and handle a wider range of pedestrian interaction scenarios than the rule-based controller. Results demonstrate the applicability of the controller for safe and efficient navigation at crossing scenarios.

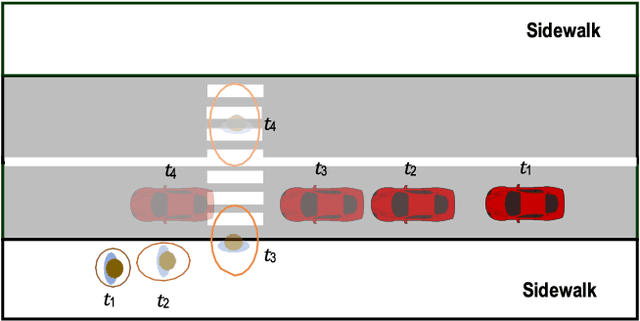

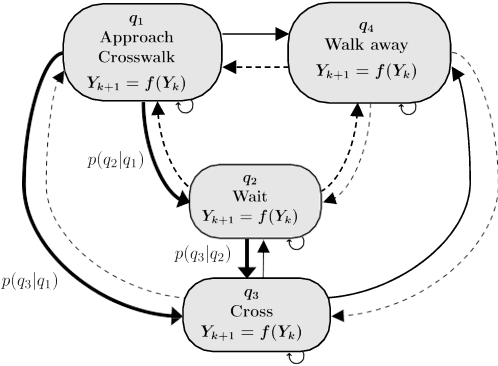

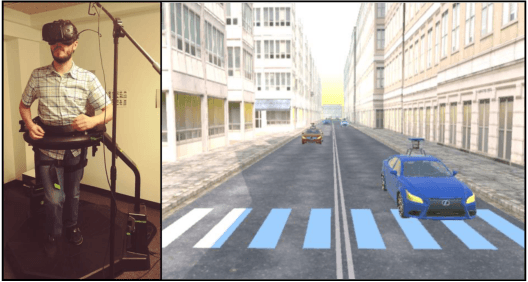

Analysis and Prediction of Pedestrian Crosswalk Behavior during Automated Vehicle Interactions

Mar 22, 2020

Abstract:For safe navigation around pedestrians, automated vehicles (AVs) need to plan their motion by accurately predicting pedestrians trajectories over long time horizons. Current approaches to AV motion planning around crosswalks predict only for short time horizons (1-2 s) and are based on data from pedestrian interactions with human-driven vehicles (HDVs). In this paper, we develop a hybrid systems model that uses pedestrians gap acceptance behavior and constant velocity dynamics for long-term pedestrian trajectory prediction when interacting with AVs. Results demonstrate the applicability of the model for long-term (> 5 s) pedestrian trajectory prediction at crosswalks. Further we compared measures of pedestrian crossing behaviors in the immersive virtual environment (when interacting with AVs) to that in the real world (results of published studies of pedestrians interacting with HDVs), and found similarities between the two. These similarities demonstrate the applicability of the hybrid model of AV interactions developed from an immersive virtual environment (IVE) for real-world scenarios for both AVs and HDVs.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge