Cong T. Nguyen

Generative AI-enabled Blockchain Networks: Fundamentals, Applications, and Case Study

Jan 28, 2024Abstract:Generative Artificial Intelligence (GAI) has recently emerged as a promising solution to address critical challenges of blockchain technology, including scalability, security, privacy, and interoperability. In this paper, we first introduce GAI techniques, outline their applications, and discuss existing solutions for integrating GAI into blockchains. Then, we discuss emerging solutions that demonstrate the effectiveness of GAI in addressing various challenges of blockchain, such as detecting unknown blockchain attacks and smart contract vulnerabilities, designing key secret sharing schemes, and enhancing privacy. Moreover, we present a case study to demonstrate that GAI, specifically the generative diffusion model, can be employed to optimize blockchain network performance metrics. Experimental results clearly show that, compared to a baseline traditional AI approach, the proposed generative diffusion model approach can converge faster, achieve higher rewards, and significantly improve the throughput and latency of the blockchain network. Additionally, we highlight future research directions for GAI in blockchain applications, including personalized GAI-enabled blockchains, GAI-blockchain synergy, and privacy and security considerations within blockchain ecosystems.

Deep Transfer Learning: A Novel Collaborative Learning Model for Cyberattack Detection Systems in IoT Networks

Dec 02, 2021

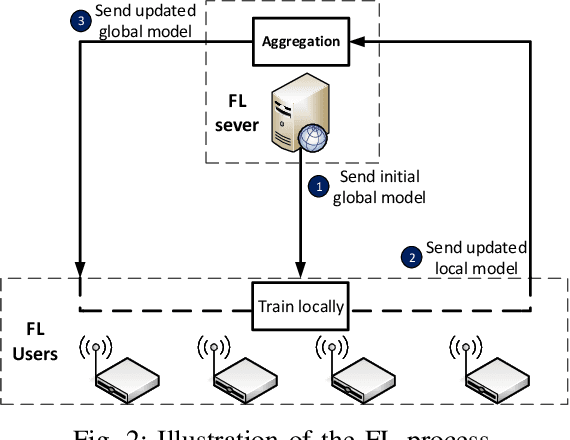

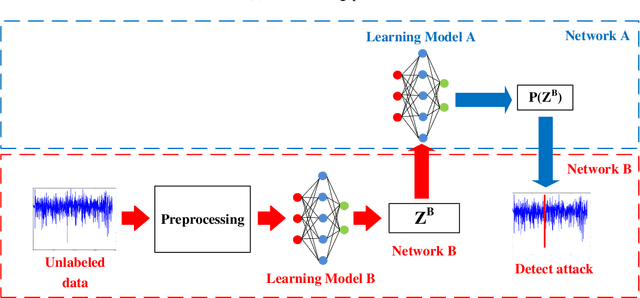

Abstract:Federated Learning (FL) has recently become an effective approach for cyberattack detection systems, especially in Internet-of-Things (IoT) networks. By distributing the learning process across IoT gateways, FL can improve learning efficiency, reduce communication overheads and enhance privacy for cyberattack detection systems. Challenges in implementation of FL in such systems include unavailability of labeled data and dissimilarity of data features in different IoT networks. In this paper, we propose a novel collaborative learning framework that leverages Transfer Learning (TL) to overcome these challenges. Particularly, we develop a novel collaborative learning approach that enables a target network with unlabeled data to effectively and quickly learn knowledge from a source network that possesses abundant labeled data. It is important that the state-of-the-art studies require the participated datasets of networks to have the same features, thus limiting the efficiency, flexibility as well as scalability of intrusion detection systems. However, our proposed framework can address these problems by exchanging the learning knowledge among various deep learning models, even when their datasets have different features. Extensive experiments on recent real-world cybersecurity datasets show that the proposed framework can improve more than 40% as compared to the state-of-the-art deep learning based approaches.

Transfer Learning for Future Wireless Networks: A Comprehensive Survey

Feb 15, 2021

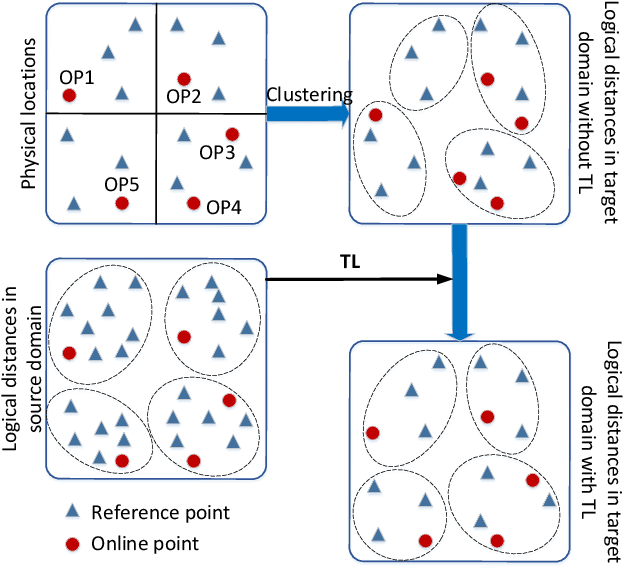

Abstract:With outstanding features, Machine Learning (ML) has been the backbone of numerous applications in wireless networks. However, the conventional ML approaches have been facing many challenges in practical implementation, such as the lack of labeled data, the constantly changing wireless environments, the long training process, and the limited capacity of wireless devices. These challenges, if not addressed, will impede the effectiveness and applicability of ML in future wireless networks. To address these problems, Transfer Learning (TL) has recently emerged to be a very promising solution. The core idea of TL is to leverage and synthesize distilled knowledge from similar tasks as well as from valuable experiences accumulated from the past to facilitate the learning of new problems. Doing so, TL techniques can reduce the dependence on labeled data, improve the learning speed, and enhance the ML methods' robustness to different wireless environments. This article aims to provide a comprehensive survey on applications of TL in wireless networks. Particularly, we first provide an overview of TL including formal definitions, classification, and various types of TL techniques. We then discuss diverse TL approaches proposed to address emerging issues in wireless networks. The issues include spectrum management, localization, signal recognition, security, human activity recognition and caching, which are all important to next-generation networks such as 5G and beyond. Finally, we highlight important challenges, open issues, and future research directions of TL in future wireless networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge