Anaís Garrell

A Survey on Socially Aware Robot Navigation: Taxonomy and Future Challenges

Nov 18, 2023

Abstract:Socially aware robot navigation is gaining popularity with the increase in delivery and assistive robots. The research is further fueled by a need for socially aware navigation skills in autonomous vehicles to move safely and appropriately in spaces shared with humans. Although most of these are ground robots, drones are also entering the field. In this paper, we present a literature survey of the works on socially aware robot navigation in the past 10 years. We propose four different faceted taxonomies to navigate the literature and examine the field from four different perspectives. Through the taxonomic review, we discuss the current research directions and the extending scope of applications in various domains. Further, we put forward a list of current research opportunities and present a discussion on possible future challenges that are likely to emerge in the field.

Continual Learning of Hand Gestures for Human-Robot Interaction

Apr 13, 2023

Abstract:In this paper, we present an efficient method to incrementally learn to classify static hand gestures. This method allows users to teach a robot to recognize new symbols in an incremental manner. Contrary to other works which use special sensors or external devices such as color or data gloves, our proposed approach makes use of a single RGB camera to perform static hand gesture recognition from 2D images. Furthermore, our system is able to incrementally learn up to 38 new symbols using only 5 samples for each old class, achieving a final average accuracy of over 90%. In addition, the incremental training time can be reduced to 10% of the time required when using all available data.

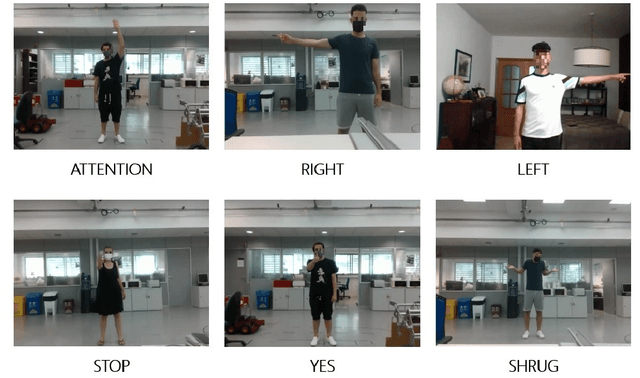

Body Gesture Recognition to Control a Social Robot

Jun 15, 2022

Abstract:In this work, we propose a gesture-based language to allow humans to interact with robots using their body in a natural way. We have created a new gesture detection model using neural networks and a custom dataset of humans performing a set of body gestures to train our network. Furthermore, we compare body gesture communication with other communication channels to show the importance of adding this knowledge to robots. The presented approach is extensively validated in diverse simulations and real-life experiments with non-trained volunteers. It attains remarkable results and shows that it is a valuable framework for social robotics applications, such as human-robot collaboration or human-robot interaction.

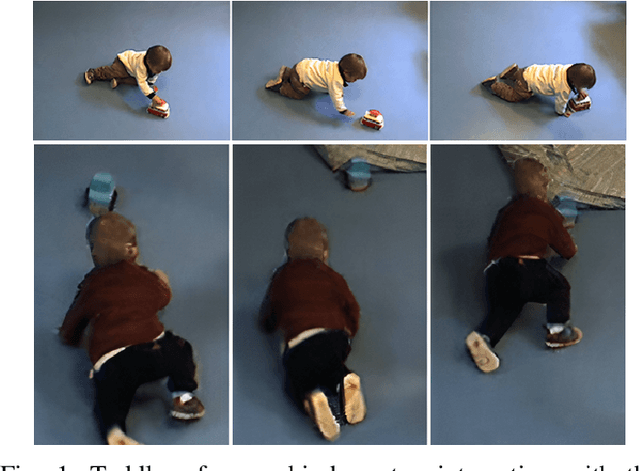

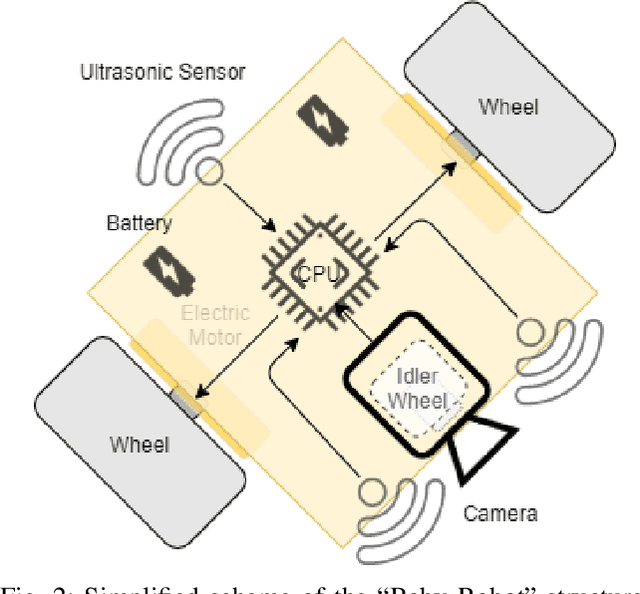

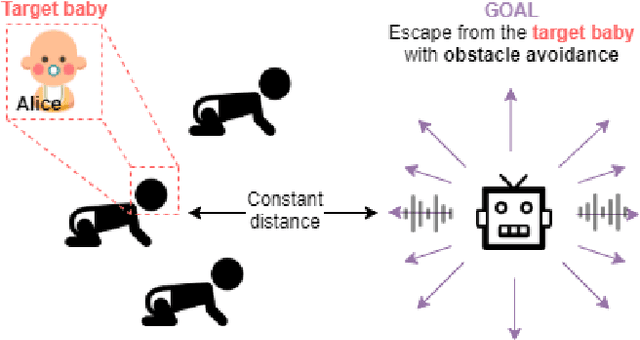

Baby Robot: Improving the Motor Skills of Toddlers

Sep 19, 2021

Abstract:This article introduces "Baby Robot", a robot aiming to improve the motor skills of babies and toddlers. The authors developed a car-like toy that moves autonomously using reinforcement learning and computer vision techniques. The robot's behaviour is to escape from a target baby that has been previously recognized, or at least detected, while avoiding obstacles, so that the safety of the baby is not compromised. A myriad of commercial toys with a similar mobility improvement purpose are on the market; however, none of them offers intelligent autonomous movement, as they perform simple, repetitive trajectories at best. Two crawling toys -- one of them "Baby Robot" -- were tested in a real environment against regular toys in order to check how they improved the toddlers' mobility. These real-life experiments were conducted with our proposed robot in a kindergarten, where a group of children interacted with the toys. A significant improvement in the motion skills of participants was detected.

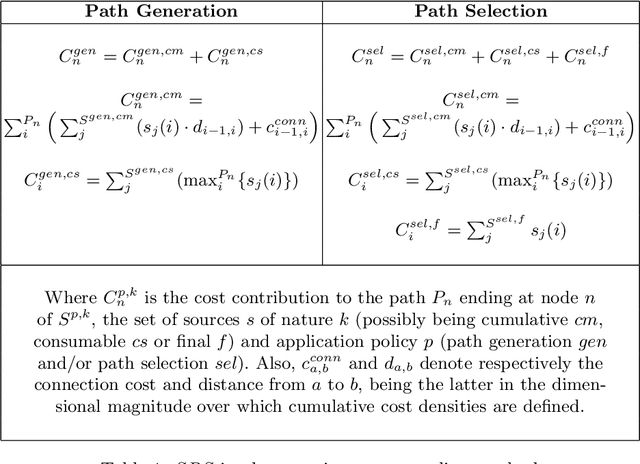

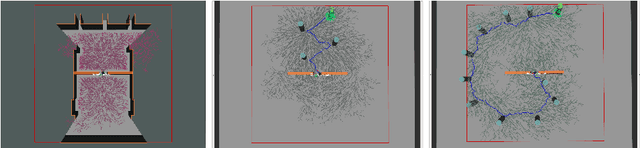

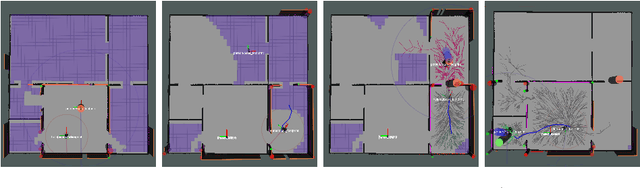

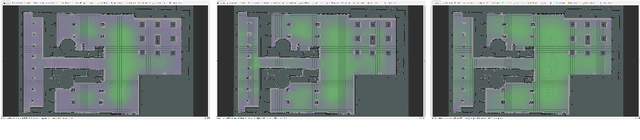

Human-robot Collaborative Navigation Search using Social Reward Sources

Sep 10, 2019

Abstract:This paper proposes a Social Reward Sources (SRS) design for a Human-Robot Collaborative Navigation (HRCN) task: human-robot collaborative search. It is a flexible approach capable of handling the collaborative task, human-robot interaction and environment restrictions, all integrated in a common environment. We modelled task rewards based on unexplored area observability and isolation and evaluated the model through different levels of human-robot communication. The models are validated through quantitative evaluation against both agents' individual performance and qualitative surveying of participants' perception. The three proposed communication levels are then compared against each other using the same metrics.
