Rapid Switching and Multi-Adapter Fusion via Sparse High Rank Adapters


Jul 22, 2024

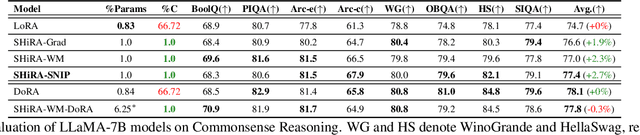

In this paper, we propose Sparse High Rank Adapters (SHiRA), which directly finetune 1-2% of the base model weights while leaving the others unchanged, resulting in a highly sparse adapter. This high sparsity incurs no inference overhead, enables rapid switching directly in the fused mode, and significantly reduces concept loss during multi-adapter fusion. Our extensive experiments on LVMs and LLMs demonstrate that finetuning merely 1-2% of the parameters in the base model is sufficient for many adapter tasks and significantly outperforms Low Rank Adaptation (LoRA). We also show that SHiRA is orthogonal to advanced LoRA methods such as DoRA and can be easily combined with existing techniques.
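To make the idea of switching a sparse adapter in fused mode concrete, here is a minimal sketch in plain Python. It is not the authors' implementation: the flat weight list, the random choice of indices, the random deltas (standing in for learned updates), and the helper names `make_sparse_adapter`, `load_adapter`, and `unload_adapter` are all assumptions made for illustration.

```python
import random

def make_sparse_adapter(num_weights, fraction, seed):
    """Hypothetical helper: choose a small subset of weight indices and give
    each a delta. Real deltas would come from finetuning; these are random."""
    rng = random.Random(seed)
    k = max(1, int(num_weights * fraction))
    indices = rng.sample(range(num_weights), k)
    return {i: rng.uniform(-0.1, 0.1) for i in indices}

def load_adapter(weights, adapter):
    """Fuse the adapter into the weights in place, touching only its indices.
    Returns the overwritten base values so the adapter can be unloaded later."""
    saved = {}
    for i, delta in adapter.items():
        saved[i] = weights[i]
        weights[i] = weights[i] + delta
    return saved

def unload_adapter(weights, saved):
    """Restore the base values that load_adapter overwrote."""
    for i, value in saved.items():
        weights[i] = value

# A toy "model" with 1000 weights; each adapter modifies 2% of them.
base = [0.5] * 1000
weights = list(base)
adapter_a = make_sparse_adapter(len(weights), 0.02, seed=0)
adapter_b = make_sparse_adapter(len(weights), 0.02, seed=1)

# Load adapter A, then switch to adapter B. Each step rewrites only about
# 20 entries instead of re-fusing a dense update over all 1000 weights.
saved_a = load_adapter(weights, adapter_a)
unload_adapter(weights, saved_a)
saved_b = load_adapter(weights, adapter_b)
```

In this sketch, the cost of a switch scales with the number of modified entries rather than with the size of the model, which is the intuition behind rapid switching with a highly sparse adapter. Saving the overwritten base values, rather than subtracting the deltas back out, is a design choice that avoids floating-point drift across repeated switches.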

* Published at ICML 2024 Workshop on Foundation Models in the Wild.

arXiv admin note: substantial text overlap with arXiv:2406.13175
