Deep Neural Network for Blind Visual Quality Assessment of 4K Content

Paper and Code

Jun 09, 2022

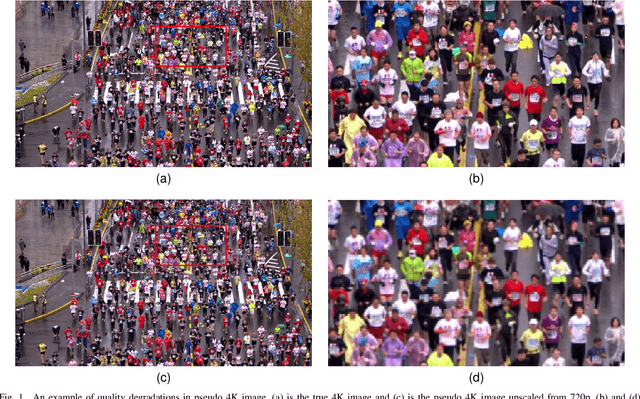

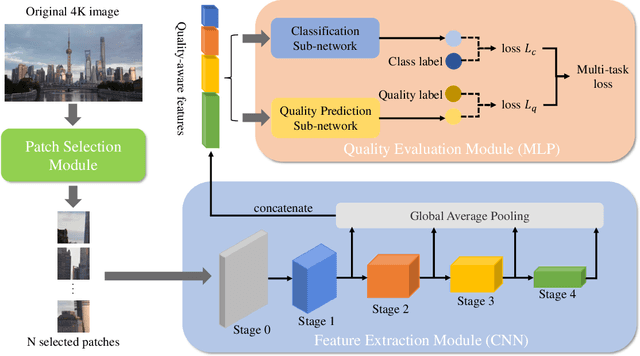

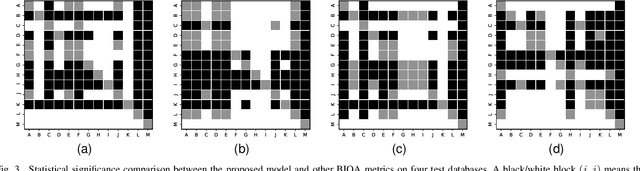

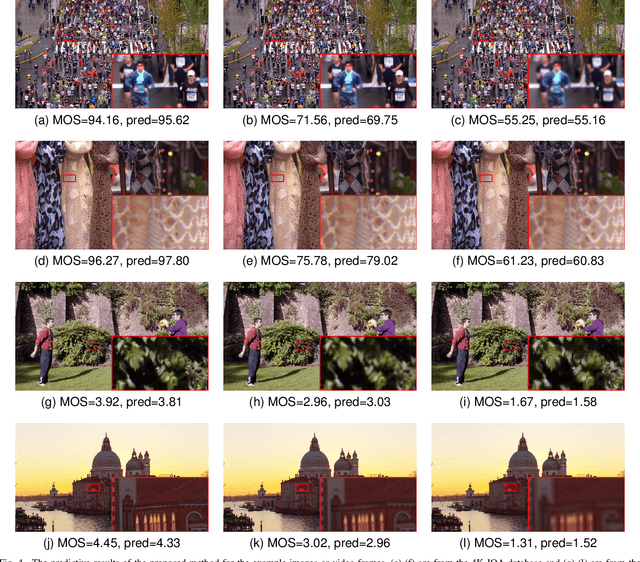

The 4K content can deliver a more immersive visual experience to consumers due to the huge improvement of spatial resolution. However, existing blind image quality assessment (BIQA) methods are not suitable for the original and upscaled 4K contents due to the expanded resolution and specific distortions. In this paper, we propose a deep learning-based BIQA model for 4K content, which on one hand can recognize true and pseudo 4K content and on the other hand can evaluate their perceptual visual quality. Considering the characteristic that high spatial resolution can represent more abundant high-frequency information, we first propose a Grey-level Co-occurrence Matrix (GLCM) based texture complexity measure to select three representative image patches from a 4K image, which can reduce the computational complexity and is proven to be very effective for the overall quality prediction through experiments. Then we extract different kinds of visual features from the intermediate layers of the convolutional neural network (CNN) and integrate them into the quality-aware feature representation. Finally, two multilayer perception (MLP) networks are utilized to map the quality-aware features into the class probability and the quality score for each patch respectively. The overall quality index is obtained through the average pooling of patch results. The proposed model is trained through the multi-task learning manner and we introduce an uncertainty principle to balance the losses of the classification and regression tasks. The experimental results show that the proposed model outperforms all compared BIQA metrics on four 4K content quality assessment databases.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge