Yunseok Kwak

SlimFL: Federated Learning with Superposition Coding over Slimmable Neural Networks

Mar 26, 2022

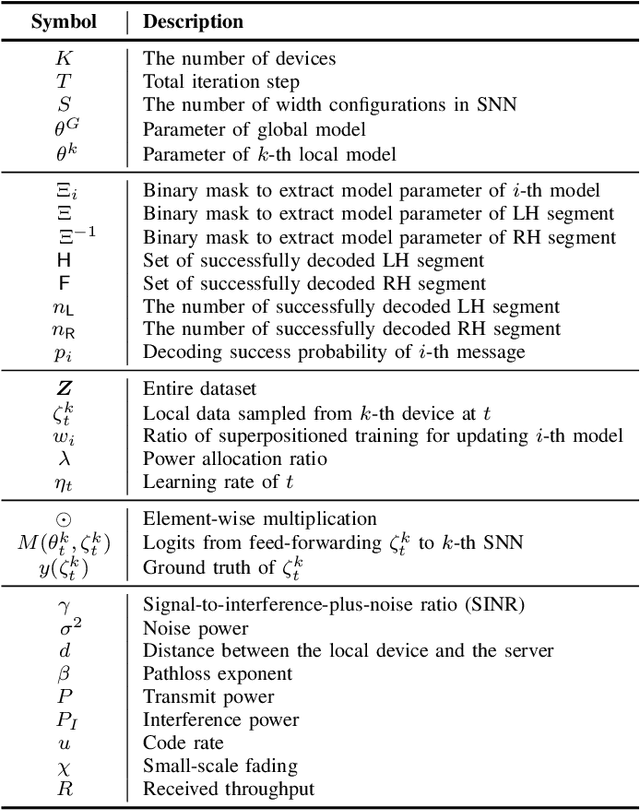

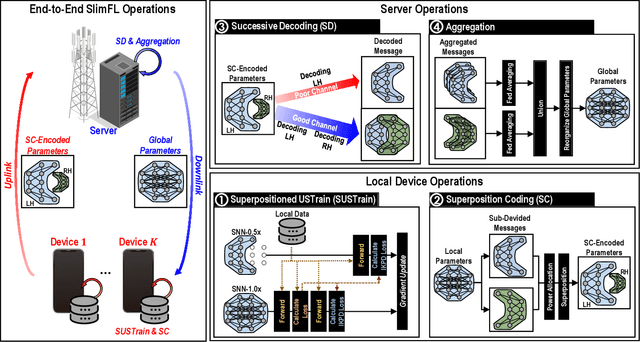

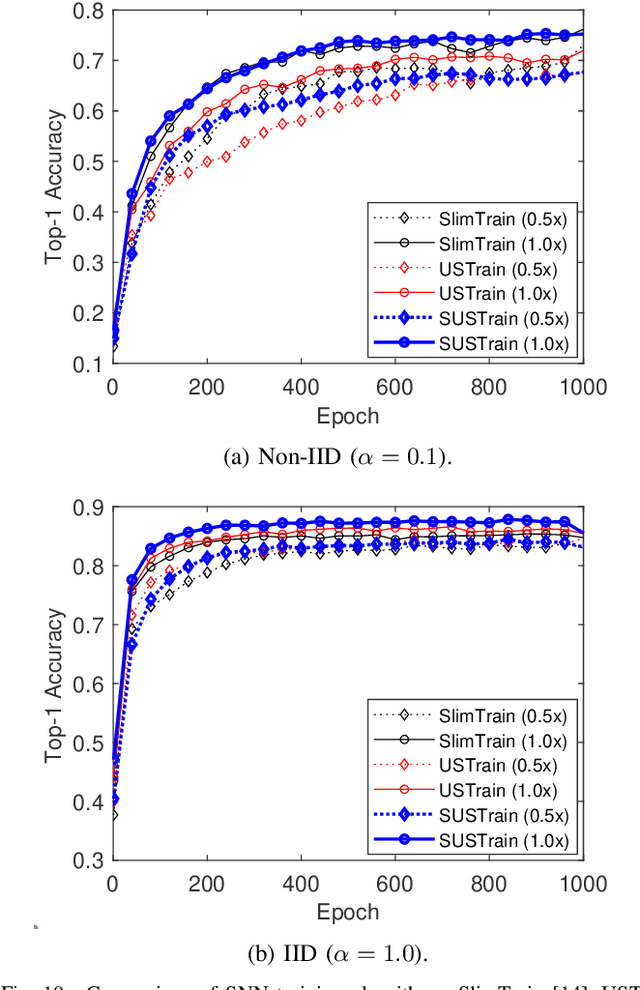

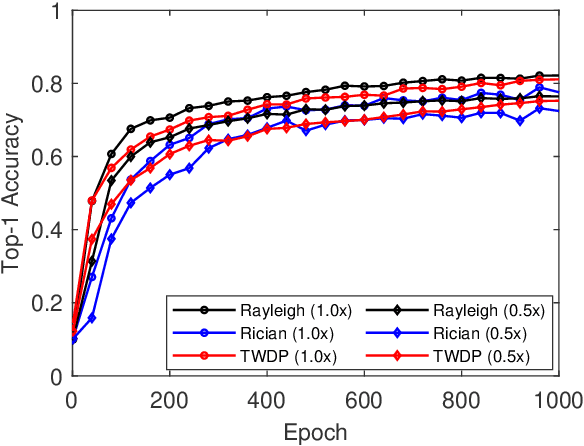

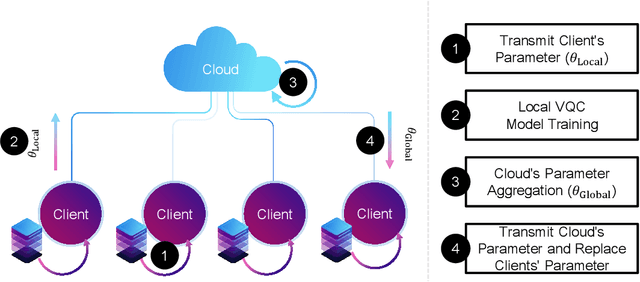

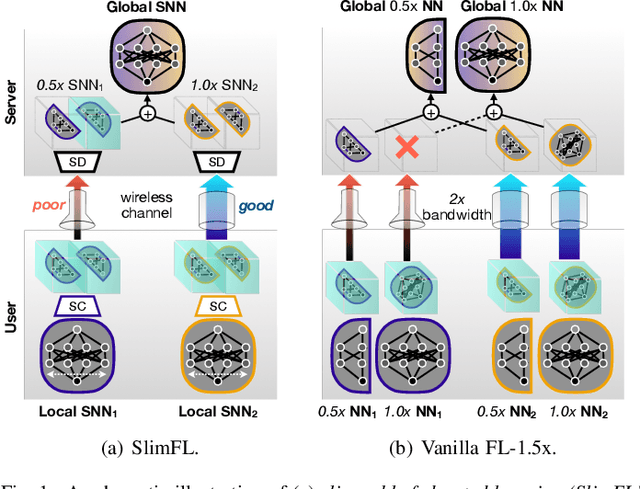

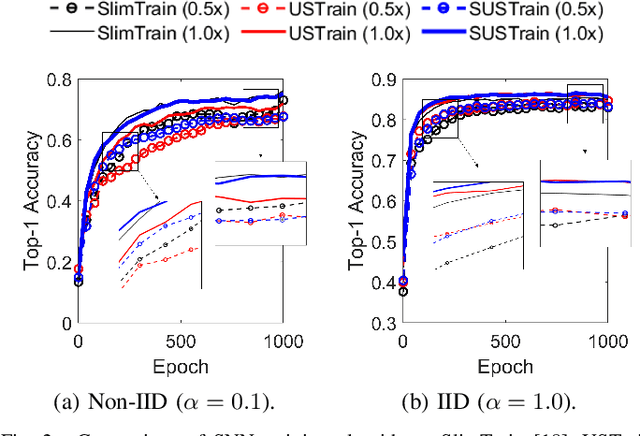

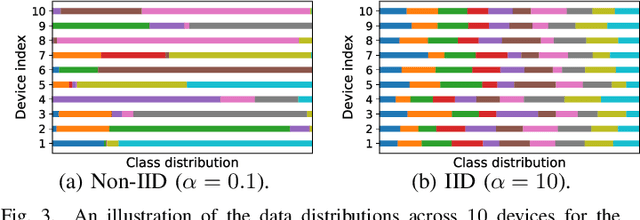

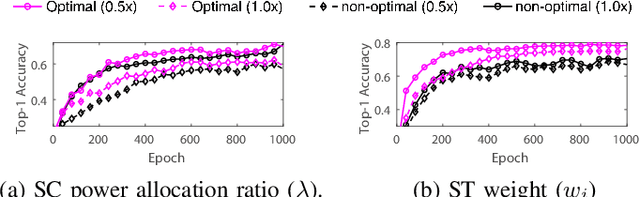

Abstract: Federated learning (FL) is a key enabler for efficient communication and computing, leveraging devices' distributed computing capabilities. However, applying FL in practice is challenging due to local devices' heterogeneous energy levels and wireless channel conditions, as well as non-independent and identically distributed (non-IID) data. To cope with these issues, this paper proposes a novel learning framework that integrates FL with width-adjustable slimmable neural networks (SNNs). Integrating FL with SNNs is challenging due to time-varying channel conditions and data distributions. In addition, existing multi-width SNN training algorithms are sensitive to the data distributions across devices, which makes SNNs ill-suited for FL. Motivated by this, we propose a communication- and energy-efficient SNN-based FL framework (named SlimFL) that jointly utilizes superposition coding (SC) for global model aggregation and superposition training (ST) for updating local models. By applying SC, SlimFL exchanges the superposition of multiple width configurations, of which as many as possible are decoded for a given communication throughput. Leveraging ST, SlimFL aligns the forward propagation of different width configurations while avoiding inter-width interference during backpropagation. We formally prove the convergence of SlimFL. The result reveals that SlimFL is not only communication-efficient but also able to cope with non-IID data distributions and poor channel conditions, which is also corroborated by data-intensive simulations.
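
As a rough illustration of the superposition-coding step described in the abstract, the sketch below assumes a two-width model (0.5x and 1.0x), a flat parameter list whose first half is the narrow sub-model, and a simple SNR threshold rule deciding how much of the superposed signal a device decodes. The names `decoded_params` and `aggregate`, the thresholds, and the per-parameter averaging rule are illustrative assumptions, not the paper's channel model or aggregation algorithm.

```python
from typing import List, Sequence

# Hypothetical layout: the first half of a flat parameter list is the
# half-width (0.5x) sub-model sent as the SC "base" layer; the second half is
# sent as the "enhancement" layer and only exists in the full-width (1.0x) model.

def decoded_params(params: Sequence[float], snr_db: float,
                   base_threshold_db: float, full_threshold_db: float) -> List[float]:
    """Return the prefix of `params` that a receiver decodes at `snr_db`.

    Assumed decoding rule: the base layer is recovered above
    `base_threshold_db`, both layers above `full_threshold_db`.
    """
    half = len(params) // 2
    if snr_db >= full_threshold_db:
        return list(params)         # full-width model decoded
    if snr_db >= base_threshold_db:
        return list(params[:half])  # only the half-width model decoded
    return []                       # nothing decoded this round

def aggregate(uploads: List[List[float]], previous: Sequence[float]) -> List[float]:
    """Average each parameter over the uploads that contain it.

    Parameters that no device delivered keep their value from `previous`,
    the last global model.
    """
    n = len(previous)
    sums = [0.0] * n
    counts = [0] * n
    for upload in uploads:
        for i, value in enumerate(upload):
            sums[i] += value
            counts[i] += 1
    return [s / c if c else p for s, c, p in zip(sums, counts, previous)]
```

For example, if one device decodes the full model and another only the base layer, the first half of the global parameters is averaged over both devices, while the second half comes from the first device alone. A weaker channel thus still contributes to the narrow model instead of being dropped entirely.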

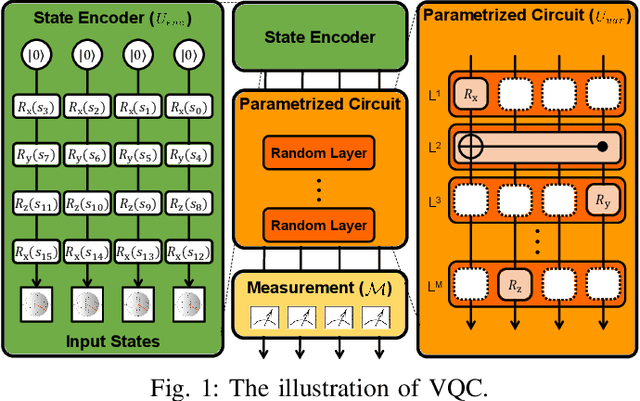

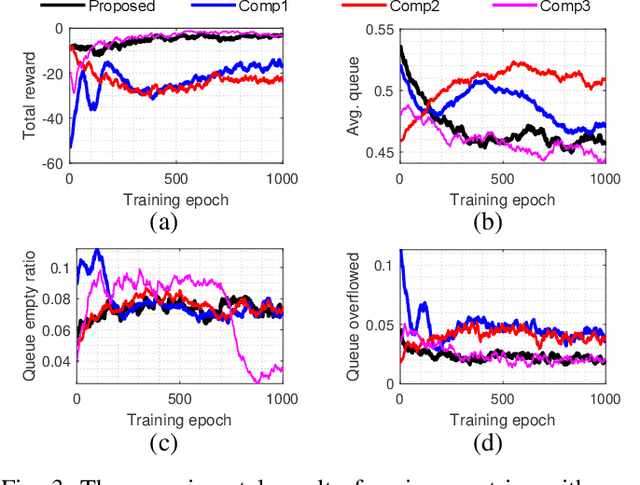

Quantum Multi-Agent Reinforcement Learning via Variational Quantum Circuit Design

Mar 20, 2022

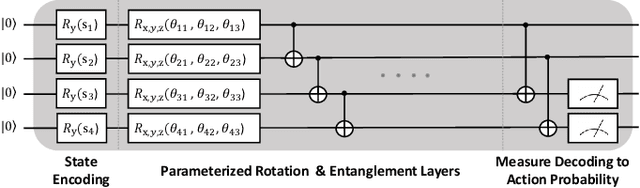

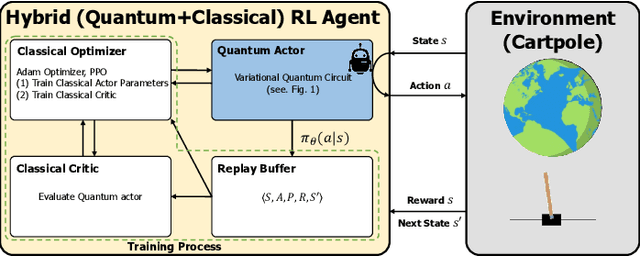

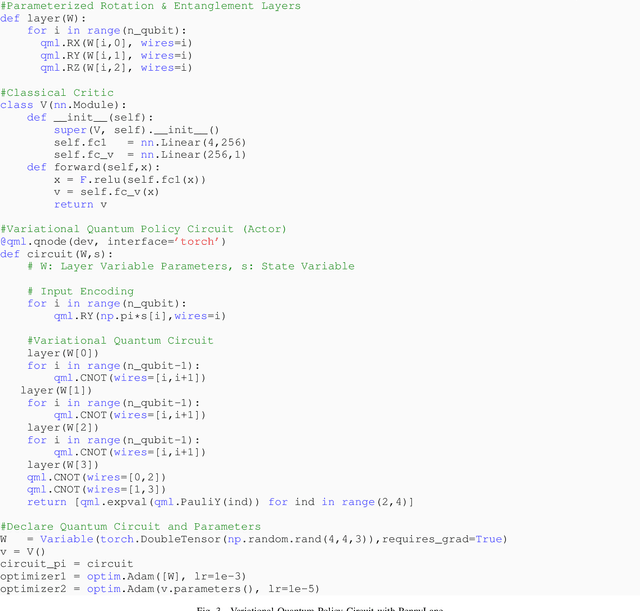

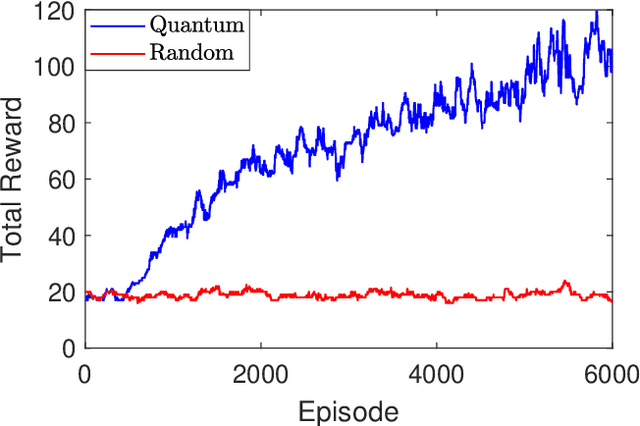

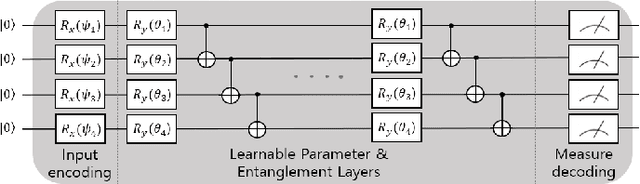

Abstract: In recent years, quantum computing (QC) has attracted considerable attention from industry and academia. In particular, among various QC research topics, variational quantum circuits (VQCs) enable quantum deep reinforcement learning (QRL). Many QRL studies have shown that QRL is superior to classical reinforcement learning (RL) methods under constraints on the number of training parameters. This paper extends QRL to quantum multi-agent RL (QMARL) and demonstrates it. However, extending QRL to QMARL is not straightforward due to the challenges of noisy intermediate-scale quantum (NISQ) devices and the non-stationarity of classical multi-agent RL (MARL). Therefore, this paper proposes a centralized training and decentralized execution (CTDE) QMARL framework, with novel VQCs designed for the framework to cope with these issues. To corroborate the QMARL framework, this paper demonstrates QMARL in a single-hop environment where edge agents offload packets to clouds. The extensive demonstration shows that the proposed QMARL framework improves the total reward by 57.7% compared with classical frameworks.
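
To make the idea of a VQC acting as an agent's policy concrete, here is a minimal one-qubit statevector sketch, assuming an angle-encoded scalar observation, a single trainable RY rotation, and a two-action policy read off the Pauli-Z expectation value. The function names and the mapping p(action 0) = (1 + ⟨Z⟩)/2 are illustrative assumptions; the paper's actual circuits, qubit counts, and CTDE critic are not reproduced here.

```python
import math
from typing import Tuple

Amplitudes = Tuple[float, float]  # real amplitudes of |0> and |1>

def ry(theta: float, state: Amplitudes) -> Amplitudes:
    """Apply RY(theta) = [[cos(t/2), -sin(t/2)], [sin(t/2), cos(t/2)]].

    RY has only real entries, so real amplitudes are sufficient here.
    """
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    a0, a1 = state
    return (c * a0 - s * a1, s * a0 + c * a1)

def expval_z(state: Amplitudes) -> float:
    """Pauli-Z expectation value: |a0|^2 - |a1|^2."""
    a0, a1 = state
    return a0 * a0 - a1 * a1

def vqc_policy(observation: float, theta: float) -> Tuple[float, float]:
    """Hypothetical one-qubit actor: encode, rotate, measure, map to probabilities."""
    state = ry(observation, (1.0, 0.0))  # encoding layer, starting from |0>
    state = ry(theta, state)             # trainable (variational) layer
    z = expval_z(state)                  # lies in [-1, 1]
    p0 = (1.0 + z) / 2.0
    return p0, 1.0 - p0
```

With observation 0 and θ = 0 the qubit stays in |0⟩, so ⟨Z⟩ = 1 and the first action is chosen with probability 1; training would adjust θ to shift this distribution. In a CTDE setup, each agent would execute such an actor locally while a centralized critic is used only during training.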

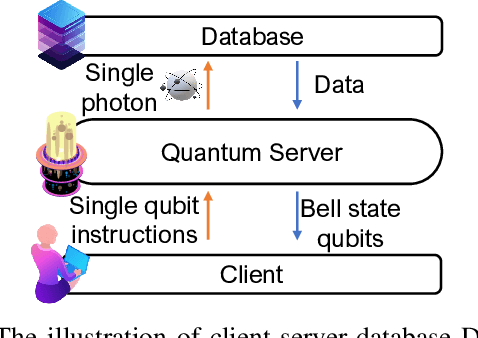

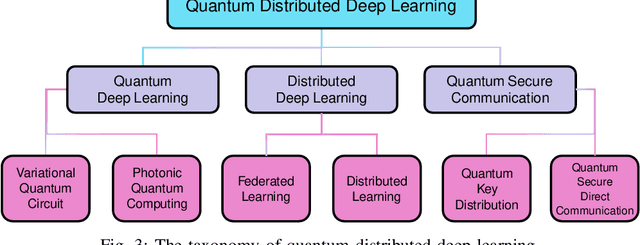

Quantum Heterogeneous Distributed Deep Learning Architectures: Models, Discussions, and Applications

Mar 02, 2022

Abstract: Deep learning (DL) has already become a state-of-the-art technology for various data processing tasks. However, data security and computational overload problems frequently occur because of DL's heavy dependence on data and computational power. To solve these problems, quantum deep learning (QDL) and distributed deep learning (DDL) are emerging to complement existing DL methods by reducing computational overhead and strengthening data security. Furthermore, quantum distributed deep learning (QDDL), a technique that combines these advantages and maximizes them, is in the spotlight. QDL obtains computational gains by replacing deep learning computations on local devices and servers with quantum deep learning. In addition to the advantages of the existing distributed learning structure, QDDL can increase data security by using a quantum secure communication protocol between the server and the client. Although many attempts have been made to confirm and demonstrate these various possibilities, QDDL research is still in its infancy. To introduce and promote this line of research, this paper discusses the model structures studied so far, along with their possibilities and limitations. It also discusses current and future areas of applied research and the possibilities of new methodologies.

Joint Superposition Coding and Training for Federated Learning over Multi-Width Neural Networks

Dec 05, 2021

Abstract: This paper aims to integrate two synergetic technologies: federated learning (FL) and width-adjustable slimmable neural network (SNN) architectures. FL preserves data privacy by exchanging the locally trained models of mobile devices. By adopting SNNs as local models, FL can flexibly cope with the time-varying energy capacities of mobile devices. However, combining FL and SNNs is non-trivial, particularly over wireless connections with time-varying channel conditions. Furthermore, existing multi-width SNN training algorithms are sensitive to the data distributions across devices and are therefore ill-suited to FL. Motivated by this, we propose a communication- and energy-efficient SNN-based FL framework (named SlimFL) that jointly utilizes superposition coding (SC) for global model aggregation and superposition training (ST) for updating local models. By applying SC, SlimFL exchanges the superposition of multiple width configurations, of which as many as possible are decoded for a given communication throughput. Leveraging ST, SlimFL aligns the forward propagation of different width configurations while avoiding inter-width interference during backpropagation. We formally prove the convergence of SlimFL. The result reveals that SlimFL is not only communication-efficient but also able to counteract non-IID data distributions and poor channel conditions, which is also corroborated by simulations.
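
The width-adjustable property that SlimFL builds on can be illustrated with a single dense layer whose narrower configurations reuse a prefix of the full layer's neurons, so a device with less energy can run fewer multiply-adds with the same stored weights. This is a toy sketch: the function name `slimmable_dense`, the rounding rule for the active neuron count, and the plain-list representation are assumptions, and it does not implement the paper's superposition training.

```python
from typing import List, Sequence

def slimmable_dense(x: Sequence[float], weights: Sequence[Sequence[float]],
                    bias: Sequence[float], width: float) -> List[float]:
    """Toy forward pass of a width-adjustable (slimmable) dense layer.

    weights[j] holds the input weights of output neuron j. At width ratio
    `width` in (0, 1], only the first n_active neurons are computed, so a
    narrower configuration shares a prefix of the full layer's parameters.
    """
    if not 0.0 < width <= 1.0:
        raise ValueError("width must be in (0, 1]")
    # Number of active output neurons: nearest integer, but at least one.
    n_active = max(1, round(width * len(weights)))
    return [
        sum(w * xi for w, xi in zip(weights[j], x)) + bias[j]
        for j in range(n_active)
    ]
```

In a multi-layer slimmable network, the next layer would read only the first `n_active` inputs; `zip` already truncates each weight row to the length of `x`, so the same function can be chained across layers of reduced width.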

Introduction to Quantum Reinforcement Learning: Theory and PennyLane-based Implementation

Aug 16, 2021

Abstract: The emergence of quantum computing enables researchers to apply quantum circuits to many existing studies. Using quantum circuits and quantum differentiable programming, much research has been conducted, for example in *Quantum Machine Learning* (QML). In particular, quantum reinforcement learning is a good field for testing the potential of quantum machine learning, and a lot of research is being done on it. This work introduces the concept of quantum reinforcement learning using a variational quantum circuit and confirms its potential through implementation and experimentation. We first present the background knowledge and working principle of quantum reinforcement learning, and then guide the implementation method using the PennyLane library. We also discuss the power and potential of quantum reinforcement learning based on the experimental results obtained in this work.
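
Variational quantum circuits are trained by differentiating expectation values with respect to gate parameters. One standard way to do this, which PennyLane can use as a differentiation method, is the parameter-shift rule: for a Pauli-rotation gate such as RY, the exact gradient is [f(θ + π/2) − f(θ − π/2)] / 2. The sketch below uses only the standard library rather than PennyLane; the one-qubit circuit, the function names, and the learning-rate and step values are illustrative assumptions, not this work's implementation.

```python
import math
from typing import Callable

def circuit_expval(x: float, theta: float) -> float:
    """<Z> after RY(x) then RY(theta) on |0>.

    Rotations about the same axis compose additively, giving RY(x + theta),
    and <Z> of RY(a)|0> is cos(a).
    """
    return math.cos(x + theta)

def parameter_shift_grad(f: Callable[[float, float], float],
                         x: float, theta: float) -> float:
    """d f / d theta via the parameter-shift rule for a Pauli-rotation gate."""
    shift = math.pi / 2
    return (f(x, theta + shift) - f(x, theta - shift)) / 2.0

def train(x: float, target: float, theta: float = 0.0,
          lr: float = 0.2, steps: int = 200) -> float:
    """Gradient descent on (f - target)^2 using only shifted circuit evaluations."""
    for _ in range(steps):
        err = circuit_expval(x, theta) - target
        theta -= lr * 2.0 * err * parameter_shift_grad(circuit_expval, x, theta)
    return theta
```

Unlike a finite-difference estimate, the shifted evaluations give the exact derivative here (−sin(x + θ)), which is why the rule is attractive on hardware where each evaluation is itself a noisy estimate from repeated measurements.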

Quantum Neural Networks: Concepts, Applications, and Challenges

Aug 02, 2021

Abstract: Quantum deep learning is a research field that uses quantum computing techniques to train deep neural networks. The research topics and directions of deep learning and quantum computing were separate for a long time; however, since the discovery that quantum circuits can act like artificial neural networks, quantum deep learning research has been widely adopted. This paper explains the background and basic principles of quantum deep learning and introduces its major achievements. It then discusses the challenges of quantum deep learning research from multiple perspectives. Lastly, it presents various future research directions and application fields of quantum deep learning.