Yuming Zhao

Beyond Translation: Cross-Cultural Meme Transcreation with Vision-Language Models

Jan 23, 2026

Abstract: Memes are a pervasive form of online communication, yet their cultural specificity poses significant challenges for cross-cultural adaptation. We study cross-cultural meme transcreation, a multimodal generation task that aims to preserve communicative intent and humor while adapting culture-specific references. We propose a hybrid transcreation framework based on vision-language models and introduce a large-scale bidirectional dataset of Chinese and US memes. Using both human judgments and automated evaluation, we analyze 6,315 meme pairs and assess transcreation quality across cultural directions. Our results show that current vision-language models can perform cross-cultural meme transcreation to a limited extent, but exhibit clear directional asymmetries: US-to-Chinese transcreation consistently achieves higher quality than Chinese-to-US transcreation. We further identify which aspects of humor and visual-textual design transfer across cultures and which remain challenging, and propose an evaluation framework for assessing cross-cultural multimodal generation. Our code and dataset are publicly available at https://github.com/AIM-SCU/MemeXGen.

FlexPara: Flexible Neural Surface Parameterization

Apr 27, 2025

Abstract: Surface parameterization is a fundamental geometry processing task, laying the foundations for the visual presentation of 3D assets and numerous downstream shape analysis scenarios. Conventional parameterization approaches demand high-quality mesh triangulation and are restricted to certain simple topologies unless additional surface cutting and decomposition are provided. In practice, the optimal configurations (e.g., type of parameterization domain, distribution of cutting seams, number of mapping charts) may vary drastically across surface structures and task characteristics, thus requiring more flexible and controllable processing pipelines. To this end, this paper introduces FlexPara, an unsupervised neural optimization framework that achieves both global and multi-chart surface parameterizations by establishing point-wise mappings between 3D surface points and adaptively deformed 2D UV coordinates. We design and combine a series of geometrically interpretable sub-networks, with specific functionalities of cutting, deforming, unwrapping, and wrapping, to construct a bi-directional cycle mapping framework for global parameterization without the need for manually specified cutting seams. Furthermore, we construct a multi-chart parameterization framework with adaptively learned chart assignment. Extensive experiments demonstrate the universality, superiority, and potential of our neural surface parameterization paradigm. The code will be publicly available at https://github.com/AidenZhao/FlexPara.
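To make the idea of a bi-directional cycle mapping concrete, here is a minimal sketch of a cycle-consistency error in both directions (3D -> UV -> 3D and UV -> 3D -> UV). It assumes hypothetical fixed functions `unwrap` and `wrap` standing in for FlexPara's learned sub-networks; the abstract does not specify the actual losses, and the cutting and deforming stages are not modeled here.

```python
from typing import Callable, Sequence, Tuple

Vec = Tuple[float, ...]


def mean_sq_cycle(xs: Sequence[Vec], forward: Callable[[Vec], Vec],
                  backward: Callable[[Vec], Vec]) -> float:
    """Mean squared error between each x and backward(forward(x))."""
    total = 0.0
    for x in xs:
        x_rec = backward(forward(x))
        total += sum((a - b) ** 2 for a, b in zip(x, x_rec))
    return total / len(xs)


def bidirectional_cycle_loss(points_3d, uv_samples, unwrap, wrap):
    """Return (3D->2D->3D error, 2D->3D->2D error)."""
    loss_3d = mean_sq_cycle(points_3d, unwrap, wrap)
    loss_2d = mean_sq_cycle(uv_samples, wrap, unwrap)
    return loss_3d, loss_2d


# Toy surface z = x * y: a height field, so one global chart exists without cuts.
def unwrap(p):  # hypothetical 3D -> UV map: drop the z coordinate
    return (p[0], p[1])


def wrap_exact(uv):  # hypothetical UV -> 3D map: lift back onto z = u * v
    return (uv[0], uv[1], uv[0] * uv[1])


def wrap_flat(uv):  # a deliberately poor wrap that flattens the surface
    return (uv[0], uv[1], 0.0)


points = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0), (2.0, 1.0, 2.0)]
uvs = [(0.5, 0.5), (1.0, 2.0)]
print(bidirectional_cycle_loss(points, uvs, unwrap, wrap_exact))  # (0.0, 0.0)
print(bidirectional_cycle_loss(points, uvs, unwrap, wrap_flat))
```

The flattening `wrap_flat` still round-trips UV samples perfectly but fails the 3D cycle, which illustrates why checking both directions is more informative than checking one.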

Building Robust Spoken Language Understanding by Cross Attention between Phoneme Sequence and ASR Hypothesis

Mar 22, 2022

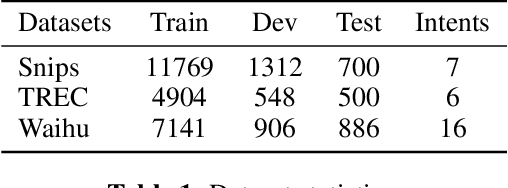

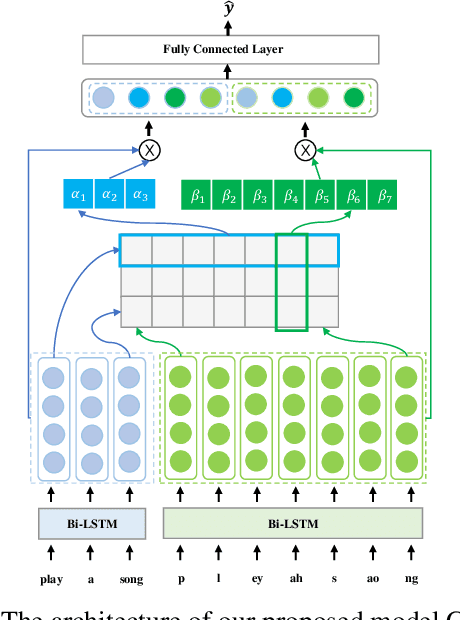

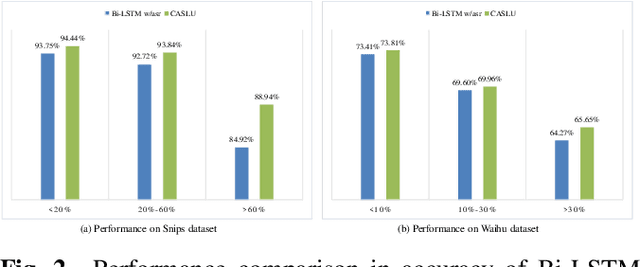

Abstract: Building Spoken Language Understanding (SLU) that is robust to Automatic Speech Recognition (ASR) errors is essential for voice-enabled virtual assistants. Since most ASR errors are caused by phonetic confusion between similar-sounding expressions, leveraging the phoneme sequence of the speech can intuitively complement the ASR hypothesis and improve the robustness of SLU. This paper proposes a novel model with Cross Attention for SLU (denoted CASLU). The cross-attention block captures fine-grained interactions between phoneme and word embeddings, so that the joint representations encode both the phonetic and semantic features of the input and help overcome ASR errors in downstream natural language understanding (NLU) tasks. Extensive experiments on three datasets demonstrate the effectiveness and competitiveness of our approach. We also validate the universality of CASLU and show that it is complementary to other robust SLU techniques.
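A minimal sketch of word-to-phoneme cross attention follows: each word embedding acts as a query, and the phoneme embeddings serve as both keys and values (scaled dot-product attention). This is an assumption-laden illustration, not CASLU's actual block: it omits learned query/key/value projections and multiple heads, and the concatenation in the hypothetical `fuse` helper is just one plausible way to form a joint representation.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(xs: List[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def cross_attention(words: Matrix, phonemes: Matrix) -> Matrix:
    """Each word vector (query) attends over all phoneme vectors (keys = values)."""
    d = len(words[0])
    out = []
    for q in words:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in phonemes]
        weights = softmax(scores)
        # Weighted sum of phoneme vectors, one output dimension at a time.
        attended = [sum(w * v[j] for w, v in zip(weights, phonemes)) for j in range(d)]
        out.append(attended)
    return out


def fuse(words: Matrix, phonemes: Matrix) -> Matrix:
    """Hypothetical fusion: concatenate each word vector with its phoneme context."""
    ctx = cross_attention(words, phonemes)
    return [w + c for w, c in zip(words, ctx)]


words = [[1.0, 0.0], [0.0, 1.0]]                  # toy word embeddings
phonemes = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # toy phoneme embeddings
for row in fuse(words, phonemes):
    print([round(x, 3) for x in row])
```

Because each word pulls in a softmax-weighted mix of phoneme vectors, a misrecognized word can still carry phonetic evidence close to what was actually said, which is the intuition the abstract describes.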

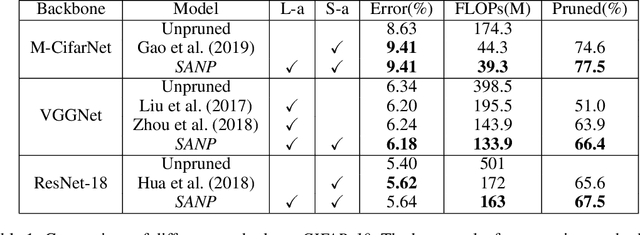

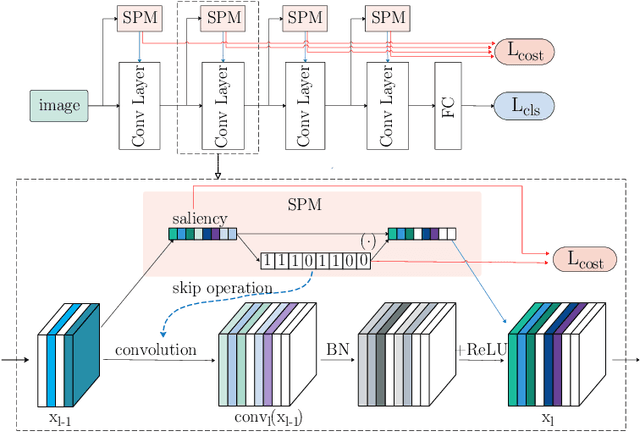

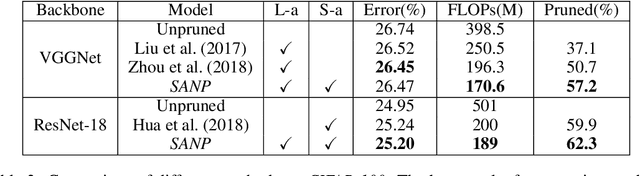

Self-Adaptive Network Pruning

Oct 20, 2019

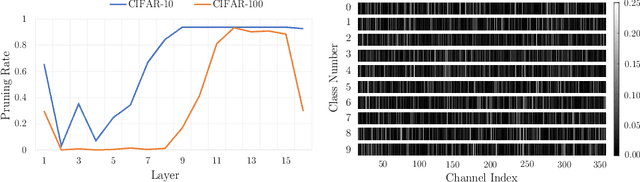

Abstract: Deep convolutional neural networks have proven successful on a wide range of tasks, yet their large computation cost still hinders them in many industrial scenarios. In this paper, we propose to reduce this cost for CNNs through a self-adaptive network pruning method (SANP). Our method introduces a general Saliency-and-Pruning Module (SPM) for each convolutional layer, which learns to predict a saliency score for each channel and prunes channels accordingly. Given a total computation budget, SANP adaptively determines the pruning strategy with respect to each layer and each sample, such that the average computation cost meets the budget. This design makes SANP more computationally efficient, as well as more robust across datasets and backbones. Extensive experiments on two datasets and three backbones show that SANP surpasses state-of-the-art methods in both classification accuracy and pruning rate.

* 10 pages, 5 figures, conference
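The budget idea (per-sample pruning whose average cost meets a target) can be sketched for a single layer over a batch: rank every (sample, channel) saliency score jointly and keep the top fraction. This is a hypothetical simplification, not SANP's learned SPM: it assumes saliency scores are already given, treats every channel as equal cost, and does not model how the budget is split across layers.

```python
from typing import List


def budget_masks(saliency: List[List[float]], budget: float) -> List[List[int]]:
    """Return a 0/1 channel mask per sample.

    Keeps the globally highest-scoring (sample, channel) pairs so that the
    average fraction of kept channels per sample equals `budget`, while
    individual samples may keep more or fewer channels than average.
    """
    n, c = len(saliency), len(saliency[0])
    k = round(budget * n * c)  # total channels allowed across the batch
    flat = [(s, i, j) for i, row in enumerate(saliency) for j, s in enumerate(row)]
    flat.sort(key=lambda t: t[0], reverse=True)
    masks = [[0] * c for _ in range(n)]
    for _, i, j in flat[:k]:
        masks[i][j] = 1
    return masks


# Toy scores: sample 0 spreads saliency over many channels, sample 1 over one.
scores = [[0.9, 0.8, 0.7, 0.1],
          [0.6, 0.05, 0.04, 0.03]]
masks = budget_masks(scores, budget=0.5)
print(masks)  # [[1, 1, 1, 0], [1, 0, 0, 0]]
```

Both samples together keep 4 of 8 channels (a 50% budget), but the allocation is uneven: sample 0 keeps three channels and sample 1 keeps one, which is the kind of per-sample adaptivity the abstract describes.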

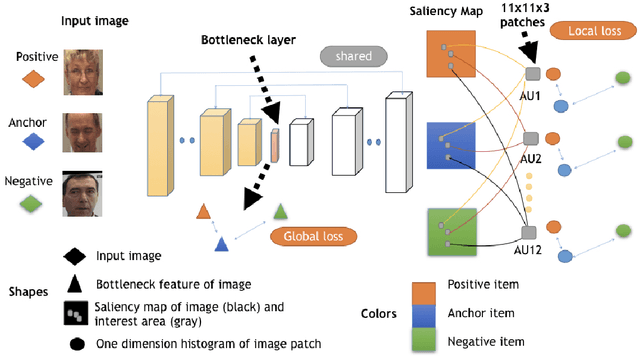

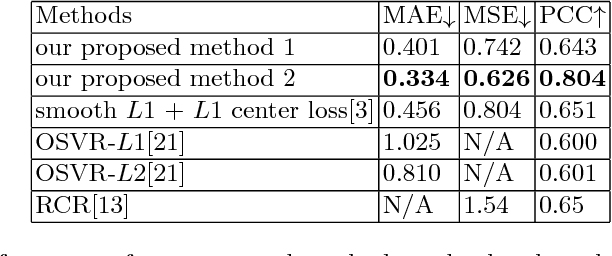

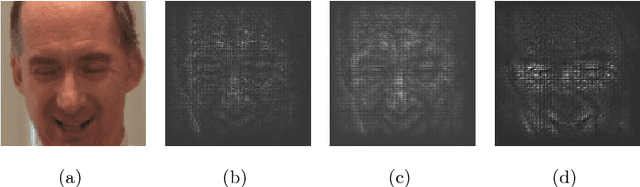

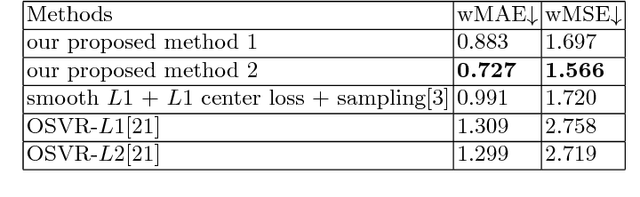

Saliency Supervision: An Intuitive and Effective Approach for Pain Intensity Regression

Nov 16, 2018

Abstract: Estimating pain intensity from face images is an important problem for autonomous nursing systems. However, due to limited data sources and the subjectivity of pain intensity values, it is hard to adopt modern deep neural networks for this problem without domain-specific auxiliary design. Inspired by priors of human vision, we propose a novel approach called saliency supervision, in which we directly regularize deep networks to focus on facial areas that are discriminative for pain regression. Through alternating training between saliency supervision and the global loss, our method learns sparse and robust features, which proves helpful for pain intensity regression. We verified saliency supervision with a face-verification network backbone on a widely used dataset and achieved state-of-the-art performance without bells and whistles. Our saliency supervision is intuitive in spirit, yet effective in performance. We believe such saliency supervision is essential for dealing with ill-posed datasets and has potential in a wide range of vision tasks.
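One way to picture a saliency-supervision term and the alternating schedule is sketched below. Everything here is an assumption for illustration: the saliency map is taken as a precomputed non-negative grid (for example, input-gradient magnitudes), `face_mask` is a hypothetical binary map marking the discriminative region, and the penalty (fraction of saliency mass outside that region) is one plausible regularizer rather than the paper's exact loss.

```python
from typing import List

Grid = List[List[float]]


def saliency_supervision_loss(saliency: Grid, face_mask: Grid) -> float:
    """Fraction of non-negative saliency mass falling outside the target region."""
    total = sum(sum(row) for row in saliency)
    if total == 0:
        return 0.0
    outside = sum(s * (1 - m)
                  for s_row, m_row in zip(saliency, face_mask)
                  for s, m in zip(s_row, m_row))
    return outside / total


def loss_for_step(step: int) -> str:
    """Alternate objectives: even steps use saliency supervision, odd steps the global loss."""
    return "saliency" if step % 2 == 0 else "global"


saliency = [[0.0, 0.5],
            [0.25, 0.25]]
face_mask = [[0, 1],
             [0, 1]]  # right column marks the (hypothetical) discriminative region
print(saliency_supervision_loss(saliency, face_mask))  # 0.25
print([loss_for_step(t) for t in range(4)])  # ['saliency', 'global', 'saliency', 'global']
```

A loss of 0 means all saliency lies inside the region; alternating it with the task loss, rather than summing the two, mirrors the alternating training mentioned in the abstract.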
