Yuanqing Huang

FuXiWeather2: Learning accurate atmospheric state estimation for operational global weather forecasting

Mar 16, 2026

Abstract: Numerical weather prediction has long been constrained by the computational bottlenecks inherent in data assimilation and numerical modeling. While machine learning has accelerated forecasting, existing models largely serve as "emulators of reanalysis products," thereby retaining their systematic biases and operational latencies. Here, we present FuXiWeather2, a unified end-to-end neural framework for assimilation and forecasting. We align training objectives directly with a combination of real-world observations and reanalysis data, enabling the framework to effectively rectify inherent errors within reanalysis products. To address the distribution shift between NWP-derived background inputs during training and self-generated backgrounds during deployment, we introduce a recursive unrolling training method to enhance the precision and stability of analysis generation. Furthermore, our model is trained on a hybrid dataset of raw and simulated observations to mitigate the impact of observational distribution inconsistency. FuXiWeather2 generates high-resolution ($0.25^{\circ}$) global analysis fields and 10-day forecasts within minutes. The analysis fields surpass the NCEP-GFS across most variables and demonstrate superior accuracy over both ERA5 and the ECMWF-HRES system in lower-tropospheric and surface variables. These high-quality analysis fields drive deterministic forecasts that exceed the skill of the HRES system in 91% of evaluated metrics. Additionally, its outstanding performance in typhoon track prediction underscores its practical value for rapid response to extreme weather events. The FuXiWeather2 analysis dataset is available at https://doi.org/10.5281/zenodo.18872728.

FuXi Weather: An end-to-end machine learning weather data assimilation and forecasting system

Aug 10, 2024

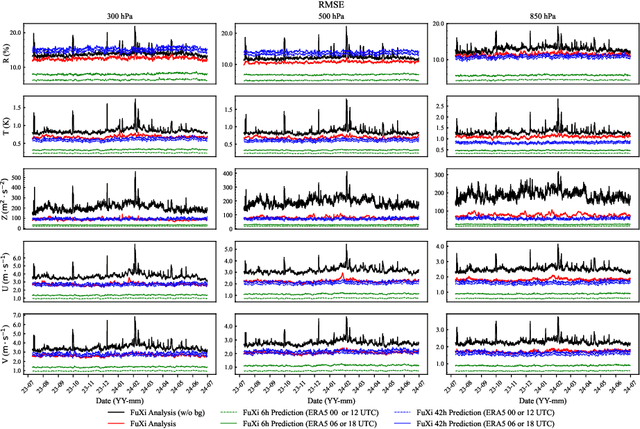

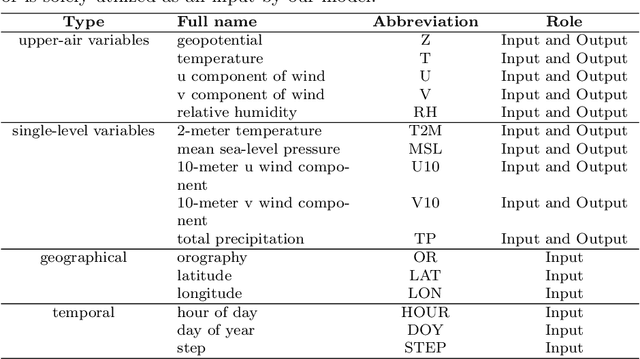

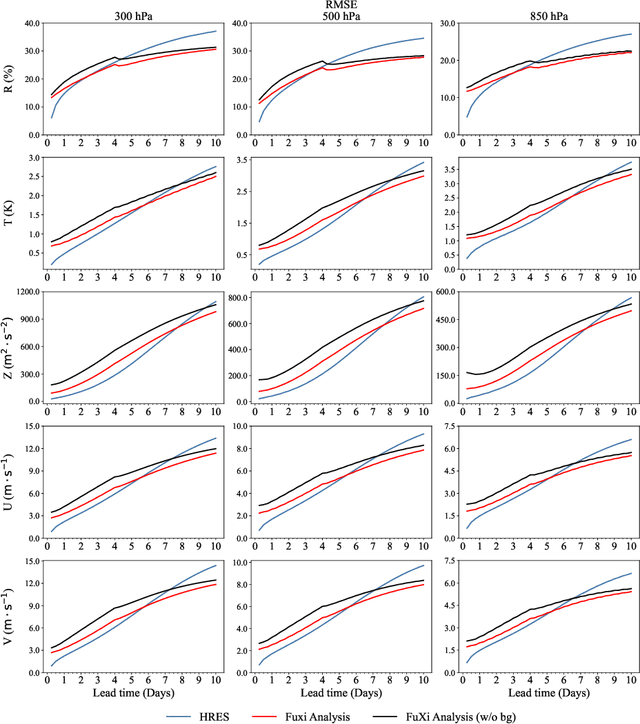

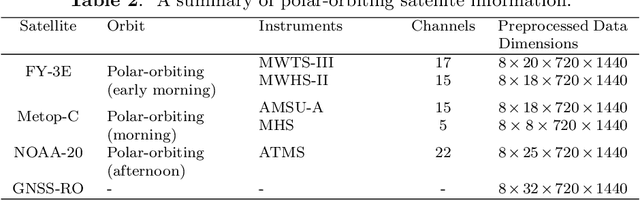

Abstract: Operational numerical weather prediction (NWP) systems consist of three fundamental components: the global observing system for data collection, data assimilation (DA) for generating initial conditions, and the forecasting model to predict future weather conditions. While NWP systems have undergone a quiet revolution, with forecast skill progressively improving over the past few decades, their advancement has slowed due to challenges such as high computational costs and the complexities associated with assimilating an increasing volume of observational data and managing finer spatial grids. Advances in machine learning offer an alternative path towards more efficient and accurate weather forecasts. The rise of machine learning-based weather forecasting models has also spurred the development of machine learning-based DA models and even purely machine learning-based weather forecasting systems. This paper introduces FuXi Weather, an end-to-end machine learning-based weather forecasting system. FuXi Weather employs specialized data preprocessing and multi-modal data fusion techniques to integrate information from diverse sources under all-sky conditions, including microwave sounders from 3 polar-orbiting satellites and radio occultation data from the Global Navigation Satellite System. Operating on a 6-hourly DA and forecasting cycle, FuXi Weather independently generates robust and accurate 10-day global weather forecasts at a spatial resolution of 0.25°. It surpasses the European Centre for Medium-Range Weather Forecasts high-resolution forecasts in terms of predictability, extending the skillful forecast lead times for several key weather variables, such as the geopotential height at 500 hPa, from 9.25 days to 9.5 days. The system's high computational efficiency and robust performance, even with limited observations, demonstrate its potential as a promising alternative to traditional NWP systems.

Adaptive Hybrid Masking Strategy for Privacy-Preserving Face Recognition Against Model Inversion Attack

Mar 14, 2024

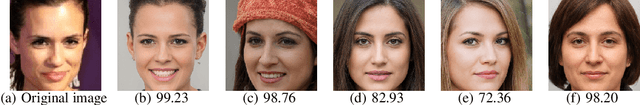

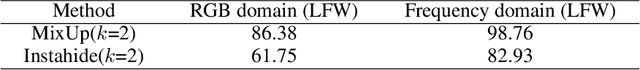

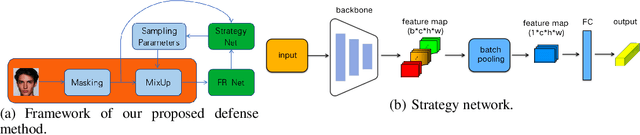

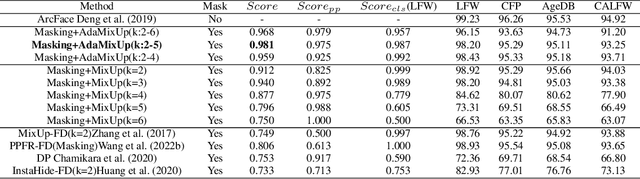

Abstract: The use of sensitive personal data in training face recognition (FR) models poses significant privacy concerns, as adversaries can employ model inversion attacks (MIA) to infer the original training data. Existing defense methods, such as data augmentation and differential privacy, have been employed to mitigate this issue. However, these methods often fail to strike an optimal balance between privacy and accuracy. To address this limitation, this paper introduces an adaptive hybrid masking algorithm against MIA. Specifically, face images are masked in the frequency domain using an adaptive MixUp strategy. Unlike the traditional MixUp algorithm, which is predominantly used for data augmentation, our modified approach incorporates frequency domain mixing. Previous studies have shown that increasing the number of images mixed in MixUp can enhance privacy preservation, but at the expense of reduced face recognition accuracy. To overcome this trade-off, we develop an enhanced adaptive MixUp strategy based on reinforcement learning, which enables us to mix a larger number of images while maintaining satisfactory recognition accuracy. To optimize privacy protection, we propose maximizing the reward function (i.e., the loss function of the FR system) during the training of the strategy network, while the loss function of the FR network is minimized when training the FR network itself. The strategy network and the face recognition network can thus be viewed as antagonistic entities in the training process, ultimately reaching a more balanced trade-off. Experimental results demonstrate that our proposed hybrid masking scheme outperforms existing defense algorithms in terms of privacy preservation and recognition accuracy against MIA.
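
To make the idea of frequency-domain mixing concrete, the following is a minimal sketch and not the paper's algorithm. It assumes 1-D signals in place of face images, a naive DFT in place of whatever transform the authors use, and hand-picked per-frequency weights in place of the weights chosen by the reinforcement-learning strategy network. The names `dft`, `idft`, and `frequency_mixup` are hypothetical.

```python
import cmath

def dft(x):
    """Naive discrete Fourier transform of a 1-D sequence."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(spectrum):
    """Naive inverse discrete Fourier transform."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]

def frequency_mixup(signals, weights):
    """Mix equal-length signals bin by bin in the frequency domain.

    weights[k][i] is the (assumed, hand-picked) weight of signal i at
    frequency bin k. Each row should sum to 1, and rows k and n-k should
    match so that the mixed signal stays real-valued.
    """
    spectra = [dft(s) for s in signals]
    n = len(signals[0])
    mixed = [sum(w * spec[k] for w, spec in zip(weights[k], spectra))
             for k in range(n)]
    return [v.real for v in idft(mixed)]

# Toy usage: take the DC component from `a` and every other bin from `b`.
a = [1.0, 2.0, 3.0, 4.0]
b = [0.0, 0.0, 4.0, 0.0]
weights = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0]]
mixed = frequency_mixup([a, b], weights)
```

With a single scalar weight per image, mixing in the frequency domain would be identical to mixing in the pixel domain, because the Fourier transform is linear. Per-frequency weights, as in this sketch, are what make the two differ.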

Inference Attacks Against Face Recognition Model without Classification Layers

Jan 24, 2024

Abstract: Face recognition (FR) has been applied to nearly every aspect of daily life, but it is always accompanied by the underlying risk of leaking private information. At present, almost all attack models against FR rely heavily on the presence of a classification layer. However, in practice, the FR model can obtain complex features of the input via the model backbone and then compare them with the target for inference, which does not explicitly involve the outputs of the classification layer adopting logit or other losses. In this work, we propose a novel two-stage inference attack for practical FR models without a classification layer. The first stage is the membership inference attack. Specifically, we analyze the distances between the intermediate features and batch normalization (BN) parameters. The results indicate that this distance is a critical metric for membership inference. We thus design a simple but effective attack model that can determine whether a face image is from the training dataset or not. The second stage is the model inversion attack, where sensitive private data is reconstructed using a pre-trained generative adversarial network (GAN) guided by the attack model from the first stage. To the best of our knowledge, the proposed attack model is the first in the literature developed for FR models without a classification layer. We illustrate the application of the proposed attack model in the establishment of privacy-preserving FR techniques.
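
As a rough illustration of the first stage, the sketch below scores a sample by how far the per-channel statistics of its intermediate features lie from a BN layer's stored running mean and variance. Everything here is an assumption made for illustration: the Euclidean distance, the use of both mean and variance, the fixed threshold, and the names `channel_stats`, `bn_distance`, and `is_member`. The abstract describes a designed attack model, not a simple threshold rule.

```python
import math

def channel_stats(feature_map):
    """Per-channel mean and (population) variance.

    feature_map is a list of channels, each a flat list of activations.
    """
    means = [sum(c) / len(c) for c in feature_map]
    variances = [sum((v - m) ** 2 for v in c) / len(c)
                 for c, m in zip(feature_map, means)]
    return means, variances

def bn_distance(feature_map, running_mean, running_var):
    """Euclidean distance between sample statistics and BN running statistics."""
    means, variances = channel_stats(feature_map)
    d_mean = sum((m - rm) ** 2 for m, rm in zip(means, running_mean))
    d_var = sum((v - rv) ** 2 for v, rv in zip(variances, running_var))
    return math.sqrt(d_mean + d_var)

def is_member(feature_map, running_mean, running_var, threshold):
    """Assumed decision rule: a small distance suggests a training sample."""
    return bn_distance(feature_map, running_mean, running_var) < threshold

# Toy usage with two channels and made-up BN statistics.
running_mean, running_var = [2.0, 2.0], [1.0, 0.0]
close_sample = [[1.0, 3.0], [2.0, 2.0]]   # statistics match the BN layer
far_sample = [[10.0, 10.0], [0.0, 0.0]]   # statistics far from the BN layer
print(is_member(close_sample, running_mean, running_var, threshold=1.0))
print(is_member(far_sample, running_mean, running_var, threshold=1.0))
```

In practice the threshold (or a learned classifier over such distances) would have to be calibrated on samples with known membership.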
