Youngji Kim

A Single Correspondence Is Enough: Robust Global Registration to Avoid Degeneracy in Urban Environments

Mar 13, 2022

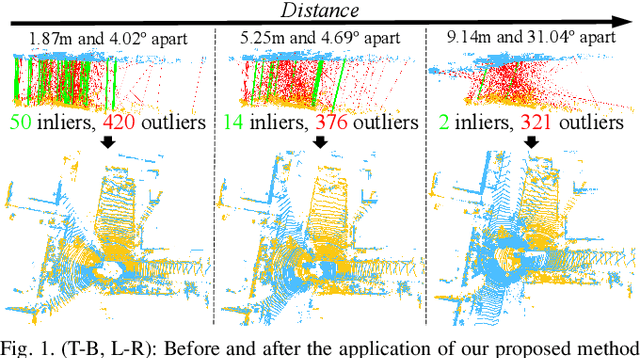

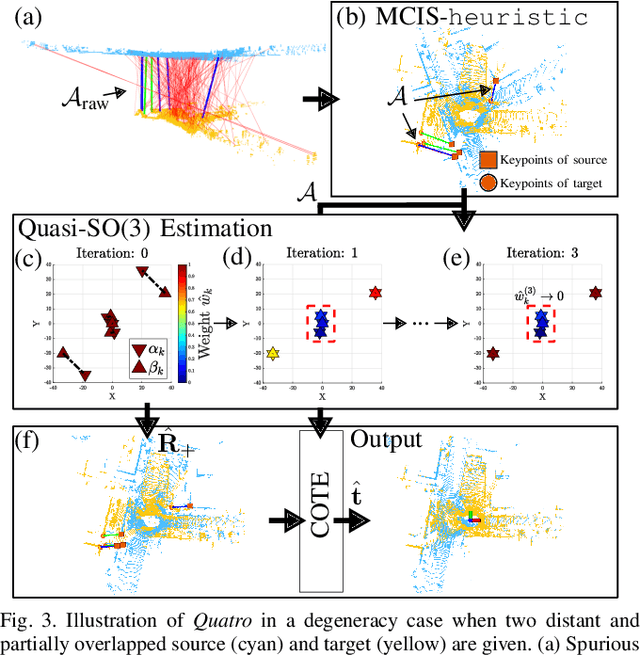

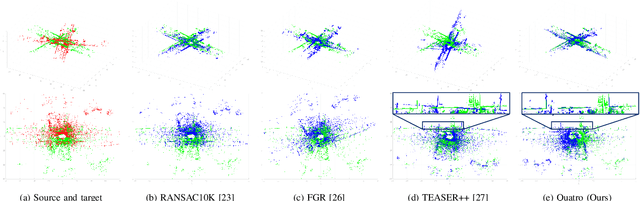

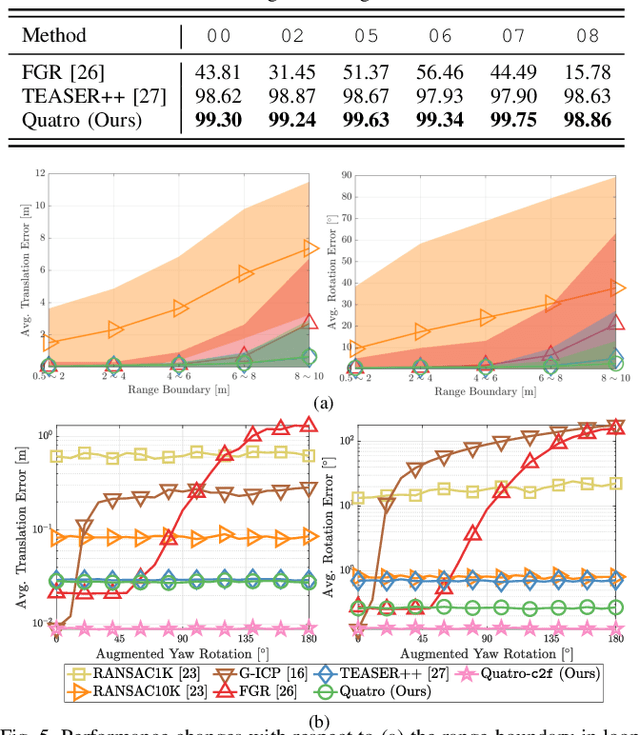

Abstract: Global registration using 3D point clouds is a crucial technology for mobile platforms to achieve localization or manage loop-closing situations. In recent years, numerous researchers have proposed global registration methods to address a large number of outlier correspondences. Unfortunately, the degeneracy problem, in which the number of estimated inliers falls below three, remains potentially unavoidable. To tackle the problem, a degeneracy-robust decoupling-based global registration method is proposed, called Quatro. In particular, our method employs quasi-SO(3) estimation by leveraging the Atlanta world assumption in urban environments to avoid degeneracy in rotation estimation. Thus, the minimum degree of freedom (DoF) of our method is reduced from three to one. As verified in indoor and outdoor 3D LiDAR datasets, our proposed method yields robust global registration performance compared with other global registration methods, even for distant point cloud pairs. Furthermore, the experimental results confirm the applicability of our method as a coarse alignment. Our code is available at https://github.com/url-kaist/quatro.
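The reduction from three DoF to one can be illustrated with a minimal sketch. This is not the Quatro implementation; it only assumes that both point clouds share a gravity-aligned z-axis, so the rotation reduces to a yaw angle, and that one pair of vectors `a` and `b` related by a pure rotation is available (for example, translation-free difference vectors, which is an assumption of this sketch). The function names `yaw_from_single_pair` and `rotate_about_z` are hypothetical.

```python
import math


def yaw_from_single_pair(a, b):
    """Estimate the yaw (rotation about z) that maps vector a onto vector b.

    Only the x and y components are used, so a single vector pair
    determines the one remaining rotational degree of freedom.
    Assumption of this sketch: a has a non-zero projection on the xy-plane.
    """
    ax, ay = a[0], a[1]
    bx, by = b[0], b[1]
    # Signed planar angle from a to b: atan2(cross product, dot product).
    return math.atan2(ax * by - ay * bx, ax * bx + ay * by)


def rotate_about_z(v, yaw):
    """Rotate a 3D vector v about the z-axis by yaw radians."""
    c, s = math.cos(yaw), math.sin(yaw)
    return (c * v[0] - s * v[1], s * v[0] + c * v[1], v[2])


# Synthetic check: rotate a known vector, then recover the angle.
true_yaw = math.radians(30.0)
a = (1.0, 2.0, 0.5)
b = rotate_about_z(a, true_yaw)
estimated_yaw = yaw_from_single_pair(a, b)
```

With a full SO(3) rotation, one vector pair leaves the rotation about that vector undetermined; fixing roll and pitch removes that ambiguity, which is why a single pair suffices in this simplified setting. The sketch ignores outliers and noise entirely.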

Multi-Task Learning for Scalable and Dense Multi-Layer Bayesian Map Inference

Jun 28, 2021

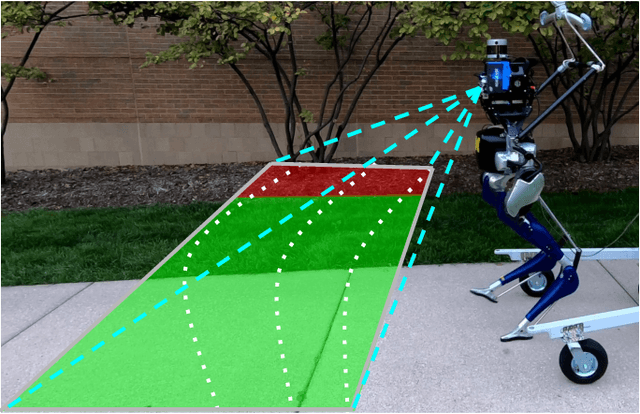

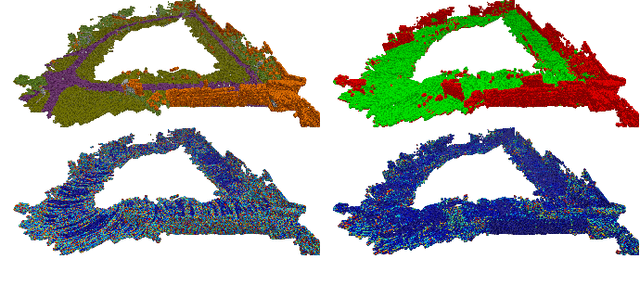

Abstract: This paper presents a novel and flexible multi-task multi-layer Bayesian mapping framework with readily extendable attribute layers. The proposed framework goes beyond modern metric-semantic maps to provide even richer environmental information for robots in a single mapping formalism while exploiting existing inter-layer correlations. It removes the need for a robot to access and process information from many separate maps when performing a complex task and benefits from the correlation between map layers, advancing the way robots interact with their environments. To this end, we design a multi-task deep neural network with attention mechanisms as our front-end to provide multiple observations for multiple map layers simultaneously. Our back-end runs a scalable closed-form Bayesian inference with only logarithmic time complexity. We apply the framework to build a dense robotic map including metric-semantic occupancy and traversability layers. Traversability ground truth labels are automatically generated from exteroceptive sensory data in a self-supervised manner. We present extensive experimental results on publicly available data sets and data collected by a 3D bipedal robot platform on the University of Michigan North Campus and show reliable mapping performance in different environments. Finally, we also discuss how the current framework can be extended to incorporate more information such as friction, signal strength, temperature, and physical quantity concentration using Gaussian map layers. The software for reproducing the presented results or running on customized data is made publicly available.

Sequential Learning of Visual Tracking and Mapping Using Unsupervised Deep Neural Networks

Feb 26, 2019

Abstract: We propose an end-to-end deep learning-based simultaneous localization and mapping (SLAM) system following conventional visual odometry (VO) pipelines. The proposed method completes the SLAM framework by including tracking, mapping, and sequential optimization networks while training them in an unsupervised manner. Together with the camera pose and depth map, we estimate the observational uncertainty to make our system robust to noise such as dynamic objects. We evaluate our method using public indoor and outdoor datasets. The experiments demonstrate that our method works well in tracking and mapping tasks and performs comparably with other learning-based VO approaches. Notably, the proposed uncertainty modeling and sequential training yield improved generality in a variety of environments.
