Yonas Tadesse

A wearable sensor vest for social humanoid robots with GPGPU, IoT, and modular software architecture

Jan 06, 2022

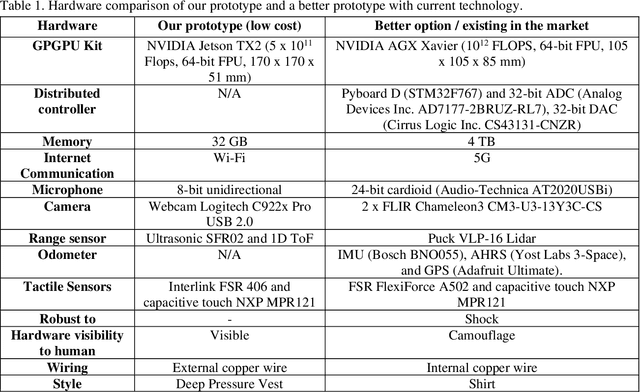

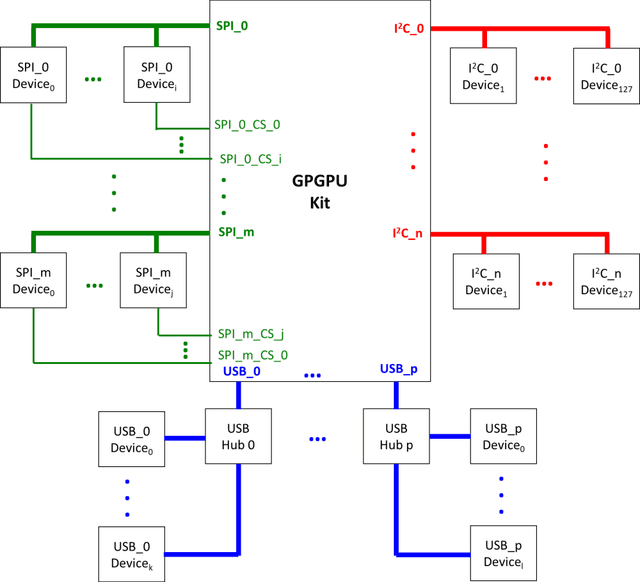

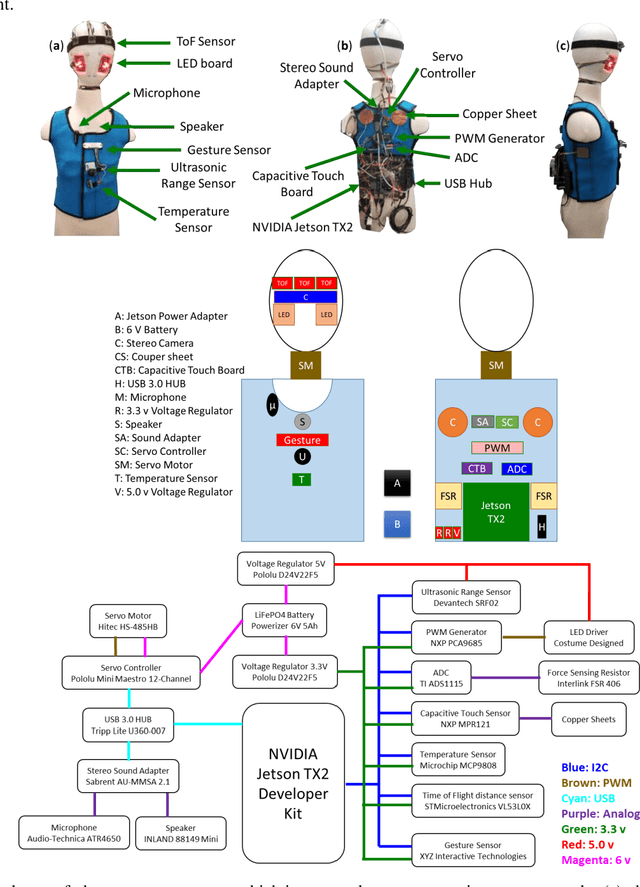

Abstract: Currently, most social robots interact with their surroundings and humans through sensors that are integral parts of the robots, which limits the usability of the sensors, human-robot interaction, and interchangeability. A wearable sensor garment that fits many robots is needed in many applications. This article presents an affordable wearable sensor vest and an open-source software architecture with the Internet of Things (IoT) for social humanoid robots. The vest consists of touch, temperature, gesture, distance, and vision sensors, and a wireless communication module. The IoT feature allows the robot to interact with humans locally and over the Internet. The designed architecture works for any social robot that has a general-purpose graphics processing unit (GPGPU), I2C/SPI buses, an Internet connection, and the Robot Operating System (ROS). The modular design of this architecture enables developers to easily add, remove, or update complex behaviors. The proposed software architecture provides IoT technology, GPGPU nodes, I2C and SPI bus managers, audio-visual interaction nodes (speech to text, text to speech, and image understanding), and isolation between behavior nodes and other nodes. The proposed IoT solution consists of related nodes in the robot, a RESTful web service, and user interfaces. We used HTTP as the means of two-way communication with the social robot over the Internet. Developers can easily edit or add nodes in the C, C++, and Python programming languages. Our architecture can be used for designing more sophisticated behaviors for social humanoid robots.

* This is the preprint version. The final version is published in Robotics and Autonomous Systems, Volume 139, 2021, Page 103536, ISSN 0921-8890, https://doi.org/10.1016/j.robot.2020.103536
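The two-way HTTP exchange described in the abstract above can be illustrated with a toy relay service. This is only a minimal sketch under stated assumptions, not the architecture from the paper: the endpoint paths `/commands` and `/sensors`, the JSON payloads, and the names `RelayHandler`, `start_service`, and `http_json` are all hypothetical, and the real system runs inside ROS nodes rather than a single script. The robot uploads readings with POST and polls for queued commands with GET, so both directions work even when the robot sits behind a NAT.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.request import ProxyHandler, Request, build_opener

# Hypothetical in-memory state held by the toy web service.
pending_commands = []  # commands queued by a user interface for the robot
sensor_log = []        # sensor readings uploaded by the robot

# Opener that ignores proxy environment variables (we only talk to localhost).
_opener = build_opener(ProxyHandler({}))


class RelayHandler(BaseHTTPRequestHandler):
    """Toy service that both the robot and the user interface talk to."""

    def _send_json(self, payload, status=200):
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def do_GET(self):
        # The robot polls for queued commands (service -> robot direction).
        if self.path == "/commands":
            commands = list(pending_commands)
            pending_commands.clear()
            self._send_json(commands)
        else:
            self._send_json({"error": "not found"}, status=404)

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length) or b"{}")
        if self.path == "/commands":    # a user interface queues a command
            pending_commands.append(payload)
            self._send_json({"queued": len(pending_commands)}, status=201)
        elif self.path == "/sensors":   # the robot uploads a reading
            sensor_log.append(payload)
            self._send_json({"stored": len(sensor_log)}, status=201)
        else:
            self._send_json({"error": "not found"}, status=404)

    def log_message(self, fmt, *args):
        pass  # keep the example quiet


def start_service(port=0):
    """Start the toy service on a background thread; port 0 picks a free port."""
    server = HTTPServer(("127.0.0.1", port), RelayHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def http_json(url, payload=None):
    """Send a GET when payload is None, otherwise POST the payload as JSON."""
    data = None if payload is None else json.dumps(payload).encode("utf-8")
    headers = {"Content-Type": "application/json"} if data is not None else {}
    with _opener.open(Request(url, data=data, headers=headers), timeout=5) as resp:
        return json.loads(resp.read())
```

A user interface would call `http_json(base + "/commands", {"action": "wave"})` to queue a command, and the robot side would call `http_json(base + "/commands")` in its polling loop to fetch and clear the queue, where `base` is the service URL.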

End-to-End Learning of Speech 2D Feature-Trajectory for Prosthetic Hands

Sep 22, 2020

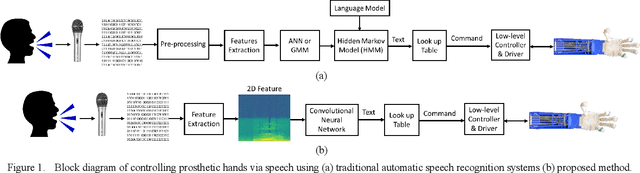

Abstract: Speech is one of the most common forms of communication in humans. Speech commands are essential parts of multimodal control of prosthetic hands. In the past decades, researchers used automatic speech recognition systems for controlling prosthetic hands with speech commands. Automatic speech recognition systems learn how to map human speech to text. Researchers then used natural language processing or a look-up table to map the estimated text to a trajectory. However, the performance of conventional speech-controlled prosthetic hands is still unsatisfactory. Recent advancements in general-purpose graphics processing units (GPGPUs) enable intelligent devices to run deep neural networks in real-time. Thus, architectures of intelligent systems have rapidly transformed from the paradigm of composite subsystems optimization to the paradigm of end-to-end optimization. In this paper, we propose an end-to-end convolutional neural network (CNN) that maps speech 2D features directly to trajectories for prosthetic hands. The proposed convolutional neural network is lightweight, and thus it runs in real-time on an embedded GPGPU. The proposed method can use any type of speech 2D feature that has local correlations in each dimension, such as a spectrogram, MFCC, or PNCC. We omit the speech-to-text step in controlling the prosthetic hand in this paper. The network is written in Python with the Keras library, which has a TensorFlow backend. We optimized the CNN for the NVIDIA Jetson TX2 developer kit. Our experiment on this CNN demonstrates a root-mean-square error of 0.119 and a 20 ms running time to produce trajectory outputs corresponding to the voice input data. To achieve a lower error in real-time, we can optimize a similar CNN for a more powerful embedded GPGPU such as the NVIDIA AGX Xavier.
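To make "speech 2D feature" concrete, the following is a minimal NumPy sketch of a log-magnitude spectrogram, one of the feature types the abstract names. It is an illustration only: the function name `log_spectrogram`, the 400-sample frame, the 160-sample hop (25 ms and 10 ms at an assumed 16 kHz sampling rate), and the Hann window are assumptions, not the preprocessing used in the paper.

```python
import numpy as np


def log_spectrogram(signal, frame_len=400, hop_len=160, eps=1e-10):
    """Return a (num_frames, frame_len // 2 + 1) log-magnitude spectrogram."""
    signal = np.asarray(signal, dtype=np.float64)
    if signal.size < frame_len:
        raise ValueError("signal is shorter than one frame")
    num_frames = 1 + (signal.size - frame_len) // hop_len
    # Index matrix: each row selects one overlapping frame of the signal.
    idx = np.arange(frame_len)[None, :] + hop_len * np.arange(num_frames)[:, None]
    # Taper each frame with a Hann window to reduce spectral leakage.
    frames = signal[idx] * np.hanning(frame_len)
    # One-sided FFT per frame; eps avoids log(0) on silent bins.
    magnitude = np.abs(np.fft.rfft(frames, axis=1))
    return np.log(magnitude + eps)
```

For one second of audio at the assumed 16 kHz rate, the result is a 98 x 201 array (time frames by frequency bins). Neighbouring cells are correlated along both axes, which is the local-correlation property the abstract says a 2D feature needs before a CNN can consume it.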

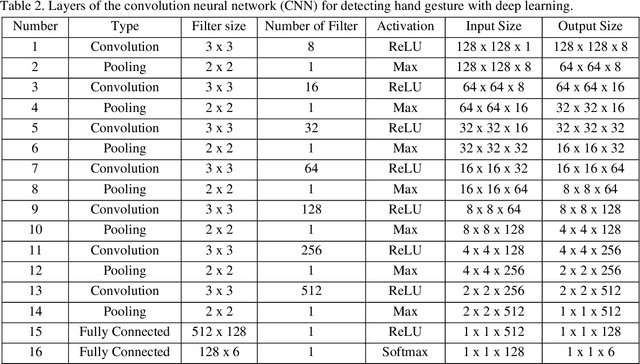

Convolutional Neural Networks for Speech Controlled Prosthetic Hands

Oct 03, 2019

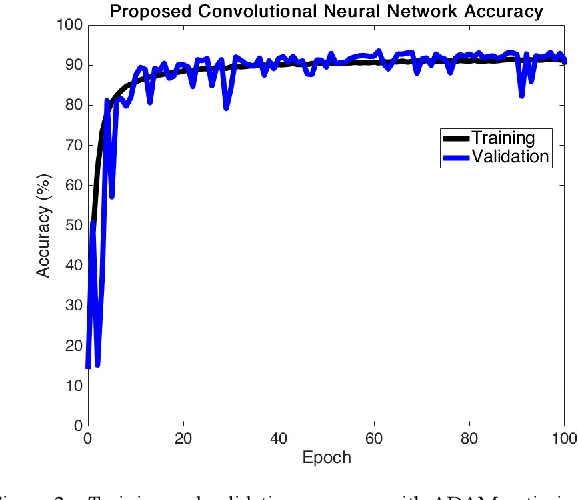

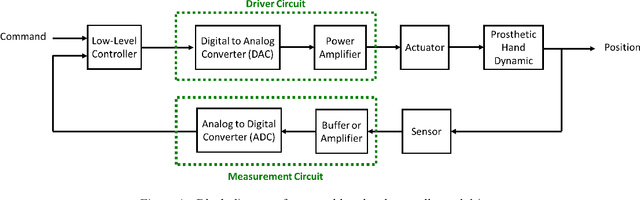

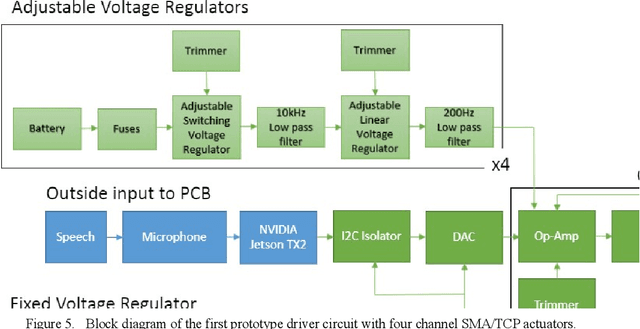

Abstract: Speech recognition is one of the key topics in artificial intelligence, as speech is one of the most common forms of communication in humans. Researchers have developed many speech-controlled prosthetic hands in the past decades, utilizing conventional speech recognition systems that combine a neural network with a hidden Markov model. Recent advancements in general-purpose graphics processing units (GPGPUs) enable intelligent devices to run deep neural networks in real-time. Thus, state-of-the-art speech recognition systems have rapidly shifted from the paradigm of composite subsystems optimization to the paradigm of end-to-end optimization. However, a low-power embedded GPGPU cannot run these speech recognition systems in real-time. In this paper, we show the development of deep convolutional neural networks (CNNs) for speech control of prosthetic hands that run in real-time on an NVIDIA Jetson TX2 developer kit. First, the device captures speech and converts it into 2D features (such as a spectrogram). The CNN receives the 2D features and classifies the hand gestures. Finally, the hand gesture classes are sent to the prosthetic hand motion control system. The whole system is written in Python with Keras, a deep learning library that has a TensorFlow backend. Our experiments on the CNN demonstrate 91% accuracy and a 2 ms running time for producing hand gestures (text output) from speech commands, which can be used to control the prosthetic hands in real-time.

* 2019 First International Conference on Transdisciplinary AI (TransAI), Laguna Hills, California, USA, 2019, pp. 35-42

Deep learning approach to control of prosthetic hands with electromyography signals

Sep 21, 2019

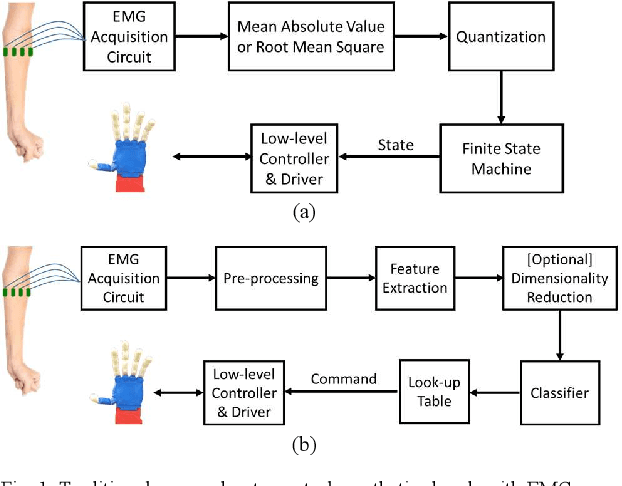

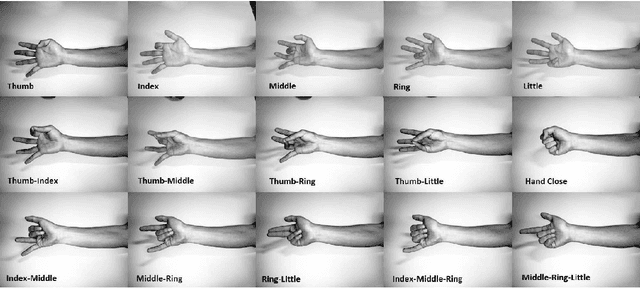

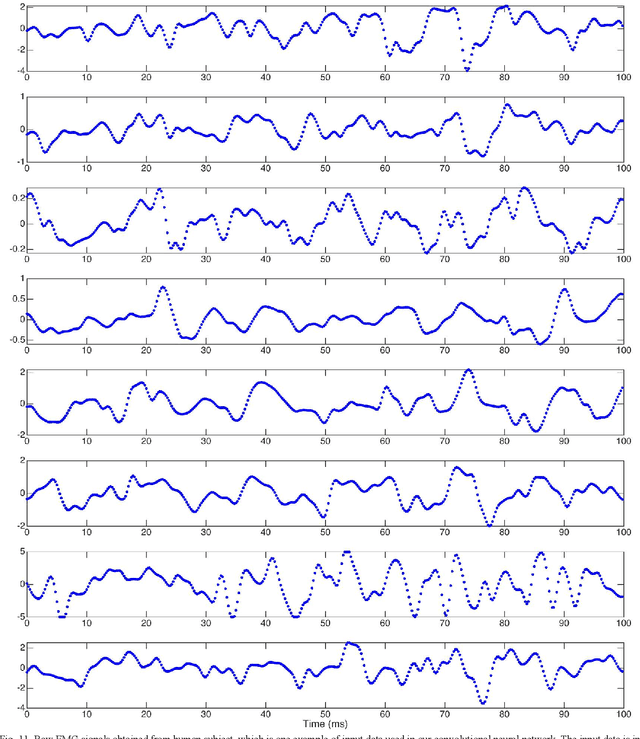

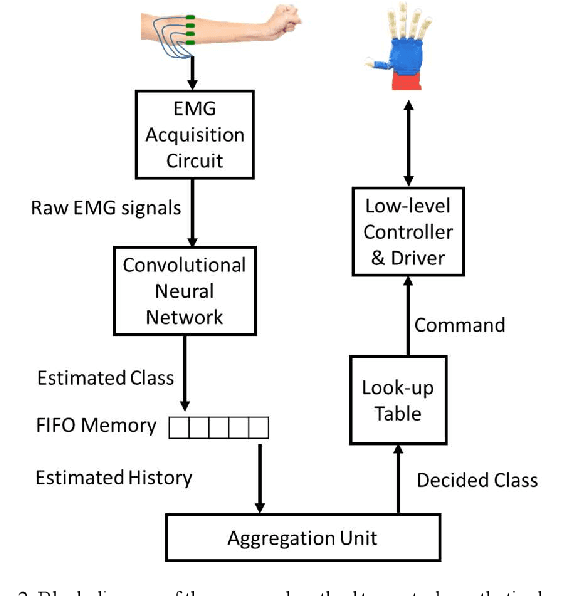

Abstract: Natural muscles provide mobility in response to nerve impulses. Electromyography (EMG) measures the electrical activity of muscles in response to a nerve's stimulation. In the past few decades, EMG signals have been used extensively to identify user intention, with the potential to control assistive devices such as smart wheelchairs, exoskeletons, and prosthetic devices. In the design of conventional assistive devices, developers optimize multiple subsystems independently. Feature extraction and feature description are essential subsystems of this approach. Therefore, researchers proposed various hand-crafted features to interpret EMG signals. However, the performance of conventional assistive devices is still unsatisfactory. In this paper, we propose a deep learning approach to control prosthetic hands with raw EMG signals. We use a novel deep convolutional neural network to eschew the feature-engineering step. Removing feature extraction and feature description is an important step toward the paradigm of end-to-end optimization. Fine-tuning and personalization are additional advantages of our approach. The proposed approach is implemented in Python with the TensorFlow deep learning library, and it runs in real-time on the general-purpose graphics processing units of the NVIDIA Jetson TX2 developer kit. Our results demonstrate the ability of our system to predict finger positions from raw EMG signals. We anticipate our EMG-based control system to be a starting point for designing more sophisticated prosthetic hands. For example, a pressure measurement unit can be added to transfer the perception of the environment to the user. Furthermore, our system can be modified for other prosthetic devices.

* Conference. Houston, Texas, USA. September, 2019

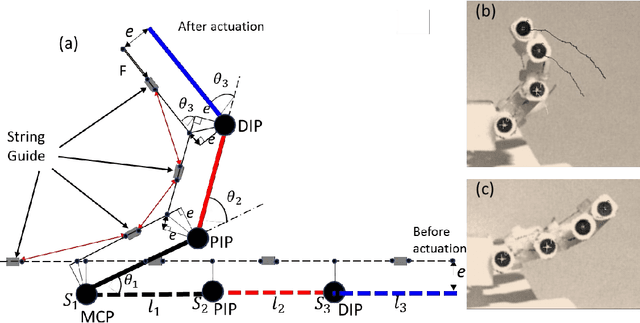

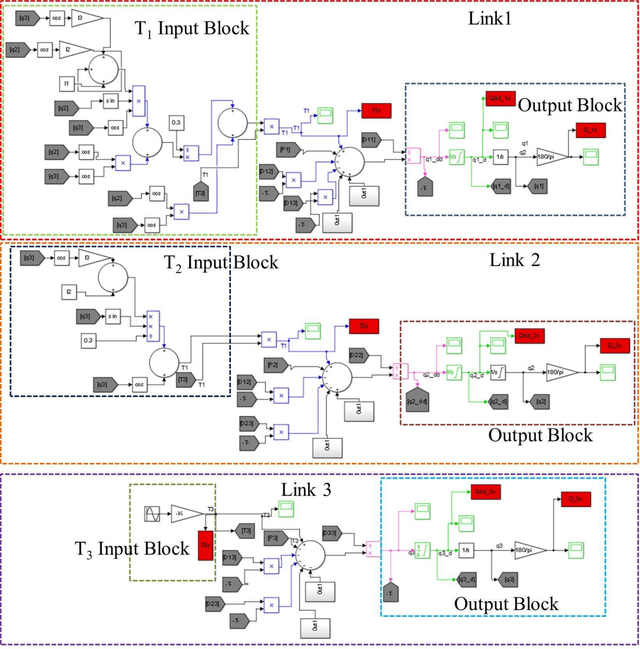

Modeling and Simulation of Robotic Finger Powered by Nylon Artificial Muscles - Equations with Simulink model

Jan 28, 2019

Abstract: This paper presents a detailed model of a three-link robotic finger that is actuated by nylon artificial muscles, together with a Simulink model that can be used for numerical study of the finger. The robotic hand prototype was demonstrated in a recent publication: Wu, L., Jung de Andrade, M., Saharan, L., Rome, R., Baughman, R., and Tadesse, Y., 2017, Compact and Low-cost Humanoid Hand Powered by Nylon Artificial Muscles, Bioinspiration & Biomimetics, 12 (2). The robotic hand is a 3D-printed, lightweight, and compact hand actuated by silver-coated nylon muscles, often called twisted and coiled polymer (TCP) muscles. TCP muscles are thermal actuators that contract when heated, and they are attracting attention for applications in robotics. The purpose of this paper is to present the modeling equations, which were derived using the Euler-Lagrange approach and are suitable for implementation in a Simulink model.
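For readers unfamiliar with the Euler-Lagrange approach mentioned in the abstract, its general textbook form for a three-link mechanism is sketched below. This is not the paper's specific derivation: the symbols $q_i$ (joint angles), $T$ and $V$ (kinetic and potential energy), and $\tau_i$ (generalized joint torques, here assumed to come from the muscle actuation) are generic notation introduced for illustration.

$$
\frac{d}{dt}\left(\frac{\partial L}{\partial \dot{q}_i}\right) - \frac{\partial L}{\partial q_i} = \tau_i, \qquad i = 1, 2, 3, \qquad L = T - V
$$

Carrying out the derivatives for a serial chain of rigid links gives the standard matrix form, which maps directly onto integrator blocks in a block-diagram simulation:

$$
M(q)\,\ddot{q} + C(q, \dot{q})\,\dot{q} + G(q) = \tau
$$

Here $M(q)$ is the inertia matrix, $C(q, \dot{q})\,\dot{q}$ collects the Coriolis and centrifugal terms, and $G(q)$ is the gravity vector. Solving for $\ddot{q}$ and integrating twice yields the joint trajectories.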

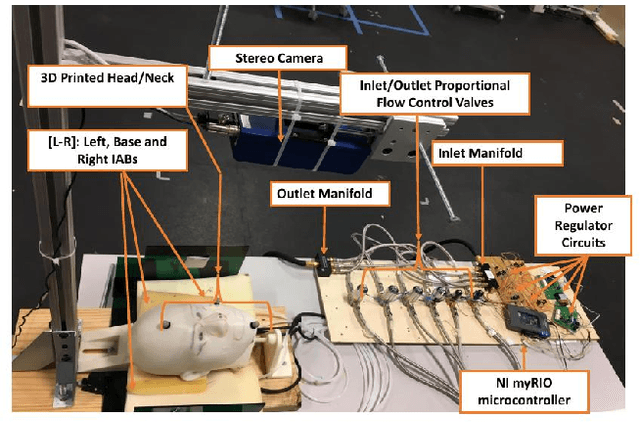

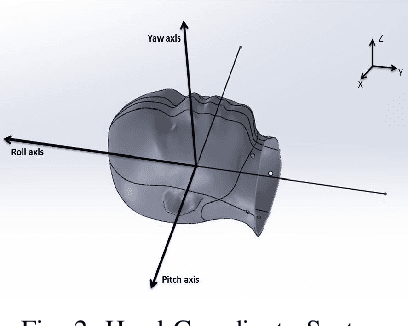

Soft-NeuroAdapt: A 3-DOF Neuro-Adaptive Patient Pose Correction System For Frameless and Maskless Cancer Radiotherapy

Sep 22, 2017

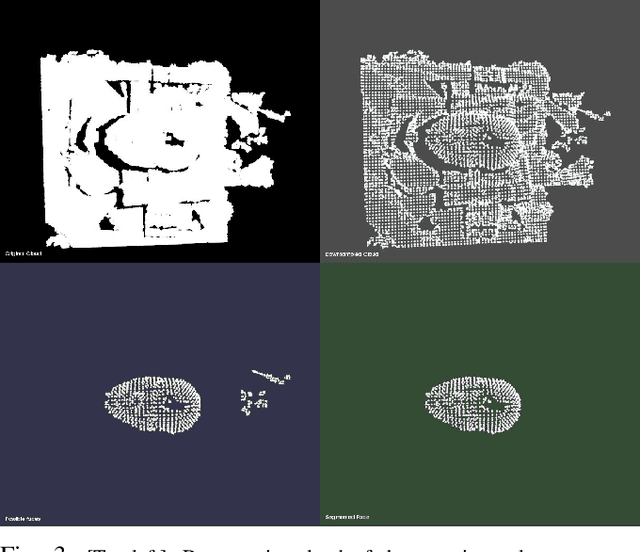

Abstract: Precise patient positioning is fundamental to successful removal of malignant tumors during treatment of head and neck cancers. Errors in patient positioning have been known to damage critical organs and cause complications. To better address issues of patient positioning and motion, we introduce a 3-DOF neuro-adaptive soft robot, called Soft-NeuroAdapt, to correct deviations along three axes. The robot consists of inflatable air bladders that adaptively control head deviations from the target while ensuring patient safety and comfort. The neuro-adaptive controller combines a state feedback component, a feedforward regulator, and a neural network that ensures correct adaptation. States are measured by a 3D vision system. We validate Soft-NeuroAdapt on a 3D-printed head-and-neck dummy and demonstrate that the controller provides adaptive actuation that compensates for intrafractional deviations in patient positioning.
