Xu Dong

FadeMem: Biologically-Inspired Forgetting for Efficient Agent Memory

Jan 26, 2026

Abstract: Large language models deployed as autonomous agents face critical memory limitations. They lack selective forgetting mechanisms, which leads either to catastrophic forgetting at context boundaries or to information overload within them. While human memory naturally balances retention and forgetting through adaptive decay processes, current AI systems employ binary retention strategies that either preserve everything or lose it entirely. We propose FadeMem, a biologically-inspired agent memory architecture that incorporates active forgetting mechanisms mirroring human cognitive efficiency. FadeMem implements differential decay rates across a dual-layer memory hierarchy, where retention is governed by adaptive exponential decay functions modulated by semantic relevance, access frequency, and temporal patterns. Through LLM-guided conflict resolution and intelligent memory fusion, our system consolidates related information while allowing irrelevant details to fade. Experiments on Multi-Session Chat, LoCoMo, and LTI-Bench demonstrate superior multi-hop reasoning and retrieval with a 45% reduction in storage, validating the effectiveness of biologically-inspired forgetting in agent memory systems.
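
The abstract does not give FadeMem's exact decay function. As a rough illustration only, the sketch below assumes a retention score of the form exp(-rate * age), where a per-layer base rate is divided by a factor that grows with semantic relevance and log-scaled access frequency, and memories whose retention falls below a threshold are pruned. `MemoryItem`, `BASE_DECAY`, `alpha`, `beta`, and the 0.1 threshold are hypothetical choices, not values from the paper.

```python
import math
from dataclasses import dataclass

# Hypothetical per-layer base decay rates: the long-term layer fades more slowly.
BASE_DECAY = {"short": 0.5, "long": 0.05}


@dataclass
class MemoryItem:
    text: str
    relevance: float   # semantic relevance to the current context, in [0, 1]
    access_count: int  # how many times the memory has been retrieved
    age: float         # time since last access, in arbitrary units
    layer: str         # "short" or "long" (hypothetical dual-layer labels)


def retention(item: MemoryItem, alpha: float = 1.0, beta: float = 0.5) -> float:
    """Exponential decay exp(-rate * age); relevance and access slow the rate."""
    slowdown = 1.0 + alpha * item.relevance + beta * math.log1p(item.access_count)
    rate = BASE_DECAY[item.layer] / slowdown
    return math.exp(-rate * item.age)


def prune(items: list[MemoryItem], threshold: float = 0.1) -> list[MemoryItem]:
    """Let memories fade out once their retention drops below the threshold."""
    return [m for m in items if retention(m) >= threshold]
```

With these hypothetical settings, an irrelevant, never-accessed short-term memory drops below the threshold after about 4.6 time units (since exp(-0.5 * 4.6) is roughly 0.1), while a relevant, frequently accessed long-term memory persists much longer. The `log1p` term is one way to let access frequency help without letting a handful of very popular memories become permanent.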

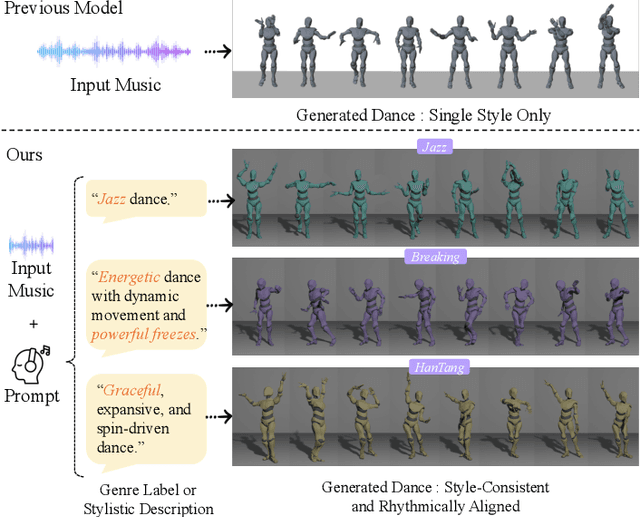

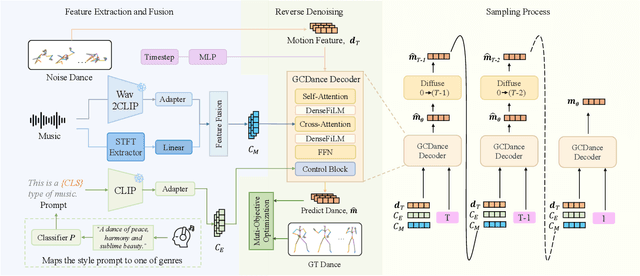

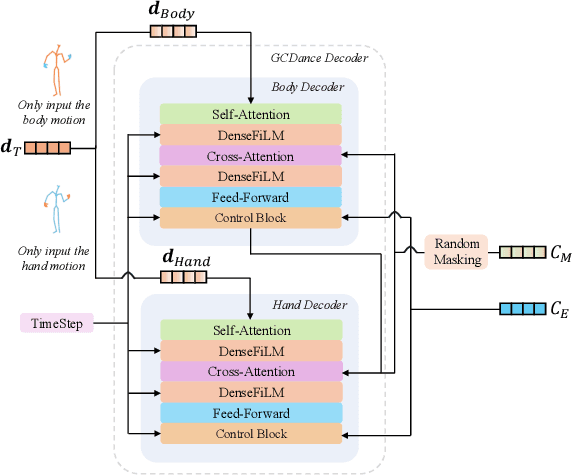

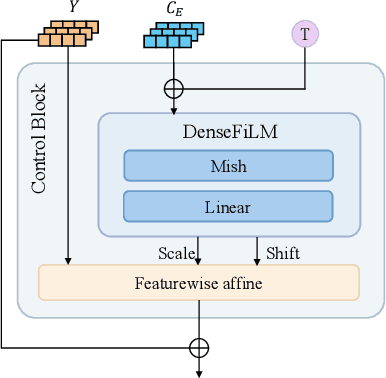

GCDance: Genre-Controlled 3D Full Body Dance Generation Driven By Music

Feb 25, 2025

Abstract: Generating high-quality full-body dance sequences from music is a challenging task, as it requires strict adherence to genre-specific choreography. Moreover, the generated sequences must be both physically realistic and precisely synchronized with the beats and rhythm of the music. To overcome these challenges, we propose GCDance, a classifier-free diffusion framework for generating genre-specific dance motions conditioned on both music and textual prompts. Specifically, our approach extracts music features by combining high-level features from a pre-trained music foundation model with hand-crafted features for multi-granularity feature fusion. To achieve genre controllability, we leverage CLIP to efficiently embed genre-based textual prompt representations at each time step within our dance generation pipeline. Our GCDance framework can generate diverse dance styles from the same piece of music while ensuring coherence with the rhythm and melody of the music. Extensive experimental results on the FineDance dataset demonstrate that GCDance significantly outperforms existing state-of-the-art approaches, while also achieving competitive results on the AIST++ dataset. Our ablation and inference-time analyses demonstrate that GCDance provides an effective solution for high-quality music-driven dance generation.
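
GCDance is described as a classifier-free diffusion framework. The sketch below shows the standard classifier-free guidance recipe that such frameworks build on: the condition is randomly dropped during training, and at sampling time the conditional and unconditional predictions of the same network are blended. The abstract does not state whether GCDance predicts noise or clean motion, or which guidance scale it uses; `denoiser` is a hypothetical callable that accepts `None` as the null condition.

```python
import numpy as np


def drop_condition(cond, p_uncond, rng):
    """Training-time condition dropout: with probability p_uncond, train unconditionally."""
    return None if rng.random() < p_uncond else cond


def guided_prediction(denoiser, x_t, t, cond, guidance_scale):
    """Classifier-free guidance at sampling time.

    Blends the conditional and unconditional outputs of one network:
    scale 0 -> unconditional, scale 1 -> conditional, scale > 1 -> stronger conditioning.
    """
    pred_cond = denoiser(x_t, t, cond)    # e.g. music features + genre text embedding
    pred_uncond = denoiser(x_t, t, None)  # condition replaced by a null token
    return pred_uncond + guidance_scale * (pred_cond - pred_uncond)
```

Under this recipe, keeping the music features fixed and changing only the genre prompt inside `cond` is what would let one network produce different styles for the same piece of music.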

Interpretable Long-term Action Quality Assessment

Aug 21, 2024

Abstract: Long-term Action Quality Assessment (AQA) evaluates the execution of activities in videos. However, the length of such videos makes fine-grained interpretability difficult: current AQA methods typically produce a single score by averaging clip features and therefore lack detailed semantic meaning for individual clips. Long-term videos pose additional difficulty due to the complexity and diversity of actions, which further exacerbates interpretability challenges. While query-based transformer networks offer promising long-term modeling capabilities, their interpretability in AQA remains unsatisfactory due to a phenomenon we term Temporal Skipping, in which the model skips self-attention layers to prevent output degradation. To address this, we propose an attention loss function and a query initialization method that enhance both performance and interpretability. Additionally, we introduce a weight-score regression module, designed to approximate the scoring patterns observed in human judgments, which replaces conventional single-score regression and improves the rationality of the resulting interpretations. Our approach achieves state-of-the-art results on three real-world, long-term AQA benchmarks. Our code is available at: https://github.com/dx199771/Interpretability-AQA
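
One plausible reading of weight-score regression, sketched under assumptions: instead of regressing a single score from averaged clip features, the model predicts a score and an importance logit for every clip, and the final score is the softmax-weighted sum of the clip scores. The function name and the softmax weighting are illustrative choices, not the paper's confirmed formulation.

```python
import numpy as np


def weight_score_regression(clip_scores, clip_logits):
    """Combine per-clip scores using softmax-normalised per-clip weights.

    Returns the overall score and the weights, so each clip's contribution
    (weight * score) can be inspected.
    """
    scores = np.asarray(clip_scores, dtype=float)
    logits = np.asarray(clip_logits, dtype=float)
    weights = np.exp(logits - logits.max())  # subtract max for numerical stability
    weights /= weights.sum()
    return float(weights @ scores), weights
```

With equal logits this reduces to a plain average of the clip scores. Because the per-clip scores and weights stay available, a reader can see which clips drove the final score, loosely analogous to how a judge weighs individual elements of a routine.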

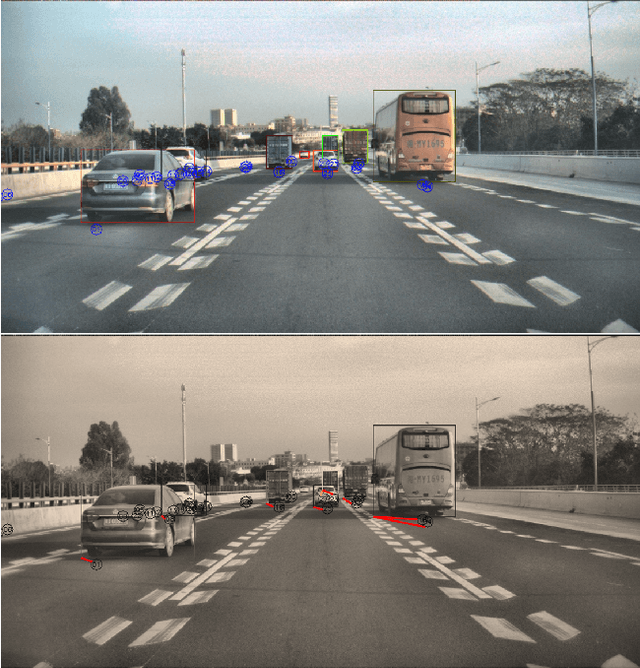

Radar Camera Fusion via Representation Learning in Autonomous Driving

Mar 14, 2021

Abstract: Radars and cameras are mature, cost-effective, and robust sensors that have been widely used in the perception stack of mass-produced autonomous driving systems. Due to their complementary properties, outputs from radar detection (radar pins) and camera perception (2D bounding boxes) are usually fused to obtain the best perception results. The key to successful radar-camera fusion is accurate data association. The challenges in radar-camera association can be attributed to the complexity of driving scenes, the noisy and sparse nature of radar measurements, and the depth ambiguity of 2D bounding boxes. Traditional rule-based association methods are susceptible to performance degradation in challenging scenarios and to failure in corner cases. In this study, we propose to address radar-camera (rad-cam) association via deep representation learning, exploring feature-level interaction and global reasoning. Concretely, we design a loss sampling mechanism and an innovative ordinal loss to overcome the difficulty of imperfect labeling and to enforce critical human reasoning. Despite being trained with noisy labels generated by a rule-based algorithm, our proposed method achieves a 92.2% F1 score, 11.6% higher than its rule-based teacher. Moreover, this data-driven method lends itself to continuous improvement via corner case mining.

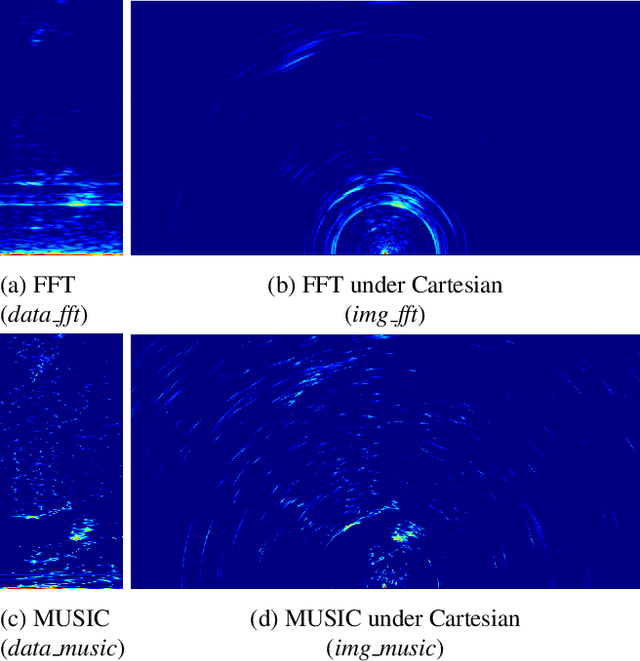

Probabilistic Oriented Object Detection in Automotive Radar

Apr 18, 2020

Abstract: Automotive radar has been an integral part of advanced driver assistance systems due to its robustness to adverse weather and varied lighting conditions. Conventional automotive radars use digital signal processing (DSP) algorithms to process raw data into sparse radar pins that provide no information about the size and orientation of objects. In this paper, we propose a deep-learning-based algorithm for radar object detection. The algorithm takes radar data in its raw tensor representation and places probabilistic oriented bounding boxes around detected objects in bird's-eye-view space. We created a new multimodal dataset with 102,544 frames of raw radar and synchronized LiDAR data. To reduce human annotation effort, we developed a scalable pipeline that automatically annotates ground truth using LiDAR as reference. Based on this dataset, we developed a vehicle detection pipeline that uses raw radar data as its only input. Our best-performing radar detection model achieves 77.28% AP at an oriented IoU threshold of 0.3. To the best of our knowledge, this is the first attempt to investigate object detection with raw radar data for conventional corner automotive radars.
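
The reported AP uses an oriented IoU threshold of 0.3. Oriented (rotated-box) IoU in bird's-eye view can be computed by clipping one box polygon against the other with Sutherland-Hodgman clipping and comparing areas via the shoelace formula. The sketch below assumes boxes parameterized as `(cx, cy, length, width, yaw)`; it ignores the probabilistic part of the paper's boxes and is not the authors' evaluation code.

```python
import math


def box_corners(cx, cy, length, width, yaw):
    """Corners of an oriented bird's-eye-view box, in counter-clockwise order."""
    c, s = math.cos(yaw), math.sin(yaw)
    hl, hw = length / 2.0, width / 2.0
    local = [(hl, hw), (-hl, hw), (-hl, -hw), (hl, -hw)]
    return [(cx + x * c - y * s, cy + x * s + y * c) for x, y in local]


def polygon_area(pts):
    """Shoelace formula (absolute value, so orientation does not matter)."""
    n = len(pts)
    twice = sum(pts[i][0] * pts[(i + 1) % n][1] - pts[(i + 1) % n][0] * pts[i][1]
                for i in range(n))
    return abs(twice) / 2.0


def clip_polygon(subject, clipper):
    """Sutherland-Hodgman: clip `subject` by the convex, counter-clockwise `clipper`."""
    def inside(p, a, b):
        # True if p lies on the left of (or on) the directed edge a -> b.
        return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0]) >= 0

    def intersect(p, q, a, b):
        # Intersection of segment p-q with the line through a and b.
        (x1, y1), (x2, y2), (x3, y3), (x4, y4) = p, q, a, b
        den = (x1 - x2) * (y3 - y4) - (y1 - y2) * (x3 - x4)
        t = ((x1 - x3) * (y3 - y4) - (y1 - y3) * (x3 - x4)) / den
        return (x1 + t * (x2 - x1), y1 + t * (y2 - y1))

    output = list(subject)
    for k in range(len(clipper)):
        a, b = clipper[k], clipper[(k + 1) % len(clipper)]
        points, output = output, []
        if not points:
            break  # boxes do not overlap
        prev = points[-1]
        for cur in points:
            if inside(cur, a, b):
                if not inside(prev, a, b):
                    output.append(intersect(prev, cur, a, b))
                output.append(cur)
            elif inside(prev, a, b):
                output.append(intersect(prev, cur, a, b))
            prev = cur
    return output


def oriented_iou(box_a, box_b):
    """IoU of two oriented boxes, each given as (cx, cy, length, width, yaw)."""
    pa, pb = box_corners(*box_a), box_corners(*box_b)
    inter_poly = clip_polygon(pa, pb)
    inter = polygon_area(inter_poly) if len(inter_poly) >= 3 else 0.0
    union = polygon_area(pa) + polygon_area(pb) - inter
    return inter / union if union > 0 else 0.0
```

Under this metric, a prediction could be matched to a ground-truth box only if `oriented_iou(pred, gt) >= 0.3`. As a sanity check, two 2 x 2 squares sharing a center but rotated 45 degrees relative to each other overlap in a regular octagon, giving an IoU of 1/√2 (about 0.707).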

Sinogram interpolation for sparse-view micro-CT with deep learning neural network

Feb 12, 2019

Abstract: In sparse-view Computed Tomography (CT), only a small number of projection images are taken around the object, and the sinogram interpolation method has a significant impact on final image quality. When the sparsity (the proportion of missing views in the sinogram data) is low, conventional interpolation methods yield good results; when it is high, more advanced sinogram interpolation methods are needed. Recently, several deep learning (DL) based sinogram interpolation methods have been proposed. However, these DL-based methods have so far mostly been tested on computer-simulated sinogram data rather than experimentally acquired sinogram data. In this study, we developed a sinogram interpolation method for sparse-view micro-CT based on the combination of U-Net and residual learning. We applied the method to sinogram data obtained from sparse-view micro-CT experiments, where the sparsity reached 90%. The sinogram interpolated by the DL neural network was fed to the filtered back-projection (FBP) algorithm for reconstruction. The results show that both the RMSE and SSIM of the reconstructed CT images are greatly improved. The experimental results demonstrate that this sinogram interpolation method produces significantly better results than standard linear interpolation methods when the sinogram data are extremely sparse.
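
The abstract compares against standard linear interpolation and describes a U-Net with residual learning. The sketch below simulates 90% sparsity by keeping every 10th projection angle, fills the missing views with the linear-interpolation baseline along the angle axis, and expresses the residual-learning idea as a correction added to that baseline. The `(n_views, n_detectors)` sinogram layout and all function names are assumptions; `residual_net` is a placeholder for a trained network such as a U-Net.

```python
import numpy as np


def sparsify(sinogram, keep_every):
    """Simulate sparse-view acquisition by keeping every `keep_every`-th view."""
    kept_idx = np.arange(0, sinogram.shape[0], keep_every)
    return sinogram[kept_idx], kept_idx


def linear_interpolate_views(sparse, kept_idx, n_views):
    """Baseline: fill missing views by linear interpolation along the angle axis."""
    all_idx = np.arange(n_views)
    full = np.empty((n_views, sparse.shape[1]))
    for d in range(sparse.shape[1]):  # one detector bin at a time
        full[:, d] = np.interp(all_idx, kept_idx, sparse[:, d])
    return full


def residual_refine(interpolated, residual_net):
    """Residual learning: the network only predicts a correction to its input."""
    return interpolated + residual_net(interpolated)


def rmse(a, b):
    """Root-mean-square error between two arrays."""
    return float(np.sqrt(np.mean((a - b) ** 2)))
```

With 360 views and `keep_every=10`, 36 views remain, matching the 90% sparsity mentioned above. Note that `np.interp` holds the last kept view constant past the final sample rather than wrapping around the angular range, so a full-rotation scan would need periodic handling.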

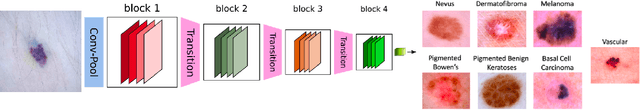

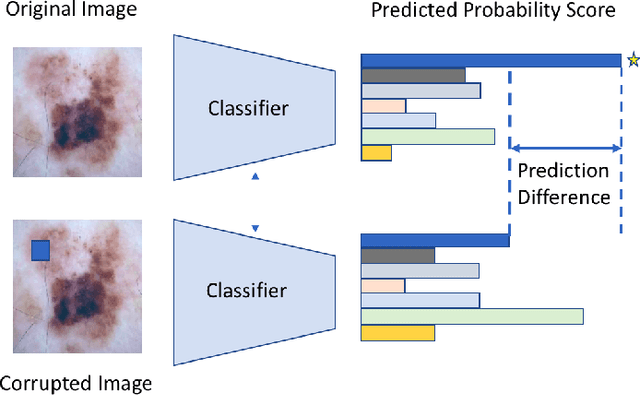

What evidence does deep learning model use to classify Skin Lesions?

Nov 02, 2018

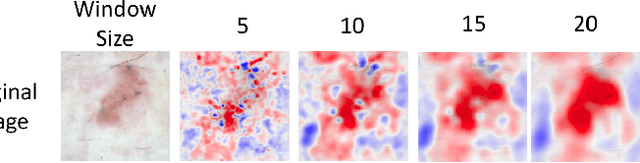

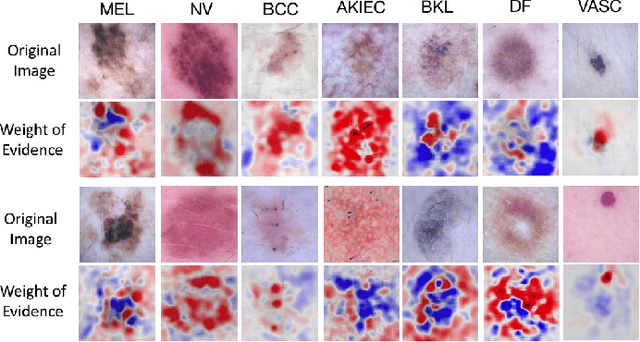

Abstract: Melanoma is a type of skin cancer with the most rapidly increasing incidence. Early detection of melanoma using dermoscopy images significantly increases patients' survival rate. However, accurately classifying skin lesions, especially in the early stage, is extremely challenging for dermatologists relying on visual observation. Hence, the discovery of reliable biomarkers for melanoma diagnosis would be valuable. In recent years, deep-learning-empowered computer-assisted diagnosis has shown its value in medical imaging-based decision making. However, much research focuses on improving disease detection accuracy rather than exploring the pathological evidence. In this paper, we propose a method to interpret deep learning classification findings. First, we propose an accurate neural network architecture to classify skin lesions. Second, we use a prediction difference analysis method that examines each image patch through patch-wise corruption to detect biomarkers. Lastly, we validate that our biomarker findings correspond to patterns reported in the literature. These findings may help guide clinical diagnosis.
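
A simplified sketch of patch-wise prediction difference analysis, assuming a classifier `predict(image)` that returns a vector of class probabilities. Each patch is repeatedly overwritten with pixels sampled at random from the image, the target-class probability is averaged over these corruptions, and the patch's relevance is the resulting drop in log-odds (its weight of evidence). The patch size, sample count, and sampling scheme are illustrative assumptions, not the paper's settings.

```python
import numpy as np


def log_odds(p, eps=1e-6):
    """Base-2 log-odds, clipped to avoid division by zero."""
    p = np.clip(p, eps, 1 - eps)
    return np.log2(p / (1 - p))


def patch_relevance(predict, image, target_class, patch=8, n_samples=10, seed=0):
    """Weight of evidence of each (patch x patch) region for `target_class`."""
    rng = np.random.default_rng(seed)
    h, w = image.shape[:2]
    extra = image.shape[2:]              # () for grayscale, (C,) for colour
    pixels = image.reshape(-1, *extra)   # pool of pixels to sample from
    base = predict(image)[target_class]
    relevance = np.zeros((h // patch, w // patch))
    for i in range(h // patch):
        for j in range(w // patch):
            ys = slice(i * patch, (i + 1) * patch)
            xs = slice(j * patch, (j + 1) * patch)
            probs = []
            for _ in range(n_samples):
                corrupted = image.copy()
                idx = rng.integers(0, pixels.shape[0], size=patch * patch)
                corrupted[ys, xs] = pixels[idx].reshape(patch, patch, *extra)
                probs.append(predict(corrupted)[target_class])
            # Marginalise over corruptions, then compare log-odds with the clean image.
            relevance[i, j] = log_odds(base) - log_odds(np.mean(probs))
    return relevance
```

Patches with large positive relevance are those whose corruption most reduces confidence in the target class, i.e. candidate biomarker regions that could then be compared against patterns described in the dermatology literature.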