Xiaoxi Wei

The 'Sandwich' meta-framework for architecture agnostic deep privacy-preserving transfer learning for non-invasive brainwave decoding

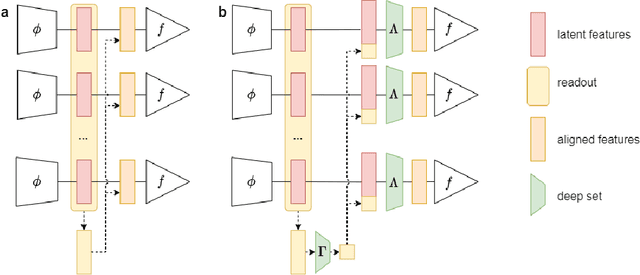

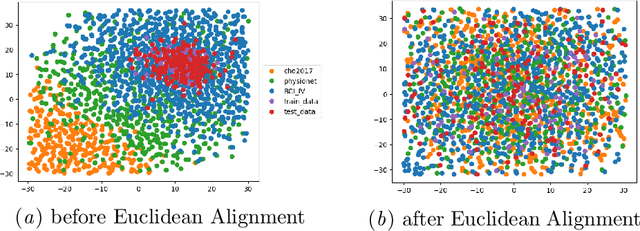

Apr 10, 2024

Abstract: Machine learning has enhanced the performance of decoding signals indicating human behaviour. EEG decoding, as an exemplar indicating neural activity and human thoughts non-invasively, has been helpful in neural activity analysis and aiding patients via brain-computer interfaces. However, training machine learning algorithms on EEG encounters two primary challenges: variability across data sets and privacy concerns using data from individuals and data centres. Our objective is to address these challenges by integrating transfer learning for data variability and federated learning for data privacy into a unified approach. We introduce the Sandwich as a novel deep privacy-preserving meta-framework combining transfer learning and federated learning. The Sandwich framework comprises three components: federated networks (first layers) that handle data set differences at the input level, a shared network (middle layer) learning common rules and applying transfer learning, and individual classifiers (final layers) for specific tasks of each data set. It enables the central network (central server) to benefit from multiple data sets, while local branches (local servers) maintain data and label privacy. We evaluated the 'Sandwich' meta-architecture in various configurations using the BEETL motor imagery challenge, a benchmark for heterogeneous EEG data sets. Compared with baseline models, our 'Sandwich' implementations showed superior performance. The best-performing model, the Inception Sandwich with deep set alignment (Inception-SD-Deepset), exceeded baseline methods by 9%. The 'Sandwich' framework demonstrates significant advancements in federated deep transfer learning for diverse tasks and data sets. It outperforms conventional deep learning methods, showcasing the potential for effective use of larger, heterogeneous data sets with enhanced privacy as a model-agnostic meta-framework.
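The three-part layout described in this abstract can be pictured with a minimal sketch. It only illustrates how parameters might be split into per-data-set first layers, one shared middle layer, and per-data-set classifiers. It does not implement federated training, privacy protection, or the Inception-based models from the paper, and every name in it (`SandwichSketch`, `hidden`, the data set keys "A" and "B") is hypothetical.

```python
import random

def linear(weights, x):
    """Multiply a weight matrix (a list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]

def relu(x):
    return [max(0.0, v) for v in x]

def init(rows, cols, rng):
    """Small random weight matrix with the given shape."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

class SandwichSketch:
    """Toy model: per-data-set input layer, shared middle layer, per-data-set head."""

    def __init__(self, input_dims, n_classes, hidden=8, seed=0):
        rng = random.Random(seed)
        # First layers: one per data set, mapping its own input size to a common size.
        self.first = {name: init(hidden, d, rng) for name, d in input_dims.items()}
        # Middle layer: a single weight matrix used by every data set.
        self.shared = init(hidden, hidden, rng)
        # Final layers: one classifier per data set, each with its own label space.
        self.heads = {name: init(k, hidden, rng) for name, k in n_classes.items()}

    def forward(self, name, x):
        h = relu(linear(self.first[name], x))
        h = relu(linear(self.shared, h))
        return linear(self.heads[name], h)

# Two hypothetical data sets with different input sizes and label counts.
model = SandwichSketch({"A": 22, "B": 64}, {"A": 4, "B": 3})
logits_a = model.forward("A", [0.1] * 22)  # one score per class of data set A
logits_b = model.forward("B", [0.1] * 64)  # one score per class of data set B
```

In this sketch only `self.shared` is common to all data sets, which mirrors the idea that the middle layer is the part a central server could hold while the first and final layers stay with each local site.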

Survey of Consciousness Theory from Computational Perspective

Sep 18, 2023

Abstract: Human consciousness has been a long-lasting mystery for centuries, while machine intelligence and consciousness is an arduous pursuit. Researchers have developed diverse theories for interpreting the consciousness phenomenon in human brains from different perspectives and levels. This paper surveys several main branches of consciousness theories originating from different subjects including information theory, quantum physics, cognitive psychology, physiology and computer science, with the aim of bridging these theories from a computational perspective. It also discusses the existing evaluation metrics of consciousness and the possibility for current computational models to be conscious. Breaking the mystery of consciousness can be an essential step in building general artificial intelligence with computing machines.

EEG Decoding for Datasets with Heterogenous Electrode Configurations using Transfer Learning Graph Neural Networks

Jun 20, 2023

Abstract: Brain-Machine Interfacing (BMI) has greatly benefited from adopting machine learning methods for feature learning that require extensive data for training, which are often unavailable from a single dataset. Yet, it is difficult to combine data across labs or even data within the same lab collected over the years due to the variation in recording equipment and electrode layouts resulting in shifts in data distribution, changes in data dimensionality, and altered identity of data dimensions. Our objective is to overcome this limitation and learn from many different and diverse datasets across labs with different experimental protocols. To tackle the domain adaptation problem, we developed a novel machine learning framework combining graph neural networks (GNNs) and transfer learning methodologies for non-invasive Motor Imagery (MI) EEG decoding, as an example of BMI. Empirically, we focus on the challenges of learning from EEG data with different electrode layouts and varying numbers of electrodes. We utilise three MI EEG databases collected using very different numbers of EEG sensors (from 22 channels to 64) and layouts (from custom layouts to 10-20). Our model achieved the highest accuracy with lower standard deviations on the testing datasets. This indicates that the GNN-based transfer learning framework can effectively aggregate knowledge from multiple datasets with different electrode layouts, leading to improved generalization in subject-independent MI EEG classification. The findings of this study have important implications for Brain-Computer-Interface (BCI) research, as they highlight a promising method for overcoming the limitations posed by non-unified experimental setups. By enabling the integration of diverse datasets with varying electrode layouts, our proposed approach can help advance the development and application of BMI technologies.
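One reason graph-based models suit recordings with different electrode counts is that a message-passing step followed by pooling over nodes gives an output whose size does not depend on the number of nodes. The sketch below shows that property with generic mean aggregation. The abstract does not describe the paper's actual GNN layers, so the function `graph_embed` and its arguments are illustrative assumptions only.

```python
def graph_embed(node_features, adjacency, weight):
    """One mean-aggregation message-passing step, then mean pooling over nodes.

    node_features: one feature vector per electrode, each of length F
    adjacency:     one list of neighbour indices per electrode
    weight:        F_out x F matrix applied identically at every electrode
    """
    n = len(node_features)
    f = len(node_features[0])
    updated = []
    for i in range(n):
        group = [i] + list(adjacency[i])  # the node itself plus its neighbours
        mean = [sum(node_features[j][k] for j in group) / len(group) for k in range(f)]
        # Shared linear map followed by ReLU.
        updated.append([max(0.0, sum(w * m for w, m in zip(row, mean))) for row in weight])
    # Mean pooling: the result has len(weight) entries regardless of n.
    return [sum(u[k] for u in updated) / n for k in range(len(weight))]

def ring(n):
    """Hypothetical layout: each electrode is linked to its two ring neighbours."""
    return [[(i - 1) % n, (i + 1) % n] for i in range(n)]

weight = [[0.5, -0.2], [0.1, 0.3], [0.0, 1.0]]  # F_out = 3, F = 2
small = graph_embed([[0.1 * i, 1.0] for i in range(22)], ring(22), weight)
large = graph_embed([[0.1 * i, 1.0] for i in range(64)], ring(64), weight)
```

Both `small` and `large` have three entries even though the inputs have 22 and 64 electrodes, so a single downstream classifier could consume either.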

Federated deep transfer learning for EEG decoding using multiple BCI tasks

Nov 22, 2022

Abstract: Deep learning has been successful in BCI decoding. However, it is very data-hungry and requires pooling data from multiple sources. EEG data from various sources decrease the decoding performance due to negative transfer. Recently, transfer learning for EEG decoding has been suggested as a remedy and has become the subject of recent BCI competitions (e.g. BEETL), but there are two complications in combining data from many subjects. First, privacy is not protected as highly personal brain data needs to be shared (and copied across increasingly tight information governance boundaries). Moreover, BCI data are collected from different sources and are often based on different BCI tasks, which has been thought to limit their reusability. Here, we demonstrate a federated deep transfer learning technique, the Multi-dataset Federated Separate-Common-Separate Network (MF-SCSN), based on our previous work on SCSN, which integrates privacy-preserving properties into deep transfer learning to utilise data sets with different tasks. This framework trains a BCI decoder using different source data sets obtained from different imagery tasks (e.g. some data sets with hands and feet, vs others with single hands and tongue, etc). Therefore, by introducing privacy-preserving transfer learning techniques, we unlock the reusability and scalability of existing BCI data sets. We evaluated our federated transfer learning method on the NeurIPS 2021 BEETL competition BCI task. The proposed architecture outperformed the baseline decoder by 3%. Moreover, compared with the baseline and other transfer learning algorithms, our method protects the privacy of the brain data from different data centres.

2021 BEETL Competition: Advancing Transfer Learning for Subject Independence & Heterogenous EEG Data Sets

Feb 14, 2022

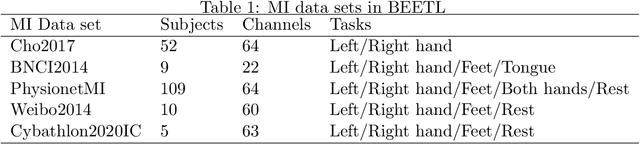

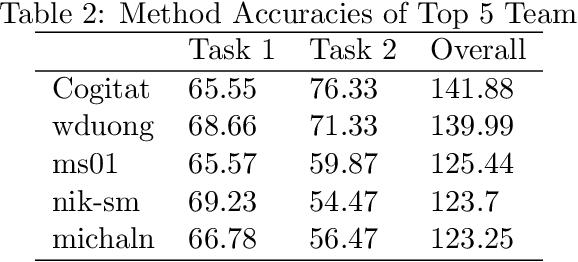

Abstract: Transfer learning and meta-learning offer some of the most promising avenues to unlock the scalability of healthcare and consumer technologies driven by biosignal data. This is because current methods cannot generalise well across human subjects' data and handle learning from different heterogeneously collected data sets, thus limiting the scale of training data. On the other hand, developments in transfer learning would benefit significantly from a real-world benchmark with immediate practical application. Therefore, we pick electroencephalography (EEG) as an exemplar for what makes biosignal machine learning hard. We design two transfer learning challenges around diagnostics and Brain-Computer-Interfacing (BCI), that have to be solved in the face of low signal-to-noise ratios, major variability among subjects, differences in the data recording sessions and techniques, and even between the specific BCI tasks recorded in the dataset. Task 1 is centred on the field of medical diagnostics, addressing automatic sleep stage annotation across subjects. Task 2 is centred on Brain-Computer Interfacing (BCI), addressing motor imagery decoding across both subjects and data sets. The BEETL competition with its over 30 competing teams and its 3 winning entries brought attention to the potential of deep transfer learning and combinations of set theory and conventional machine learning techniques to overcome the challenges. The results set a new state-of-the-art for the real-world BEETL benchmark.

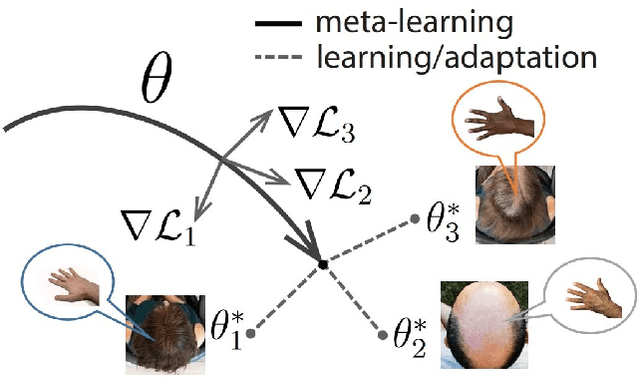

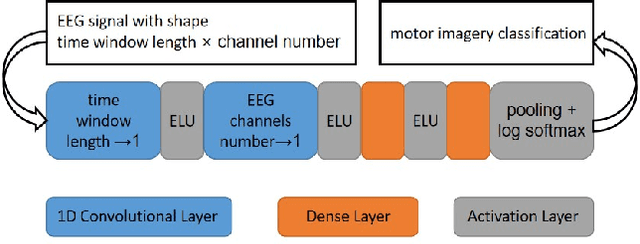

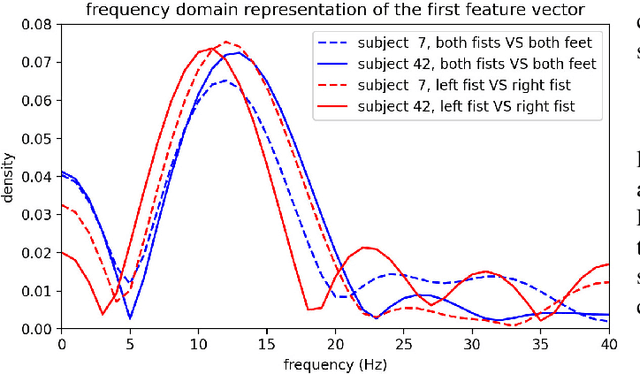

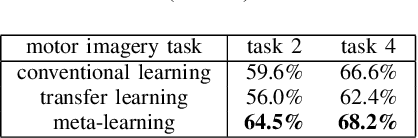

Model-Agnostic Meta-Learning for EEG Motor Imagery Decoding in Brain-Computer-Interfacing

Mar 10, 2021

Abstract: We introduce here the idea of Meta-Learning for training EEG BCI decoders. Meta-Learning is a way of training machine learning systems so they learn to learn. We apply here meta-learning to a simple Deep Learning BCI architecture and compare it to transfer learning on the same architecture. Our Meta-learning strategy operates by finding optimal parameters for the BCI decoder so that it can quickly generalise between different users and recording sessions -- thereby also generalising to new users or new sessions quickly. We tested our algorithm on the Physionet EEG motor imagery dataset. Our approach increased motor imagery classification accuracy between 60% and 80%, outperforming other algorithms under the little-data condition. We believe that establishing the meta-learning or learning-to-learn approach will help neural engineering and human interfacing with the challenges of quickly setting up decoders of neural signals to make them more suitable for daily life.
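The "learning to learn" idea can be illustrated with a toy version of model-agnostic meta-learning. In this sketch each task (standing in for a user or recording session) has the loss (theta - target)^2, the inner loop takes one gradient step per task, and the outer loop updates the shared starting point using the loss measured after that step. The scalar parameter, the quadratic loss, the learning rates, and the function names are all assumptions made for illustration. The paper applies meta-learning to a deep EEG decoder, not to this toy problem.

```python
def inner_adapt(theta, target, inner_lr):
    """One gradient step on the task loss (theta - target) ** 2."""
    return theta - inner_lr * 2.0 * (theta - target)

def maml_step(theta, targets, inner_lr, outer_lr):
    """One meta-update using the gradient of the post-adaptation loss."""
    meta_grad = 0.0
    for target in targets:
        adapted = inner_adapt(theta, target, inner_lr)
        # Chain rule through the inner step: d(adapted)/d(theta) = 1 - 2 * inner_lr.
        meta_grad += 2.0 * (adapted - target) * (1.0 - 2.0 * inner_lr)
    return theta - outer_lr * meta_grad / len(targets)

def meta_train(targets, steps=200, inner_lr=0.1, outer_lr=0.1, theta=0.0):
    """Repeat the meta-update and return the learned starting point."""
    for _ in range(steps):
        theta = maml_step(theta, targets, inner_lr, outer_lr)
    return theta

# Two hypothetical tasks whose optima are 1.0 and 3.0.
start = meta_train([1.0, 3.0])
```

For this symmetric toy problem the learned starting point moves towards the mean of the task optima, from which a single inner step reaches either task equally well.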

Inter-subject Deep Transfer Learning for Motor Imagery EEG Decoding

Mar 09, 2021

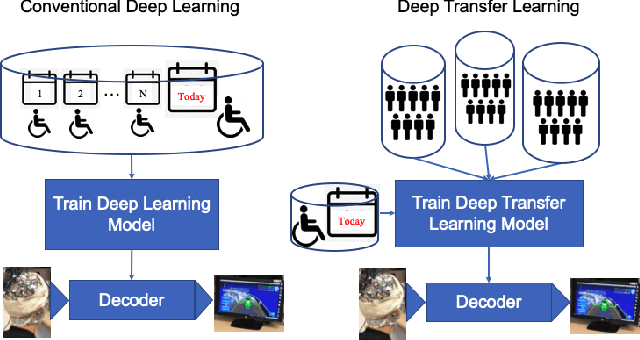

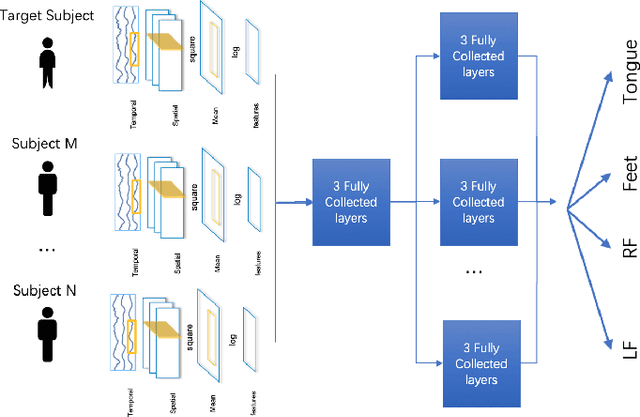

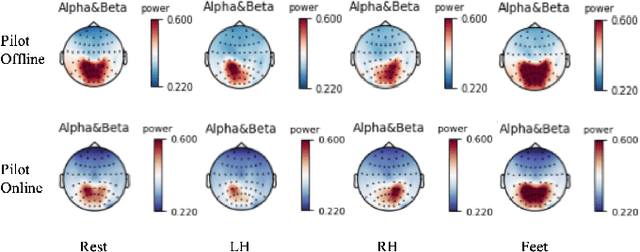

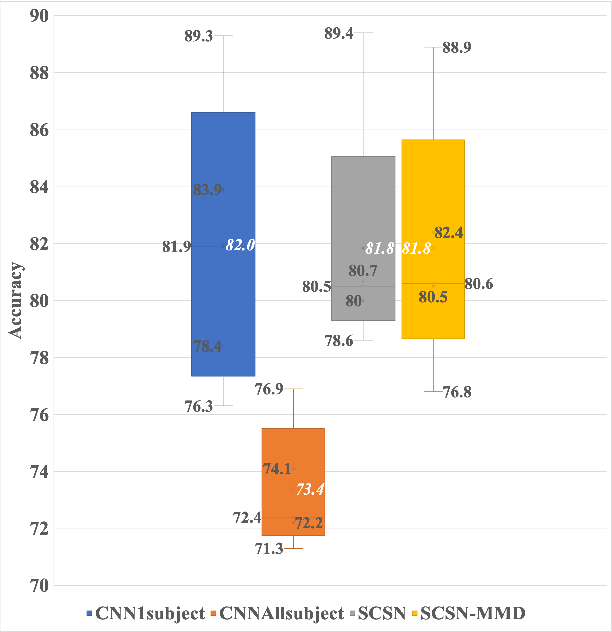

Abstract: Convolutional neural networks (CNNs) have become a powerful technique to decode EEG and have become the benchmark for motor imagery EEG Brain-Computer-Interface (BCI) decoding. However, it is still challenging to train CNNs on multiple subjects' EEG without decreasing individual performance. This is known as the negative transfer problem, i.e. learning from dissimilar distributions causes CNNs to misrepresent each of them instead of learning a richer representation. As a result, CNNs cannot directly use multiple subjects' EEG to enhance model performance. To address this problem, we extend deep transfer learning techniques to the EEG multi-subject training case. We propose a multi-branch deep transfer network, the Separate-Common-Separate Network (SCSN), based on splitting the network's feature extractors for individual subjects. We also explore the possibility of applying maximum mean discrepancy (MMD) to the SCSN (SCSN-MMD) to better align distributions of features from individual feature extractors. The proposed network is evaluated on the BCI Competition IV 2a dataset (BCICIV2a dataset) and our online recorded dataset. Results show that the proposed SCSN (81.8%, 53.2%) and SCSN-MMD (81.8%, 54.8%) outperformed the benchmark CNN (73.4%, 48.8%) on both datasets using multiple subjects. Our proposed networks show the potential to utilise larger multi-subject datasets to train an EEG decoder without being influenced by negative transfer.
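Maximum mean discrepancy measures how far apart two sets of feature vectors are, which is why it can serve as an alignment penalty between feature extractors. A minimal sketch of the squared MMD is given below. The abstract does not state which kernel or estimator the paper uses, so the RBF kernel, the `gamma` parameter, and the biased (V-statistic) estimator here are assumptions.

```python
import math

def rbf(a, b, gamma):
    """RBF kernel between two feature vectors."""
    return math.exp(-gamma * sum((p - q) ** 2 for p, q in zip(a, b)))

def mmd_squared(xs, ys, gamma=1.0):
    """Biased estimate of squared MMD between two samples of feature vectors."""
    def mean_kernel(us, vs):
        return sum(rbf(u, v, gamma) for u in us for v in vs) / (len(us) * len(vs))
    # Within-sample similarity of each set minus twice the cross-sample similarity.
    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)

# Hypothetical one-dimensional features from two subjects.
subject_a = [[0.0], [1.0]]
subject_b = [[5.0], [6.0]]
same = mmd_squared(subject_a, subject_a)     # identical samples
shifted = mmd_squared(subject_a, subject_b)  # clearly separated samples
```

The value is zero for identical samples and grows as the two feature distributions move apart, so adding it to a training loss would push the branches towards similar feature distributions.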
