Weitong Wu

WHU-PCPR: A cross-platform heterogeneous point cloud dataset for place recognition in complex urban scenes

Jan 10, 2026Abstract:Point Cloud-based Place Recognition (PCPR) demonstrates considerable potential in applications such as autonomous driving, robot localization and navigation, and map update. In practical applications, point clouds used for place recognition are often acquired from different platforms and LiDARs across varying scene. However, existing PCPR datasets lack diversity in scenes, platforms, and sensors, which limits the effective development of related research. To address this gap, we establish WHU-PCPR, a cross-platform heterogeneous point cloud dataset designed for place recognition. The dataset differentiates itself from existing datasets through its distinctive characteristics: 1) cross-platform heterogeneous point clouds: collected from survey-grade vehicle-mounted Mobile Laser Scanning (MLS) systems and low-cost Portable helmet-mounted Laser Scanning (PLS) systems, each equipped with distinct mechanical and solid-state LiDAR sensors. 2) Complex localization scenes: encompassing real-time and long-term changes in both urban and campus road scenes. 3) Large-scale spatial coverage: featuring 82.3 km of trajectory over a 60-month period and an unrepeated route of approximately 30 km. Based on WHU-PCPR, we conduct extensive evaluation and in-depth analysis of several representative PCPR methods, and provide a concise discussion of key challenges and future research directions. The dataset and benchmark code are available at https://github.com/zouxianghong/WHU-PCPR.

HybridMamba: A Dual-domain Mamba for 3D Medical Image Segmentation

Sep 18, 2025Abstract:In the domain of 3D biomedical image segmentation, Mamba exhibits the superior performance for it addresses the limitations in modeling long-range dependencies inherent to CNNs and mitigates the abundant computational overhead associated with Transformer-based frameworks when processing high-resolution medical volumes. However, attaching undue importance to global context modeling may inadvertently compromise critical local structural information, thus leading to boundary ambiguity and regional distortion in segmentation outputs. Therefore, we propose the HybridMamba, an architecture employing dual complementary mechanisms: 1) a feature scanning strategy that progressively integrates representations both axial-traversal and local-adaptive pathways to harmonize the relationship between local and global representations, and 2) a gated module combining spatial-frequency analysis for comprehensive contextual modeling. Besides, we collect a multi-center CT dataset related to lung cancer. Experiments on MRI and CT datasets demonstrate that HybridMamba significantly outperforms the state-of-the-art methods in 3D medical image segmentation.

Reliable-loc: Robust sequential LiDAR global localization in large-scale street scenes based on verifiable cues

Nov 09, 2024Abstract:Wearable laser scanning (WLS) system has the advantages of flexibility and portability. It can be used for determining the user's path within a prior map, which is a huge demand for applications in pedestrian navigation, collaborative mapping, augmented reality, and emergency rescue. However, existing LiDAR-based global localization methods suffer from insufficient robustness, especially in complex large-scale outdoor scenes with insufficient features and incomplete coverage of the prior map. To address such challenges, we propose LiDAR-based reliable global localization (Reliable-loc) exploiting the verifiable cues in the sequential LiDAR data. First, we propose a Monte Carlo Localization (MCL) based on spatially verifiable cues, utilizing the rich information embedded in local features to adjust the particles' weights hence avoiding the particles converging to erroneous regions. Second, we propose a localization status monitoring mechanism guided by the sequential pose uncertainties and adaptively switching the localization mode using the temporal verifiable cues to avoid the crash of the localization system. To validate the proposed Reliable-loc, comprehensive experiments have been conducted on a large-scale heterogeneous point cloud dataset consisting of high-precision vehicle-mounted mobile laser scanning (MLS) point clouds and helmet-mounted WLS point clouds, which cover various street scenes with a length of over 20km. The experimental results indicate that Reliable-loc exhibits high robustness, accuracy, and efficiency in large-scale, complex street scenes, with a position accuracy of 1.66m, yaw accuracy of 3.09 degrees, and achieves real-time performance. For the code and detailed experimental results, please refer to https://github.com/zouxianghong/Reliable-loc.

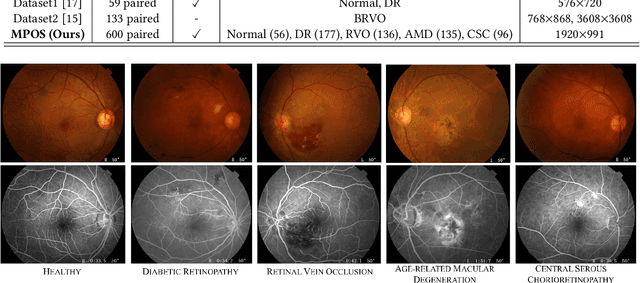

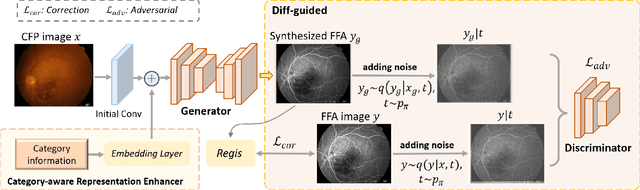

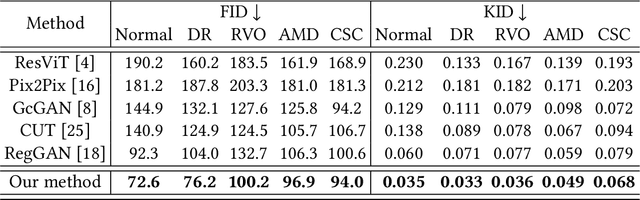

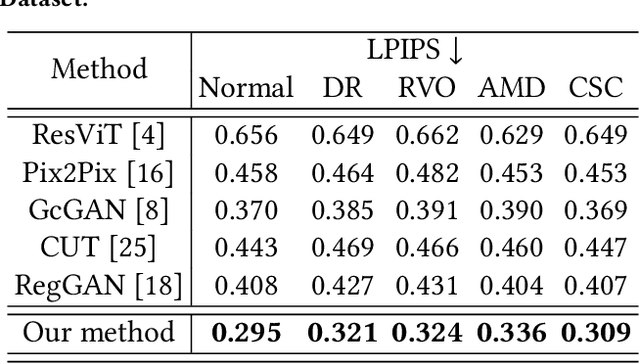

Non-Invasive to Invasive: Enhancing FFA Synthesis from CFP with a Benchmark Dataset and a Novel Network

Oct 19, 2024

Abstract:Fundus imaging is a pivotal tool in ophthalmology, and different imaging modalities are characterized by their specific advantages. For example, Fundus Fluorescein Angiography (FFA) uniquely provides detailed insights into retinal vascular dynamics and pathology, surpassing Color Fundus Photographs (CFP) in detecting microvascular abnormalities and perfusion status. However, the conventional invasive FFA involves discomfort and risks due to fluorescein dye injection, and it is meaningful but challenging to synthesize FFA images from non-invasive CFP. Previous studies primarily focused on FFA synthesis in a single disease category. In this work, we explore FFA synthesis in multiple diseases by devising a Diffusion-guided generative adversarial network, which introduces an adaptive and dynamic diffusion forward process into the discriminator and adds a category-aware representation enhancer. Moreover, to facilitate this research, we collect the first multi-disease CFP and FFA paired dataset, named the Multi-disease Paired Ocular Synthesis (MPOS) dataset, with four different fundus diseases. Experimental results show that our FFA synthesis network can generate better FFA images compared to state-of-the-art methods. Furthermore, we introduce a paired-modal diagnostic network to validate the effectiveness of synthetic FFA images in the diagnosis of multiple fundus diseases, and the results show that our synthesized FFA images with the real CFP images have higher diagnosis accuracy than that of the compared FFA synthesizing methods. Our research bridges the gap between non-invasive imaging and FFA, thereby offering promising prospects to enhance ophthalmic diagnosis and patient care, with a focus on reducing harm to patients through non-invasive procedures. Our dataset and code will be released to support further research in this field (https://github.com/whq-xxh/FFA-Synthesis).

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge