Weifan Wang

Modeling Rapid Contextual Learning in the Visual Cortex with Fast-Weight Deep Autoencoder Networks

Aug 07, 2025Abstract:Recent neurophysiological studies have revealed that the early visual cortex can rapidly learn global image context, as evidenced by a sparsification of population responses and a reduction in mean activity when exposed to familiar versus novel image contexts. This phenomenon has been attributed primarily to local recurrent interactions, rather than changes in feedforward or feedback pathways, supported by both empirical findings and circuit-level modeling. Recurrent neural circuits capable of simulating these effects have been shown to reshape the geometry of neural manifolds, enhancing robustness and invariance to irrelevant variations. In this study, we employ a Vision Transformer (ViT)-based autoencoder to investigate, from a functional perspective, how familiarity training can induce sensitivity to global context in the early layers of a deep neural network. We hypothesize that rapid learning operates via fast weights, which encode transient or short-term memory traces, and we explore the use of Low-Rank Adaptation (LoRA) to implement such fast weights within each Transformer layer. Our results show that (1) The proposed ViT-based autoencoder's self-attention circuit performs a manifold transform similar to a neural circuit model of the familiarity effect. (2) Familiarity training aligns latent representations in early layers with those in the top layer that contains global context information. (3) Familiarity training broadens the self-attention scope within the remembered image context. (4) These effects are significantly amplified by LoRA-based fast weights. Together, these findings suggest that familiarity training introduces global sensitivity to earlier layers in a hierarchical network, and that a hybrid fast-and-slow weight architecture may provide a viable computational model for studying rapid global context learning in the brain.

CLIP-aware Domain-Adaptive Super-Resolution

May 18, 2025Abstract:This work introduces CLIP-aware Domain-Adaptive Super-Resolution (CDASR), a novel framework that addresses the critical challenge of domain generalization in single image super-resolution. By leveraging the semantic capabilities of CLIP (Contrastive Language-Image Pre-training), CDASR achieves unprecedented performance across diverse domains and extreme scaling factors. The proposed method integrates CLIP-guided feature alignment mechanism with a meta-learning inspired few-shot adaptation strategy, enabling efficient knowledge transfer and rapid adaptation to target domains. A custom domain-adaptive module processes CLIP features alongside super-resolution features through a multi-stage transformation process, including CLIP feature processing, spatial feature generation, and feature fusion. This intricate process ensures effective incorporation of semantic information into the super-resolution pipeline. Additionally, CDASR employs a multi-component loss function that combines pixel-wise reconstruction, perceptual similarity, and semantic consistency. Extensive experiments on benchmark datasets demonstrate CDASR's superiority, particularly in challenging scenarios. On the Urban100 dataset at $\times$8 scaling, CDASR achieves a significant PSNR gain of 0.15dB over existing methods, with even larger improvements of up to 0.30dB observed at $\times$16 scaling.

Differentiable NMS via Sinkhorn Matching for End-to-End Fabric Defect Detection

May 11, 2025Abstract:Fabric defect detection confronts two fundamental challenges. First, conventional non-maximum suppression disrupts gradient flow, which hinders genuine end-to-end learning. Second, acquiring pixel-level annotations at industrial scale is prohibitively costly. Addressing these limitations, we propose a differentiable NMS framework for fabric defect detection that achieves superior localization precision through end-to-end optimization. We reformulate NMS as a differentiable bipartite matching problem solved through the Sinkhorn-Knopp algorithm, maintaining uninterrupted gradient flow throughout the network. This approach specifically targets the irregular morphologies and ambiguous boundaries of fabric defects by integrating proposal quality, feature similarity, and spatial relationships. Our entropy-constrained mask refinement mechanism further enhances localization precision through principled uncertainty modeling. Extensive experiments on the Tianchi fabric defect dataset demonstrate significant performance improvements over existing methods while maintaining real-time speeds suitable for industrial deployment. The framework exhibits remarkable adaptability across different architectures and generalizes effectively to general object detection tasks.

Single-image reflection removal via self-supervised diffusion models

Dec 29, 2024Abstract:Reflections often degrade the visual quality of images captured through transparent surfaces, and reflection removal methods suffers from the shortage of paired real-world samples.This paper proposes a hybrid approach that combines cycle-consistency with denoising diffusion probabilistic models (DDPM) to effectively remove reflections from single images without requiring paired training data. The method introduces a Reflective Removal Network (RRN) that leverages DDPMs to model the decomposition process and recover the transmission image, and a Reflective Synthesis Network (RSN) that re-synthesizes the input image using the separated components through a nonlinear attention-based mechanism. Experimental results demonstrate the effectiveness of the proposed method on the SIR$^2$, Flash-Based Reflection Removal (FRR) Dataset, and a newly introduced Museum Reflection Removal (MRR) dataset, showing superior performance compared to state-of-the-art methods.

The Devil is in the Sources! Knowledge Enhanced Cross-Domain Recommendation in an Information Bottleneck Perspective

Sep 29, 2024

Abstract:Cross-domain Recommendation (CDR) aims to alleviate the data sparsity and the cold-start problems in traditional recommender systems by leveraging knowledge from an informative source domain. However, previously proposed CDR models pursue an imprudent assumption that the entire information from the source domain is equally contributed to the target domain, neglecting the evil part that is completely irrelevant to users' intrinsic interest. To address this concern, in this paper, we propose a novel knowledge enhanced cross-domain recommendation framework named CoTrans, which remolds the core procedures of CDR models with: Compression on the knowledge from the source domain and Transfer of the purity to the target domain. Specifically, following the theory of Graph Information Bottleneck, CoTrans first compresses the source behaviors with the perception of information from the target domain. Then to preserve all the important information for the CDR task, the feedback signals from both domains are utilized to promote the effectiveness of the transfer procedure. Additionally, a knowledge-enhanced encoder is employed to narrow gaps caused by the non-overlapped items across separate domains. Comprehensive experiments on three widely used cross-domain datasets demonstrate that CoTrans significantly outperforms both single-domain and state-of-the-art cross-domain recommendation approaches.

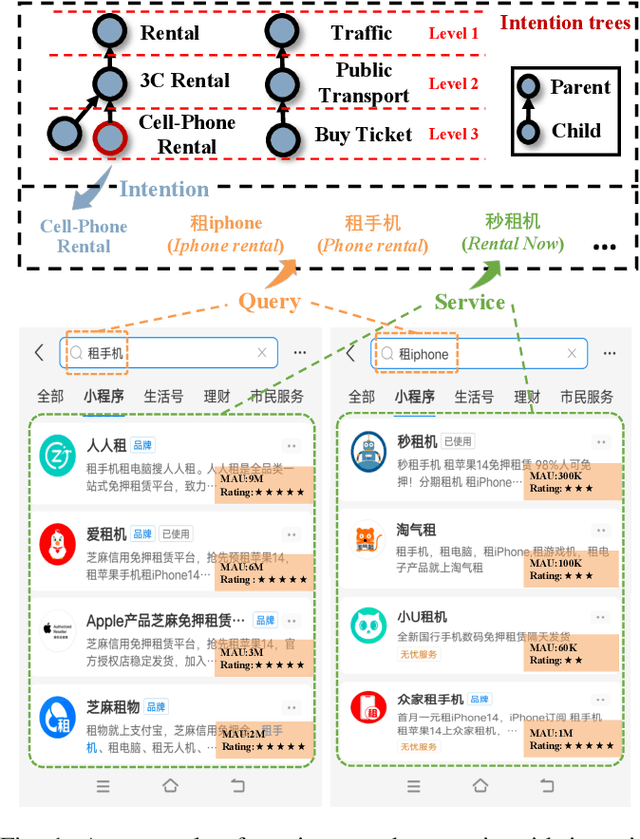

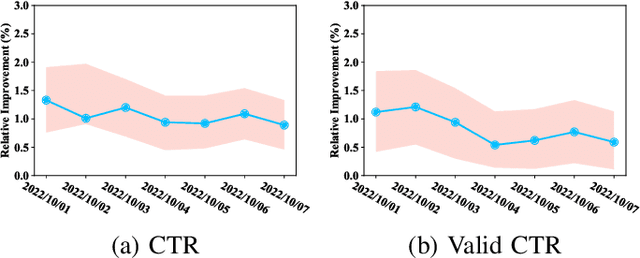

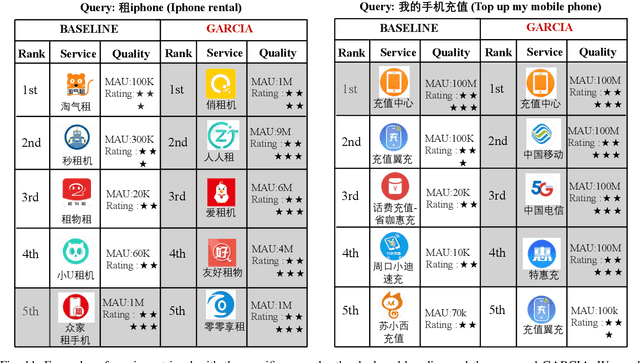

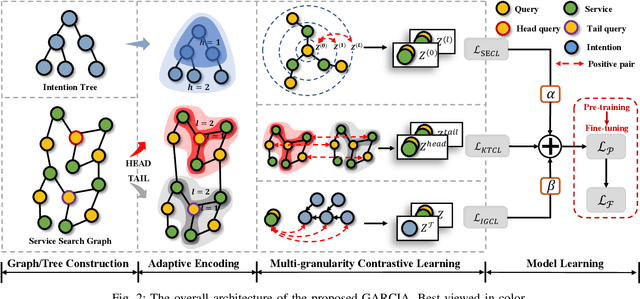

GARCIA: Powering Representations of Long-tail Query with Multi-granularity Contrastive Learning

Apr 25, 2023

Abstract:Recently, the growth of service platforms brings great convenience to both users and merchants, where the service search engine plays a vital role in improving the user experience by quickly obtaining desirable results via textual queries. Unfortunately, users' uncontrollable search customs usually bring vast amounts of long-tail queries, which severely threaten the capability of search models. Inspired by recently emerging graph neural networks (GNNs) and contrastive learning (CL), several efforts have been made in alleviating the long-tail issue and achieve considerable performance. Nevertheless, they still face a few major weaknesses. Most importantly, they do not explicitly utilize the contextual structure between heads and tails for effective knowledge transfer, and intention-level information is commonly ignored for more generalized representations. To this end, we develop a novel framework GARCIA, which exploits the graph based knowledge transfer and intention based representation generalization in a contrastive setting. In particular, we employ an adaptive encoder to produce informative representations for queries and services, as well as hierarchical structure aware representations of intentions. To fully understand tail queries and services, we equip GARCIA with a novel multi-granularity contrastive learning module, which powers representations through knowledge transfer, structure enhancement and intention generalization. Subsequently, the complete GARCIA is well trained in a pre-training&fine-tuning manner. At last, we conduct extensive experiments on both offline and online environments, which demonstrates the superior capability of GARCIA in improving tail queries and overall performance in service search scenarios.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge