Viacheslav Zakharov

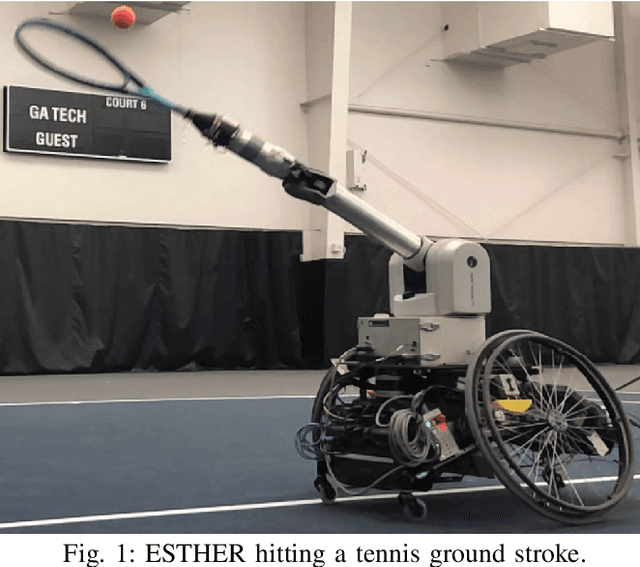

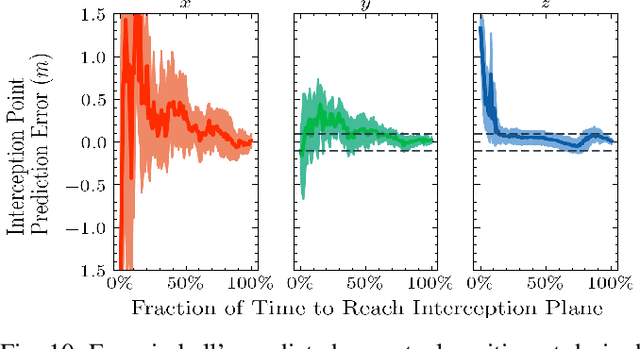

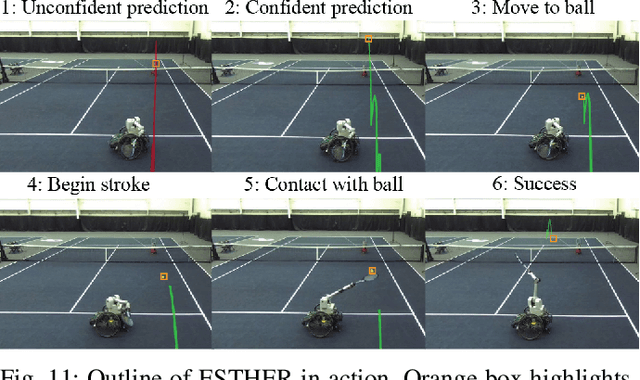

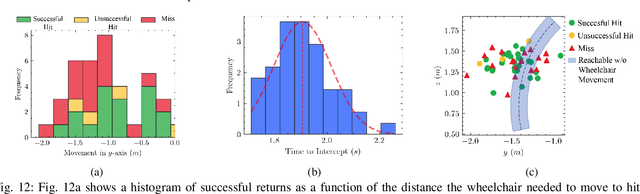

Athletic Mobile Manipulator System for Robotic Wheelchair Tennis

Oct 05, 2022

Abstract: Athletics are a quintessential and universal expression of humanity. From French monks who in the 12th century invented jeu de paume, the precursor to modern lawn tennis, back to the K'iche' people who played the Maya Ballgame as a form of religious expression over three thousand years ago, humans have sought to train their minds and bodies to excel in sporting contests. Advances in robotics are opening up the possibility of robots in sports. Yet, key challenges remain, as most prior works in robotics for sports are limited to pristine sensing environments, do not require significant force generation, or are on miniaturized scales unsuited for joint human-robot play. In this paper, we propose the first open-source, autonomous robot for playing regulation wheelchair tennis. We demonstrate the performance of our full-stack system in executing ground strokes and evaluate each of the system's hardware and software components. The goal of this paper is to (1) inspire more research in human-scale robot athletics and (2) establish the first baseline towards developing a robot in future work that can serve as a teammate for mixed, human-robot doubles play. Our paper contributes to the science of systems design and poses a set of key challenges for the robotics community to address in striving towards a vision of human-robot collaboration in sports.

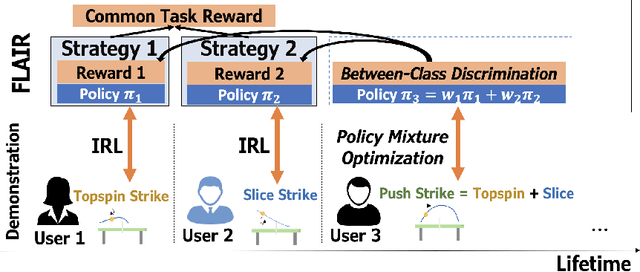

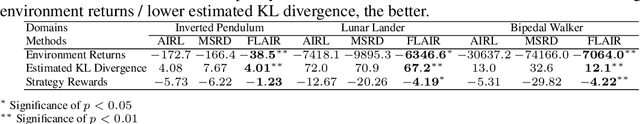

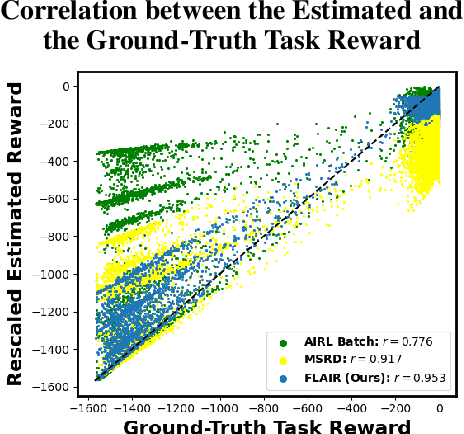

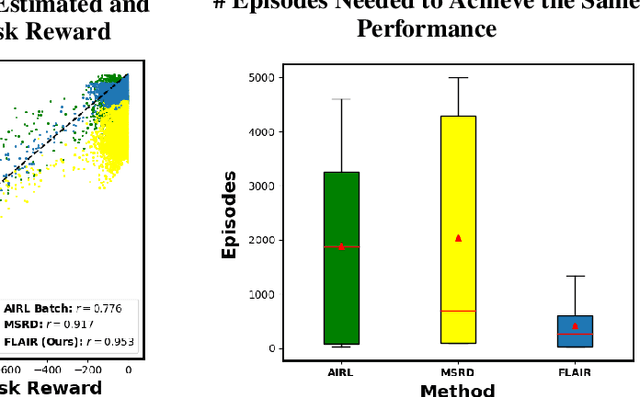

Fast Lifelong Adaptive Inverse Reinforcement Learning from Demonstrations

Sep 24, 2022

Abstract: Learning from Demonstration (LfD) approaches empower end-users to teach robots novel tasks via demonstrations of the desired behaviors, democratizing access to robotics. However, current LfD frameworks are capable of neither fast adaptation to heterogeneous human demonstrations nor large-scale deployment in ubiquitous robotics applications. In this paper, we propose a novel LfD framework, Fast Lifelong Adaptive Inverse Reinforcement Learning (FLAIR). Our approach (1) leverages learned strategies to construct policy mixtures for fast adaptation to new demonstrations, allowing for quick end-user personalization; (2) distills common knowledge across demonstrations, achieving accurate task inference; and (3) expands its model only when needed in lifelong deployments, maintaining a concise set of prototypical strategies that can approximate all behaviors via policy mixtures. We empirically validate that FLAIR achieves adaptability (i.e., the robot adapts to heterogeneous, user-specific task preferences), efficiency (i.e., the robot achieves sample-efficient adaptation), and scalability (i.e., the model grows sublinearly with the number of demonstrations while maintaining high performance). FLAIR surpasses benchmarks across three continuous control tasks with an average 57% improvement in policy returns and an average 78% fewer episodes required for demonstration modeling using policy mixtures. Finally, we demonstrate the success of FLAIR in a real-robot table tennis task.

Perceptual Attention-based Predictive Control

Apr 26, 2019

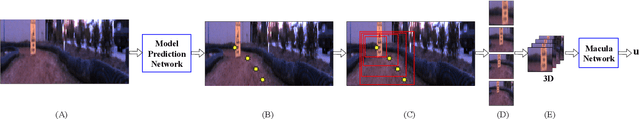

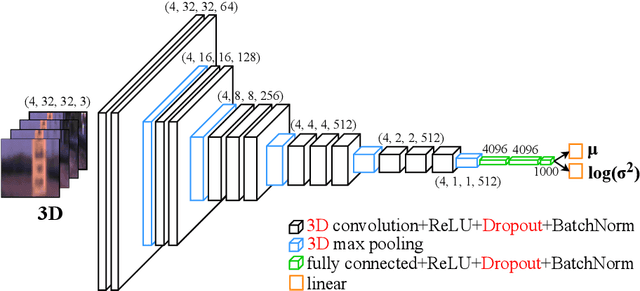

Abstract: In this paper, we present a novel information processing architecture for end-to-end visual navigation of autonomous systems. The proposed architecture supports a perceptual attention-based predictive control algorithm that leverages model predictive control, convolutional neural networks, and uncertainty quantification methods. The key idea is to use model predictive control to train convolutional neural networks to predict regions of interest in the input visual information. These regions of interest are then used as input to the Macula-Network, a 3D convolutional neural network that is trained to produce control actions as well as estimates of epistemic and aleatoric uncertainty in the incoming stream of data. The proposed architecture is tested on simulated examples and a 1:5 scale terrestrial vehicle. Experimental results show that the proposed architecture outperforms previous approaches on early detection of novel objects or data that fall outside the initial training set. The proposed architecture is a first step towards using end-to-end perceptual control policies in safety-critical domains.
