Uwe Springmann

Open Source Handwritten Text Recognition on Medieval Manuscripts using Mixed Models and Document-Specific Finetuning

Jan 19, 2022

Abstract:This paper deals with the task of practical and open source Handwritten Text Recognition (HTR) on German medieval manuscripts. We report on our efforts to construct mixed recognition models which can be applied out-of-the-box without any further document-specific training but also serve as a starting point for finetuning by training a new model on a few pages of transcribed text (ground truth). To train the mixed models we collected a corpus of 35 manuscripts and ca. 12.5k text lines for two widely used handwriting styles, Gothic and Bastarda cursives. Evaluating the mixed models out-of-the-box on four unseen manuscripts resulted in an average Character Error Rate (CER) of 6.22%. After training on 2, 4 and eventually 32 pages the CER dropped to 3.27%, 2.58%, and 1.65%, respectively. While the in-domain recognition and training of models (Bastarda model to Bastarda material, Gothic to Gothic) unsurprisingly yielded the best results, finetuning out-of-domain models to unseen scripts was still shown to be superior to training from scratch. Our new mixed models have been made openly available to the community.
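The abstracts on this page report Character Error Rates without spelling out the computation. A minimal sketch follows, assuming the common definition of CER as the Levenshtein (edit) distance between recognized text and ground truth, divided by the ground truth length. The function names `edit_distance` and `cer` are illustrative and are not taken from any of the tools named here.

```python
def edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance: minimum number of single-character
    insertions, deletions and substitutions turning ref into hyp."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # delete r
                            curr[j - 1] + 1,      # insert h
                            prev[j - 1] + cost))  # substitute or match
        prev = curr
    return prev[-1]


def cer(ref: str, hyp: str) -> float:
    """Character error rate relative to the ground truth length (assumed definition)."""
    if not ref:
        raise ValueError("ground truth line must not be empty")
    return edit_distance(ref, hyp) / len(ref)


if __name__ == "__main__":
    # One substituted character in a four-character line.
    print(f"{cer('abcd', 'abxd'):.2%}")
```

Under this definition a reported CER of 6.22% corresponds to roughly six character errors per hundred ground truth characters.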

Mixed Model OCR Training on Historical Latin Script for Out-of-the-Box Recognition and Finetuning

Jun 15, 2021

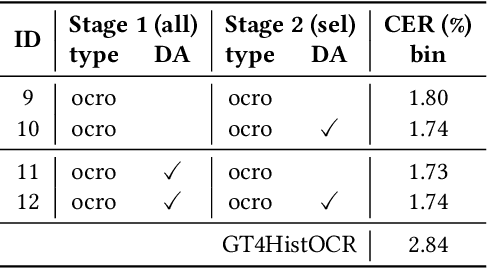

Abstract:In order to apply Optical Character Recognition (OCR) to historical printings of Latin script fully automatically, we report on our efforts to construct a widely-applicable polyfont recognition model yielding text with a Character Error Rate (CER) around 2% when applied out-of-the-box. Moreover, we show how this model can be further finetuned to specific classes of printings with little manual and computational effort. The mixed or polyfont model is trained on a wide variety of materials, in terms of age (from the 15th to the 19th century), typography (various types of Fraktur and Antiqua), and languages (among others, German, Latin, and French). To optimize the results we combined established techniques of OCR training like pretraining, data augmentation, and voting. In addition, we used various preprocessing methods to enrich the training data and obtain more robust models. We also implemented a two-stage approach which first trains on all available, considerably unbalanced data and then refines the output by training on a selected more balanced subset. Evaluations on 29 previously unseen books resulted in a CER of 1.73%, outperforming a widely used standard model with a CER of 2.84% by almost 40%. Training a more specialized model for some unseen Early Modern Latin books starting from our mixed model led to a CER of 1.47%, an improvement of up to 50% compared to training from scratch and up to 30% compared to training from the aforementioned standard model. Our new mixed model is made openly available to the community.
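The "almost 40%" figure above is a relative reduction of the error rate, not a difference in percentage points. The short sketch below reproduces that arithmetic from the two CER values given in the abstract; the helper name `relative_error_reduction` is an illustrative assumption.

```python
def relative_error_reduction(baseline_cer: float, new_cer: float) -> float:
    """Fraction of the baseline errors that the new model removes."""
    if baseline_cer <= 0:
        raise ValueError("baseline CER must be positive")
    return (baseline_cer - new_cer) / baseline_cer


if __name__ == "__main__":
    # CER of the standard model (2.84%) versus the mixed model (1.73%).
    reduction = relative_error_reduction(2.84, 1.73)
    print(f"relative reduction: {reduction:.1%}")
```

The absolute gap is only 1.11 percentage points, which is why the relative figure is the more informative one when error rates are already low.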

OCR4all -- An Open-Source Tool Providing a (Semi-)Automatic OCR Workflow for Historical Printings

Sep 09, 2019

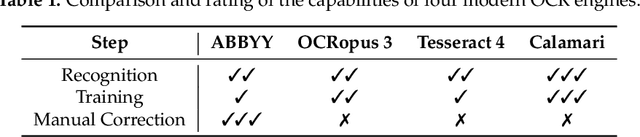

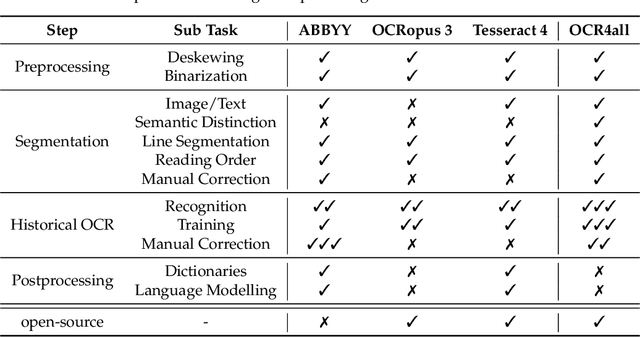

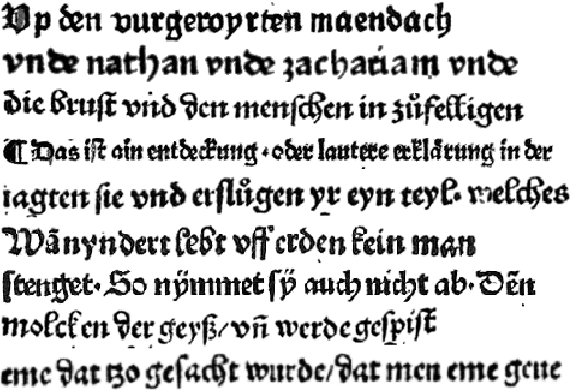

Abstract:Optical Character Recognition (OCR) on historical printings is a challenging task mainly due to the complexity of the layout and the highly variant typography. Nevertheless, in the last few years great progress has been made in the area of historical OCR, resulting in several powerful open-source tools for preprocessing, layout recognition and segmentation, character recognition and post-processing. A frequent drawback of these tools is that they are hard for non-technical users such as humanist scholars to apply, in particular when several tools have to be combined in a workflow. In this paper we present an open-source OCR software called OCR4all, which combines state-of-the-art OCR components and continuous model training into a comprehensive workflow. A comfortable GUI allows error corrections not only in the final output, but already in early stages to minimize error propagation. Furthermore, extensive configuration capabilities are provided to set the degree of automation of the workflow and to adapt the carefully selected default parameters to specific printings, if necessary. Experiments showed that users with minimal or no experience were able to capture the text of even the earliest printed books with manageable effort and great quality, achieving excellent character error rates (CERs) below 0.5%. The fully automated application on 19th century novels showed that OCR4all can considerably outperform the commercial state-of-the-art tool ABBYY FineReader on moderate layouts if suitably pretrained mixed OCR models are available. The architecture of OCR4all allows the easy integration (or substitution) of newly developed tools for its main components by standardized interfaces like PageXML, thus aiming at continually higher automation for historical printings.

State of the Art Optical Character Recognition of 19th Century Fraktur Scripts using Open Source Engines

Oct 08, 2018

Abstract:In this paper we evaluate Optical Character Recognition (OCR) of 19th century Fraktur scripts without book-specific training using mixed models, i.e. models trained to recognize a variety of fonts and typesets from previously unseen sources. We describe the training process leading to strong mixed OCR models and compare them to freely available models of the popular open source engines OCRopus and Tesseract as well as the commercial state-of-the-art system ABBYY. For evaluation, we use a varied collection of unseen data from books, journals, and a dictionary from the 19th century. The experiments show that training mixed models with real data is superior to training with synthetic data and that the novel OCR engine Calamari outperforms the other engines considerably, on average reducing ABBYY's character error rate (CER) by over 70%, resulting in an average CER below 1%.

Ground Truth for training OCR engines on historical documents in German Fraktur and Early Modern Latin

Sep 14, 2018

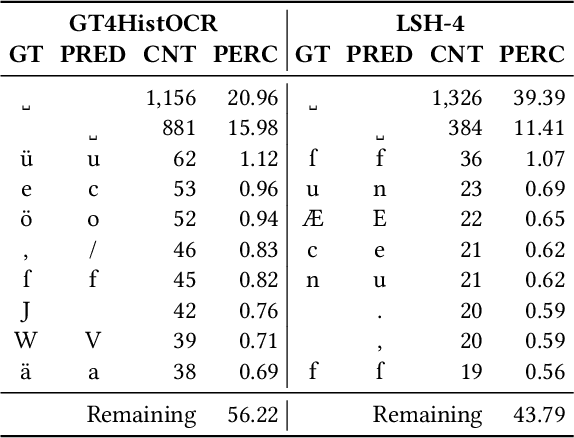

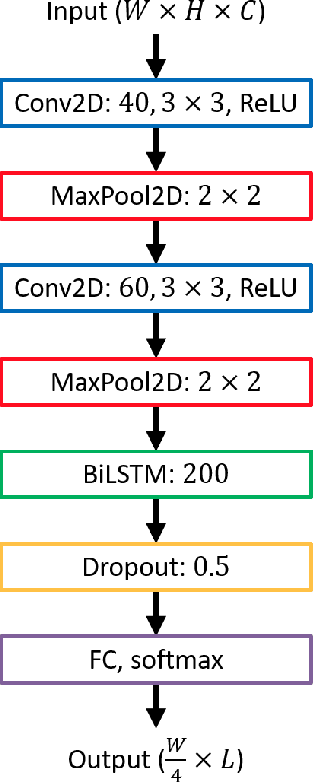

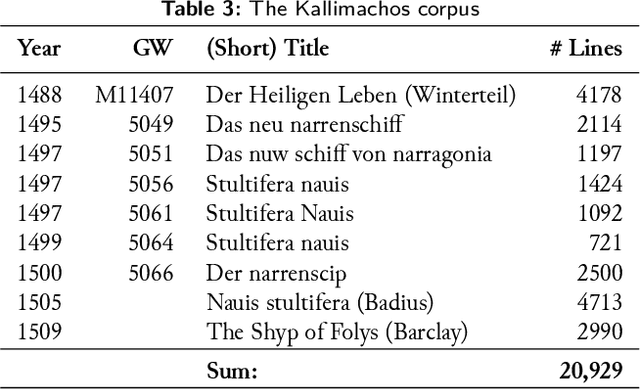

Abstract:In this paper we describe a dataset of German and Latin ground truth (GT) for historical OCR in the form of printed text line images paired with their transcription. This dataset, called GT4HistOCR, consists of 313,173 line pairs covering a wide period of printing dates from incunabula from the 15th century to 19th century books printed in Fraktur types and is openly available under a CC-BY 4.0 license. The special form of GT as line image/transcription pairs makes it directly usable to train state-of-the-art recognition models for OCR software employing recurrent neural networks in LSTM architecture such as Tesseract 4 or OCRopus. We also provide some pretrained OCRopus models for subcorpora of our dataset yielding between 95% (early printings) and 98% (19th century Fraktur printings) character accuracy rates on unseen test cases, a Perl script to harmonize GT produced by different transcription rules, and give hints on how to construct GT for OCR purposes which has requirements that may differ from linguistically motivated transcriptions.

Improving OCR Accuracy on Early Printed Books by combining Pretraining, Voting, and Active Learning

Feb 28, 2018

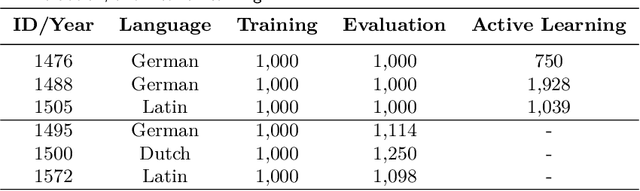

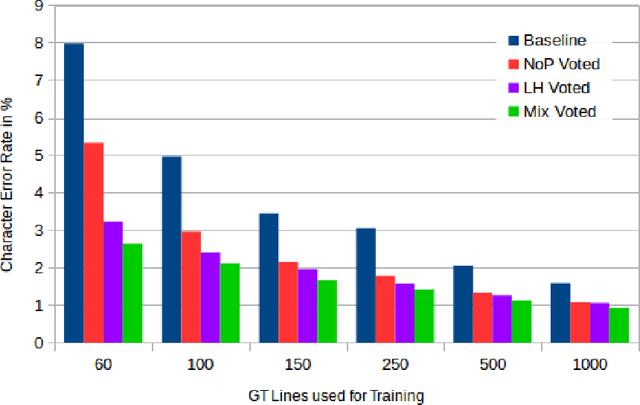

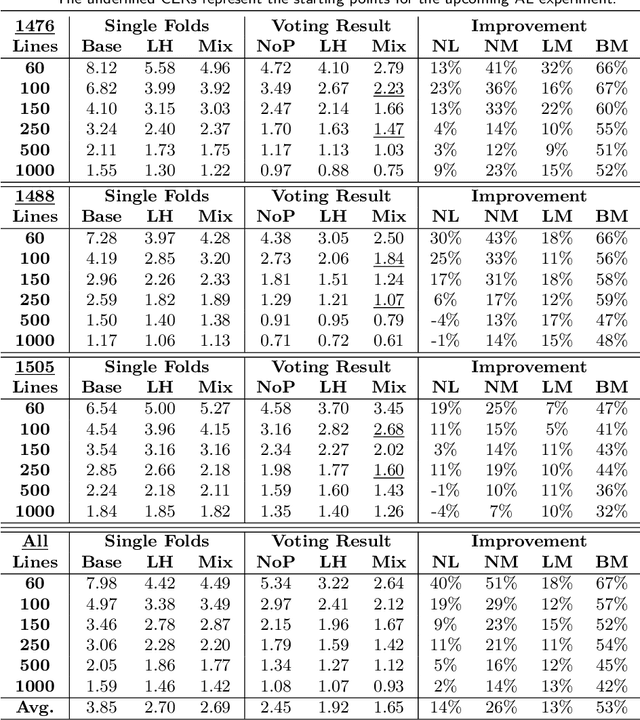

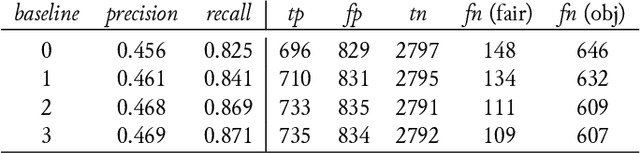

Abstract:We combine three methods which significantly improve the OCR accuracy of OCR models trained on early printed books: (1) The pretraining method utilizes the information stored in already existing models trained on a variety of typesets (mixed models) instead of starting the training from scratch. (2) Performing cross fold training on a single set of ground truth data (line images and their transcriptions) with a single OCR engine (OCRopus) produces a committee whose members then vote for the best outcome by also taking the top-N alternatives and their intrinsic confidence values into account. (3) Following the principle of maximal disagreement we select additional training lines which the voters disagree most on, expecting them to offer the highest information gain for a subsequent training (active learning). Evaluations on six early printed books yielded the following results: On average the combination of pretraining and voting improved the character accuracy by 46% when training five folds starting from the same mixed model. This number rose to 53% when using different models for pretraining, underlining the importance of diverse voters. Incorporating active learning improved the obtained results by another 16% on average (evaluated on three of the six books). Overall, the proposed methods lead to an average error rate of 2.5% when training on only 60 lines. Using a substantial ground truth pool of 1,000 lines brought the error rate down even further to less than 1% on average.
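The line selection by maximal disagreement described in step (3) can be illustrated with a small sketch. The abstract does not state how disagreement is measured, so the score used here (mean pairwise dissimilarity of the voters' outputs, computed with the standard library's `difflib.SequenceMatcher`) is an assumption, as are the names `disagreement` and `select_lines` and the input layout.

```python
from difflib import SequenceMatcher
from itertools import combinations


def disagreement(outputs: list[str]) -> float:
    """Mean pairwise dissimilarity (0 = all voters agree) for one text line.
    Assumed measure; the original work may use a different one."""
    pairs = list(combinations(outputs, 2))
    if not pairs:
        return 0.0
    return sum(1.0 - SequenceMatcher(None, a, b).ratio() for a, b in pairs) / len(pairs)


def select_lines(voter_outputs: dict[str, list[str]], k: int) -> list[str]:
    """Return the ids of the k lines on which the voters disagree most."""
    ranked = sorted(voter_outputs,
                    key=lambda line_id: disagreement(voter_outputs[line_id]),
                    reverse=True)
    return ranked[:k]


if __name__ == "__main__":
    # Hypothetical outputs of three voters for two untranscribed lines.
    outputs = {
        "line_001": ["vnd der herr", "vnd der herr", "vnd der herr"],
        "line_002": ["in dem iar", "m dem jar", "in dein iar"],
    }
    print(select_lines(outputs, k=1))
```

Lines selected this way would then be transcribed manually and added to the training set for the next round.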

Transfer Learning for OCRopus Model Training on Early Printed Books

Dec 21, 2017

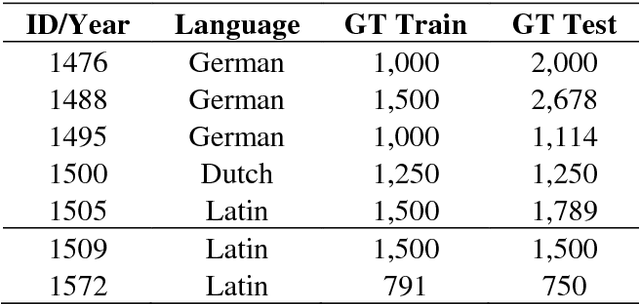

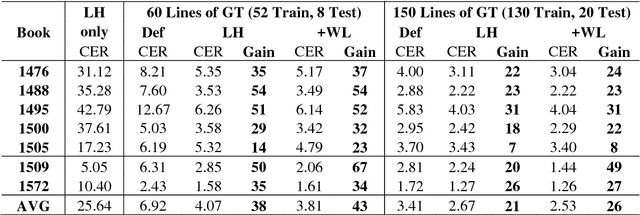

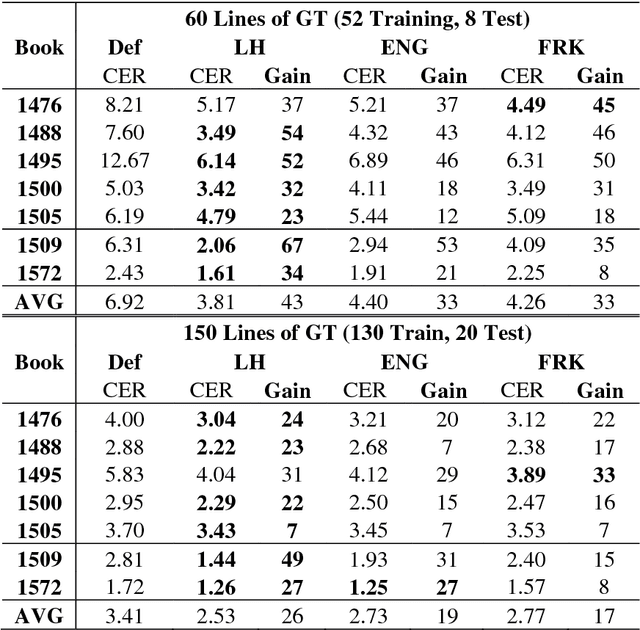

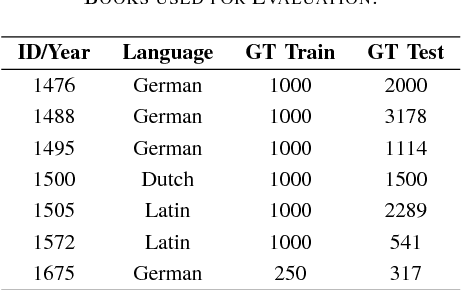

Abstract:A method is presented that significantly reduces the character error rates for OCR text obtained from OCRopus models trained on early printed books when only small amounts of diplomatic transcriptions are available. This is achieved by building from already existing models during training instead of starting from scratch. To overcome the discrepancies between the set of characters of the pretrained model and the additional ground truth the OCRopus code is adapted to allow for alphabet expansion or reduction. The character set is now capable of flexibly adding and deleting characters from the pretrained alphabet when an existing model is loaded. For our experiments we use a self-trained mixed model on early Latin prints and the two standard OCRopus models on modern English and German Fraktur texts. The evaluation on seven early printed books showed that, compared to training from scratch, training from the Latin mixed model reduces the average number of errors by 43% and 26% with 60 and 150 lines of ground truth, respectively. Furthermore, it is shown that even building from mixed models trained on data unrelated to the newly added training and test data can lead to significantly improved recognition results.
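The idea of alphabet expansion and reduction can be sketched independently of OCRopus. The example below is not the adapted OCRopus code. It assumes a simplified model whose output layer stores one weight row per character, keeps the rows of characters that remain, drops the rows of removed characters, and gives new characters small random rows. The name `adapt_codec`, the initialization range, and the omission of the CTC blank label are all simplifying assumptions.

```python
import random


def adapt_codec(chars: list[str], weights: list[list[float]],
                wanted: set[str], hidden_size: int, seed: int = 0):
    """Return (new_chars, new_weights) for the wanted alphabet.

    Rows of characters present in the pretrained alphabet are reused;
    rows of new characters are freshly initialized (assumed scheme).
    """
    rng = random.Random(seed)
    row_of = dict(zip(chars, weights))
    new_chars = sorted(wanted)
    new_weights = [
        row_of[c] if c in row_of
        else [rng.uniform(-0.1, 0.1) for _ in range(hidden_size)]
        for c in new_chars
    ]
    return new_chars, new_weights


if __name__ == "__main__":
    # Hypothetical pretrained alphabet with two-dimensional weight rows.
    chars = ["a", "b", "c"]
    weights = [[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]]
    # The new ground truth lacks "b" but contains the long s.
    new_chars, new_weights = adapt_codec(chars, weights, {"a", "c", "ſ"}, hidden_size=2)
    print(new_chars)
```

Reusing the existing rows is what lets the finetuned model start from the pretrained knowledge for shared characters while only the new characters have to be learned from scratch.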

Improving OCR Accuracy on Early Printed Books by utilizing Cross Fold Training and Voting

Nov 27, 2017

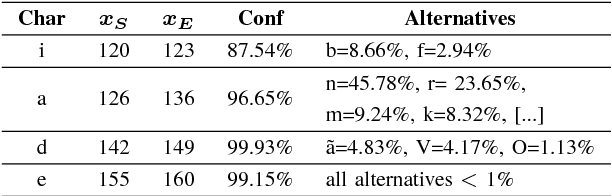

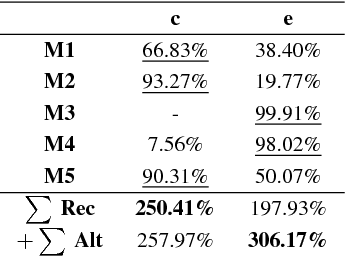

Abstract:In this paper we introduce a method that significantly reduces the character error rates for OCR text obtained from OCRopus models trained on early printed books. The method uses a combination of cross fold training and confidence based voting. After allocating the available ground truth in different subsets several training processes are performed, each resulting in a specific OCR model. The OCR texts generated by these models are then combined by voting to determine the final output, taking the recognized characters, their alternatives, and the confidence values assigned to each character into consideration. Experiments on seven early printed books show that the proposed method outperforms the standard approach considerably by reducing the number of errors by up to 50% and more.
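A minimal sketch of confidence based voting follows. It assumes the outputs of all voters have already been aligned position by position, a step the actual method has to perform and which is omitted here. It also assumes that confidences are simply summed per candidate character. The function name `vote` and the data layout are illustrative and not taken from the paper.

```python
from collections import defaultdict


def vote(aligned: list[list[dict[str, float]]]) -> str:
    """Combine aligned voter outputs.

    aligned[v][p] maps each candidate character (top choice and
    alternatives) of voter v at position p to its confidence.
    """
    result = []
    for pos in range(len(aligned[0])):
        scores = defaultdict(float)
        for voter in aligned:
            for char, conf in voter[pos].items():
                scores[char] += conf  # assumed aggregation: plain sum
        result.append(max(scores, key=scores.get))
    return "".join(result)


if __name__ == "__main__":
    # Hypothetical single-position example: two voters narrowly prefer "u",
    # one voter is very confident about "n".
    voters = [
        [{"u": 0.60, "n": 0.40}],
        [{"n": 0.90, "u": 0.10}],
        [{"u": 0.55, "n": 0.45}],
    ]
    print(vote(voters))
```

In this example a plain majority vote over the top choices would pick "u", whereas summing the confidences, including those of the alternatives, picks "n" (1.75 against 1.25).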

LAREX - A semi-automatic open-source Tool for Layout Analysis and Region Extraction on Early Printed Books

Jan 20, 2017

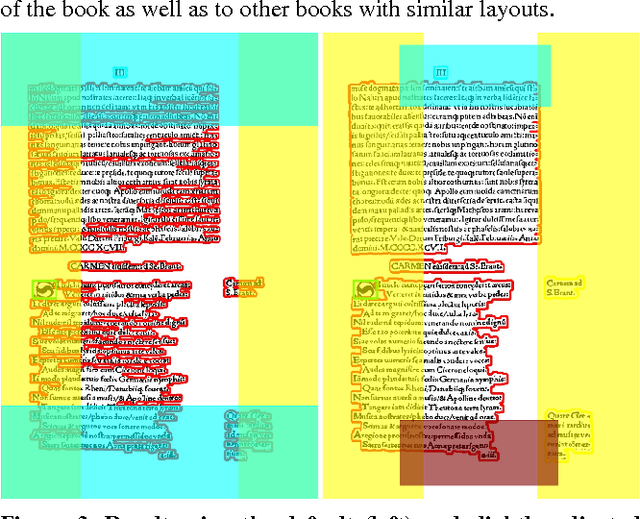

Abstract:A semi-automatic open-source tool for layout analysis on early printed books is presented. LAREX uses a rule-based connected components approach which is very fast, easily comprehensible for the user, and allows an intuitive manual correction if necessary. The PageXML format is used to support integration into existing OCR workflows. Evaluations showed that LAREX provides an efficient and flexible way to segment pages of early printed books.

Profiling of OCR'ed Historical Texts Revisited

Jan 19, 2017

Abstract:In the absence of ground truth it is not possible to automatically determine the exact spectrum and occurrences of OCR errors in an OCR'ed text. Yet, for interactive postcorrection of OCR'ed historical printings it is extremely useful to have a statistical profile available that provides an estimate of error classes with associated frequencies, and that points to conjectured errors and suspicious tokens. The method introduced in Reffle (2013) computes such a profile, combining lexica, pattern sets and advanced matching techniques in a specialized Expectation Maximization (EM) procedure. Here we improve this method in three respects: First, the method in Reffle (2013) is not adaptive: user feedback obtained by actual postcorrection steps cannot be used to compute refined profiles. We introduce a variant of the method that is open for adaptivity, taking correction steps of the user into account. This leads to higher precision with respect to recognition of erroneous OCR tokens. Second, during postcorrection often new historical patterns are found. We show that adding new historical patterns to the linguistic background resources leads to a second kind of improvement, enabling even higher precision by telling historical spellings apart from OCR errors. Third, the method in Reffle (2013) does not make any active use of tokens that cannot be interpreted in the underlying channel model. We show that adding these uninterpretable tokens to the set of conjectured errors leads to a significant improvement of the recall for error detection, at the same time improving precision.
