Ulysse Côté-Allard

Latent Space Unsupervised Semantic Segmentation

Jul 31, 2022

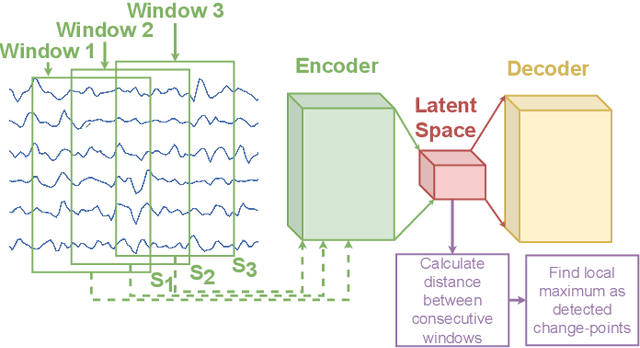

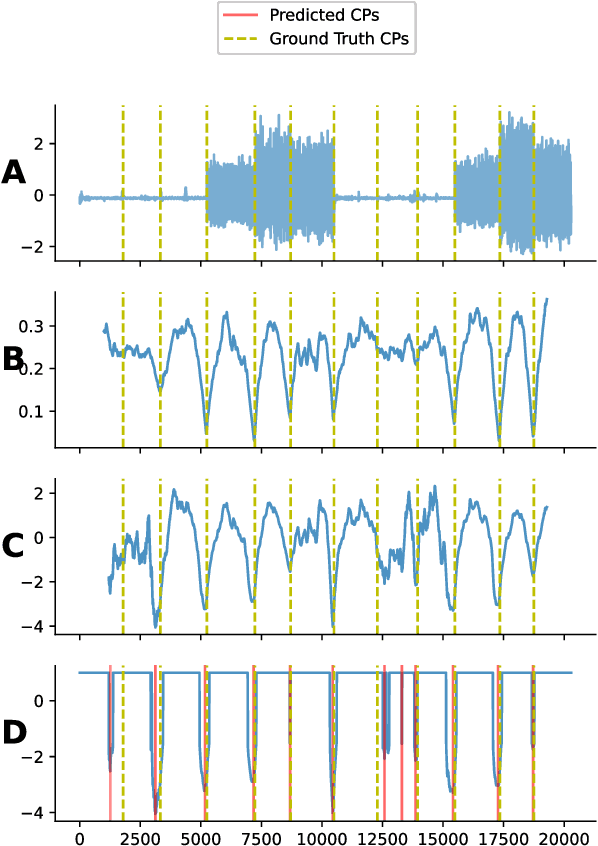

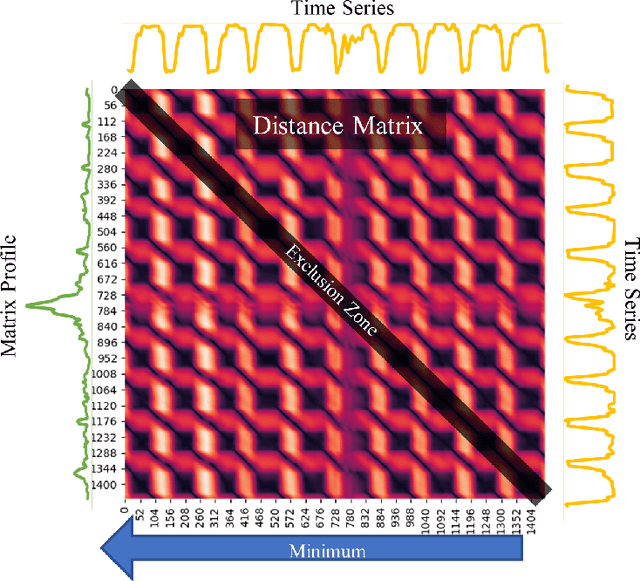

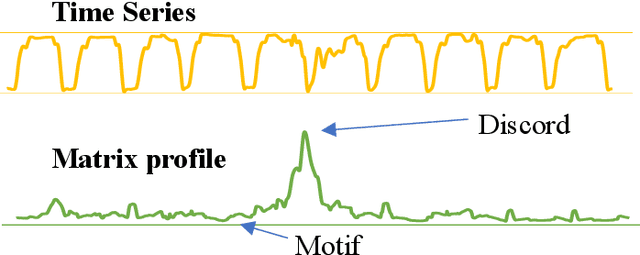

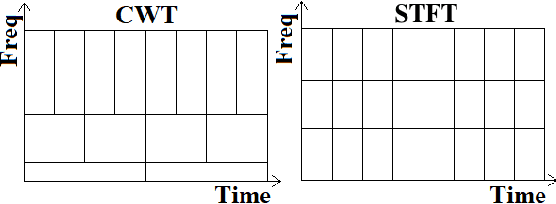

Abstract: The development of compact and energy-efficient wearable sensors has led to an increase in the availability of biosignals. To analyze these continuously recorded, and often multidimensional, time series at scale, being able to conduct meaningful unsupervised data segmentation is an auspicious target. A common way to achieve this is to identify change-points within the time series as the segmentation basis. However, traditional change-point detection algorithms often come with drawbacks, limiting their real-world applicability. Notably, they generally rely on the complete time series being available and thus cannot be used for real-time applications. Another common limitation is that they handle the segmentation of multidimensional time series poorly, or not at all. Consequently, the main contribution of this work is to propose a novel unsupervised segmentation algorithm for multidimensional time series named Latent Space Unsupervised Semantic Segmentation (LS-USS), which was designed to work easily with both online and batch data. When comparing LS-USS against other state-of-the-art change-point detection algorithms on a variety of real-world datasets, in both the offline and real-time settings, LS-USS systematically achieves on-par or better performance.
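To make the change-point idea concrete, here is a minimal sketch of a classical offline detector for a one-dimensional series. It is not LS-USS, and the function name `best_split` is hypothetical. It picks the single split that minimizes the within-segment squared error, and it needs the whole series up front, which is the limitation the abstract attributes to traditional methods.

```python
def best_split(series):
    """Return the index k (1 <= k < len(series)) that minimizes the summed
    squared deviation of series[:k] and series[k:] from their own means."""
    n = len(series)
    if n < 2:
        raise ValueError("need at least two samples")

    def sse(values):
        # Sum of squared errors around the segment mean.
        mean = sum(values) / len(values)
        return sum((v - mean) ** 2 for v in values)

    best_k, best_cost = 1, float("inf")
    for k in range(1, n):
        cost = sse(series[:k]) + sse(series[k:])
        if cost < best_cost:
            best_k, best_cost = k, cost
    return best_k


# Toy signal (made up for illustration): the level jumps at index 5.
signal = [0.0, 0.1, -0.1, 0.0, 0.1, 5.0, 5.1, 4.9, 5.0, 5.1]
print(best_split(signal))
```

The exhaustive search is quadratic in the series length and handles only one dimension, so it is meant purely as an illustration of segmenting by change-points.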

Adherence Forecasting for Guided Internet-Delivered Cognitive Behavioral Therapy: A Minimally Data-Sensitive Approach

Jan 11, 2022

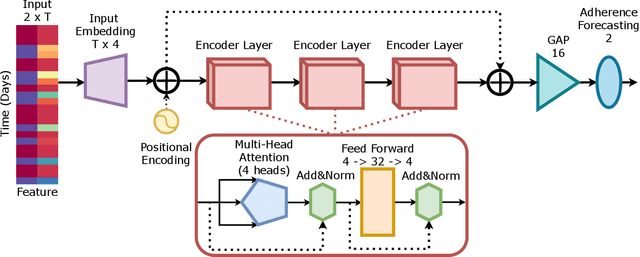

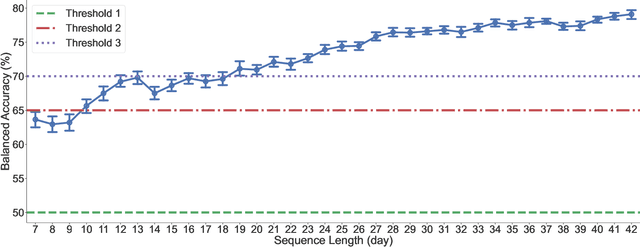

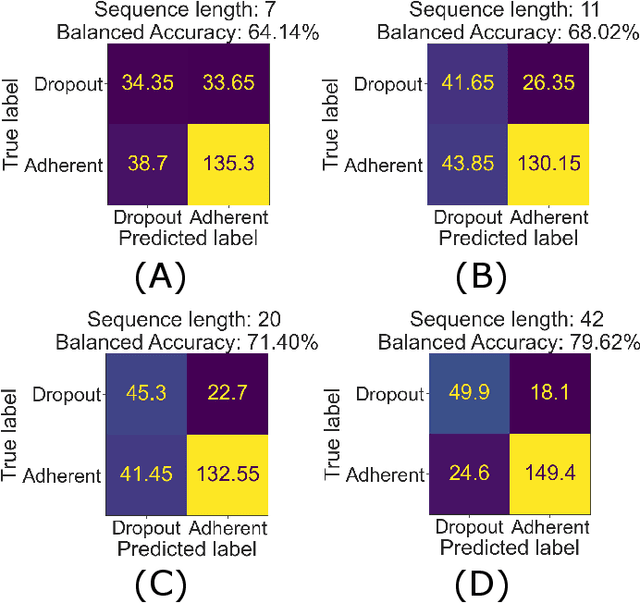

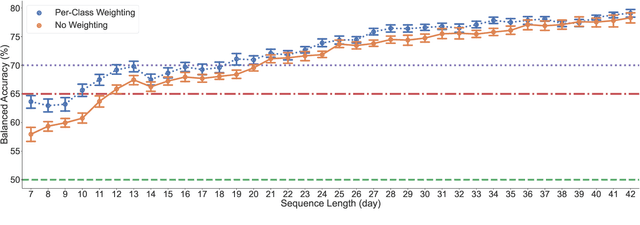

Abstract: Internet-delivered psychological treatments (IDPT) are seen as an effective and scalable pathway to improving the accessibility of mental healthcare. Within this context, treatment adherence is an especially relevant challenge to address due to the reduced interaction between healthcare professionals and patients, compared to more traditional interventions. In parallel, there are increasing regulations on the use of people's personal data, especially in the digital sphere. In such regulations, data minimization is often a core tenet, such as within the General Data Protection Regulation (GDPR). Consequently, this work proposes a deep-learning approach to perform automatic adherence forecasting, while only relying on minimally sensitive login/logout data. This approach was tested on a dataset containing 342 patients undergoing guided internet-delivered cognitive behavioral therapy (G-ICBT) treatment. The proposed Self-Attention Network achieved over 70% average balanced accuracy when only 1/3 of the treatment duration had elapsed. As such, this study demonstrates that automatic adherence forecasting for G-ICBT is achievable using only minimally sensitive data, thus facilitating the implementation of such tools within real-world IDPT platforms.
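The balanced accuracy reported above is the mean of the per-class recalls, which keeps a majority-class predictor from looking good on imbalanced labels. A minimal sketch follows; the function name and the toy labels are hypothetical and are not taken from the study's data.

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall over the classes present in y_true."""
    classes = sorted(set(y_true))
    recalls = []
    for c in classes:
        # Positions whose true label is class c.
        idx = [i for i, t in enumerate(y_true) if t == c]
        hits = sum(1 for i in idx if y_pred[i] == c)
        recalls.append(hits / len(idx))
    return sum(recalls) / len(recalls)


# Hypothetical labels: 8 adherent (1) and 2 non-adherent (0) patients,
# with a classifier that always predicts "adherent".
y_true = [1] * 8 + [0] * 2
y_pred = [1] * 10
plain = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(plain, balanced_accuracy(y_true, y_pred))
```

On these toy labels plain accuracy is 0.8, while balanced accuracy is 0.5 because the recall on the minority class is zero.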

Reinforcement Learning-based Switching Controller for a Milliscale Robot in a Constrained Environment

Nov 27, 2021

Abstract: This work presents a reinforcement learning-based switching control mechanism to autonomously move a ferromagnetic object (representing a milliscale robot) around obstacles within a constrained environment in the presence of disturbances. This mechanism can be used to navigate objects (e.g., capsule endoscopy, swarms of drug particles) through complex environments when active control is a necessity but where direct manipulation can be hazardous. The proposed control scheme consists of a switching control architecture implemented by two sub-controllers. The first sub-controller is designed to employ the robot's inverse kinematic solutions to do an environment search of the to-be-carried ferromagnetic particle while being robust to disturbances. The second sub-controller uses a customized Rainbow algorithm to control a robotic arm, i.e., the UR5 robot, to carry a ferromagnetic particle to a desired position through a constrained environment. For the customized Rainbow algorithm, Quantile Huber loss from the Implicit Quantile Networks (IQN) algorithm and ResNet are employed. The proposed controller is first trained and tested in a real-time physics simulation engine (PyBullet). Afterward, the trained controller is transferred to a UR5 robot to remotely transport a ferromagnetic particle in a real-world scenario to demonstrate the applicability of the proposed approach. The experimental results show an average success rate of 98.86% calculated over 30 episodes for randomly generated trajectories.
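As a reference for the Quantile Huber loss mentioned above, the sketch below computes the per-sample term as defined for IQN: the Huber loss of the temporal-difference error `u`, weighted by `|tau - 1{u < 0}|` and divided by the threshold `kappa`. How the customized Rainbow agent samples quantiles and aggregates this term is not described in the abstract, so the function names and the default `kappa=1.0` here are assumptions.

```python
def huber(u, kappa=1.0):
    """Huber loss: quadratic within kappa of zero, linear outside."""
    a = abs(u)
    if a <= kappa:
        return 0.5 * u * u
    return kappa * (a - 0.5 * kappa)


def quantile_huber(u, tau, kappa=1.0):
    """Per-sample quantile Huber loss for TD error u at quantile level tau.

    Errors with u < 0 are weighted by (1 - tau), others by tau, so each
    quantile level penalizes over- and under-estimation asymmetrically.
    """
    indicator = 1.0 if u < 0 else 0.0
    return abs(tau - indicator) * huber(u, kappa) / kappa


# At tau = 0.25, a negative error costs three times more than a positive
# error of the same magnitude.
print(quantile_huber(0.5, tau=0.25), quantile_huber(-0.5, tau=0.25))
```

In IQN this term is averaged over pairs of sampled quantile levels for the current and target distributions; the sketch leaves that aggregation out.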

Long-Short Ensemble Network for Bipolar Manic-Euthymic State Recognition Based on Wrist-worn Sensors

Jul 01, 2021

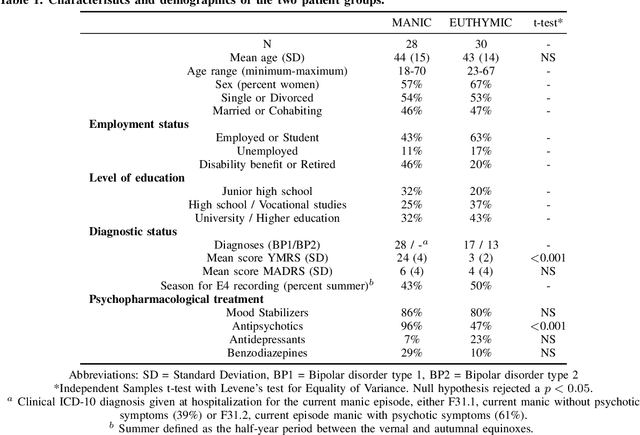

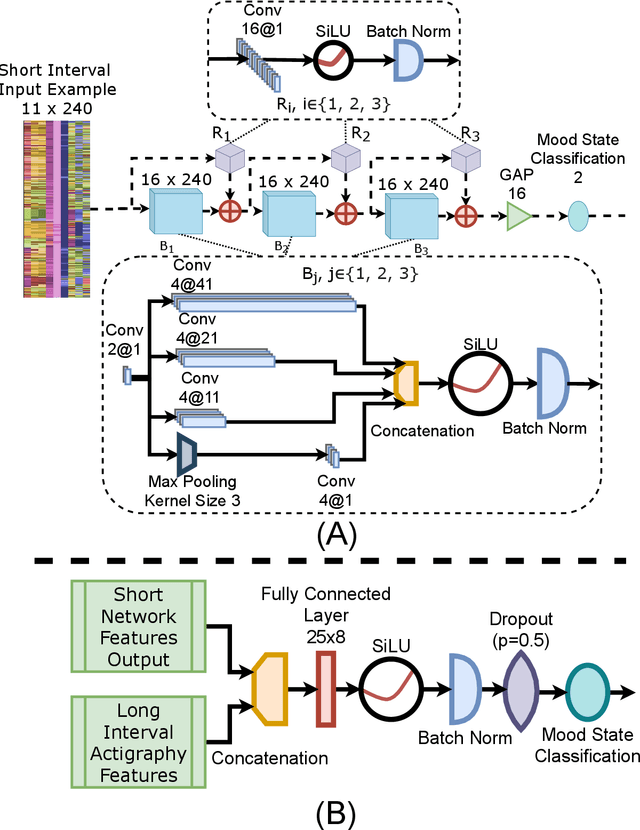

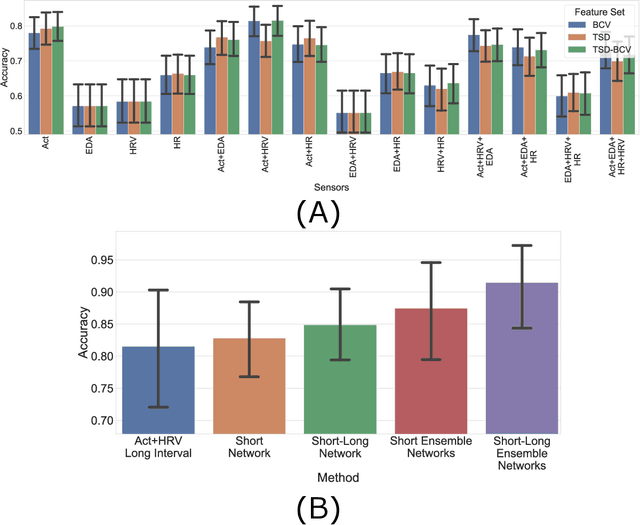

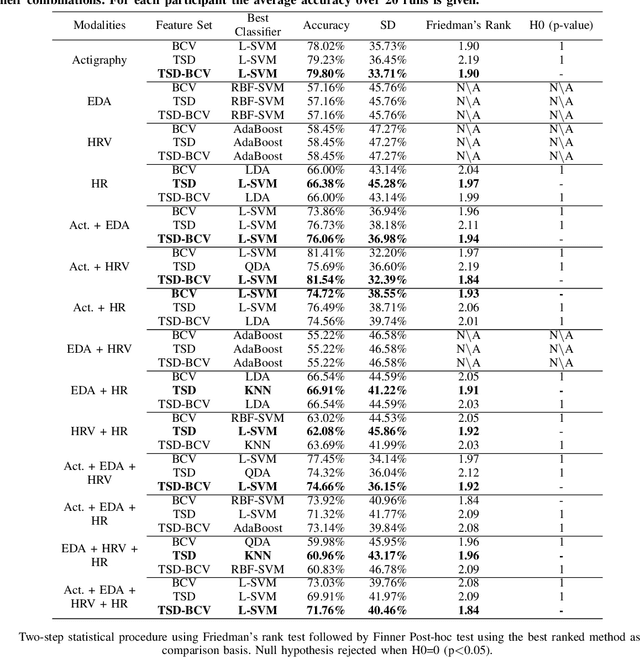

Abstract: Manic episodes of bipolar disorder can lead to uncritical behaviour and delusional psychosis, often with destructive consequences for those affected and their surroundings. Early detection and intervention of a manic episode are crucial to prevent escalation, hospital admission and premature death. However, people with bipolar disorder may not recognize that they are experiencing a manic episode and symptoms such as euphoria and increased productivity can also deter affected individuals from seeking help. This work proposes to perform user-independent, automatic mood-state detection based on actigraphy and electrodermal activity acquired from a wrist-worn device during mania and after recovery (euthymia). This paper proposes a new deep learning-based ensemble method leveraging long (20h) and short (5 minutes) time-intervals to discriminate between the mood-states. When tested on 47 bipolar patients, the proposed classification scheme achieves an average accuracy of 91.59% in euthymic/manic mood-state recognition.

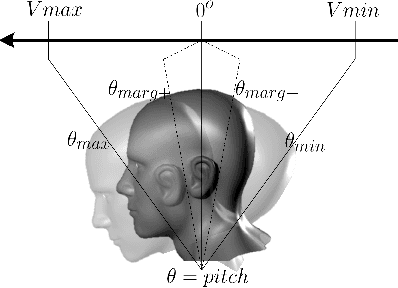

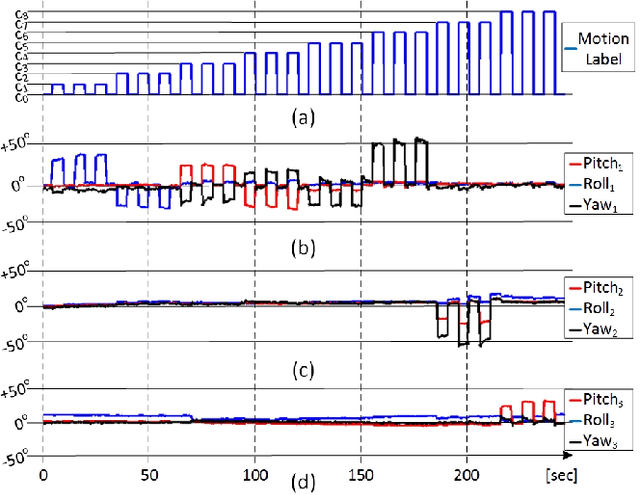

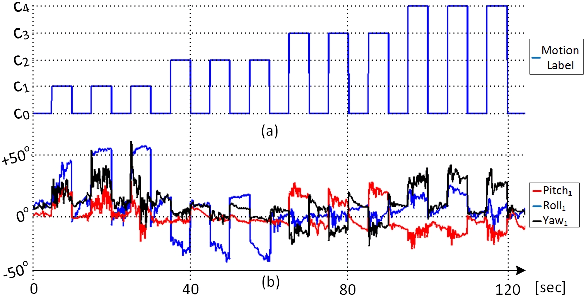

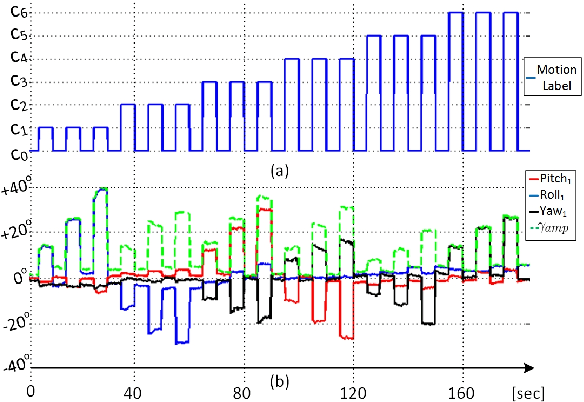

A Flexible and Modular Body-Machine Interface for Individuals Living with Severe Disabilities

Jul 29, 2020

Abstract: This paper presents a control interface to translate the residual body motions of individuals living with severe disabilities into control commands for body-machine interaction. A custom, wireless, wearable multi-sensor network is used to collect motion data from multiple points on the body in real-time. The proposed solution successfully leverages electromyography gesture recognition techniques for the recognition of inertial measurement unit (IMU)-based commands, without the need for cumbersome and noisy surface electrodes. Motion pattern recognition is performed using a computationally inexpensive classifier (Linear Discriminant Analysis) so that the solution can be deployed onto lightweight embedded platforms. Five participants (three able-bodied and two living with upper-body disabilities) presenting different motion limitations (e.g. spasms, reduced motion range) were recruited. They were asked to perform up to 9 different motion classes, including head, shoulder, finger, and foot motions, with respect to their residual functional capacities. The measured prediction performances show an average accuracy of 99.96% for able-bodied individuals and 91.66% for participants with upper-body disabilities. The recorded dataset has also been made available online to the research community. Proof of concept for the real-time use of the system is given through an assembly task replicating activities of daily living using the JACO arm from Kinova Robotics.

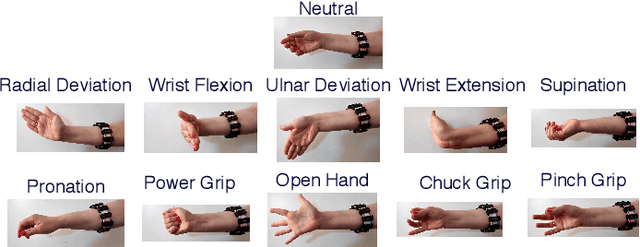

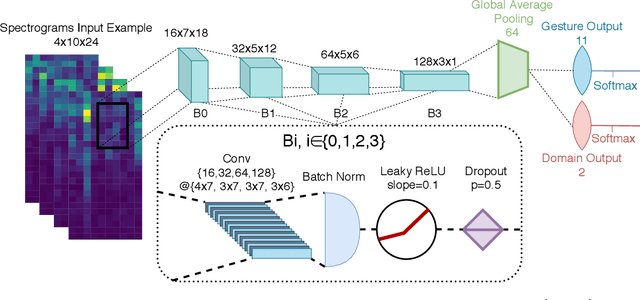

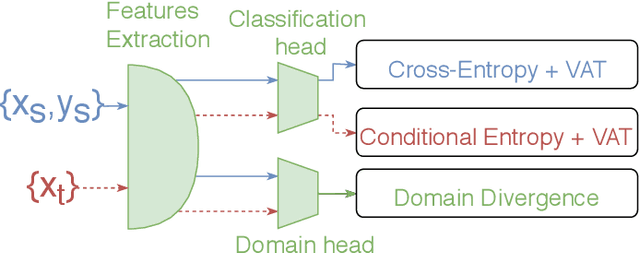

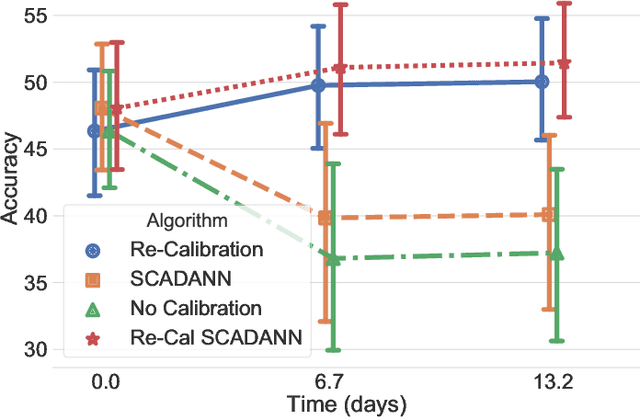

Unsupervised Domain Adversarial Self-Calibration for Electromyographic-based Gesture Recognition

Dec 21, 2019

Abstract: Surface electromyography (sEMG) provides an intuitive and non-invasive interface from which to control machines. However, preserving the myoelectric control system's performance over multiple days is challenging, due to the transient nature of this recording technique. In practice, if the system is to remain usable, a time-consuming and periodic re-calibration is necessary. In the case where the sEMG interface is employed every few days, the user might need to do this re-calibration before every use, severely limiting the practicality of such a control method. Consequently, this paper proposes tackling the especially challenging task of adapting to sEMG signals when multiple days have elapsed between each recording, by presenting SCADANN, a new, deep learning-based, self-calibrating algorithm. SCADANN is ranked against three state-of-the-art domain adversarial algorithms and a multiple-vote self-calibrating algorithm on both offline and online datasets. Overall, SCADANN is shown to systematically improve classifiers' performance over no adaptation and ranks first in almost all the cases tested.

Virtual Reality to Study the Gap Between Offline and Real-Time EMG-based Gesture Recognition

Dec 16, 2019

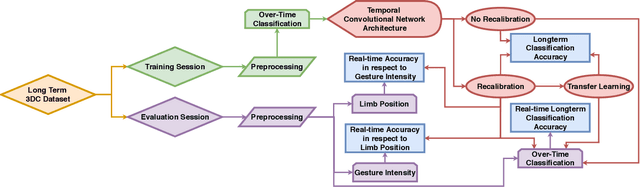

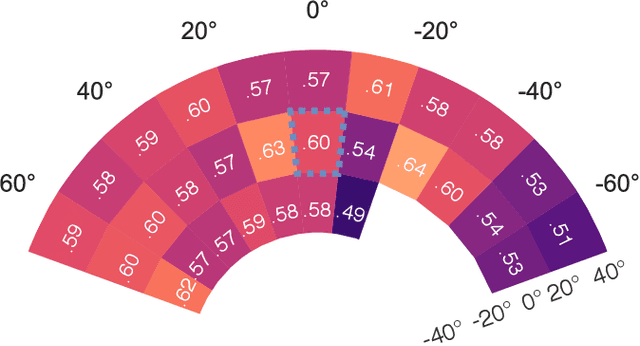

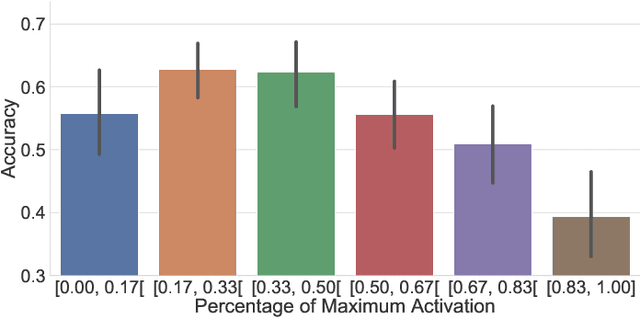

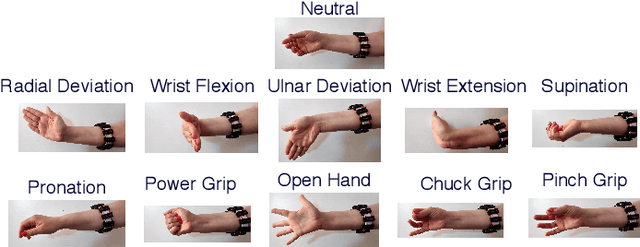

Abstract: Within sEMG-based gesture recognition, a chasm exists in the literature between offline accuracy and real-time usability of a classifier. This gap mainly stems from the four main dynamic factors in sEMG-based gesture recognition: gesture intensity, limb position, electrode shift and transient changes in the signal. These factors are hard to include within an offline dataset as each of them exponentially augments the number of segments to be recorded. On the other hand, online datasets are biased towards the sEMG-based algorithms providing feedback to the participants, limiting the usability of such datasets as benchmarks. This paper proposes a virtual reality (VR) environment and a real-time experimental protocol from which the four main dynamic factors can more easily be studied. During the online experiment, the gesture recognition feedback is provided through the Leap Motion camera, enabling the proposed dataset to be re-used to compare future sEMG-based algorithms. 20 able-bodied persons took part in this study, completing three to four sessions over a period spanning between 14 and 21 days. Finally, TADANN, a new transfer learning-based algorithm, is proposed for long-term gesture classification and significantly (p<0.05) outperforms fine-tuning a network.

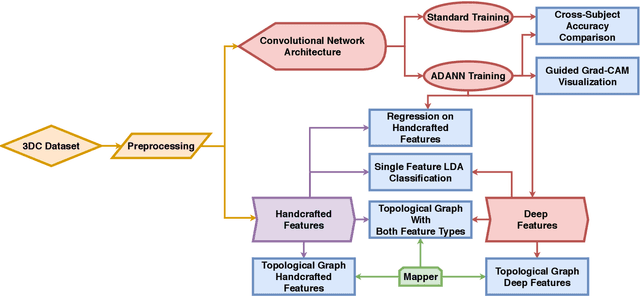

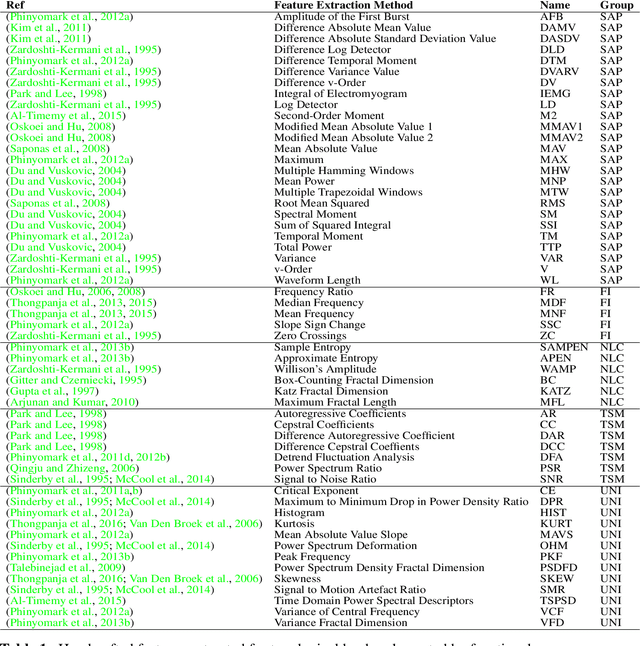

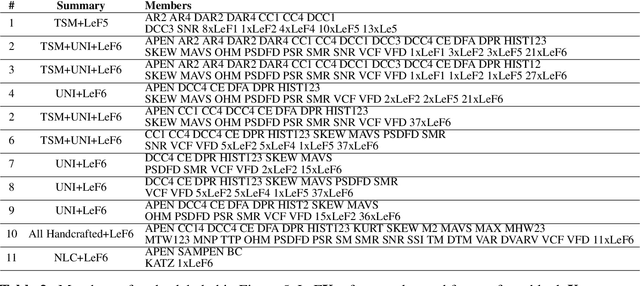

Interpreting Deep Learning Features for Myoelectric Control: A Comparison with Handcrafted Features

Nov 30, 2019

Abstract: The research in myoelectric control systems primarily focuses on extracting discriminative representations from the electromyographic (EMG) signal by designing handcrafted features. Recently, deep learning techniques have been applied to the challenging task of EMG-based gesture recognition. The adoption of these techniques slowly shifts the focus from feature engineering to feature learning. However, the black-box nature of deep learning makes it hard to understand the type of information learned by the network and how it relates to handcrafted features. Additionally, due to the high variability in EMG recordings between participants, deep features tend to generalize poorly across subjects using standard training methods. Consequently, this work introduces a new multi-domain learning algorithm, named ADANN, which significantly enhances (p=0.00004) inter-subject classification accuracy by an average of 19.40% compared to standard training. Using ADANN-generated features, the main contribution of this work is to provide the first topological data analysis of EMG-based gesture recognition for the characterisation of the information encoded within a deep network, using handcrafted features as landmarks. This analysis reveals that handcrafted features and the learned features (in the earlier layers) both try to discriminate between all gestures, but do not encode the same information to do so. Furthermore, using convolutional network visualization techniques reveals that learned features tend to ignore the most activated channel during gesture contraction, which is in stark contrast with the prevalence of handcrafted features designed to capture amplitude information. Overall, this work paves the way for hybrid feature sets by providing a clear guideline of complementary information encoded within learned and handcrafted features.

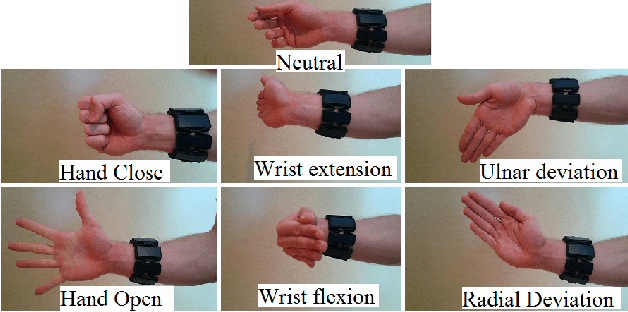

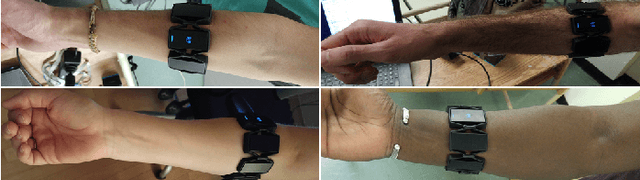

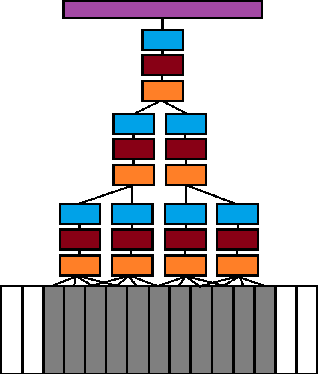

Deep Learning for Electromyographic Hand Gesture Signal Classification Using Transfer Learning

Jun 12, 2018

Abstract: In recent years, the use of deep learning algorithms has become increasingly prominent for their unparalleled ability to automatically learn discriminant features from large amounts of data. However, within the field of electromyography-based gesture recognition, deep learning algorithms are seldom employed as it requires an unreasonable amount of time for a single person, in a single session, to generate tens of thousands of examples. This work's hypothesis is that general, informative features can be learned from the large amount of data generated by aggregating the signals of multiple users, thus reducing the recording burden imposed on a single person while enhancing gesture recognition. As such, this paper proposes applying transfer learning on the aggregated data of multiple users, while leveraging the capacity of deep learning algorithms to learn discriminant features from large datasets, without the need for in-depth feature engineering. To this end, two datasets are recorded with the Myo Armband (Thalmic Labs), a low-cost, low-sampling rate (200Hz), 8-channel, consumer-grade, dry electrode sEMG armband. These two datasets are comprised of 19 and 17 able-bodied participants respectively. A third dataset, also recorded with the Myo Armband, was taken from the NinaPro database and is comprised of 10 able-bodied participants. This transfer learning scheme is shown to outperform the current state-of-the-art in gesture recognition. It achieves an average accuracy of 98.31% for 7 hand/wrist gestures over 17 able-bodied participants and 65.57% for 18 hand/wrist gestures over 10 able-bodied participants. Finally, a use-case study employing eight able-bodied participants suggests that real-time feedback reduces the degradation in accuracy normally experienced over time.
