Erik Scheme

UNB StepUP: A footStep database for gait analysis and recognition using Underfoot Pressure

Feb 26, 2025

Abstract:Gait refers to the patterns of limb movement generated during walking, which are unique to each individual due to both physical and behavioural traits. Walking patterns have been widely studied in biometrics, biomechanics, sports, and rehabilitation. While traditional methods rely on video and motion capture, advances in underfoot pressure sensing technology now offer deeper insights into gait. However, underfoot pressures during walking remain underexplored due to the lack of large, publicly accessible datasets. To address this, the UNB StepUP database was created, featuring gait pressure data collected with high-resolution pressure sensing tiles (4 sensors/cm$^2$, 1.2 m by 3.6 m). Its first release, UNB StepUP-P150, includes over 200,000 footsteps from 150 individuals across various walking speeds (preferred, slow-to-stop, fast, and slow) and footwear types (barefoot, standard shoes, and two personal shoes). As the largest and most comprehensive dataset of its kind, it supports biometric gait recognition while presenting new research opportunities in biomechanics and deep learning. The UNB StepUP-P150 dataset sets a new benchmark for pressure-based gait analysis and recognition. Please note that the hypertext links to the dataset on FigShare remain dormant while the document is under review.

Decision-change Informed Rejection Improves Robustness in Pattern Recognition-based Myoelectric Control

Sep 21, 2024

Abstract:Post-processing techniques have been shown to improve the quality of the decision stream generated by classifiers used in pattern-recognition-based myoelectric control. However, these techniques have largely been tested individually and on well-behaved, stationary data, failing to fully evaluate their trade-offs between smoothing and latency during dynamic use. Correspondingly, in this work, we survey and compare 8 different post-processing and decision stream improvement schemes in the context of continuous and dynamic class transitions: majority vote, Bayesian fusion, onset locking, outlier detection, confidence-based rejection, confidence scaling, prior adjustment, and adaptive windowing. We then propose two new temporally aware post-processing schemes that use changes in the decision and confidence streams to better reject uncertain decisions. Our decision-change informed rejection (DCIR) approach outperforms existing schemes during both steady-state and transitions based on error rates and decision stream volatility whether using conventional or deep classifiers. These results suggest that added robustness can be gained by appropriately leveraging temporal context in myoelectric control.

* Published in IEEE JOURNAL OF BIOMEDICAL AND HEALTH INFORMATICS
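Two of the existing schemes named in this abstract, majority vote and confidence-based rejection, can be illustrated with a minimal sketch. This is not the DCIR method, whose details the abstract does not give. The function names, the `REJECT` marker, the window length, and the threshold are all assumptions chosen for illustration.

```python
from collections import Counter, deque

REJECT = None  # hypothetical marker meaning "no decision issued"


def reject_low_confidence(decisions, confidences, threshold=0.8):
    """Replace every decision whose confidence is below the threshold with REJECT."""
    return [d if c >= threshold else REJECT for d, c in zip(decisions, confidences)]


def majority_vote(decisions, window=3):
    """Smooth a decision stream with a causal sliding-window majority vote."""
    recent = deque(maxlen=window)  # holds only the last `window` decisions
    smoothed = []
    for d in decisions:
        recent.append(d)
        # Most frequent label in the window; ties resolve to the label seen first.
        smoothed.append(Counter(recent).most_common(1)[0][0])
    return smoothed


# Toy decision stream with an isolated "open" before a genuine transition.
stream = ["rest", "rest", "open", "rest", "open", "open", "open"]
conf = [0.95, 0.90, 0.55, 0.92, 0.60, 0.90, 0.97]

voted = majority_vote(stream, window=3)
rejected = reject_low_confidence(stream, conf, threshold=0.8)
print(voted)
print(rejected)
```

On this toy stream the vote suppresses the isolated "open" but needs two consecutive "open" decisions before the output changes class, which is the smoothing-versus-latency trade-off the abstract refers to.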

Towards Robust and Interpretable EMG-based Hand Gesture Recognition using Deep Metric Meta Learning

Apr 17, 2024

Abstract:Current electromyography (EMG) pattern recognition (PR) models have been shown to generalize poorly in unconstrained environments, setting back their adoption in applications such as hand gesture control. This problem is often due to limited training data, exacerbated by the use of supervised classification frameworks that are known to be suboptimal in such settings. In this work, we propose a shift to deep metric-based meta-learning in EMG PR to supervise the creation of meaningful and interpretable representations. We use a Siamese Deep Convolutional Neural Network (SDCNN) and contrastive triplet loss to learn an EMG feature embedding space that captures the distribution of the different classes. A nearest-centroid approach is subsequently employed for inference, relying on how closely a test sample aligns with the established data distributions. We derive a robust class proximity-based confidence estimator that leads to a better rejection of incorrect decisions, i.e. false positives, especially when operating beyond the training data domain. We show our approach's efficacy by testing the trained SDCNN's predictions and confidence estimations on unseen data, both in and out of the training domain. The evaluation metrics include the accuracy-rejection curve and the Kullback-Leibler divergence between the confidence distributions of accurate and inaccurate predictions. Outperforming comparable models on both metrics, our results demonstrate that the proposed meta-learning approach improves the classifier's precision in active decisions (after rejection), thus leading to better generalization and applicability.
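The inference step described above (nearest-centroid classification with a proximity-based confidence) and a triplet-style loss can be sketched in a few lines of plain Python. The abstract does not specify the exact confidence estimator or loss formulation, so the softmax over negative Euclidean distances and the standard margin-based triplet loss used here are assumptions, as are all function names and the toy two-dimensional embeddings.

```python
import math


def centroid(points):
    """Component-wise mean of a list of equal-length embedding vectors."""
    n = len(points)
    return [sum(p[i] for p in points) / n for i in range(len(points[0]))]


def fit_centroids(embeddings, labels):
    """Group embeddings by class label and return one centroid per class."""
    by_class = {}
    for e, y in zip(embeddings, labels):
        by_class.setdefault(y, []).append(e)
    return {y: centroid(pts) for y, pts in by_class.items()}


def predict(centroids, x):
    """Return (label, confidence) for embedding x.

    Assumption: confidence is a softmax over negative Euclidean distances,
    so it approaches 1/num_classes when x is equally far from every centroid.
    """
    dists = {y: math.dist(x, c) for y, c in centroids.items()}
    weights = {y: math.exp(-d) for y, d in dists.items()}
    label = min(dists, key=dists.get)
    return label, weights[label] / sum(weights.values())


def triplet_loss(anchor, positive, negative, margin=1.0):
    """Standard margin-based triplet loss on Euclidean distances (assumed form)."""
    return max(0.0, math.dist(anchor, positive) - math.dist(anchor, negative) + margin)


# Toy 2-D embeddings for two hypothetical gesture classes.
embeddings = [[0.0, 0.0], [0.0, 2.0], [4.0, 0.0], [4.0, 2.0]]
labels = ["fist", "fist", "open", "open"]
centroids = fit_centroids(embeddings, labels)

print(predict(centroids, [0.5, 1.0]))  # close to the "fist" centroid, high confidence
print(predict(centroids, [2.0, 1.0]))  # equidistant from both centroids, confidence 0.5
```

A sample lying between the class centroids receives a confidence near chance, so a rejection threshold on this value would discard it rather than issue an uncertain decision.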

On-Demand Myoelectric Control Using Wake Gestures to Eliminate False Activations During Activities of Daily Living

Feb 15, 2024

Abstract:While myoelectric control has recently become a focus of increased research as a possible flexible hands-free input modality, current control approaches are prone to inadvertent false activations in real-world conditions. In this work, a novel myoelectric control paradigm -- on-demand myoelectric control -- is proposed, designed, and evaluated, to reduce the number of unrelated muscle movements that are incorrectly interpreted as input gestures. By leveraging the concept of wake gestures, users were able to switch between a dedicated control mode and a sleep mode, effectively eliminating inadvertent activations during activities of daily living (ADLs). The feasibility of wake gestures was demonstrated in this work through two online ubiquitous EMG control tasks with varying difficulty levels: dismissing an alarm and controlling a robot. The proposed control scheme was able to appropriately ignore almost all non-targeted muscular inputs during ADLs (>99.9%) while maintaining sufficient sensitivity for reliable mode switching during intentional wake gesture elicitation. These results highlight the potential of wake gestures as a critical step towards enabling ubiquitous myoelectric control-based on-demand input for a wide range of applications.
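The mode-switching idea can be sketched as a two-state machine. The abstract does not say how the system returns to sleep mode, so this sketch assumes the same wake gesture toggles between modes. The class name, the gesture labels, and the toggle behaviour are all hypothetical.

```python
class OnDemandController:
    """Two-state (sleep/control) gate in front of a gesture recognizer.

    Assumption: the wake gesture toggles the mode in both directions.
    """

    def __init__(self, wake_gesture="double_tap"):
        self.wake_gesture = wake_gesture
        self.awake = False  # start in sleep mode

    def step(self, gesture):
        """Process one recognized gesture; return the command to forward, or None."""
        if gesture == self.wake_gesture:
            self.awake = not self.awake  # switch mode, forward nothing
            return None
        # In sleep mode every other gesture is ignored.
        return gesture if self.awake else None


controller = OnDemandController()
stream = ["fist", "open", "double_tap", "fist", "open", "double_tap", "fist"]
forwarded = [controller.step(g) for g in stream]
print(forwarded)  # [None, None, None, 'fist', 'open', None, None]
```

Gestures recognized while the controller is asleep never reach the application, which is how a scheme of this kind avoids acting on muscle activity produced during unrelated daily tasks.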

Unsupervised Domain Adversarial Self-Calibration for Electromyographic-based Gesture Recognition

Dec 21, 2019

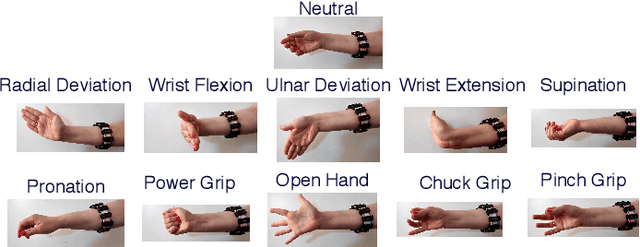

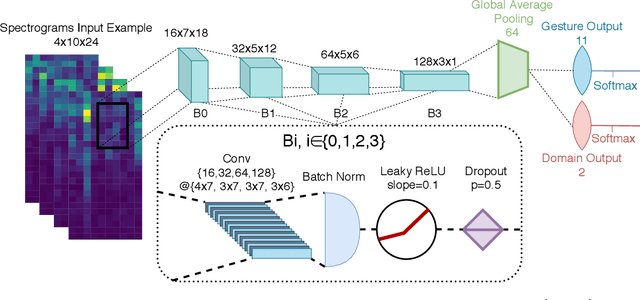

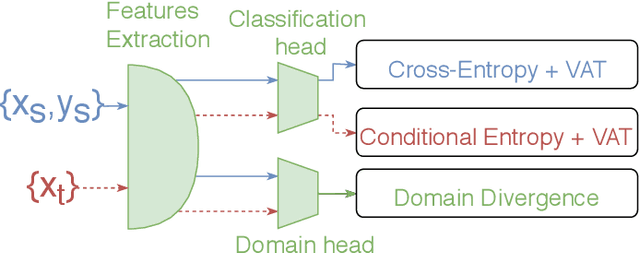

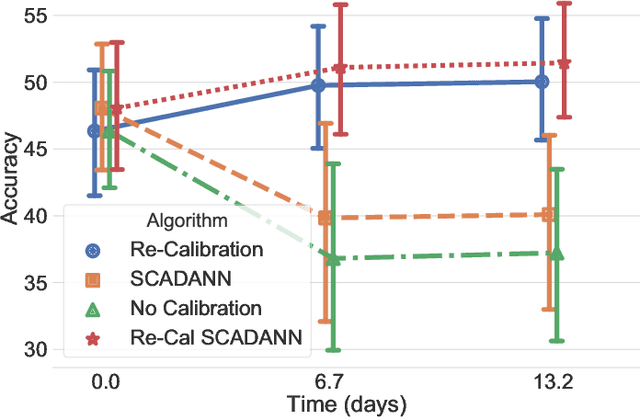

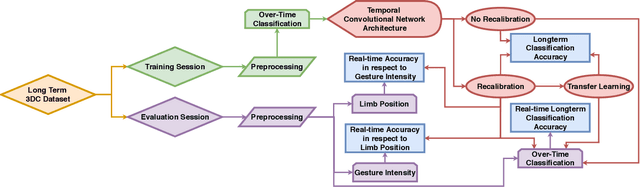

Abstract:Surface electromyography (sEMG) provides an intuitive and non-invasive interface from which to control machines. However, preserving the myoelectric control system's performance over multiple days is challenging, due to the transient nature of this recording technique. In practice, if the system is to remain usable, a time-consuming and periodic re-calibration is necessary. In the case where the sEMG interface is employed every few days, the user might need to do this re-calibration before every use, thus severely limiting the practicality of such a control method. Consequently, this paper proposes tackling the especially challenging task of adapting to sEMG signals when multiple days have elapsed between each recording, by presenting SCADANN, a new, deep learning-based, self-calibrating algorithm. SCADANN is ranked against three state-of-the-art domain adversarial algorithms and a multiple-vote self-calibrating algorithm on both offline and online datasets. Overall, SCADANN is shown to systematically improve classifiers' performance over no adaptation and ranks first in almost all the cases tested.

Virtual Reality to Study the Gap Between Offline and Real-Time EMG-based Gesture Recognition

Dec 16, 2019

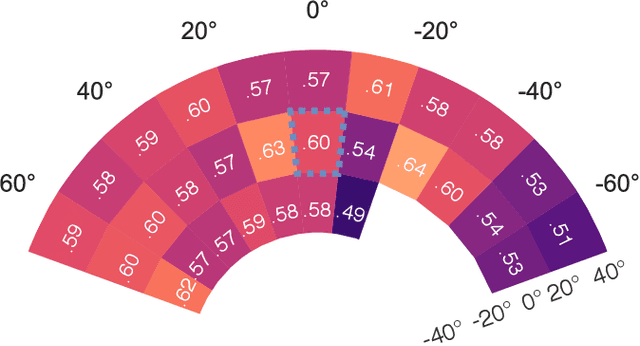

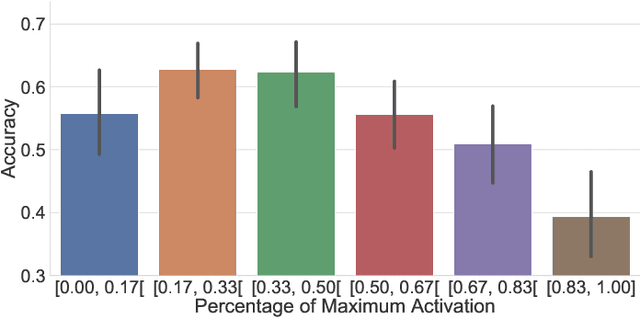

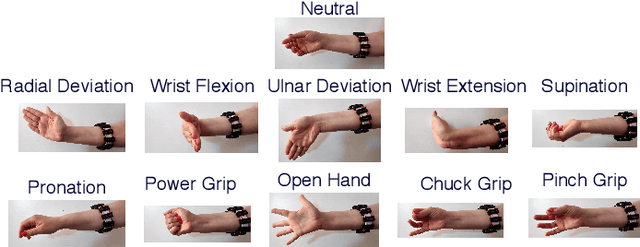

Abstract:Within sEMG-based gesture recognition, a chasm exists in the literature between offline accuracy and real-time usability of a classifier. This gap mainly stems from the four main dynamic factors in sEMG-based gesture recognition: gesture intensity, limb position, electrode shift and transient changes in the signal. These factors are hard to include within an offline dataset as each of them exponentially augments the number of segments to be recorded. On the other hand, online datasets are biased towards the sEMG-based algorithms providing feedback to the participants, limiting the usability of such datasets as benchmarks. This paper proposes a virtual reality (VR) environment and a real-time experimental protocol from which the four main dynamic factors can more easily be studied. During the online experiment, the gesture recognition feedback is provided through the Leap Motion camera, enabling the proposed dataset to be re-used to compare future sEMG-based algorithms. Twenty able-bodied persons took part in this study, completing three to four sessions over a period spanning between 14 and 21 days. Finally, TADANN, a new transfer learning-based algorithm, is proposed for long-term gesture classification and significantly (p<0.05) outperforms fine-tuning a network.

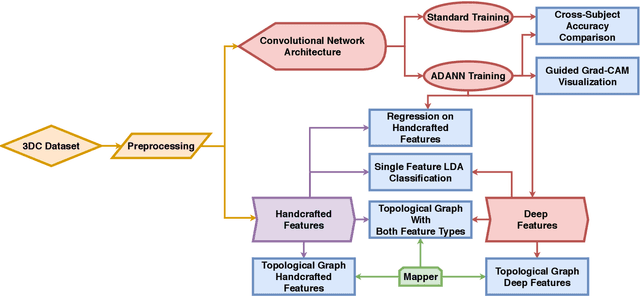

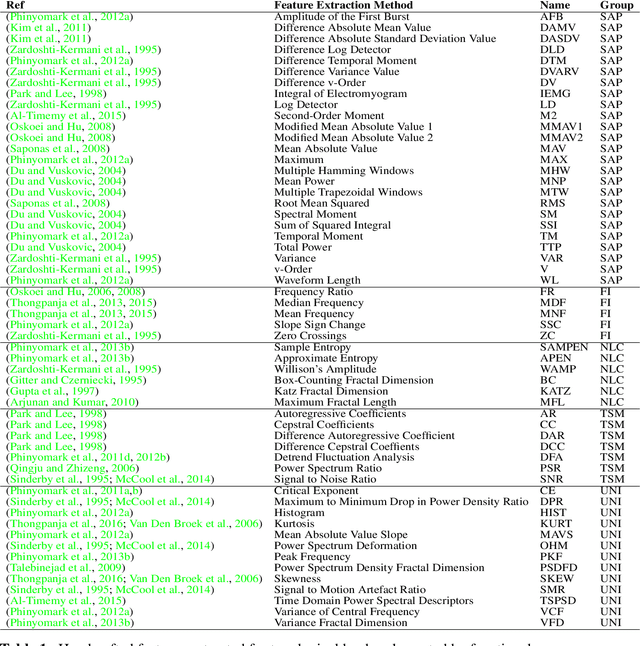

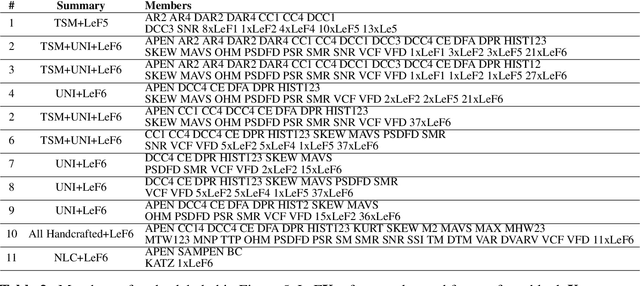

Interpreting Deep Learning Features for Myoelectric Control: A Comparison with Handcrafted Features

Nov 30, 2019

Abstract:The research in myoelectric control systems primarily focuses on extracting discriminative representations from the electromyographic (EMG) signal by designing handcrafted features. Recently, deep learning techniques have been applied to the challenging task of EMG-based gesture recognition. The adoption of these techniques slowly shifts the focus from feature engineering to feature learning. However, the black-box nature of deep learning makes it hard to understand the type of information learned by the network and how it relates to handcrafted features. Additionally, due to the high variability in EMG recordings between participants, deep features tend to generalize poorly across subjects using standard training methods. Consequently, this work introduces a new multi-domain learning algorithm, named ADANN, which significantly enhances (p=0.00004) inter-subject classification accuracy by an average of 19.40% compared to standard training. Using ADANN-generated features, the main contribution of this work is to provide the first topological data analysis of EMG-based gesture recognition for the characterisation of the information encoded within a deep network, using handcrafted features as landmarks. This analysis reveals that handcrafted features and the learned features (in the earlier layers) both try to discriminate between all gestures, but do not encode the same information to do so. Furthermore, convolutional network visualization techniques reveal that learned features tend to ignore the most activated channel during gesture contraction, which is in stark contrast with the prevalence of handcrafted features designed to capture amplitude information. Overall, this work paves the way for hybrid feature sets by providing a clear guideline of complementary information encoded within learned and handcrafted features.
