Evan Campbell

On-Demand Myoelectric Control Using Wake Gestures to Eliminate False Activations During Activities of Daily Living

Feb 15, 2024

Abstract: While myoelectric control has recently become a focus of increased research as a possible flexible hands-free input modality, current control approaches are prone to inadvertent false activations in real-world conditions. In this work, a novel myoelectric control paradigm -- on-demand myoelectric control -- is proposed, designed, and evaluated to reduce the number of unrelated muscle movements that are incorrectly interpreted as input gestures. By leveraging the concept of wake gestures, users were able to switch between a dedicated control mode and a sleep mode, effectively eliminating inadvertent activations during activities of daily living (ADLs). The feasibility of wake gestures was demonstrated in this work through two online ubiquitous EMG control tasks with varying difficulty levels: dismissing an alarm and controlling a robot. The proposed control scheme was able to appropriately ignore almost all non-targeted muscular inputs during ADLs (>99.9%) while maintaining sufficient sensitivity for reliable mode switching during intentional wake gesture elicitation. These results highlight the potential of wake gestures as a critical step towards enabling ubiquitous myoelectric control-based on-demand input for a wide range of applications.
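The mode switching described in this abstract can be pictured as a small state machine that sits between a gesture classifier and the application. The sketch below is illustrative only and is not the authors' implementation: the class name `OnDemandController`, the label `"wake"`, and the choice to let the same wake gesture toggle back to sleep mode are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class OnDemandController:
    """Hypothetical gate that forwards gestures only while in control mode."""

    wake_gesture: str = "wake"  # assumed label emitted by an upstream classifier
    awake: bool = False         # False = sleep mode, True = control mode

    def step(self, label: str) -> Optional[str]:
        """Consume one classifier decision; return a command or None."""
        if label == self.wake_gesture:
            # Assumption: the wake gesture toggles between the two modes.
            self.awake = not self.awake
            return None
        if not self.awake:
            # Sleep mode: every other gesture is ignored, so muscle activity
            # during daily living cannot trigger a command.
            return None
        # Control mode: pass the recognized gesture through as a command.
        return label


if __name__ == "__main__":
    controller = OnDemandController()
    stream = ["fist", "wake", "fist", "open", "wake", "fist"]
    print([controller.step(label) for label in stream])
```

In this sketch, gestures seen before the first wake gesture and after the second one are dropped, while the two gestures in between are forwarded unchanged.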

Interpreting Deep Learning Features for Myoelectric Control: A Comparison with Handcrafted Features

Nov 30, 2019

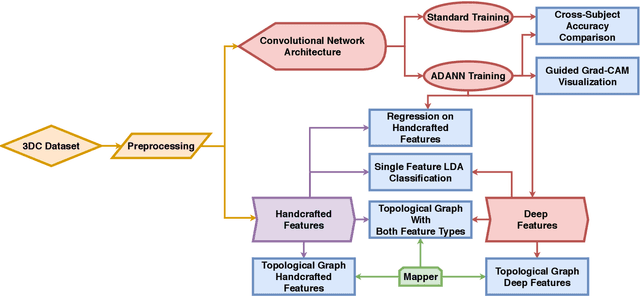

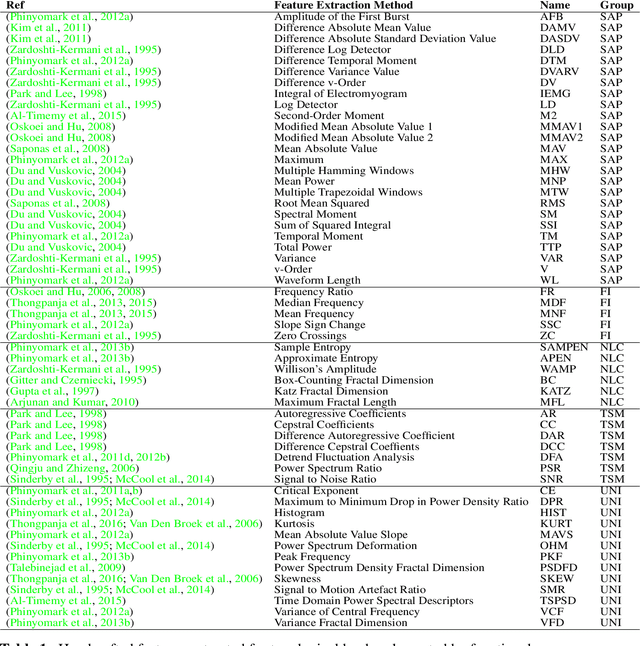

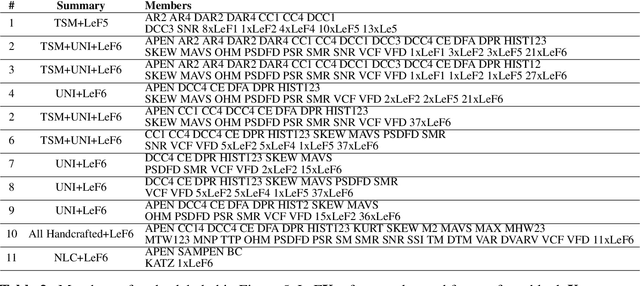

Abstract: Research in myoelectric control systems primarily focuses on extracting discriminative representations from the electromyographic (EMG) signal by designing handcrafted features. Recently, deep learning techniques have been applied to the challenging task of EMG-based gesture recognition. The adoption of these techniques is slowly shifting the focus from feature engineering to feature learning. However, the black-box nature of deep learning makes it hard to understand the type of information learned by the network and how it relates to handcrafted features. Additionally, due to the high variability in EMG recordings between participants, deep features tend to generalize poorly across subjects when standard training methods are used. Consequently, this work introduces a new multi-domain learning algorithm, named ADANN, which significantly enhances (p=0.00004) inter-subject classification accuracy by an average of 19.40% compared to standard training. Using ADANN-generated features, the main contribution of this work is to provide the first topological data analysis of EMG-based gesture recognition for the characterisation of the information encoded within a deep network, using handcrafted features as landmarks. This analysis reveals that handcrafted features and the learned features (in the earlier layers) both try to discriminate between all gestures, but do not encode the same information to do so. Furthermore, convolutional network visualization techniques reveal that learned features tend to ignore the most activated channel during gesture contraction, which is in stark contrast with the prevalence of handcrafted features designed to capture amplitude information. Overall, this work paves the way for hybrid feature sets by providing a clear guideline on the complementary information encoded within learned and handcrafted features.
