Ulindu De Silva

Test-Time Optimization for Domain Adaptive Open Vocabulary Segmentation

Jan 08, 2025Abstract:We present Seg-TTO, a novel framework for zero-shot, open-vocabulary semantic segmentation (OVSS), designed to excel in specialized domain tasks. While current open vocabulary approaches show impressive performance on standard segmentation benchmarks under zero-shot settings, they fall short of supervised counterparts on highly domain-specific datasets. We focus on segmentation-specific test-time optimization to address this gap. Segmentation requires an understanding of multiple concepts within a single image while retaining the locality and spatial structure of representations. We propose a novel self-supervised objective adhering to these requirements and use it to align the model parameters with input images at test time. In the textual modality, we learn multiple embeddings for each category to capture diverse concepts within an image, while in the visual modality, we calculate pixel-level losses followed by embedding aggregation operations specific to preserving spatial structure. Our resulting framework termed Seg-TTO is a plug-in-play module. We integrate Seg-TTO with three state-of-the-art OVSS approaches and evaluate across 22 challenging OVSS tasks covering a range of specialized domains. Our Seg-TTO demonstrates clear performance improvements across these establishing new state-of-the-art. Code: https://github.com/UlinduP/SegTTO.

Video Summarisation with Incident and Context Information using Generative AI

Jan 08, 2025

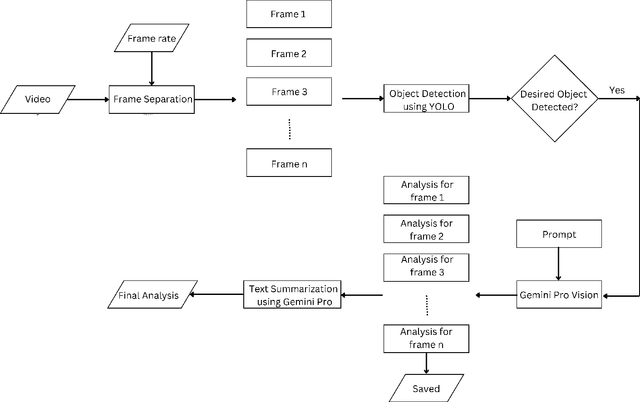

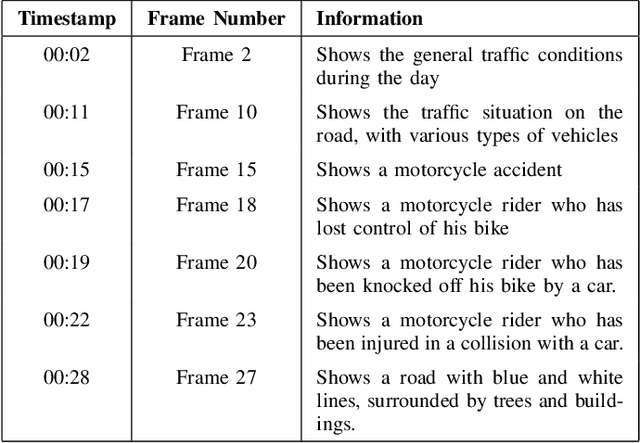

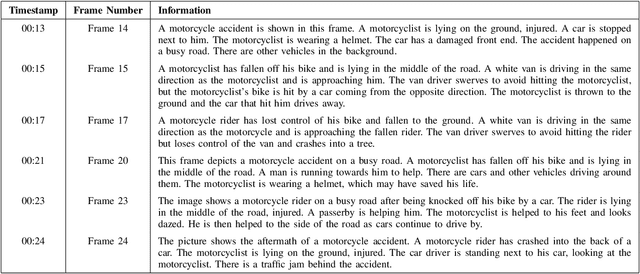

Abstract:The proliferation of video content production has led to vast amounts of data, posing substantial challenges in terms of analysis efficiency and resource utilization. Addressing this issue calls for the development of robust video analysis tools. This paper proposes a novel approach leveraging Generative Artificial Intelligence (GenAI) to facilitate streamlined video analysis. Our tool aims to deliver tailored textual summaries of user-defined queries, offering a focused insight amidst extensive video datasets. Unlike conventional frameworks that offer generic summaries or limited action recognition, our method harnesses the power of GenAI to distil relevant information, enhancing analysis precision and efficiency. Employing YOLO-V8 for object detection and Gemini for comprehensive video and text analysis, our solution achieves heightened contextual accuracy. By combining YOLO with Gemini, our approach furnishes textual summaries extracted from extensive CCTV footage, enabling users to swiftly navigate and verify pertinent events without the need for exhaustive manual review. The quantitative evaluation revealed a similarity of 72.8%, while the qualitative assessment rated an accuracy of 85%, demonstrating the capability of the proposed method.

Large Language Models for Video Surveillance Applications

Jan 06, 2025

Abstract:The rapid increase in video content production has resulted in enormous data volumes, creating significant challenges for efficient analysis and resource management. To address this, robust video analysis tools are essential. This paper presents an innovative proof of concept using Generative Artificial Intelligence (GenAI) in the form of Vision Language Models to enhance the downstream video analysis process. Our tool generates customized textual summaries based on user-defined queries, providing focused insights within extensive video datasets. Unlike traditional methods that offer generic summaries or limited action recognition, our approach utilizes Vision Language Models to extract relevant information, improving analysis precision and efficiency. The proposed method produces textual summaries from extensive CCTV footage, which can then be stored for an indefinite time in a very small storage space compared to videos, allowing users to quickly navigate and verify significant events without exhaustive manual review. Qualitative evaluations result in 80% and 70% accuracy in temporal and spatial quality and consistency of the pipeline respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge