Thomas Besnier

FreeTalk: Emotional Topology-Free 3D Talking Heads

Mar 16, 2026

Abstract: Speech-driven 3D facial animation has advanced rapidly, yet most approaches remain tied to registered template meshes, preventing effective deployment on raw 3D scans with arbitrary topology. At the same time, modeling controllable emotional dynamics beyond lip articulation remains challenging, and is often tied to template-based parameterizations. We address these challenges by proposing FreeTalk, a two-stage framework for emotion-conditioned 3D talking-head animation that generalizes to unregistered face meshes with arbitrary vertex count and connectivity. First, Audio-To-Sparse (ATS) predicts a temporally coherent sequence of 3D landmark displacements from speech audio, conditioned on an emotion category and intensity. This sparse representation captures both articulatory and affective motion while remaining independent of mesh topology. Second, Sparse-To-Mesh (STM) transfers the predicted landmark motion to a target mesh by combining intrinsic surface features with landmark-to-vertex conditioning, producing dense per-vertex deformations without template fitting or correspondence supervision at test time. Extensive experiments show that FreeTalk matches specialized baselines when trained in-domain, while providing substantially improved robustness to unseen identities and mesh topologies. Code and pre-trained models will be made publicly available.

Partial Non-rigid Deformations and interpolations of Human Body Surfaces

Dec 03, 2024

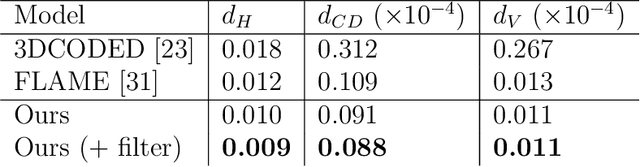

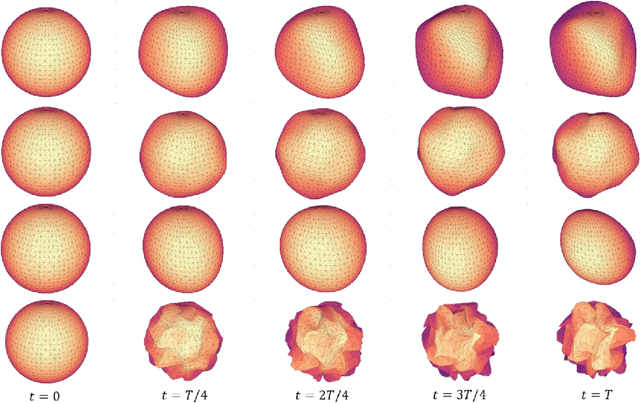

Abstract: Non-rigid shape deformations pose significant challenges, and most existing methods struggle to handle partial deformations effectively. We present Partial Non-rigid Deformations and interpolations of the human body Surfaces (PaNDAS), a new method to learn local and global deformations of 3D surface meshes by building on recent deep models. Unlike previous approaches, our method enables restricting deformations to specific parts of the shape in a versatile way and allows for mixing and combining various poses from the database, all while not requiring any optimization at inference time. We demonstrate that the proposed framework can be used to generate new shapes, interpolate between parts of shapes, and perform other shape manipulation tasks with state-of-the-art accuracy and greater locality across various types of human surface data. Code and data will be made available soon.

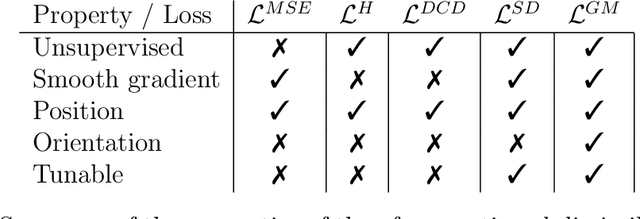

Beyond Fixed Topologies: Unregistered Training and Comprehensive Evaluation Metrics for 3D Talking Heads

Oct 14, 2024

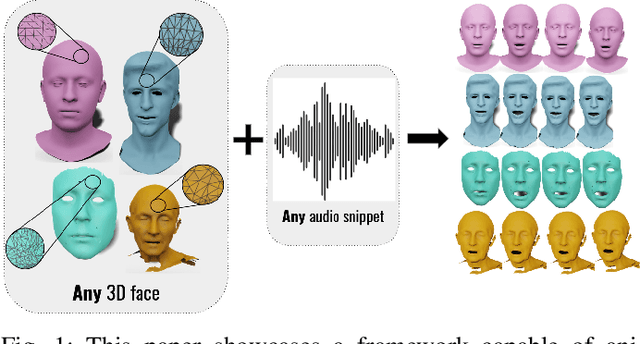

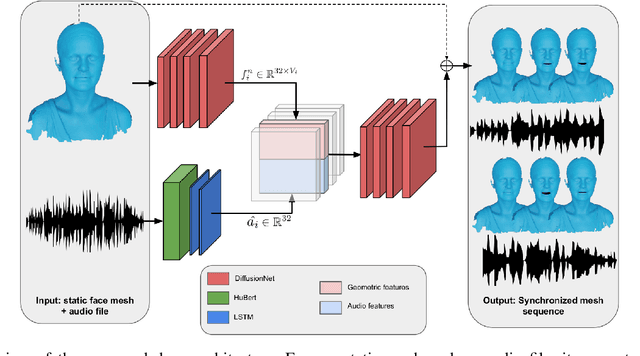

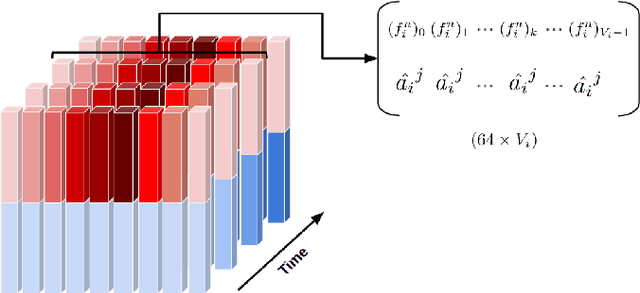

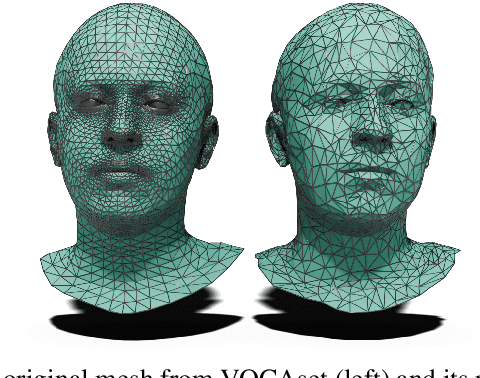

Abstract: Generating speech-driven 3D talking heads presents numerous challenges; among them is dealing with varying mesh topologies. Existing methods require a registered setting, where all meshes share a common topology: a point-wise correspondence across all meshes the model can animate. While this simplifies the problem, it limits applicability, as unseen meshes must adhere to the training topology. This work presents a framework capable of animating 3D faces in arbitrary topologies, including real scanned data. Our approach relies on a model leveraging heat diffusion over meshes to overcome the fixed topology constraint. We explore two training settings: a supervised one, in which training sequences share a fixed topology within a sequence but any mesh can be animated at test time, and an unsupervised one, which allows effective training with varying mesh structures. Additionally, we highlight the limitations of current evaluation metrics and propose new metrics for better lip-syncing evaluation between speech and facial movements. Our extensive evaluation shows our approach performs favorably compared to fixed topology techniques, setting a new benchmark by offering a versatile and high-fidelity solution for 3D talking head generation.
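As a rough illustration of why heat diffusion over a mesh does not depend on a fixed vertex count or ordering, the sketch below diffuses a per-vertex feature with one implicit Euler step. It is not the model from the abstract: it assumes a uniform graph Laplacian built from the triangle edges rather than a geometry-aware (e.g. cotangent) operator, and the names `graph_laplacian` and `diffuse` are hypothetical.

```python
import numpy as np

def graph_laplacian(n_verts, faces):
    """Uniform graph Laplacian (degree minus adjacency) from triangle edges."""
    L = np.zeros((n_verts, n_verts))
    for i, j, k in faces:
        for a, b in ((i, j), (j, k), (k, i)):
            L[a, b] = L[b, a] = -1.0
    # Diagonal holds the vertex degree so that every row sums to zero.
    np.fill_diagonal(L, -L.sum(axis=1))
    return L

def diffuse(features, L, t):
    """One implicit Euler step of the heat equation: solve (I + t L) u = u0."""
    n = L.shape[0]
    return np.linalg.solve(np.eye(n) + t * L, features)

# Usage on a tetrahedron: a spike on vertex 0 spreads to its neighbours.
tet_faces = [(0, 1, 2), (0, 1, 3), (0, 2, 3), (1, 2, 3)]
L_tet = graph_laplacian(4, tet_faces)
spike = np.array([1.0, 0.0, 0.0, 0.0])
smoothed = diffuse(spike, L_tet, t=0.5)
```

Because the operator is assembled from whatever connectivity the mesh has, the same two functions apply to any triangle mesh; only the size of `L` changes.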

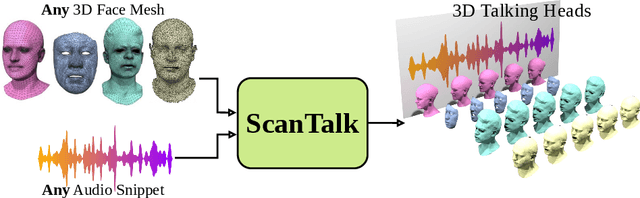

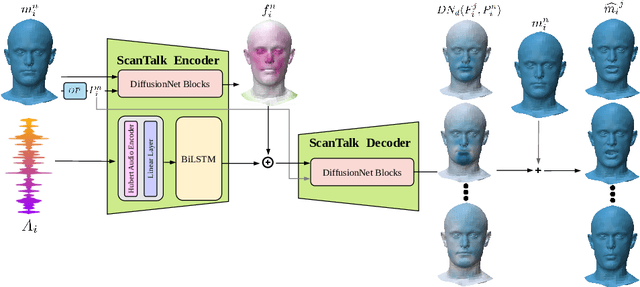

ScanTalk: 3D Talking Heads from Unregistered Scans

Mar 19, 2024

Abstract: Speech-driven 3D talking head generation has emerged as a significant area of interest among researchers, presenting numerous challenges. Existing methods are constrained to animating faces with fixed topologies, wherein point-wise correspondence is established and the number and order of points remain consistent across all identities the model can animate. In this work, we present ScanTalk, a novel framework capable of animating 3D faces in arbitrary topologies, including scanned data. Our approach relies on the DiffusionNet architecture to overcome the fixed topology constraint, offering promising avenues for more flexible and realistic 3D animations. By leveraging the power of DiffusionNet, ScanTalk not only adapts to diverse facial structures but also maintains fidelity when dealing with scanned data, thereby enhancing the authenticity and versatility of generated 3D talking heads. Through comprehensive comparisons with state-of-the-art methods, we validate the efficacy of our approach, demonstrating its capacity to generate realistic talking heads comparable to existing techniques. While our primary objective is to develop a generic method free from topological constraints, all state-of-the-art methodologies are bound by such limitations. Code for reproducing our results and the pre-trained model will be made available.

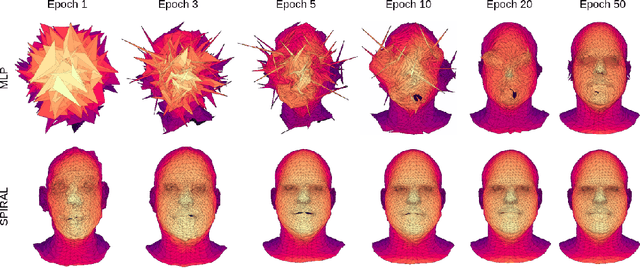

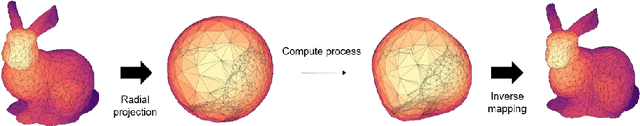

Toward Mesh-Invariant 3D Generative Deep Learning with Geometric Measures

Jun 27, 2023

Abstract: 3D generative modeling is accelerating as the technology for capturing geometric data develops. However, the acquired data is often inconsistent, resulting in unregistered meshes or point clouds. Many generative learning algorithms require correspondence between each point when comparing the predicted shape and the target shape. We propose an architecture able to cope with different parameterizations, even during the training phase. In particular, our loss function is built upon a kernel-based metric over a representation of meshes using geometric measures such as currents and varifolds. The latter allows us to implement an efficient dissimilarity measure with many desirable properties, such as robustness to resampling of the mesh or point cloud. We demonstrate the efficiency and resilience of our model with a generative learning task of human faces.
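To make the idea of a kernel-based dissimilarity between unregistered meshes concrete, here is a minimal NumPy sketch of a discrete varifold-style distance. It is an illustration under assumptions, not the paper's loss: each triangle is reduced to its center, unit normal, and area, the position kernel is a Gaussian with bandwidth `sigma`, and the orientation kernel is the squared dot product of normals. All function names are hypothetical.

```python
import numpy as np

def face_measures(verts, faces):
    """Reduce each triangle to (center, unit normal, area)."""
    a, b, c = verts[faces[:, 0]], verts[faces[:, 1]], verts[faces[:, 2]]
    centers = (a + b + c) / 3.0
    cross = np.cross(b - a, c - a)
    norms = np.linalg.norm(cross, axis=1)
    normals = cross / np.maximum(norms, 1e-12)[:, None]
    return centers, normals, 0.5 * norms

def varifold_inner(m1, m2, sigma):
    """Kernel inner product between two sets of weighted, oriented triangles."""
    c1, n1, a1 = m1
    c2, n2, a2 = m2
    d2 = ((c1[:, None, :] - c2[None, :, :]) ** 2).sum(-1)
    k_pos = np.exp(-d2 / sigma**2)   # Gaussian kernel on triangle centers
    k_dir = (n1 @ n2.T) ** 2         # insensitive to the sign of the normals
    return float(a1 @ (k_pos * k_dir) @ a2)

def varifold_distance_sq(v1, f1, v2, f2, sigma=1.0):
    """Squared kernel distance; needs no vertex correspondence."""
    m1, m2 = face_measures(v1, f1), face_measures(v2, f2)
    return (varifold_inner(m1, m1, sigma)
            - 2.0 * varifold_inner(m1, m2, sigma)
            + varifold_inner(m2, m2, sigma))

# Usage: the same unit square triangulated along either diagonal,
# compared against a copy shifted by 2 units along z.
square = np.array([[0, 0, 0], [1, 0, 0], [1, 1, 0], [0, 1, 0]], dtype=float)
tri_a = np.array([[0, 1, 2], [0, 2, 3]])
tri_b = np.array([[0, 1, 3], [1, 2, 3]])
d_retri = varifold_distance_sq(square, tri_a, square, tri_b)
d_shift = varifold_distance_sq(square, tri_a, square + [0, 0, 2], tri_a)
```

In this toy case the retriangulated square stays much closer to the original than the shifted copy, which is the kind of robustness to resampling the abstract refers to. The dense pairwise kernel costs memory quadratic in the number of triangles, so a practical implementation would need a more scalable evaluation.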

A function space perspective on stochastic shape evolution

Feb 10, 2023

Abstract: Modelling randomness in shape data, for example, the evolution of shapes of organisms in biology, requires stochastic models of shapes. This paper presents a new stochastic shape model based on a description of shapes as functions in a Sobolev space. Using an explicit orthonormal basis as a reference frame for the noise, the model is independent of the parameterisation of the mesh. We define the stochastic model, explore its properties, and illustrate examples of stochastic shape evolutions using the resulting numerical framework.
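The parameterisation independence mentioned above can be illustrated with a toy example. The sketch below is a simplification and not the paper's model: it assumes a closed planar curve indexed by an angle, purely additive noise, a Fourier basis as the orthonormal reference frame, and a hypothetical polynomial decay of the mode weights. The random coefficients live on the basis functions, not on the sample points, so any sampling of the curve sees the same noise field.

```python
import numpy as np

def noise_field(theta, xi, decay=2.0):
    """Evaluate a random 2D displacement field at angles theta.

    xi has shape (K, 2, 2): frequency, cos/sin component, x/y coordinate.
    """
    k = np.arange(1, xi.shape[0] + 1)
    lam = k ** (-decay)                                   # smoother for higher decay
    cos_b = np.cos(np.outer(theta, k)) / np.sqrt(np.pi)   # orthonormal on [0, 2*pi)
    sin_b = np.sin(np.outer(theta, k)) / np.sqrt(np.pi)
    return cos_b @ (lam[:, None] * xi[:, 0, :]) + sin_b @ (lam[:, None] * xi[:, 1, :])

def evolve(theta, n_steps=50, dt=0.01, n_modes=8, seed=0):
    """Euler-Maruyama evolution of a unit circle sampled at angles theta."""
    rng = np.random.default_rng(seed)
    shape = np.stack([np.cos(theta), np.sin(theta)], axis=1)
    for _ in range(n_steps):
        xi = rng.standard_normal((n_modes, 2, 2))         # noise on basis coefficients
        shape = shape + np.sqrt(dt) * noise_field(theta, xi)
    return shape

# Usage: the same random seed evaluated on a coarse and a fine sampling.
coarse = evolve(np.linspace(0.0, 2.0 * np.pi, 16, endpoint=False))
fine = evolve(np.linspace(0.0, 2.0 * np.pi, 64, endpoint=False))
```

Every fourth point of `fine` lands on the corresponding point of `coarse`, since both samplings evaluate the same underlying function.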
