Tatsuya Aoki

Decentralized Collective World Model for Emergent Communication and Coordination

Apr 04, 2025

Abstract:We propose a fully decentralized multi-agent world model that enables both symbol emergence for communication and coordinated behavior through temporal extension of collective predictive coding. Unlike previous research that focuses on either communication or coordination separately, our approach achieves both simultaneously. Our method integrates world models with communication channels, enabling agents to predict environmental dynamics, estimate states from partial observations, and share critical information through bidirectional message exchange with contrastive learning for message alignment. Using a two-agent trajectory drawing task, we demonstrate that our communication-based approach outperforms non-communicative models when agents have divergent perceptual capabilities, achieving the second-best coordination after centralized models. Importantly, our distributed approach with constraints preventing direct access to other agents' internal states facilitates the emergence of more meaningful symbol systems that accurately reflect environmental states. These findings demonstrate the effectiveness of decentralized communication for supporting coordination while developing shared representations of the environment.

LiP-LLM: Integrating Linear Programming and dependency graph with Large Language Models for multi-robot task planning

Oct 28, 2024

Abstract:This study proposes LiP-LLM: integrating linear programming and dependency graph with large language models (LLMs) for multi-robot task planning. In order for multiple robots to perform tasks more efficiently, it is necessary to manage the precedence dependencies between tasks. Although multi-robot decentralized and centralized task planners using LLMs have been proposed, none of these studies focus on precedence dependencies from the perspective of task efficiency or leverage traditional optimization methods. It addresses key challenges in managing dependencies between skills and optimizing task allocation. LiP-LLM consists of three steps: skill list generation and dependency graph generation by LLMs, and task allocation using linear programming. The LLMs are utilized to generate a comprehensive list of skills and to construct a dependency graph that maps the relationships and sequential constraints among these skills. To ensure the feasibility and efficiency of skill execution, the skill list is generated by calculated likelihood, and linear programming is used to optimally allocate tasks to each robot. Experimental evaluations in simulated environments demonstrate that this method outperforms existing task planners, achieving higher success rates and efficiency in executing complex, multi-robot tasks. The results indicate the potential of combining LLMs with optimization techniques to enhance the capabilities of multi-robot systems in executing coordinated tasks accurately and efficiently. In an environment with two robots, a maximum success rate difference of 0.82 is observed in the language instruction group with a change in the object name.

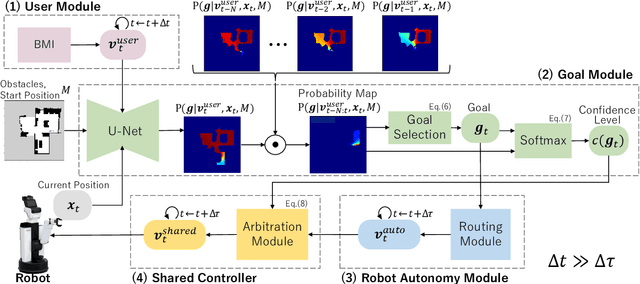

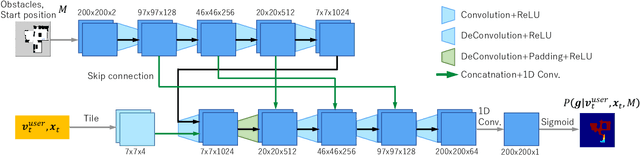

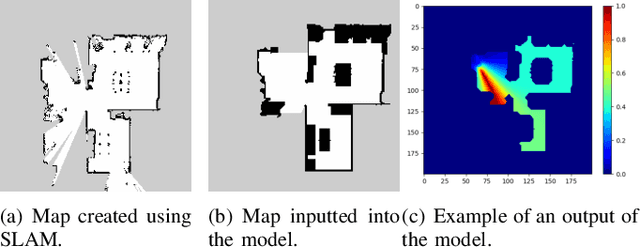

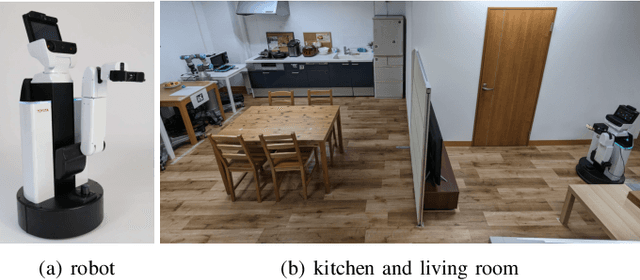

Goal Estimation-based Adaptive Shared Control for Brain-Machine Interfaces Remote Robot Navigation

Jul 25, 2024

Abstract:In this study, we propose a shared control method for teleoperated mobile robots using brain-machine interfaces (BMI). The control commands generated through BMI for robot operation face issues of low input frequency, discreteness, and uncertainty due to noise. To address these challenges, our method estimates the user's intended goal from their commands and uses this goal to generate auxiliary commands through the autonomous system that are both at a higher input frequency and more continuous. Furthermore, by defining the confidence level of the estimation, we adaptively calculated the weights for combining user and autonomous commands, thus achieving shared control.

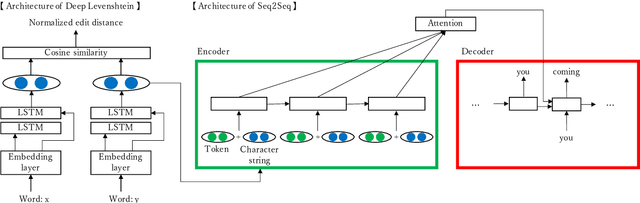

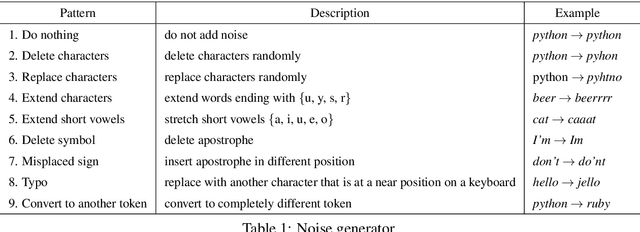

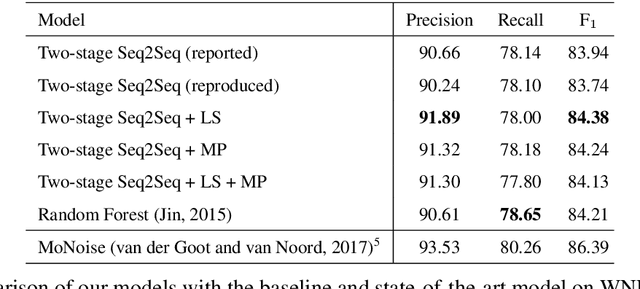

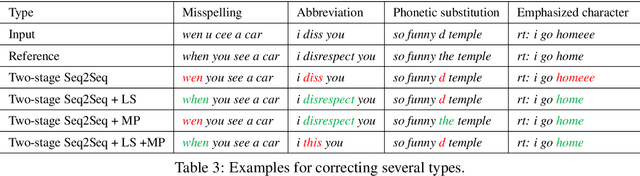

Neural text normalization leveraging similarities of strings and sounds

Nov 04, 2020

Abstract:We propose neural models that can normalize text by considering the similarities of word strings and sounds. We experimentally compared a model that considers the similarities of both word strings and sounds, a model that considers only the similarity of word strings or of sounds, and a model without the similarities as a baseline. Results showed that leveraging the word string similarity succeeded in dealing with misspellings and abbreviations, and taking into account the sound similarity succeeded in dealing with phonetic substitutions and emphasized characters. So that the proposed models achieved higher F$_1$ scores than the baseline.

lamBERT: Language and Action Learning Using Multimodal BERT

Apr 15, 2020

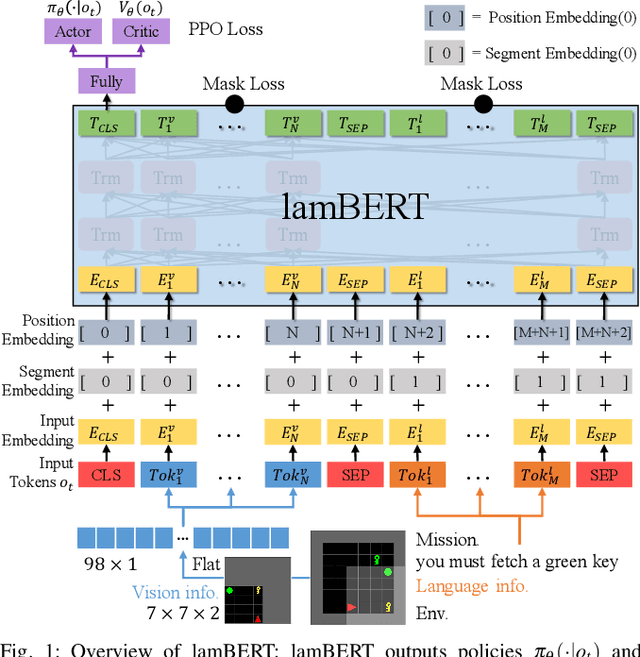

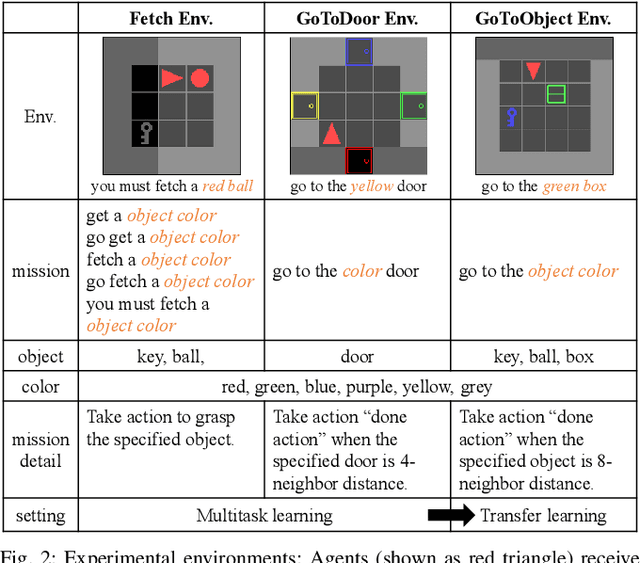

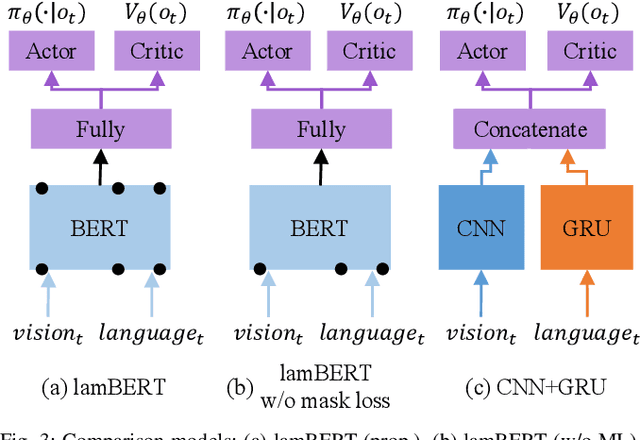

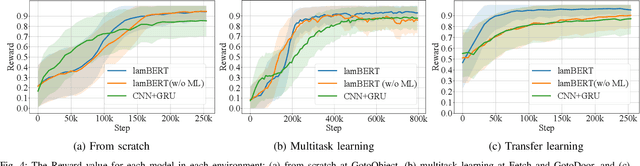

Abstract:Recently, the bidirectional encoder representations from transformers (BERT) model has attracted much attention in the field of natural language processing, owing to its high performance in language understanding-related tasks. The BERT model learns language representation that can be adapted to various tasks via pre-training using a large corpus in an unsupervised manner. This study proposes the language and action learning using multimodal BERT (lamBERT) model that enables the learning of language and actions by 1) extending the BERT model to multimodal representation and 2) integrating it with reinforcement learning. To verify the proposed model, an experiment is conducted in a grid environment that requires language understanding for the agent to act properly. As a result, the lamBERT model obtained higher rewards in multitask settings and transfer settings when compared to other models, such as the convolutional neural network-based model and the lamBERT model without pre-training.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge