Stefano Albrecht

$TAR^2$: Temporal-Agent Reward Redistribution for Optimal Policy Preservation in Multi-Agent Reinforcement Learning

Feb 07, 2025

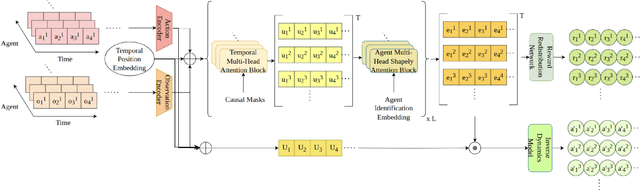

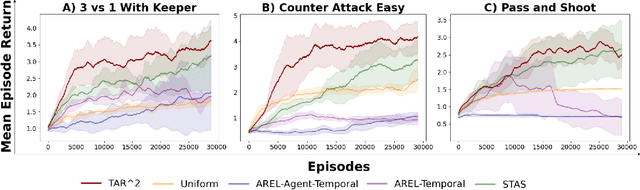

Abstract: In cooperative multi-agent reinforcement learning (MARL), learning effective policies is challenging when global rewards are sparse and delayed. This difficulty arises from the need to assign credit across both agents and time steps, a problem that existing methods often fail to address in episodic, long-horizon tasks. We propose Temporal-Agent Reward Redistribution ($TAR^2$), a novel approach that decomposes sparse global rewards into agent-specific, time-step-specific components, thereby providing more frequent and accurate feedback for policy learning. Theoretically, we show that $TAR^2$ (i) aligns with potential-based reward shaping, preserving the same optimal policies as the original environment, and (ii) maintains policy gradient update directions identical to those under the original sparse reward, ensuring unbiased credit signals. Empirical results on two challenging benchmarks, SMACLite and Google Research Football, demonstrate that $TAR^2$ significantly stabilizes and accelerates convergence, outperforming strong baselines such as AREL and STAS in both learning speed and final performance. These findings establish $TAR^2$ as a principled and practical solution for agent-temporal credit assignment in sparse-reward multi-agent systems.
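The decomposition described in this abstract can be illustrated with a minimal sketch. It only shows the bookkeeping idea of splitting one episodic reward across time steps and agents so that the pieces sum back to the original reward. The abstract does not say how the weights are computed, so the softmax normalization and the names `redistribute_reward`, `temporal_scores`, and `agent_scores` are assumptions made for illustration; in practice the scores would presumably come from a learned model.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def redistribute_reward(episode_return, temporal_scores, agent_scores):
    """Split one episodic reward into per-step, per-agent rewards.

    temporal_scores: one unnormalized score per time step (hypothetical input).
    agent_scores: one list of per-agent scores for each time step (hypothetical input).
    Returns a T x N nested list whose entries sum to episode_return.
    """
    time_weights = softmax(temporal_scores)  # weights over time steps sum to 1
    rewards = []
    for w_t, scores in zip(time_weights, agent_scores):
        agent_weights = softmax(scores)  # weights over agents sum to 1 at each step
        rewards.append([episode_return * w_t * w_a for w_a in agent_weights])
    return rewards


# Toy episode: 3 time steps, 2 agents, a single global reward of 10 at the end.
rewards = redistribute_reward(
    10.0,
    temporal_scores=[0.0, 1.0, 2.0],
    agent_scores=[[0.0, 0.0], [1.0, 0.0], [0.0, 2.0]],
)
total = sum(sum(row) for row in rewards)
```

Because both sets of weights are normalized, `total` equals the original episodic reward up to floating-point error. This conservation property is only one ingredient; the sketch does not reproduce the paper's policy-preservation or gradient-direction guarantees.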

PILOT: Efficient Planning by Imitation Learning and Optimisation for Safe Autonomous Driving

Nov 01, 2020

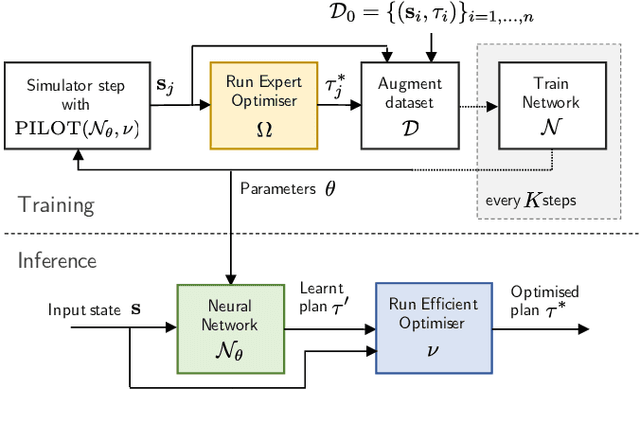

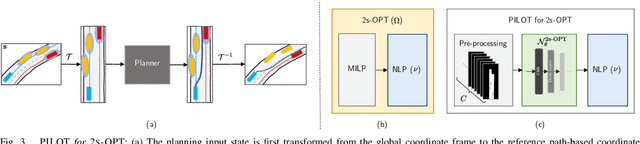

Abstract: Achieving the right balance between planning quality, safety and runtime efficiency is a major challenge for autonomous driving research. Optimisation-based planners are typically capable of producing high-quality, safe plans, but at the cost of efficiency. We present PILOT, a two-stage planning framework comprising an imitation neural network and an efficient optimisation component that guarantees the satisfaction of safety and comfort requirements. The neural network is trained to imitate an expensive-to-run optimisation-based planning system with the same objective as the efficient optimisation component of PILOT. We demonstrate in simulated autonomous driving experiments that the proposed framework achieves a significant reduction in runtime when compared to the optimisation-based expert it imitates, without sacrificing planning quality.
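The two-stage structure described in this abstract (a cheap learned proposal followed by an optimisation step that enforces constraints) can be sketched on a toy one-dimensional problem. Everything here is an assumption for illustration: `imitation_guess` is a hypothetical stub standing in for a trained network, the quadratic tracking-plus-smoothness cost and the box constraint are invented, and projected gradient descent is swapped in as a simple stand-in for whatever optimiser PILOT actually uses.

```python
def cost(plan, reference, smooth_weight=1.0):
    """Toy objective: track the reference while keeping the plan smooth."""
    tracking = sum((p - r) ** 2 for p, r in zip(plan, reference))
    smooth = sum((b - a) ** 2 for a, b in zip(plan, plan[1:]))
    return tracking + smooth_weight * smooth


def clip(plan, lower, upper):
    """Project a plan onto the box constraint lower <= p <= upper."""
    return [min(max(p, lower), upper) for p in plan]


def imitation_guess(reference):
    """Hypothetical stub for stage 1; a trained network would go here."""
    return list(reference)


def refine(plan, reference, lower, upper, smooth_weight=1.0, step=0.05, iters=200):
    """Stage 2: projected gradient descent starting from the proposed plan."""
    plan = clip(plan, lower, upper)
    n = len(plan)
    for _ in range(iters):
        # Gradient of the tracking term.
        grad = [2.0 * (plan[i] - reference[i]) for i in range(n)]
        # Gradient of the smoothness term over neighbouring pairs.
        for i in range(n - 1):
            d = 2.0 * smooth_weight * (plan[i + 1] - plan[i])
            grad[i] -= d
            grad[i + 1] += d
        # Take a step, then project back onto the feasible box.
        plan = clip([p - step * g for p, g in zip(plan, grad)], lower, upper)
    return plan


reference = [0.0, 0.5, 3.0, 0.5, 0.0]  # violates the bound at index 2
initial = clip(imitation_guess(reference), -1.0, 1.0)
refined = refine(imitation_guess(reference), reference, -1.0, 1.0)
```

The projection after every step keeps each iterate inside the bounds, so `refined` is feasible regardless of how good the initial proposal is. This mirrors the abstract's division of labour only loosely: the proposal supplies a starting point, and the optimisation stage is responsible for constraint satisfaction.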
