Stanislaw Wozniak

Neuromorphic Optical Flow and Real-time Implementation with Event Cameras

Apr 14, 2023

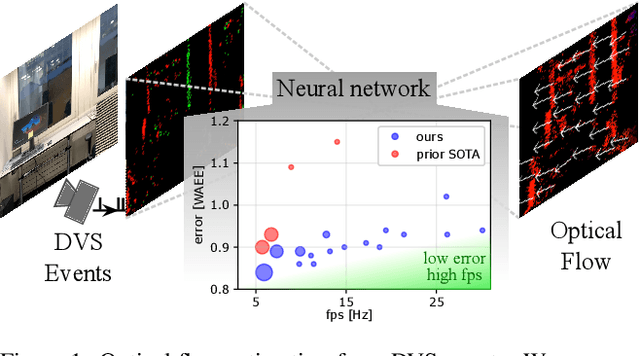

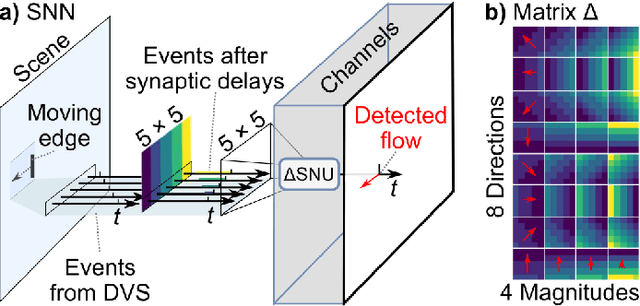

Abstract:Optical flow provides information on relative motion that is an important component in many computer vision pipelines. Neural networks provide high accuracy optical flow, yet their complexity is often prohibitive for application at the edge or in robots, where efficiency and latency play crucial role. To address this challenge, we build on the latest developments in event-based vision and spiking neural networks. We propose a new network architecture, inspired by Timelens, that improves the state-of-the-art self-supervised optical flow accuracy when operated both in spiking and non-spiking mode. To implement a real-time pipeline with a physical event camera, we propose a methodology for principled model simplification based on activity and latency analysis. We demonstrate high speed optical flow prediction with almost two orders of magnitude reduced complexity while maintaining the accuracy, opening the path for real-time deployments.

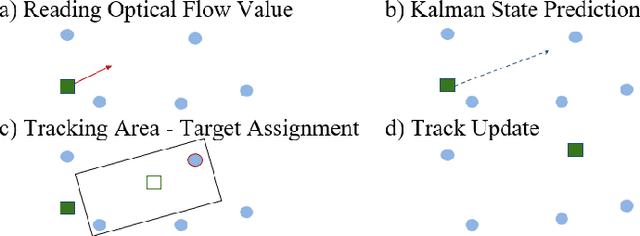

Dynamic Event-based Optical Identification and Communication

Mar 14, 2023

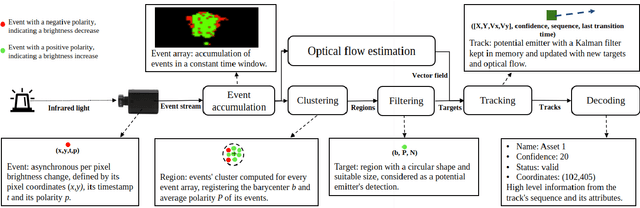

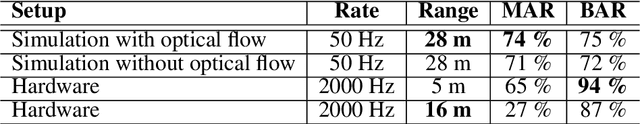

Abstract:Optical identification is often done with spatial or temporal visual pattern recognition and localization. Temporal pattern recognition, depending on the technology, involves a trade-off between communication frequency, range and accurate tracking. We propose a solution with light-emitting beacons that improves this trade-off by exploiting fast event-based cameras and, for tracking, sparse neuromorphic optical flow computed with spiking neurons. In an asset monitoring use case, we demonstrate that the system, embedded in a simulated drone, is robust to relative movements and enables simultaneous communication with, and tracking of, multiple moving beacons. Finally, in a hardware lab prototype, we achieve state-of-the-art optical camera communication frequencies in the kHz magnitude.

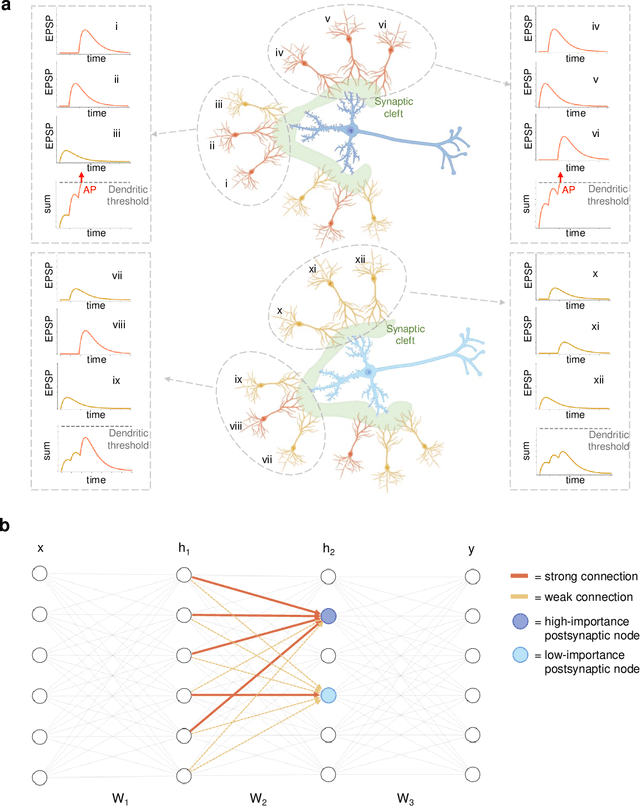

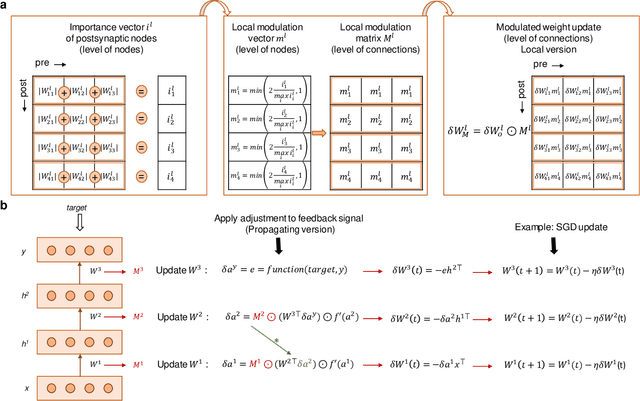

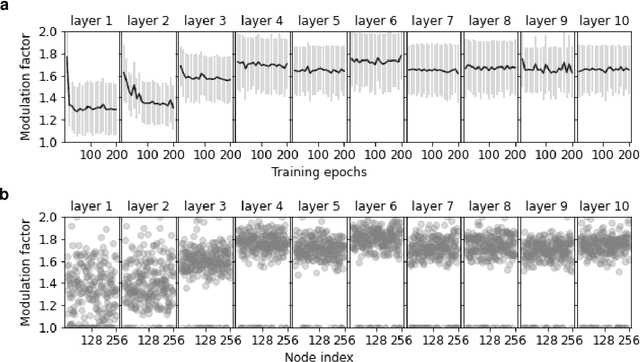

Learning in Deep Neural Networks Using a Biologically Inspired Optimizer

Apr 23, 2021

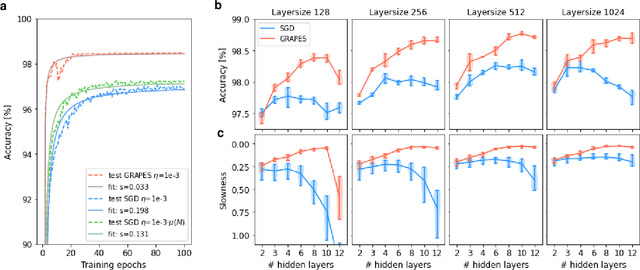

Abstract:Plasticity circuits in the brain are known to be influenced by the distribution of the synaptic weights through the mechanisms of synaptic integration and local regulation of synaptic strength. However, the complex interplay of stimulation-dependent plasticity with local learning signals is disregarded by most of the artificial neural network training algorithms devised so far. Here, we propose a novel biologically inspired optimizer for artificial (ANNs) and spiking neural networks (SNNs) that incorporates key principles of synaptic integration observed in dendrites of cortical neurons: GRAPES (Group Responsibility for Adjusting the Propagation of Error Signals). GRAPES implements a weight-distribution dependent modulation of the error signal at each node of the neural network. We show that this biologically inspired mechanism leads to a systematic improvement of the convergence rate of the network, and substantially improves classification accuracy of ANNs and SNNs with both feedforward and recurrent architectures. Furthermore, we demonstrate that GRAPES supports performance scalability for models of increasing complexity and mitigates catastrophic forgetting by enabling networks to generalize to unseen tasks based on previously acquired knowledge. The local characteristics of GRAPES minimize the required memory resources, making it optimally suited for dedicated hardware implementations. Overall, our work indicates that reconciling neurophysiology insights with machine intelligence is key to boosting the performance of neural networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge